Apple Patents a System That Tracks Your Eye and Maps It in 3D at the Same Time

Apple's new patent describes a system that doesn't make you sit through a separate eye-enrollment process — instead, it quietly builds and refines a 3D model of your eye in the background while it's already tracking your gaze.

What Apple's simultaneous eye-mapping system actually does

Imagine you put on a headset and, instead of staring at a dot that bounces around the screen for 30 seconds so the device can learn your eyes, it just... figures it out while you're using it. That's the core idea here.

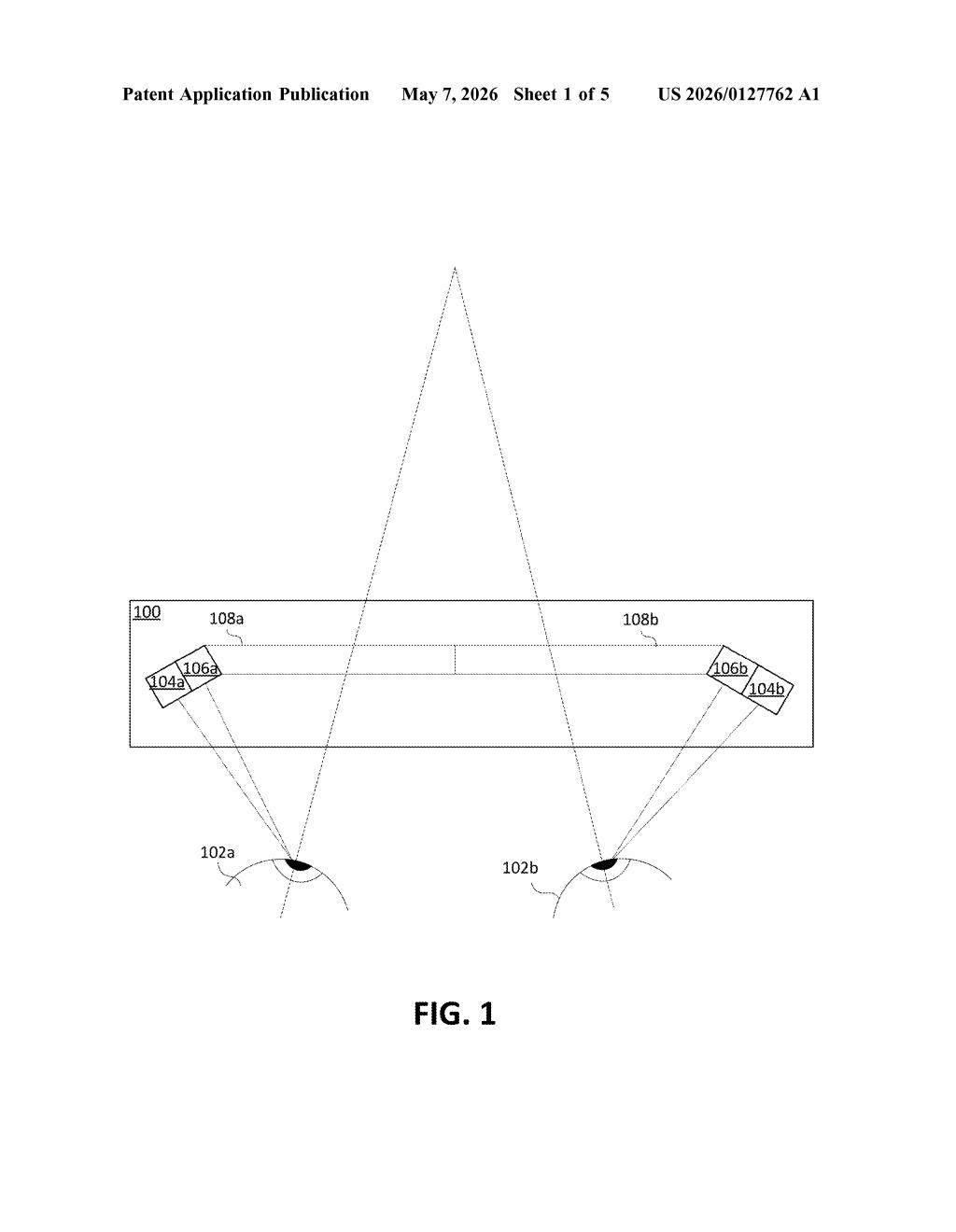

Apple's patent describes a method where tiny infrared lights shine on your eye, and their reflections off its surface, called glints, show up as bright spots. A sensor captures those reflections as a stream of image frames. While that's happening, the system does two things at once: it tracks where your eye is pointing and updates a 3D model of your eye's unique shape.

The clever part is efficiency. Rather than processing every single frame to build that model, the system only picks frames that show parts of your eye it hasn't seen well yet — like filling in blank spots on a map. The result is faster, less intrusive eye enrollment that happens invisibly in the background.

How Apple selects frames to build the live 3D eye model

The patent describes a pipeline with two jobs that run in parallel as consecutive image frames arrive. The first is a conventional eye tracker: it uses glints (tiny bright spots created when light from IR illuminators reflects off the cornea) to compute gaze direction in real time. The second is an eye model updater that continuously refines a 3D geometric model of the user's eye.
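The two-job structure can be sketched in Python. This is a minimal illustration, not Apple's implementation: `run_pipeline`, `track`, and `update_model` are hypothetical names, and the sketch assumes the expensive model update can run on a background thread while gaze is computed on every frame. For simplicity it hands every frame to the updater; the patent's frame selection is not modeled here.

```python
import queue
import threading
from typing import Callable, Iterable, List

_STOP = object()  # sentinel telling the background worker to exit


def run_pipeline(frames: Iterable, track: Callable, update_model: Callable) -> List:
    """Estimate gaze on every frame while a background thread refines the eye model."""
    work: queue.Queue = queue.Queue()

    def model_worker() -> None:
        # Slow path: consume frames in arrival order and refine the eye model.
        while True:
            frame = work.get()
            if frame is _STOP:
                break
            update_model(frame)

    worker = threading.Thread(target=model_worker)
    worker.start()
    gazes = []
    for frame in frames:
        gazes.append(track(frame))  # fast path: real-time gaze for every frame
        work.put(frame)             # hand the same frame to the model updater
    work.put(_STOP)
    worker.join()  # wait for pending model updates before returning
    return gazes


updates = []
print(run_pipeline(range(4), track=lambda f: f * 10, update_model=updates.append))
print(updates)
```

Putting the slow work behind a queue means a long model update delays only the updater, never the per-frame gaze estimate. If the updater falls behind, though, the queue keeps growing, which is one reason to feed it fewer frames.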

The key innovation is how the system decides which frames to use for model updates. It maintains a coverage map — essentially a record of which portions of the cornea and pupil have already been captured and incorporated into the 3D model. When a new frame arrives, the system compares the eye regions visible in that frame against what the model already knows. Only frames that add new coverage — showing previously unmapped parts of the eye — are fed into the reconstruction pipeline.
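The selection rule can be illustrated with a small coverage-map sketch. The description here doesn't specify how coverage is represented, so this assumes a hypothetical grid of cells over the eye surface and a hypothetical `min_new_cells` threshold; `CoverageMap` and `select_frames` are illustrative names, not Apple's.

```python
from typing import Iterable, List, Set, Tuple

# Hypothetical discretization: the cornea/pupil surface split into grid cells.
Cell = Tuple[int, int]


class CoverageMap:
    """Tracks which eye-surface cells have already been fed into the 3D model."""

    def __init__(self, min_new_cells: int = 1) -> None:
        self.covered: Set[Cell] = set()
        self.min_new_cells = min_new_cells  # how much new coverage a frame must add

    def offer(self, visible: Set[Cell]) -> bool:
        """Accept a frame (and mark its cells covered) only if it adds enough new cells."""
        fresh = visible - self.covered
        if len(fresh) < self.min_new_cells:
            return False  # redundant frame: skip the expensive reconstruction
        self.covered |= fresh
        return True


def select_frames(frames: Iterable[Set[Cell]], coverage: CoverageMap) -> List[int]:
    """Return the indices of frames worth passing to the model updater."""
    return [i for i, visible in enumerate(frames) if coverage.offer(visible)]


frames = [{(0, 0), (0, 1)}, {(0, 1)}, {(0, 1), (1, 1)}, {(0, 0), (1, 1)}]
print(select_frames(frames, CoverageMap()))  # [0, 2]
```

Frames 1 and 3 show only cells the model already has, so they are skipped. Raising `min_new_cells` trades model completeness for less computation: with `min_new_cells=2`, only frame 0 would be selected from the same stream.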

This selective approach matters because building a 3D eye model is computationally expensive. By skipping redundant frames, the system keeps pace with real-time tracking without overwhelming the processor.

The patent also references a cornea/pupil reconstructor component, suggesting the 3D model captures both the curved corneal surface and the pupil geometry. Both vary from person to person, so a generic eye model introduces gaze error for any given user; a per-user model that keeps getting refined should make gaze estimates more accurate over time.
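The description doesn't say how the reconstructor represents the cornea. A common simplification in glint-based eye tracking models the corneal surface as a sphere; under that assumption, one building block could be a least-squares sphere fit to 3D points on the cornea. The sketch below uses NumPy and a hypothetical `fit_sphere` helper, and it assumes 3D surface points have already been estimated, which is the hard part it leaves out.

```python
import numpy as np


def fit_sphere(points: np.ndarray) -> tuple:
    """Least-squares sphere fit to an (N, 3) array of surface points.

    Rewrites |p - c|^2 = r^2 as the linear equation 2 c . p + (r^2 - |c|^2) = |p|^2
    and solves for the unknowns (c_x, c_y, c_z, r^2 - |c|^2).
    """
    A = np.column_stack([2 * points, np.ones(len(points))])
    b = np.sum(points**2, axis=1)
    sol, *_ = np.linalg.lstsq(A, b, rcond=None)
    center = sol[:3]
    radius = float(np.sqrt(sol[3] + center @ center))
    return center, radius


# Synthetic check: points on a small cap of a sphere, like the visible cornea.
rng = np.random.default_rng(0)
true_center, true_radius = np.array([0.0, 0.0, -7.8]), 7.8  # millimetres, illustrative
polar = rng.uniform(0.0, 0.6, size=50)         # cap up to about 34 degrees off axis
azimuth = rng.uniform(0.0, 2 * np.pi, size=50)
cap = true_center + true_radius * np.column_stack(
    [np.sin(polar) * np.cos(azimuth), np.sin(polar) * np.sin(azimuth), np.cos(polar)]
)
center, radius = fit_sphere(cap)
print(np.round(center, 3), round(radius, 3))
```

The linear rewrite avoids iterative optimization, and it still works when the points cover only a small cap, which matches the situation where each frame shows just part of the eye.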

What this means for Vision Pro's eye-tracking enrollment

For anyone who has set up Face ID or gone through a VR headset's eye-calibration screen, the friction is real. Apple's approach would eliminate the dedicated enrollment step entirely: the device gets better at modeling your eyes the longer you use it, without asking anything of you. That kind of passive personalization would be a meaningful UX improvement for a wearable you put on and take off repeatedly.

On a technical level, if Apple ships this approach, better per-user eye models could translate directly into more accurate gaze-based UI on Apple Vision Pro and future headsets. Eye tracking is the primary targeting input on Vision Pro: you look at an element and pinch to act on it. If the model of your eye is more precise, so is every tap, scroll, and selection you aim with your gaze.
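The link between model precision and on-screen accuracy is simple geometry: an angular gaze error of θ at viewing distance d moves the estimated gaze point by about d·tan θ. The numbers below are illustrative, not Vision Pro specifications, and `gaze_offset_m` is a hypothetical helper.

```python
import math


def gaze_offset_m(distance_m: float, error_deg: float) -> float:
    """Lateral miss distance (metres) for an angular gaze error at a viewing distance."""
    return distance_m * math.tan(math.radians(error_deg))


for error in (0.5, 1.0, 2.0):
    # How far the estimated gaze point lands from a target 1 m away.
    print(f"{error:.1f} deg -> {gaze_offset_m(1.0, error) * 100:.2f} cm")
```

At 1 m, a 1° error already shifts the gaze point by roughly 1.75 cm, and the miss grows linearly with distance, so even fractions of a degree matter for small targets.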

This is genuinely useful work. The coverage-map approach to selective frame sampling is an elegant solution to a real problem: eye-model calibration is annoying, and doing it invisibly in the background is a clear win for wearable UX. It's not a flashy AI story, but it's exactly the kind of quiet infrastructure improvement that makes or breaks a device people wear on their face.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.