Meta Patents an AI Image Editor That Picks Its Own Edit Tasks From Plain-Language Instructions

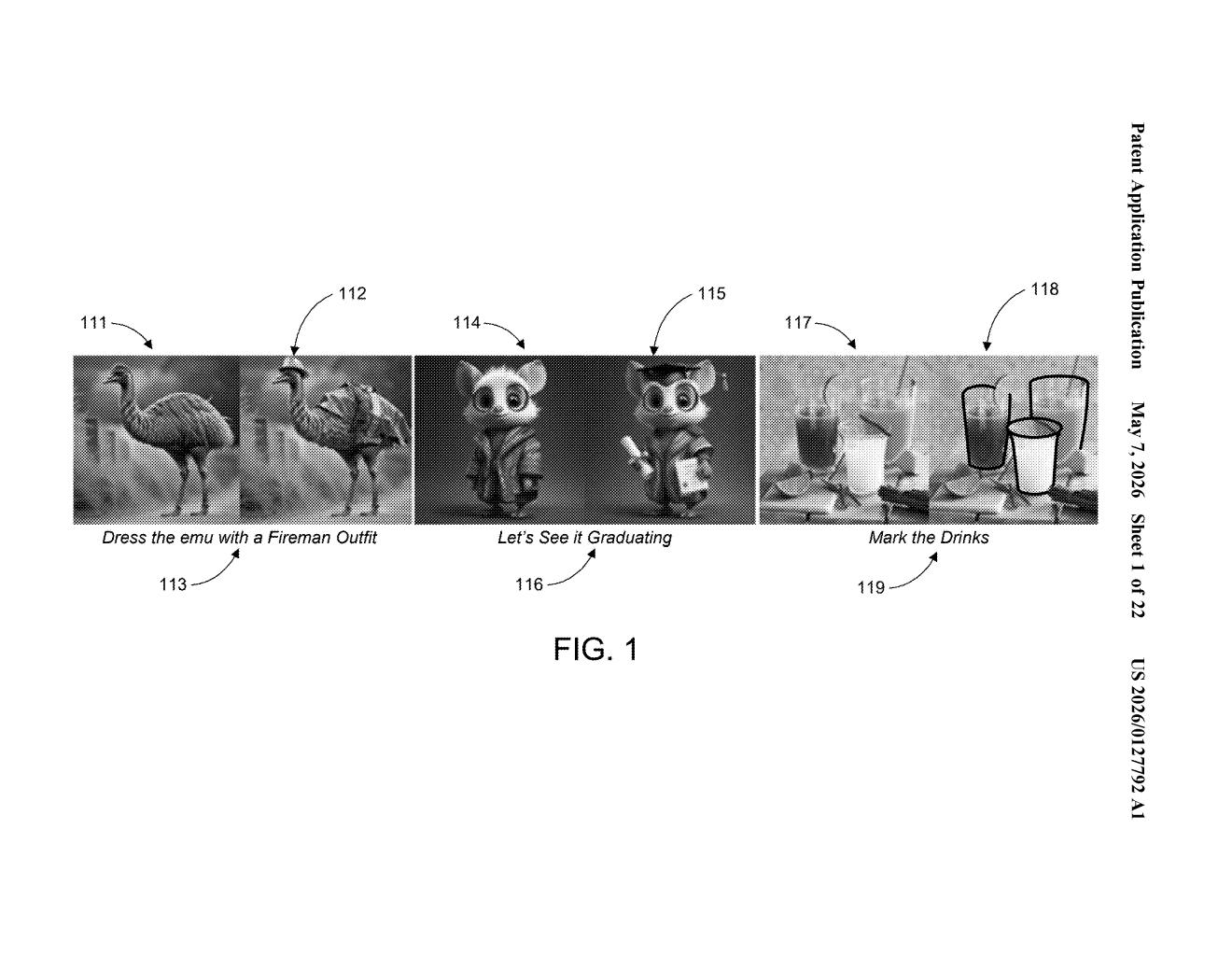

Instead of asking you to pick from a menu of filters or tools, Meta's new patent describes a system that reads what you want done in plain English — and figures out which editing operation to run on its own.

How Meta's instruction-driven image editor works

Imagine you hand someone a photo and say, "make the sky look like sunset" or "remove the person in the background." You don't tell them how to do it — you just describe what you want. Meta's patent is trying to give an AI the same capability.

The system takes your photo and your plain-language instruction, then automatically decides which type of edit to apply — from a pre-built list of edit operations — before generating the updated image. You don't pick a tool; the AI does that routing step for you.

Under the hood, a student model (a smaller, faster AI trained to mimic a larger one) handles both the task-classification step and the image-generation step. That two-in-one design hints at something built for speed and on-device use, not just cloud rendering.

How the student model routes instructions to edit tasks

The patent describes a pipeline with two main stages: edit task selection and image generation.

In the first stage, the system analyzes an input image alongside a natural-language instruction. It then classifies that instruction against a set of predetermined edit tasks — think operations like object removal, style transfer, color adjustment, or background replacement. Rather than treating every edit as a free-form generation problem (which is expensive and unpredictable), the system constrains the problem by routing to a known task type first.
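To make the routing idea concrete, here is a minimal sketch in Python. It is not the patent's method: the patent describes a learned model, and this toy swaps in simple keyword overlap as a stand-in classifier. The task names follow the examples above, while the keyword lists and the `route_instruction` function are hypothetical.

```python
import re
from typing import Optional

# Hypothetical fixed vocabulary of edit tasks with illustrative trigger words.
EDIT_TASKS = {
    "object_removal": {"remove", "erase", "delete"},
    "style_transfer": {"style", "painting", "sketch", "cartoon"},
    "color_adjustment": {"brighter", "darker", "warmer", "saturation", "color"},
    "background_replacement": {"background", "backdrop"},
}

def route_instruction(instruction: str) -> Optional[str]:
    """Return the best-matching edit task, or None if nothing matches."""
    words = set(re.findall(r"[a-z]+", instruction.lower()))
    # Score each task by how many of its trigger words appear in the instruction.
    scores = {task: len(words & keywords) for task, keywords in EDIT_TASKS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None

print(route_instruction("Erase the lamp on the table"))
print(route_instruction("Swap the backdrop for a beach"))
```

Returning `None` for an unmatched instruction makes the limits of a fixed vocabulary explicit: a request either maps to a known task or it is rejected, rather than being handed to an open-ended generator.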

In the second stage, a student model executes the selected edit and produces the output image. A student model is a compressed neural network trained via knowledge distillation, where a smaller model learns to replicate a larger "teacher" model's outputs. That matters because the student is faster and lighter than running a full-size model, such as a large diffusion model, on every request.
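For readers who want to see what "learning to replicate a teacher" looks like numerically, here is a sketch of the widely used soft-target distillation loss. The patent as summarized here does not specify a loss function, so treat this as an illustration of the general technique only. The logits, the temperature value, and the function names are assumptions.

```python
import math

def log_softmax(logits, temperature=1.0):
    """Log-probabilities from logits, softened by the temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    log_total = peak + math.log(sum(math.exp(x - peak) for x in scaled))
    return [x - log_total for x in scaled]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    log_p = log_softmax(teacher_logits, temperature)
    log_q = log_softmax(student_logits, temperature)
    kl = sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))
    return kl * temperature ** 2

# Made-up logits: the loss is zero when the student matches the teacher
# and grows as the two distributions diverge.
teacher = [2.0, 1.0, 0.1]
print(distillation_loss(teacher, [2.0, 1.0, 0.1]))
print(distillation_loss(teacher, [0.1, 1.0, 2.0]))
```

Training drives this number toward zero, which is how a small model ends up imitating a large one without matching its size.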

Key components called out in the patent:

- Instruction parsing to extract a description of the desired content change

- Task classification against a fixed edit-task vocabulary

- A unified student model handling both recognition and generation

- Output image generation conditioned on the selected task and the original image
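The components listed above can be sketched as a single object that owns both stages. This is a toy under loud assumptions: the "image" is a small grid of grayscale values, the two tasks (`brighten`, `invert`) and their trigger words are invented for illustration, and plain functions stand in for what the patent describes as a learned student model.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Image = List[List[int]]  # toy grayscale image: rows of 0-255 values

@dataclass
class EditResult:
    task: str
    image: Image

def brighten(image: Image) -> Image:
    return [[min(255, px + 40) for px in row] for row in image]

def invert(image: Image) -> Image:
    return [[255 - px for px in row] for row in image]

# Hypothetical predetermined tasks: name -> (trigger word, handler).
TASKS: Dict[str, Tuple[str, Callable[[Image], Image]]] = {
    "brighten": ("brighter", brighten),
    "invert": ("invert", invert),
}

class ToyEditor:
    """One object handles both task selection and generation."""

    def select_task(self, instruction: str) -> str:
        text = instruction.lower()
        for name, (trigger, _) in TASKS.items():
            if trigger in text:
                return name
        raise ValueError(f"no predetermined task matches: {instruction!r}")

    def generate(self, image: Image, task: str) -> Image:
        _, handler = TASKS[task]
        # The output depends on both the selected task and the original image.
        return handler(image)

    def edit(self, image: Image, instruction: str) -> EditResult:
        task = self.select_task(instruction)
        return EditResult(task, self.generate(image, task))

result = ToyEditor().edit([[0, 100], [230, 255]], "Make it brighter")
print(result.task, result.image)
```

The caller only ever supplies a photo and a sentence. Which handler runs is decided inside `edit`, which is the routing step the patent moves from the user to the model.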

The claim is notably broad in one respect: it covers both images and video. It is narrower in another, since the edit-task list is described as "predetermined" rather than open-ended.

What this means for AI-powered photo editing on Meta's apps

For Meta, this is infrastructure for the kind of one-tap AI editing that would fit naturally into Instagram and WhatsApp. If your phone can understand "make this look like it was taken at golden hour" and execute it without you navigating an edit menu, that's a meaningfully different user experience — and it keeps people inside Meta's ecosystem rather than jumping to a third-party app.

The student model framing is the technically interesting part. It suggests Meta is optimizing for inference speed and potentially on-device deployment, which would have real implications for privacy (your photo doesn't have to leave your phone) and latency. The constrained task-routing design also makes the system more predictable and auditable than a fully open-ended generative model.

This is a well-trodden space — Adobe, Google, and Apple have all filed similar instruction-following image-edit patents — but Meta's explicit use of a student model for both routing and generation is a specific architectural bet worth noting. The real question is whether the predetermined task vocabulary is broad enough to cover what users actually ask for, or whether it becomes a ceiling on what the system can do.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.