Nvidia Patents a Bird's Eye View Generator for Embedded Vision Systems

Nvidia has filed a patent application describing a way to convert raw stereo camera depth data into a bird's eye view image, all on a low-power embedded chip with no beefy GPU required.

How Nvidia turns stereo camera depth into a top-down map

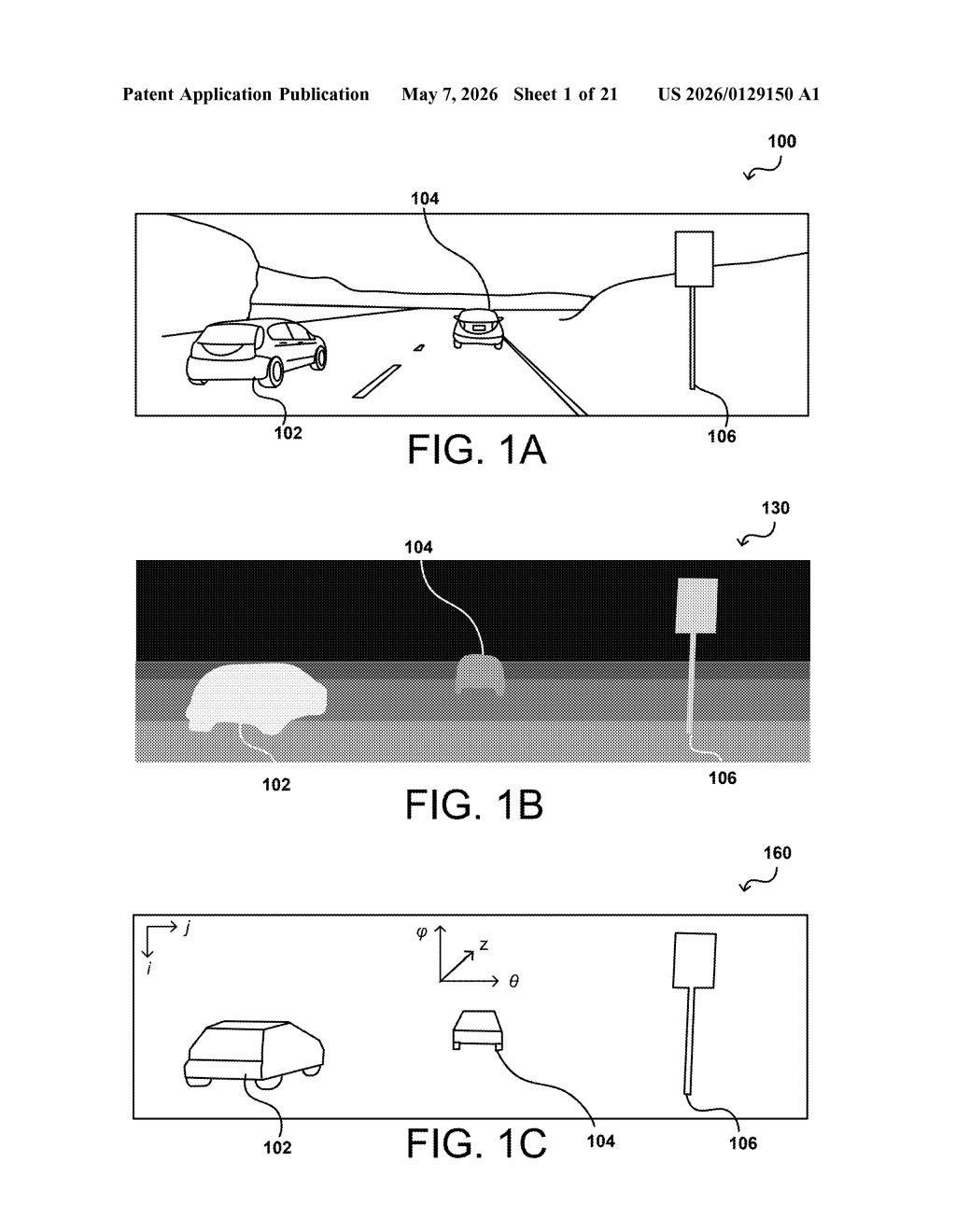

Imagine you're driving and your car's cameras are trying to figure out where every pedestrian, cyclist, and parked truck is around you. A front-facing stereo camera pair gives you depth information — but that raw data is hard to act on directly. What's more useful is a top-down map that shows where everything is relative to your car, like a chess board view of the world.

That's exactly what this Nvidia patent describes. It takes the disparity data from a stereo camera (the per-pixel shift between the left and right images, from which depth is derived) and converts it into a clean bird's eye view image. Crucially, the whole process is designed to run on a small, low-power embedded processor, the kind you'd find in a car's vision system, not a data center machine.

The clever bit is an intermediate step: the system first converts the disparity data into a 2D histogram organized by angle and distance, then transforms that into the final overhead map. This keeps the math lightweight enough for constrained hardware.

How the 2D histogram bridges disparity data and bird's eye view

The system starts with stereo disparity data: a per-pixel map of how far each point shifts between the two camera images when they see the same scene from slightly different positions. That shift is inversely related to depth, so nearby objects shift a lot and distant ones barely move. Instead of processing that disparity map directly into a top-down image (computationally expensive), Nvidia's approach adds an intermediate step.
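To make the link between disparity and depth concrete, here is a minimal Python sketch of the standard pinhole stereo relation, depth = focal length × baseline / disparity. This is textbook stereo geometry rather than anything quoted from the patent, and the function name and rig numbers (800 px focal length, 12 cm baseline) are made up for illustration.

```python
def disparity_to_depth(disparity_px, focal_px, baseline_m):
    """Return depth in metres for one disparity value, or None if invalid.

    Assumes a rectified stereo pair: focal_px is the focal length in pixels,
    baseline_m the distance between the two cameras in metres.
    """
    if disparity_px <= 0:
        return None  # no stereo match, or a point effectively at infinity
    return focal_px * baseline_m / disparity_px


# Hypothetical rig values, chosen only for illustration.
for d in (48.0, 12.0, 3.0):
    depth = disparity_to_depth(d, focal_px=800.0, baseline_m=0.12)
    print(f"disparity {d:5.1f} px -> depth {depth:.2f} m")
```

Note how the smallest disparities map to the largest depths: a one-pixel error matters far more for distant objects than for close ones.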

First, the embedded processor (a low-power chip with DMA, or Direct Memory Access, meaning it can read and write large chunks of memory efficiently without leaning on a main CPU) builds a 2D histogram of the scene. This histogram organizes detected objects as a function of angle from the camera and distance to the camera plane. Think of it as a polar-style snapshot of the scene.
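A rough Python sketch of that histogram step might look like the following. It is not the patent's implementation: the binning scheme, the function name, and every parameter (`focal_px`, `baseline_m`, `cx`, the bin counts and limits) are assumptions for illustration, and the toy numbers are not realistic camera values.

```python
import math


def build_angle_distance_histogram(disparity, focal_px, baseline_m, cx,
                                   n_angle_bins, n_dist_bins,
                                   max_angle_rad, max_dist_m):
    """Count valid disparity pixels per (angle bin, distance bin)."""
    hist = [[0] * n_dist_bins for _ in range(n_angle_bins)]
    for row in disparity:
        for u, d in enumerate(row):
            if d <= 0:
                continue  # no stereo match at this pixel
            dist = focal_px * baseline_m / d      # distance to the camera plane
            angle = math.atan2(u - cx, focal_px)  # horizontal angle of this column
            if dist >= max_dist_m or abs(angle) >= max_angle_rad:
                continue  # outside the area the histogram covers
            a_bin = int((angle + max_angle_rad) / (2 * max_angle_rad) * n_angle_bins)
            d_bin = int(dist / max_dist_m * n_dist_bins)
            # Clamp to guard against floating-point rounding at the top edge.
            hist[min(a_bin, n_angle_bins - 1)][min(d_bin, n_dist_bins - 1)] += 1
    return hist


# Toy 2x5 disparity map: 0 means "no match".
toy_disparity = [
    [0, 4, 4, 0, 2],
    [0, 4, 4, 0, 2],
]
hist = build_angle_distance_histogram(
    toy_disparity, focal_px=10.0, baseline_m=1.0, cx=2.0,
    n_angle_bins=5, n_dist_bins=5, max_angle_rad=0.25, max_dist_m=10.0,
)
for a_bin, counts in enumerate(hist):
    print(a_bin, counts)
```

Each pixel costs one division, one arctangent, and one increment, and on real hardware the arctangent depends only on the column, so it could be precomputed once per column.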

From this histogram, the system computes centroids and statistics for each detected object — essentially finding the center and shape of each blob in the histogram — then converts those into a Cartesian coordinate system (standard X/Y grid). The final output is a bird's eye view image suitable for downstream tasks like object tracking or path planning.
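The centroid and coordinate conversion can be sketched in a few lines of Python. This is an illustrative guess at the arithmetic, not the patent's method: it assumes the cells belonging to one blob have already been identified (for example by a connected-component pass, which is not shown), and all names and bin parameters are hypothetical. Because the histogram's distance axis is distance to the camera plane, the lateral offset is taken as x = z · tan(angle).

```python
import math


def blob_centroid(hist, cells):
    """Count-weighted centroid (angle bin, distance bin) of one blob."""
    total = sum(hist[a][d] for a, d in cells)
    a_mean = sum(a * hist[a][d] for a, d in cells) / total
    d_mean = sum(d * hist[a][d] for a, d in cells) / total
    return a_mean, d_mean, total


def bin_to_cartesian(a_bin, d_bin, n_angle_bins, n_dist_bins,
                     max_angle_rad, max_dist_m):
    """Map a (possibly fractional) bin position to lateral x, forward z in metres."""
    # Adding 0.5 moves from a bin's lower edge to its centre.
    angle = (a_bin + 0.5) / n_angle_bins * 2 * max_angle_rad - max_angle_rad
    z = (d_bin + 0.5) / n_dist_bins * max_dist_m
    x = z * math.tan(angle)  # z is measured to the camera plane, not along the ray
    return x, z


# Toy 5x5 histogram with one blob spread over two neighbouring angle bins.
hist = [[0] * 5 for _ in range(5)]
hist[1][1] = 6
hist[2][1] = 2
blob_cells = [(1, 1), (2, 1)]  # assumed output of an earlier labelling step

a_c, d_c, count = blob_centroid(hist, blob_cells)
x, z = bin_to_cartesian(a_c, d_c, n_angle_bins=5, n_dist_bins=5,
                        max_angle_rad=0.25, max_dist_m=10.0)
print(f"centroid bins=({a_c:.2f}, {d_c:.2f}) count={count} -> x={x:.3f} m, z={z:.3f} m")
```

Only one (x, z) pair is converted per object rather than one per pixel, which is part of why a histogram-first pipeline can stay cheap.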

One notable detail: morphological filtering (image cleanup operations such as erosion and dilation, which remove speckle and fill small gaps) is applied using the same filter size for all objects regardless of their distance from the camera. This simplifies processing on hardware that can't afford per-object variable-size operations.
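As an illustration of fixed-size morphological filtering, the Python sketch below runs a binary closing (dilation followed by erosion) over a small occupancy grid with a single constant radius. The choice of closing, the square 3x3 window, and the grid itself are assumptions for the example; the source only says that one filter size is used for every object.

```python
FILTER_RADIUS = 1  # one constant size for the whole grid: a 3x3 window


def _window(grid, r, c, radius):
    """Yield the values in the square window around (r, c), clipped at the borders."""
    rows, cols = len(grid), len(grid[0])
    for rr in range(max(0, r - radius), min(rows, r + radius + 1)):
        for cc in range(max(0, c - radius), min(cols, c + radius + 1)):
            yield grid[rr][cc]


def dilate(grid, radius=FILTER_RADIUS):
    """A cell becomes 1 if any cell in its window is 1."""
    return [[int(any(_window(grid, r, c, radius))) for c in range(len(grid[0]))]
            for r in range(len(grid))]


def erode(grid, radius=FILTER_RADIUS):
    """A cell stays 1 only if every cell in its window is 1."""
    return [[int(all(_window(grid, r, c, radius))) for c in range(len(grid[0]))]
            for r in range(len(grid))]


def close_gaps(grid, radius=FILTER_RADIUS):
    """Binary closing: dilation then erosion with the same window."""
    return erode(dilate(grid, radius), radius)


# One object split in two by a single missing cell.
grid = [
    [0, 0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
    [0, 0, 1, 0, 1, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
]
closed = close_gaps(grid)
for row in closed:
    print(row)
```

Because the window never changes size, every cell gets the same fixed amount of work, which suits hardware that moves data in regular, predictable blocks.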

What this means for real-time object detection in vehicles

Bird's eye view generation is a core building block in autonomous driving and ADAS (Advanced Driver Assistance Systems). The challenge has always been doing it fast enough and cheaply enough to run on the embedded hardware inside a car rather than offloading to a powerful compute module. This patent is squarely aimed at that constraint — making a computationally lean pipeline that works on limited silicon.

For you as a driver, this kind of technology is what lets a car's safety system accurately place a cyclist in your blind spot or warn you about a pedestrian about to step into the road. More efficient processing means faster response times and potentially lower-cost hardware in production vehicles — which matters a lot as automakers try to bring these features down-market.

This is solid, unglamorous engineering work — the kind of patent that quietly ends up inside shipping products. Nvidia's automotive ambitions (DRIVE platform) make this a natural fit, and the explicit focus on embedded/DMA hardware tells you this is aimed at real production constraints, not a research demo. Worth watching if you follow the ADAS or autonomous vehicle space.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.