Waymo Patents Transformer-Based AI System for Building Robotaxi Navigation Maps

Waymo is borrowing the same AI architecture that powers ChatGPT to help its self-driving cars understand what's around them — and turn that understanding directly into navigation decisions.

How Waymo's AI reads the road in real time

Imagine you're driving through an unfamiliar neighborhood. You glance around, mentally note the stop sign ahead, the cyclist on your right, the parked truck blocking the lane — and instantly build a mental picture you use to steer. Waymo's patent describes a system that does something very similar, but with AI.

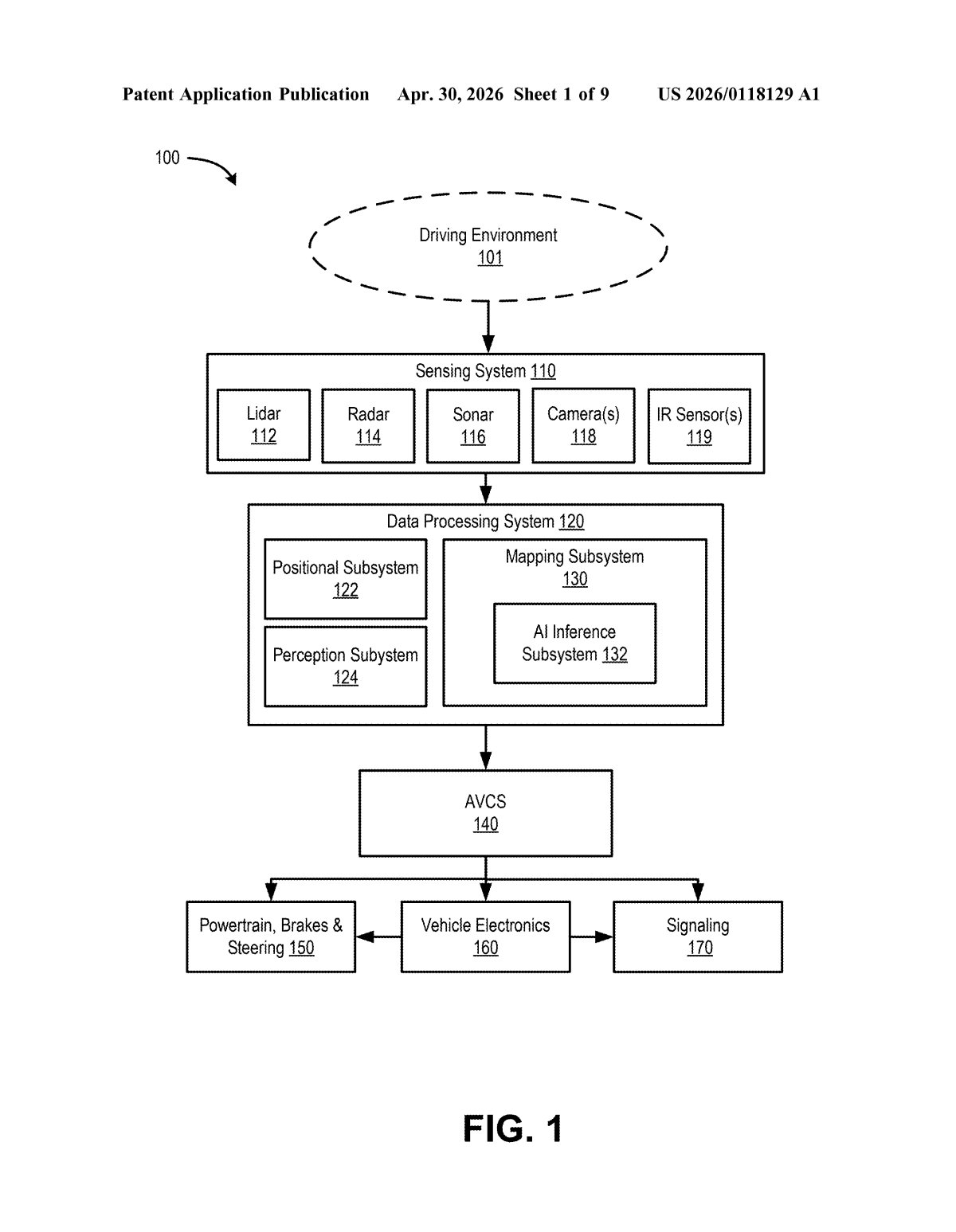

Sensors on the robotaxi — cameras, lidar, radar — feed raw data into an AI model. That model, using the same transformer architecture behind modern large language models, converts the raw sensor soup into a compact, structured map of the driving environment. That map is then handed off to the car's navigation system to decide where to go next.

The clever part is the targeted querying: instead of processing every sensor reading with equal attention, the system uses specific "queries" to direct the AI toward the parts of the scene that actually matter for navigation — think of it like a spotlight that focuses on relevant details rather than drowning in noise.
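The "spotlight" is the softmax weighting inside transformer attention. The toy Python sketch below uses made-up four-number features for three scene regions and a hand-picked query. None of these values come from the patent, and a real system learns both its features and its queries. It shows how a query that lines up with one region's features pulls most of the attention weight toward that region.

```python
import math

def softmax(scores):
    # Subtract the max for numerical stability before exponentiating.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    # The output is the attention-weighted average of the value vectors.
    out = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(len(values[0]))]
    return weights, out

# Hypothetical 4-dimensional features for three regions of the scene.
scene_tokens = {
    "crosswalk": [2.0, 0.0, 0.0, 0.0],
    "parked_truck": [0.0, 2.0, 0.0, 0.0],
    "sky": [0.0, 0.0, 2.0, 0.0],
}
keys = list(scene_tokens.values())
values = keys  # In this toy example, keys and values are the same vectors.

# A hypothetical query whose direction matches the crosswalk features.
pedestrian_query = [3.0, 0.0, 0.0, 0.0]
weights, summary = attend(pedestrian_query, keys, values)
for name, w in zip(scene_tokens, weights):
    print(f"{name}: {w:.3f}")
```

With these numbers the crosswalk token gets roughly 91% of the weight and the other two regions about 4.5% each, so the resulting summary vector is dominated by the crosswalk features.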

How the transformer decoder queries build driving embeddings

The patent describes a mapping subsystem that sits between a self-driving car's raw sensors and its navigation planner. Here's the pipeline:

- Input embedding generation: Sensor data (lidar point clouds, camera frames, etc.) is converted into an input embedding, a dense, high-dimensional numerical representation that encodes the driving environment.

- Transformer decoder queries: The system selects one or more decoder queries. Loosely, think of these as questions directed at specific portions of the embedding, like "what's in the lane ahead?" or "is there a pedestrian at the crosswalk?" The underlying attention operation is the same one used in models like GPT, but here it runs as cross-attention: the queries attend to the sensor-derived embedding rather than to preceding text, so the mechanism is aimed at spatial scene understanding.

- Driving environment embeddings: The transformer decoder processes those queries against the input embedding and outputs driving environment embeddings — compact vector representations of individual features in the scene (a lane boundary, an obstacle, a traffic light state).

- Navigation handoff: Those embeddings are passed directly to the navigation system, which uses them to plan the vehicle's path.
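To make the four stages concrete, here is a deliberately tiny pure-Python sketch. Everything in it is an assumption for illustration: the 8-dimensional embeddings, the random linear projection standing in for a sensor encoder, the four random decoder queries, the simplified single-head cross-attention (no separate key, value, or output projections), and the hypothetical `plan` function, which is a placeholder and not how Waymo's planner works. It only traces how data could flow from sensor readings to a fixed set of embeddings and then to a navigation choice.

```python
import math
import random

random.seed(0)
D = 8  # embedding width (hypothetical)

def matvec(matrix, vec):
    return [sum(m * v for m, v in zip(row, vec)) for row in matrix]

def random_matrix(rows, cols):
    return [[random.gauss(0.0, 1.0 / math.sqrt(cols)) for _ in range(cols)] for _ in range(rows)]

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Stage 1: input embedding generation. Each raw sensor reading (a made-up
# 3-value feature) is projected into a D-dimensional token.
W_in = random_matrix(D, 3)

def embed_sensors(raw_features):
    return [matvec(W_in, f) for f in raw_features]

# Stages 2 and 3: each decoder query cross-attends to the input tokens and
# yields one driving environment embedding.
def decode(queries, tokens):
    outputs = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, t)) / math.sqrt(D) for t in tokens]
        w = softmax(scores)
        outputs.append([sum(wi * t[i] for wi, t in zip(w, tokens)) for i in range(D)])
    return outputs

# Stage 4: navigation handoff. A hypothetical placeholder planner that pools
# the embeddings and picks the best-scoring candidate maneuver.
def plan(env_embeddings, maneuver_weights):
    pooled = [sum(e[i] for e in env_embeddings) / len(env_embeddings) for i in range(D)]
    scores = {name: sum(a * b for a, b in zip(w, pooled)) for name, w in maneuver_weights.items()}
    return max(scores, key=scores.get)

raw = [[random.random() for _ in range(3)] for _ in range(50)]  # 50 fake sensor readings
tokens = embed_sensors(raw)
queries = random_matrix(4, D)  # 4 decoder queries (learned in practice, random here)
env = decode(queries, tokens)
maneuvers = {"keep_lane": random_matrix(1, D)[0], "yield": random_matrix(1, D)[0]}
print(len(tokens), len(env), plan(env, maneuvers))
```

Note that the planner only ever sees four compact embeddings, not the 50 raw readings; that size reduction is the point of the query-based design.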

The key architectural insight is using transformer decoders with learnable queries — a technique also seen in object detection models like DETR — to produce structured, navigation-ready scene representations rather than generic feature maps.
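The DETR-style idea can be sketched as follows, again with made-up sizes and untrained random weights, so the predicted labels are meaningless. The learned queries are fixed model parameters, so any scene, whether it produces 10 tokens or 1,000, comes out as exactly the same number of slot embeddings, each tagged with a class. The class list and the per-slot classification head are hypothetical; the `no_object` label mirrors DETR's approach of letting unused slots predict "nothing here".

```python
import math
import random

random.seed(1)
D = 8            # embedding width (hypothetical)
NUM_QUERIES = 5  # number of learned query slots (hypothetical)
CLASSES = ["lane_boundary", "obstacle", "traffic_light", "no_object"]

def rand_vec(n):
    return [random.gauss(0.0, 1.0) for _ in range(n)]

def softmax(xs):
    m = max(xs)
    e = [math.exp(x - m) for x in xs]
    s = sum(e)
    return [x / s for x in e]

# Learned queries: model parameters shared by every scene. Random here;
# in training they would be updated by gradient descent.
learned_queries = [rand_vec(D) for _ in range(NUM_QUERIES)]
# Hypothetical per-slot classification head: one weight vector per class.
class_head = {c: rand_vec(D) for c in CLASSES}

def decode_slots(tokens):
    slots = []
    for q in learned_queries:
        w = softmax([sum(a * b for a, b in zip(q, t)) / math.sqrt(D) for t in tokens])
        emb = [sum(wi * t[i] for wi, t in zip(w, tokens)) for i in range(D)]
        # Each slot is labeled; "no_object" lets unused slots stay empty.
        label = max(CLASSES, key=lambda c: sum(a * b for a, b in zip(class_head[c], emb)))
        slots.append((label, emb))
    return slots

small_scene = [rand_vec(D) for _ in range(10)]
large_scene = [rand_vec(D) for _ in range(1000)]
for scene in (small_scene, large_scene):
    slots = decode_slots(scene)
    print(len(scene), "tokens ->", len(slots), "slots:", [label for label, _ in slots])
```

That fixed, slot-based output is what makes the representation easy to hand to a planner: a short list of typed items rather than a dense feature grid whose size grows with the area covered.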

What this means for the future of Waymo's robotaxi maps

For Waymo, the ability to generate compact, query-targeted scene representations matters because it could replace or augment the painstaking process of pre-building HD maps of every street the car will ever drive. If the car can reliably build its own real-time map from sensor data, it becomes less dependent on those expensive, frequently outdated static maps. Producing and maintaining them is a genuine bottleneck to scaling a robotaxi fleet into new cities.

More broadly, applying transformer-based attention to AV perception is a bet that the same scaling laws that made LLMs so capable will also improve autonomous driving. You wouldn't notice any of this as a Waymo passenger — but under the hood, it represents a meaningful architectural shift in how the car understands the world around it.

This is a technically meaningful filing, not marketing fluff. Using transformer decoders with learned queries for AV scene understanding is a real architectural choice with real tradeoffs — it aligns Waymo's perception stack more closely with the broader ML research mainstream, which makes it easier to benefit from advances in foundation models. Whether it beats their existing approaches in production is the interesting open question.


Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.