Nvidia Patents a Compiler That Auto-Partitions Code Into GPU Warps

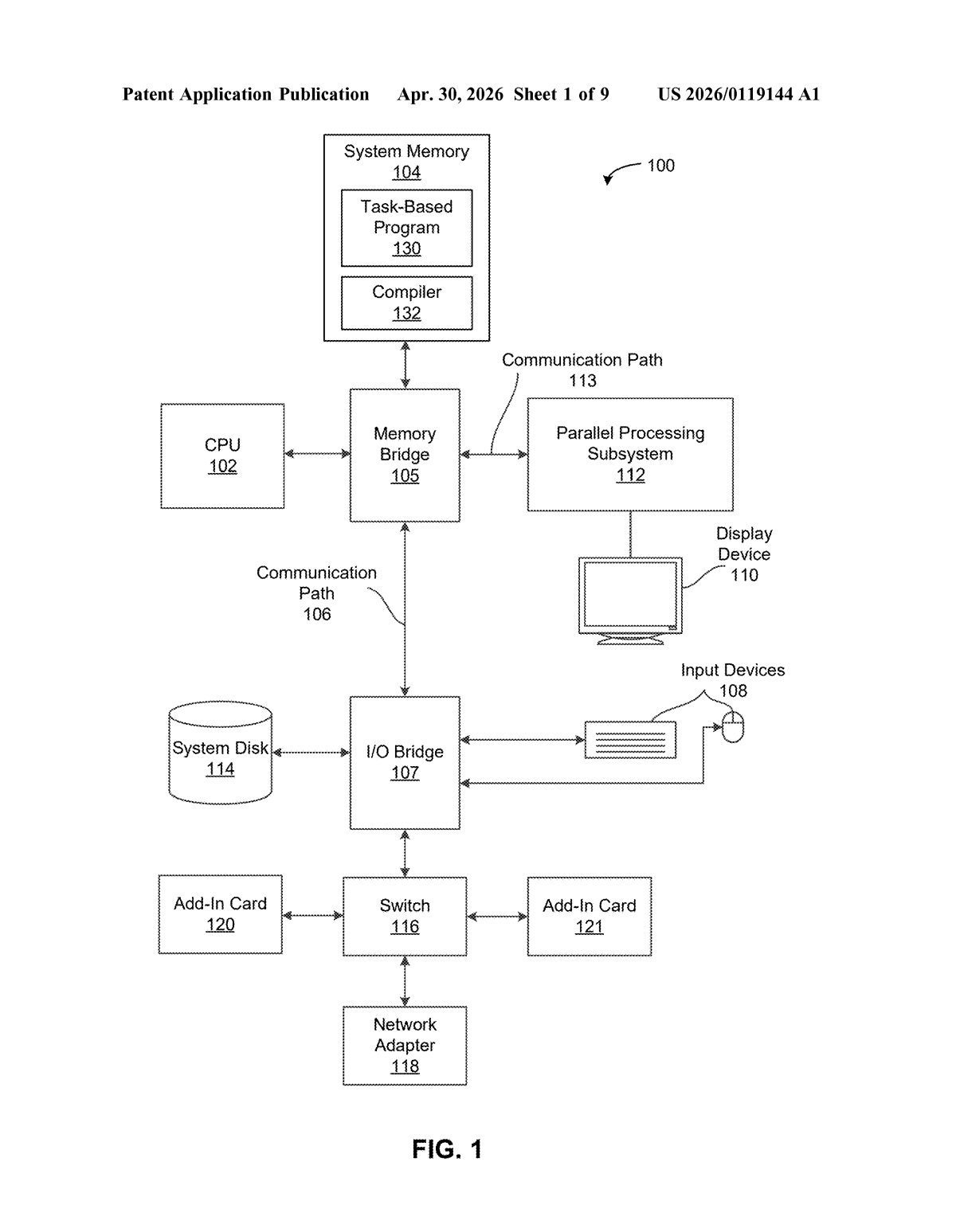

Writing GPU code that runs efficiently often requires hand-tuning how work is divided across groups of hardware threads called warps, a notoriously tedious job. Nvidia's new patent describes a compiler that does that partitioning automatically.

How Nvidia's compiler handles warp assignment for you

Imagine you're cooking a big meal and you have to manually assign every task (chopping, boiling, seasoning) to each cook on your team. Miss a dependency (the sauce needs the chopped onions first!) and the whole thing falls apart. Writing GPU programs today involves a similar headache: developers often have to manually tell the GPU how to split work across warps, the fixed-size groups of threads that the hardware schedules and executes together.
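To make the manual version concrete, here is a minimal sketch, in Python rather than CUDA, of the index arithmetic a developer typically writes by hand. The warp size of 32 matches Nvidia GPUs; the `manual_role` split (one warp loads, the rest compute) is a hypothetical example for illustration and is not taken from the patent.

```python
WARP_SIZE = 32  # threads per warp on Nvidia GPUs

def warp_and_lane(thread_idx):
    """Split a thread's index within its block into (warp id, lane id)."""
    return thread_idx // WARP_SIZE, thread_idx % WARP_SIZE

def manual_role(thread_idx):
    """Hypothetical hand-written assignment: warp 0 loads data, the rest compute."""
    warp_id, _ = warp_and_lane(thread_idx)
    return "load" if warp_id == 0 else "compute"

for t in (0, 31, 32, 127):
    print(t, warp_and_lane(t), manual_role(t))
```

Every such role split is a decision the programmer has to get right and keep in sync with the data dependencies, which is the chore the patent aims to hand to the compiler.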

Nvidia's patent describes a compiler (the tool that translates human-readable code into instructions a chip can run) that handles this division automatically. You write your program, and the compiler figures out which pieces of work can safely run in parallel, strips out bookkeeping such as parallel-loop structure and redundant copies, and assigns chunks of work to warps on its own.

The practical upshot: developers could write cleaner, higher-level code without worrying as much about low-level GPU scheduling details, and the compiler takes care of making it run efficiently on the hardware.

How the dependence graph drives warp partitioning

The compiler works by building a dependence graph — essentially a map of which operations in your code depend on the results of other operations. Think of it like a flowchart showing what has to finish before something else can start.
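As a rough illustration of the idea, the sketch below builds a tiny dependence graph from a made-up list of operations. The operation names, the `(name, reads, write)` format, and `build_dependence_graph` are all assumptions for this example; the patent's actual representation is not described here, and the sketch tracks only "reads a value someone else wrote" dependences.

```python
from collections import defaultdict

# Hypothetical toy program: (operation name, values it reads, value it writes).
OPS = [
    ("load_a", [], "a"),
    ("load_b", [], "b"),
    ("mul", ["a", "b"], "c"),
    ("add", ["c", "a"], "d"),
    ("store", ["d"], "out"),
]

def build_dependence_graph(ops):
    """Map each operation to the set of operations whose results it reads."""
    producer = {}             # value name -> operation that most recently wrote it
    deps = defaultdict(set)
    for name, reads, write in ops:
        deps[name]            # touch the entry so ops with no dependences still appear
        for value in reads:
            if value in producer:
                deps[name].add(producer[value])
        producer[write] = name
    return dict(deps)

graph = build_dependence_graph(OPS)
for op, needs in graph.items():
    print(op, "waits for", sorted(needs))
```

Operations with no path between them in this map (here, `load_a` and `load_b`) are the ones that can safely run at the same time.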

From there, the patent describes a three-stage pipeline:

- Remove parallel loops: Loops that can safely run in parallel are identified and flattened out of the graph, making the structure easier to analyze.

- Remove copy operations: Redundant data-movement steps (copies that exist just to move data around without transforming it) are pruned away, tightening the graph further.

- Allocate memory and assign sub-graphs to warps: The compiler then decides where data lives in memory and which sub-graph — meaning which chunk of the overall computation — gets handed off to which warp (a group of 32 GPU threads that execute in lockstep).
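The three stages above can be sketched on a toy graph. This is a simplified illustration under stated assumptions, not the patent's algorithm: the node names are invented, loops and copies are modeled as single nodes that get spliced out, memory allocation is skipped, and "sub-graph" is taken to mean a disconnected piece of the simplified graph.

```python
# Toy graph: node -> set of predecessor nodes. All names are hypothetical.
PREDS = {
    "load_x": set(),
    "loop_i": {"load_x"},      # parallel loop header
    "scale": {"loop_i"},       # loop body
    "copy_tmp": {"scale"},     # pure copy, no transformation
    "store_x": {"copy_tmp"},
    "load_y": set(),
    "negate": {"load_y"},
    "store_y": {"negate"},
}
KIND = {"loop_i": "parallel_loop", "copy_tmp": "copy"}  # all other nodes are plain ops

def splice_out(preds, node):
    """Remove a node and reconnect its successors to its predecessors."""
    incoming = preds.pop(node)
    for deps in preds.values():
        if node in deps:
            deps.remove(node)
            deps |= incoming

def remove_kind(preds, kind_of, kind):
    """Splice out every node of the given kind."""
    for node in [n for n in preds if kind_of.get(n) == kind]:
        splice_out(preds, node)

def connected_subgraphs(preds):
    """Group nodes into weakly connected components."""
    neighbors = {n: set(p) for n, p in preds.items()}
    for node, deps in preds.items():
        for dep in deps:
            neighbors[dep].add(node)
    seen, groups = set(), []
    for start in preds:
        if start in seen:
            continue
        stack, group = [start], []
        seen.add(start)
        while stack:
            node = stack.pop()
            group.append(node)
            for nxt in neighbors[node] - seen:
                seen.add(nxt)
                stack.append(nxt)
        groups.append(sorted(group))
    return groups

def partition(preds, kind_of):
    preds = {n: set(p) for n, p in preds.items()}   # work on a copy
    remove_kind(preds, kind_of, "parallel_loop")    # stage 1: remove parallel loops
    remove_kind(preds, kind_of, "copy")             # stage 2: remove copy operations
    groups = connected_subgraphs(preds)             # stage 3: one sub-graph per warp
    return dict(enumerate(groups))

warps = partition(PREDS, KIND)
for warp_id, ops in warps.items():
    print(f"warp {warp_id}: {ops}")
```

Splitting by connected components is the simplest possible assignment policy; a production compiler would presumably also split dependent stages across warps and weigh memory placement, which this sketch leaves out.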

The output is new, optimized program code built from those warp-assigned sub-graphs. The key insight is that by working at the graph level rather than the raw instruction level, the compiler can reason about parallelism and data movement more cleanly before committing to a hardware layout.

What this means for GPU programmers and CUDA tooling

Writing efficient GPU code is a real bottleneck in AI and scientific computing today. Tools like CUDA gave programmers access to GPU parallelism, but getting good performance out of them still demands a lot of manual tuning. A compiler that automates warp partitioning could lower the barrier for writing high-performance GPU code, which matters as more workloads (inference, simulation, rendering) move onto Nvidia hardware.

For Nvidia specifically, better compiler tooling strengthens the moat around its software ecosystem. If your code automatically runs better on Nvidia GPUs than on competitors' hardware, that's a lock-in advantage that goes well beyond chip specs. Developers and researchers who don't want to think about warp scheduling are the exact audience this targets.

This is solid compiler infrastructure work, not a flashy product announcement, but compiler quality is one of the underrated reasons Nvidia's GPU ecosystem is hard to displace. Auto-partitioning code into warps removes a real pain point for GPU developers, and if this lands in a future version of the CUDA compiler or a higher-level framework, it's the kind of quiet improvement that makes Nvidia's platform stickier.


Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.