Sony Patents an AI That Watches Your Game and Writes What It Sees

Sony has filed a patent application for an AI layer that watches gameplay footage and automatically writes descriptions of what is happening on screen: think auto-subtitles, but for the visual action itself.

What Sony's AI scene annotation system actually does

Imagine watching a cutscene in a game and having your console quietly figure out: "a warrior is charging toward a castle gate at dusk." No human writer needed. That's the core idea here.

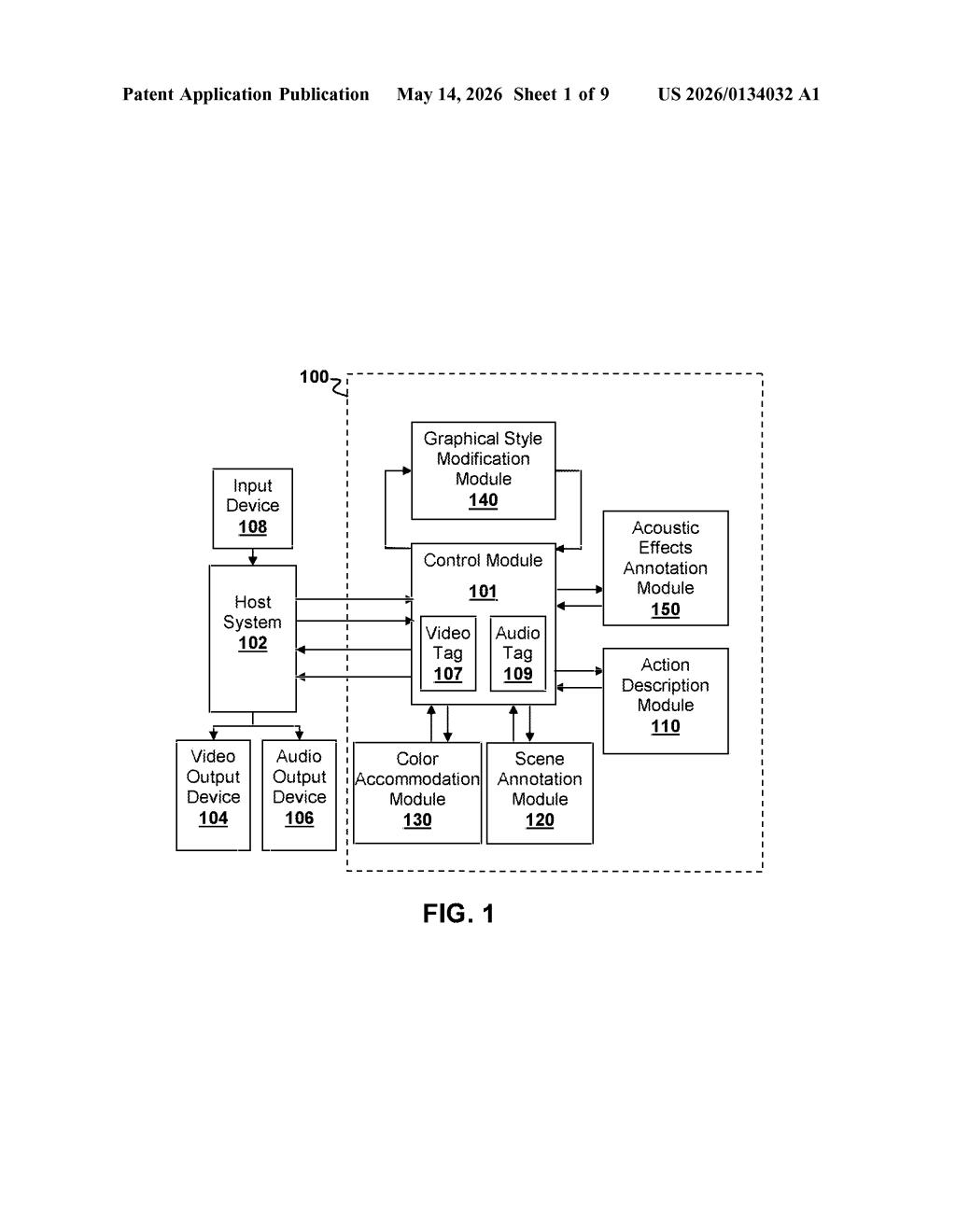

Sony's patent describes a system that takes individual video frames from a game or other audio-visual content and runs them through two AI components. The first figures out what's in the scene — characters, objects, environments. The second figures out what's happening — the action unfolding across one or more frames — and puts both into plain-language descriptions.

The system sits alongside other modules for things like acoustic effects and graphical style changes, suggesting it's designed as one piece of a larger content-enhancement pipeline. Whether that's for accessibility features, streaming overlays, or something else entirely, the patent doesn't say outright — but the building blocks are clearly there.

How the dual neural network pipeline captions frames

The patent describes a pipeline, coupled to a controller, with two main AI modules that process incoming image frames from a host system (such as a PlayStation console or a cloud game server).

The Scene Annotation Module handles static understanding of a single frame. It uses:

- A first neural network that converts a raw image frame into a feature vector (a compact numerical representation of what the image contains)

- A second neural network that takes that feature vector and generates a natural-language caption describing the scene's elements — objects, characters, environments
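
The two-stage flow in that list can be sketched in a few lines of Python. This is a toy illustration of the data flow only, not Sony's implementation: the function names (`encode_frame`, `decode_caption`, `annotate_scene`), the frame format, and the brightness heuristics are all assumptions, and simple arithmetic stands in for the two trained neural networks.

```python
from typing import List

# Assumption: a frame is a grid of grayscale pixel intensities in [0, 1].
Frame = List[List[float]]
FeatureVector = List[float]


def encode_frame(frame: Frame) -> FeatureVector:
    """Stand-in for the first network: image frame -> feature vector.

    A trained image encoder would go here. This toy version reduces the
    frame to two numbers: mean brightness of the top and bottom halves.
    """
    half = len(frame) // 2

    def mean(rows: Frame) -> float:
        pixels = [p for row in rows for p in row]
        return sum(pixels) / len(pixels)

    return [mean(frame[:half]), mean(frame[half:])]


def decode_caption(features: FeatureVector) -> str:
    """Stand-in for the second network: feature vector -> caption.

    A trained caption generator would go here. This toy version maps
    the two brightness features to fixed phrases.
    """
    top, bottom = features
    sky = "a bright sky" if top > 0.5 else "a dark sky"
    ground = "light ground" if bottom > 0.5 else "dark ground"
    return f"{sky} over {ground}"


def annotate_scene(frame: Frame) -> str:
    """Chain the two stages, mirroring the encoder-then-captioner split."""
    return decode_caption(encode_frame(frame))
```

Calling `annotate_scene` on a four-row frame whose top two rows are bright and whose bottom two rows are dark returns "a bright sky over dark ground". The point of the split is that the caption stage never sees raw pixels, only the compact feature vector.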

The Action Description Module handles the temporal layer — it looks across one or more frames to recognize motion and activity, then generates a description of the action taking place. This is a meaningfully different task from scene captioning: understanding that a character is running or attacking requires tracking change over time, not just reading a single snapshot.
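
To see why the temporal task differs from single-frame captioning, here is a minimal sketch that infers motion by comparing a subject's position across frames. It is an assumption-laden toy: the names `bright_centroid` and `describe_action` are hypothetical, the "subject" is simply whatever pixels exceed a brightness threshold, and a real action recognizer would be a trained model rather than a centroid comparison.

```python
from typing import List, Optional, Tuple

# Assumption: a frame is a grid of grayscale pixel intensities in [0, 1].
Frame = List[List[float]]


def bright_centroid(
    frame: Frame, threshold: float = 0.5
) -> Optional[Tuple[float, float]]:
    """Return the (row, col) centroid of pixels above threshold, or None."""
    points = [
        (r, c)
        for r, row in enumerate(frame)
        for c, pixel in enumerate(row)
        if pixel > threshold
    ]
    if not points:
        return None
    n = len(points)
    return (sum(r for r, _ in points) / n, sum(c for _, c in points) / n)


def describe_action(frames: List[Frame]) -> str:
    """Toy action description: compare the subject's column over time."""
    centroids = [c for c in map(bright_centroid, frames) if c is not None]
    if len(centroids) < 2:
        # A single snapshot carries no information about change over time.
        return "no motion can be inferred"
    first_col = centroids[0][1]
    last_col = centroids[-1][1]
    shift = last_col - first_col
    if abs(shift) < 0.5:
        return "the subject is standing still"
    if shift > 0:
        return "the subject is moving right"
    return "the subject is moving left"
```

With three one-row frames in which a single bright pixel steps from column 0 to column 2, `describe_action` returns "the subject is moving right". Given only one of those frames, it returns "no motion can be inferred", which is the snapshot limitation the paragraph above describes.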

The patent also references companion modules — an Acoustic Effects Annotation Module, an Audio Tag Module, and a Graphical Style Modification Module — suggesting this annotation system is intended to plug into a broader content-enhancement or post-processing pipeline rather than operate as a standalone tool.
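
One way to picture that plug-in arrangement is a list of modules that each add their own annotation to a shared record. The patent does not say how the modules are wired together, so everything here is an assumption for illustration: the `Content` container, the `Module` signature, the `run_pipeline` helper, and the placeholder strings each module writes.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Content:
    """Hypothetical container for one chunk of audio-visual content."""
    frames: List[str]        # placeholder frame identifiers
    audio_clips: List[str]   # placeholder audio identifiers
    annotations: Dict[str, str] = field(default_factory=dict)


# Assumption: every module reads the content and writes its own annotation key.
Module = Callable[[Content], None]


def scene_annotation(content: Content) -> None:
    content.annotations["scene"] = f"caption for frame {content.frames[0]}"


def action_description(content: Content) -> None:
    content.annotations["action"] = f"action across {len(content.frames)} frame(s)"


def audio_tag(content: Content) -> None:
    content.annotations["audio"] = f"tags for {len(content.audio_clips)} clip(s)"


def run_pipeline(content: Content, modules: List[Module]) -> Content:
    """Apply each module in order; modules stay independent of one another."""
    for module in modules:
        module(content)
    return content
```

Running `run_pipeline` with `[scene_annotation, action_description, audio_tag]` fills three separate keys in `annotations`. Adding or removing a module means editing the list and nothing else, which is the property a broader content-enhancement pipeline would want.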

What this means for PlayStation accessibility and content tools

The most obvious application here is accessibility, even though the patent does not name it outright. Scene and action descriptions generated as the game plays are the kind of input that screen readers and assistive audio features need to make games playable for visually impaired users. Sony has been investing in accessibility tooling, and this kind of automatic visual narration could significantly lower the cost of building those features into games.

Beyond accessibility, auto-generated scene annotations have value for content moderation, streaming metadata, and AI training datasets. If your console can describe every frame of gameplay, that data becomes useful for search, for highlight generation, and potentially for feeding back into game AI systems. It's quiet infrastructure work, but the downstream applications are wide.

This is practical, well-motivated engineering rather than a moonshot. The dual-module approach, which separates static scene understanding from temporal action recognition, reflects how real computer vision systems are actually built, and the accessibility angle gives it a clear, near-term use case. That said, the filing is notably light on implementation detail (no Claim 1 available, and the abstract has garbled OCR artifacts), which makes it hard to assess how novel the specific architecture really is.

Editorial commentary on a publicly published patent application. Not legal advice.