Google Patents a System That Tracks Intent to Cut AI Hallucinations

In conversation summaries, AI hallucinations often happen because a model loses track of *who said what*. A Google patent filing tries to fix that by explicitly tagging each speaker's intent before generating a summary.

How Google's intent-tracking fights AI hallucinations

Imagine you're asking an AI assistant to summarize a long group chat, a customer support thread, or a back-and-forth email chain. The AI reads all of it and spits out a summary — but it mixes up who said what, or invents details that nobody actually stated. That's a hallucination, and it's a real problem.

Google's patent describes a system designed to tackle this. Instead of just feeding the whole conversation into a model and hoping for the best, the system first figures out what each participant is trying to say — their intent. It then uses a preferred or trusted source to anchor the final summary.

The idea is that by keeping tabs on the information flow — tracking which claims came from which speaker — the model has a much better chance of producing a summary that's actually grounded in what was said, not what it guessed.
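
To make "tracking which claims came from which speaker" concrete, here is a minimal Python sketch. It is not taken from the patent: the `Turn` record, the `unsupported_attributions` helper, and the naive substring match are all assumptions made for illustration. The point is only that once every turn carries its speaker, a misattributed claim in a draft summary can be flagged mechanically.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Turn:
    """One utterance plus the speaker it came from (hypothetical record)."""
    speaker: str
    text: str


def unsupported_attributions(turns, attributions):
    """Return the (speaker, claim) pairs that no turn by that speaker contains.

    Matching is a naive case-insensitive substring test, chosen only to keep
    the sketch short; a real system would need something far more robust.
    """
    said_by = {}
    for turn in turns:
        said_by.setdefault(turn.speaker, []).append(turn.text.lower())
    return [
        (speaker, claim)
        for speaker, claim in attributions
        if not any(claim.lower() in text for text in said_by.get(speaker, []))
    ]


turns = [
    Turn("Ana", "The refund was issued on Monday."),
    Turn("Ben", "I never received the refund."),
]
draft_summary = [
    ("Ana", "refund was issued on Monday"),
    ("Ben", "refund was issued on Monday"),  # attributed to the wrong speaker
]
flagged = unsupported_attributions(turns, draft_summary)
print(flagged)  # [('Ben', 'refund was issued on Monday')]
```

The second entry is flagged because Ben never said it, which is exactly the "who said what" mix-up described above.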

How the model assigns intent across conversation sources

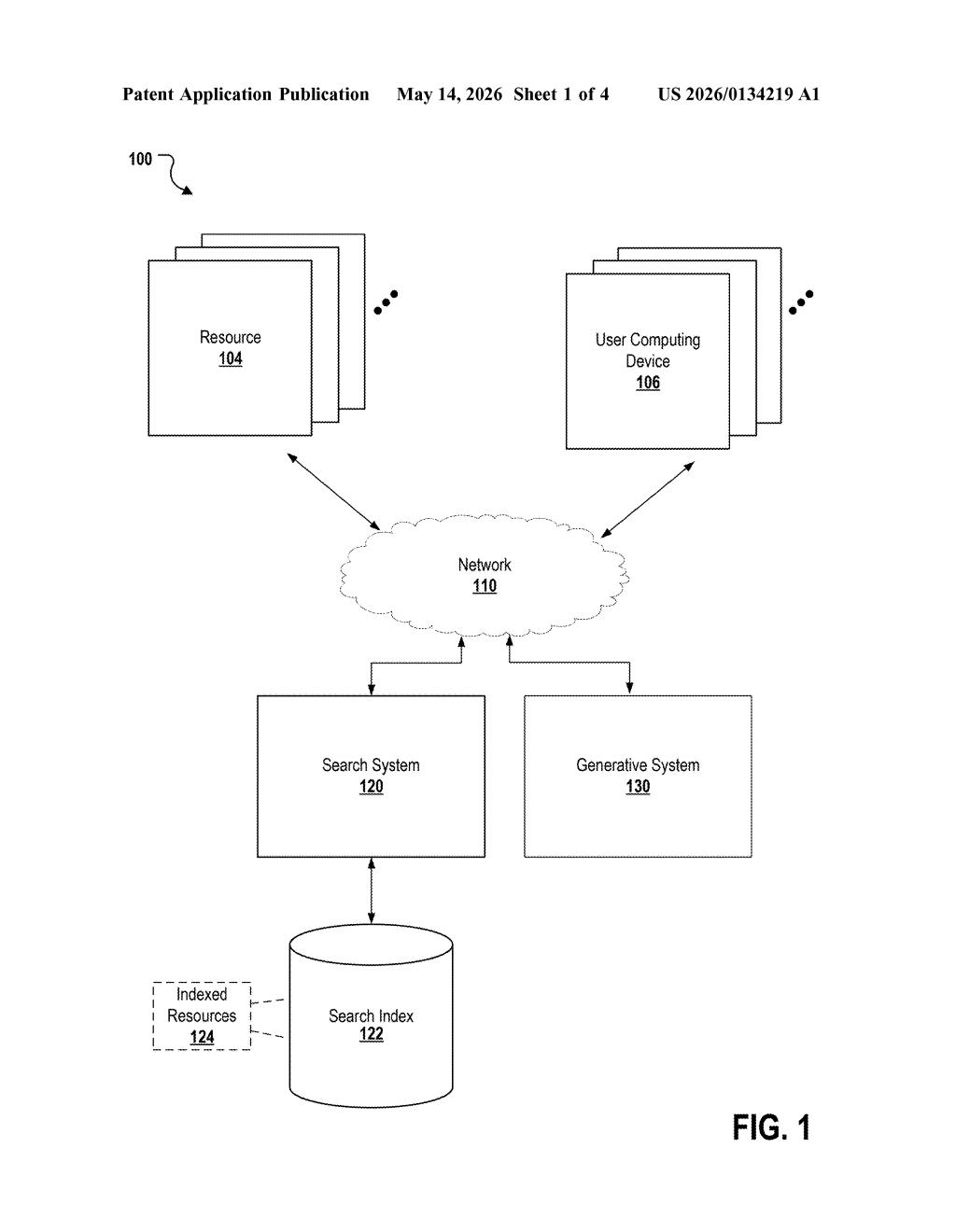

The patent describes a three-step pipeline built around a generative model (think a large language model like Gemini) combined with a search system.

- Step 1 — Ingest conversation data: The system receives a multi-party conversation through a user interface. This could be a chat log, a call transcript, or any dialogue involving multiple speakers.

- Step 2 — Intent association: The generative model processes the conversation and assigns an intent to each source (i.e., each participant). Intent here means something like the goal or claim that speaker is advancing — are they asking a question, asserting a fact, pushing back on something?

- Step 3 — Anchored summarization: A summary is generated based on a preferred source — a designated trusted or primary participant — combined with the intents mapped to all other participants. This anchoring is the key anti-hallucination mechanism.
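
The three steps above can be sketched in a few lines of Python. Treat this as a toy under stated assumptions, not the patented method: the patent does not say how intent is detected, so `classify_intent` is a hypothetical rule-based stand-in for the generative model, and `anchored_summary` shows just one possible reading of "anchoring" (quote the preferred source verbatim, describe everyone else only by intent label).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Turn:
    speaker: str
    text: str


def classify_intent(text):
    """Hypothetical stand-in for the generative model's intent step."""
    words = text.lower().split()
    first_word = words[0].strip(",.!") if words else ""
    if text.rstrip().endswith("?"):
        return "question"
    if first_word in {"no", "but", "actually"}:
        return "pushback"
    return "assertion"


def associate_intents(turns):
    """Step 2: map each speaker to the intents of their turns, in order."""
    intents = {}
    for turn in turns:
        intents.setdefault(turn.speaker, []).append(classify_intent(turn.text))
    return intents


def anchored_summary(turns, preferred):
    """Step 3: quote the preferred source; describe others only by intent."""
    intents = associate_intents(turns)
    anchor = " ".join(t.text for t in turns if t.speaker == preferred)
    others = [
        f"{speaker}: {', '.join(labels)}"
        for speaker, labels in intents.items()
        if speaker != preferred
    ]
    return f"{preferred} (preferred source): {anchor} | Others: {'; '.join(others)}"


conversation = [  # Step 1: the ingested multi-party conversation
    Turn("agent", "Your order shipped on May 2."),
    Turn("customer", "Where is my order?"),
    Turn("customer", "No, the tracking page says it has not shipped."),
]
summary = anchored_summary(conversation, preferred="agent")
print(summary)
# agent (preferred source): Your order shipped on May 2. | Others: customer: question, pushback
```

In this sketch only the preferred source's words reach the summary verbatim. The other participants are reduced to intent labels, so there is less free text for a model to garble or misattribute.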

The system architecture in the patent also references a search index, suggesting that summaries could be grounded in externally retrieved facts. This technique is known as retrieval-augmented generation (RAG): relevant documents are retrieved and supplied to the model as context, so its output is conditioned on real sources rather than purely on memorized training data.
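
For readers who have not seen retrieval-augmented generation up close, here is a rough Python sketch of the retrieval half. Everything in it is an assumption for illustration: the patent does not describe how its search index is consulted, the two documents are made up, and token-overlap scoring stands in for a real search system.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, index, k=1):
    """Rank documents by the number of tokens they share with the query."""
    query_tokens = tokenize(query)
    scored = sorted(
        index.items(),
        key=lambda item: len(query_tokens & tokenize(item[1])),
        reverse=True,
    )
    return [doc_id for doc_id, _ in scored[:k]]


def build_grounded_prompt(claim, index):
    """Prepend the best-matching document so generation is conditioned on it."""
    doc_id = retrieve(claim, index)[0]
    return (
        f"Context [{doc_id}]: {index[doc_id]}\n"
        f"Summarize only what the context supports: {claim}"
    )


search_index = {  # hypothetical documents, not from the patent
    "shipping-policy": "Orders ship within two business days of payment.",
    "refund-policy": "Refunds are issued within five days of a return.",
}
top = retrieve("When are refunds issued after a return?", search_index)
print(top)  # ['refund-policy']
```

The prompt built by `build_grounded_prompt` would then go to the generative model, which is the step that makes the summary "grounded" rather than recalled from memory.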

What this means for Google's AI summaries and Search

Hallucination is one of the biggest practical blockers to deploying AI summaries in high-stakes settings — customer service, legal review, medical consultations. A system that explicitly maps intent per speaker before summarizing is a more principled approach than simply prompting a model to "be accurate."

For Google specifically, this could plausibly feed into features like AI Overviews in Search and Gemini's conversation summarization. If Google can reduce hallucinations in multi-party contexts, it strengthens the case for deploying these summaries where mistakes carry a real cost, such as a support ticket resolution or a meeting recap that people act on.

This is a problem worth solving, and the intent-tracking framing is a sensible architectural choice because it forces the model to do structured reasoning before generating free text. That said, the patent is fairly abstract: it doesn't specify how intent is detected, how a "preferred source" is chosen, or how the search index is actually consulted. The hard engineering work isn't described here. Still, it signals that Google is thinking seriously about hallucination reduction at the conversation level, not just the sentence level.

Editorial commentary on a publicly published patent application. Not legal advice.