Amazon Patents a System That Gives LLMs a Formal Logic Brain

Large language models are famously bad at following strict logical rules — they hallucinate, they drift, they forget constraints halfway through. Amazon thinks it has a fix: make the LLM work alongside a formal math solver instead of going it alone.

What Amazon's LLM logic solver actually does

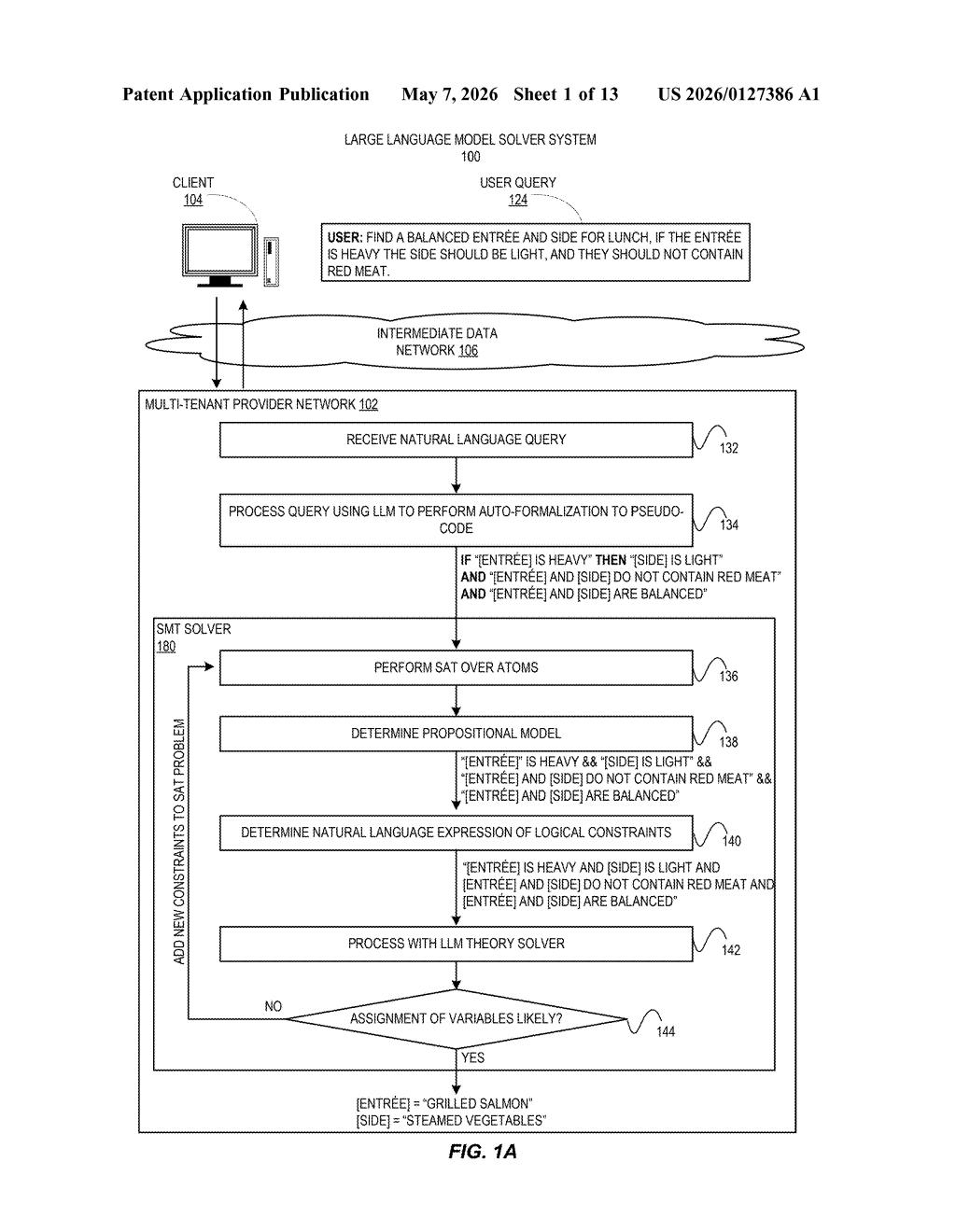

Imagine you ask an AI assistant to help you plan a lunch menu with specific rules: no red meat, the entrée and side have to be balanced, and if the entrée is heavy, the side must be light. A regular chatbot might give you a perfectly confident answer that violates one of those rules without even noticing. Amazon's patent tackles this exact problem.

The idea is to split the job in two. First, the LLM reads your question and translates your rules into a kind of structured code — think of it like converting your fuzzy English instructions into a precise checklist. Then a separate logic engine (the kind used in software verification and math proofs) checks whether any answer could even possibly satisfy all your rules at once.

Once the logic engine confirms a solution exists, it hands the verified constraint list back to the LLM, which then figures out the actual answer — like "grilled salmon and steamed vegetables." The LLM handles the language; the logic solver handles the rules. Neither has to do the other's job.

How the SAT solver and LLM divide the work

The patent describes a hybrid architecture called an LLM-enhanced SMT solver. SMT stands for Satisfiability Modulo Theories — a class of automated reasoning tools widely used in formal software verification to check whether a set of logical constraints can all be true at the same time.

Here's how the pipeline works:

- The LLM receives a natural language query and performs auto-formalization — converting the user's free-text constraints into pseudo-code logical atoms (e.g., "IF [ENTRÉE] IS HEAVY THEN [SIDE] IS LIGHT").

- A SAT solver (Boolean satisfiability solver — the engine that checks logical consistency) processes those atoms to determine a propositional model, confirming whether a valid solution space exists at all.

- The verified logical constraints are translated back into natural language and fed to the LLM as a structured prompt.

- The LLM then acts as a theory solver within the SMT framework — assigning concrete real-world values to the abstract variables (e.g., [ENTRÉE] = "Grilled Salmon").

The key insight is that the LLM never has to enforce logical consistency on its own — that burden is offloaded to the SAT/SMT layer, which is mathematically rigorous by design. The LLM only does what it's actually good at: understanding language and generating plausible, contextually appropriate answers.

Why this could fix AI's constraint-following problem

Anyone who has used an AI assistant for anything involving real rules — scheduling, dietary restrictions, budget constraints, legal conditions — knows that LLMs routinely ignore or misapply constraints when the query gets complex. This architecture directly addresses that failure mode by treating constraint-satisfaction as a separate, verifiable step rather than hoping the model gets it right implicitly.

For Amazon, the commercial angle is obvious: AWS customers building AI agents for business logic (think procurement, compliance checking, or recommendation engines) need outputs they can trust to follow the rules. A system that formally verifies constraint satisfaction before generating an answer is a much easier sell to enterprise buyers than a raw LLM that might hallucinate its way past your guardrails.

This is genuinely interesting engineering, not just an incremental LLM tweak. Neurosymbolic AI — combining neural networks with formal logic — has been a research goal for years, and Amazon is staking a concrete patent claim on a practical, productizable version of it. If this ships inside something like Bedrock or Q, it could meaningfully raise the reliability bar for agentic AI workflows.

Get one Big Tech patent every Sunday

Plain English, intelligent commentary, no hype. Free.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.