Amazon Patents an LLM-Powered System That Reads Emails to Catch Cyberattacks

Amazon is patenting a security system that doesn't just look at network logs — it reads emails and conversation transcripts to figure out whether a detected anomaly is an actual attack. That's a meaningful shift in how automated threat detection could work.

How Amazon's AI security system reads your emails for threats

Imagine your company's security system flags a suspicious login at 2 a.m. Right now, most systems stop there — they see the weird login, maybe raise an alert, and wait for a human to dig in. Amazon's new patent describes something more ambitious: a system that also reads through related emails and chat transcripts to understand the context behind that alert.

The idea is that language clues can tip the balance either way. An email saying 'I'll be traveling internationally this week' could suggest the login is legitimate, while a suspicious phishing-style thread might suggest it's an actual intrusion. The system uses an AI language model (think a purpose-built version of a tool like ChatGPT) to process that text and combine it with the original alert.

If the combined picture looks bad enough, the system can act automatically: blocking a device, cutting off a suspicious IP address, or escalating a ticket to a human security analyst. It's essentially giving your security software the ability to read between the lines.

How Bayesian inference and a generative model combine signals

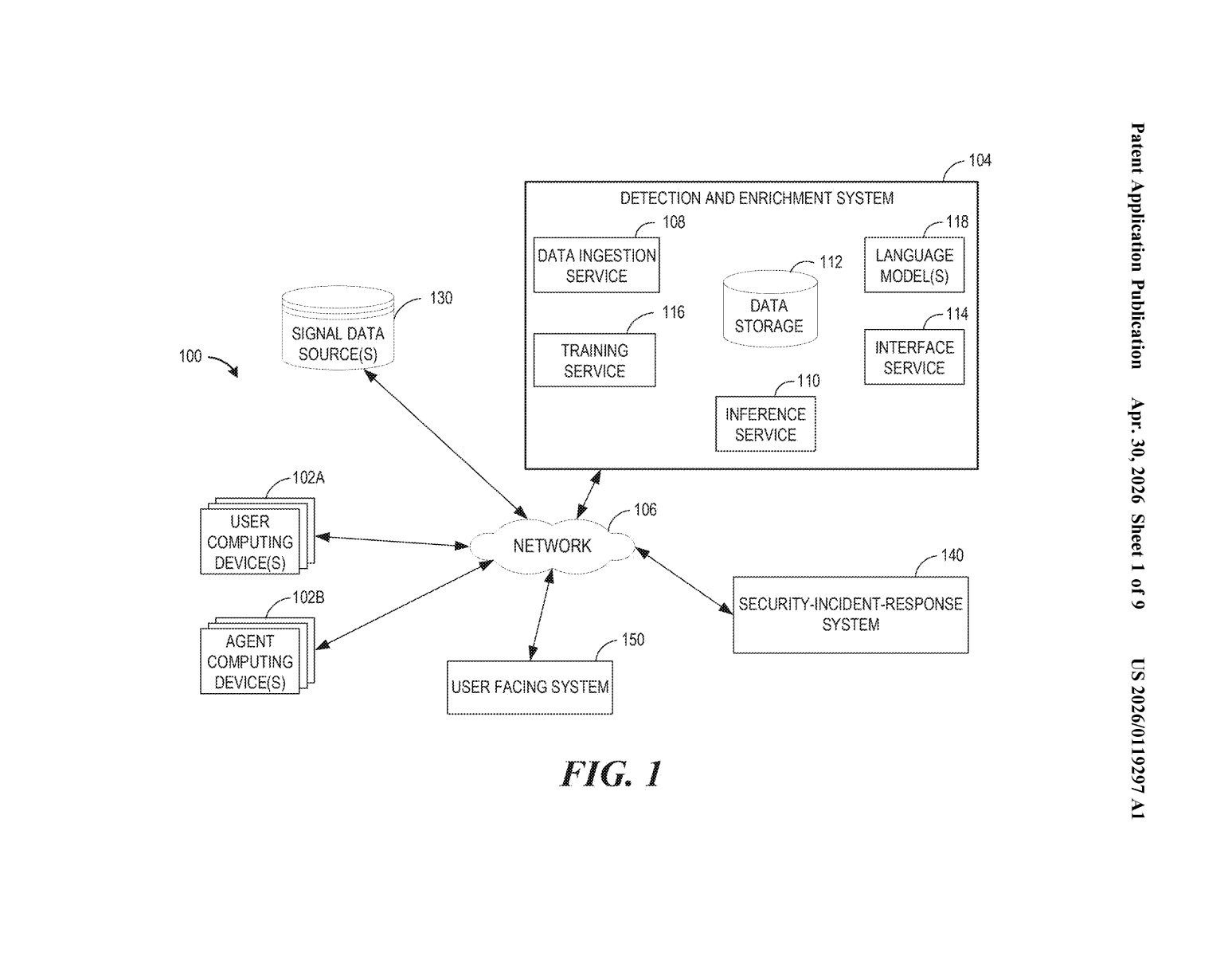

The system works in two stages: detection and enrichment.

First, it monitors signal data — network logs, access events, behavioral patterns — to identify a detection event (something that looks anomalous, like an unusual file access or a login from an unexpected location). That part is standard security tooling. What's new is the second stage.
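To make the first stage concrete, here is a minimal sketch in Python that flags a login from a country the user has not been seen in before. It is only an illustration: `LoginSignal`, `DetectionEvent`, `detect`, and the per-user country history are hypothetical names invented for this example, not anything taken from the patent.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class LoginSignal:
    """One login record from the signal data (hypothetical shape)."""
    user: str
    country: str
    hour_utc: int  # 0-23


@dataclass(frozen=True)
class DetectionEvent:
    """An anomaly worth a second look (hypothetical shape)."""
    user: str
    reason: str


def detect(signal: LoginSignal,
           usual_countries: dict[str, set[str]]) -> DetectionEvent | None:
    """Return a DetectionEvent if the login country is new for this user."""
    known = usual_countries.get(signal.user, set())
    if signal.country not in known:
        return DetectionEvent(
            signal.user, f"login from unexpected country {signal.country}"
        )
    return None


# Assumed history: alice has only ever logged in from the US.
history = {"alice": {"US"}}
event = detect(LoginSignal("alice", "BR", 2), history)      # flagged
no_event = detect(LoginSignal("alice", "US", 14), history)  # not flagged
```

A real detector would weigh many more signals than country alone; the point is only that stage one ends with a flagged event, not a verdict.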

Once a detection event is flagged, the system pulls communication text data associated with that event — emails, meeting transcripts, support tickets, whatever's linked to the relevant user or device. A generative model (a large language model fine-tuned on labeled examples of real attacks) processes that text and produces a result: essentially a judgment about whether the text suggests malicious intent or a benign explanation.
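The enrichment step can be sketched roughly as follows, assuming messages are linked to a detection event by user and by a time window around the alert. Everything here is hypothetical: the `Message` type, the seven-day window, and especially `toy_model`, which is a keyword rule standing in for the fine-tuned language model purely so the example runs.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Callable, List


@dataclass(frozen=True)
class Message:
    """An email or transcript snippet (hypothetical shape)."""
    user: str
    sent_at: datetime
    text: str


def related_text(messages: List[Message], user: str, event_time: datetime,
                 window: timedelta = timedelta(days=7)) -> List[str]:
    """Collect text from the same user within `window` of the event."""
    return [m.text for m in messages
            if m.user == user and abs(m.sent_at - event_time) <= window]


def enrich(texts: List[str], model: Callable[[str], float]) -> float:
    """Return the model's score in [0, 1] that the text suggests an attack.

    With no related text there is no evidence, so return a neutral 0.5.
    """
    if not texts:
        return 0.5
    return model("\n".join(texts))


def toy_model(text: str) -> float:
    """Stand-in for the language model: a keyword rule, for demo only."""
    lowered = text.lower()
    if "traveling" in lowered:
        return 0.1  # benign explanation
    if "verify your password" in lowered:
        return 0.9  # phishing-style wording
    return 0.5


event_time = datetime(2024, 1, 10, 2, 0)  # the 2 a.m. login
inbox = [
    Message("alice", datetime(2024, 1, 8, 9, 0),
            "I'll be traveling internationally this week"),
    Message("bob", datetime(2024, 1, 9, 9, 0),
            "Please verify your password here"),
]
score = enrich(related_text(inbox, "alice", event_time), toy_model)
```

Here only alice's travel email is linked to her alert, so the stand-in model returns a low score.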

Then comes Bayesian inference (a statistical method for updating the probability of a hypothesis as new evidence comes in). The system calculates the joint likelihood that the original alert and the language model's output together indicate a real attack — not just noise. If that probability crosses a threshold, the system can:

- Block the device associated with the activity from accessing network services
- Block all traffic from the associated IP address
- Escalate a ticket inside a security-incident-response platform
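One way to picture the Bayesian step is the sketch below. It applies Bayes' rule under a naive assumption that the alert and the text evidence are conditionally independent given whether an attack is happening. That independence assumption, the example probabilities, the two-tier thresholds, and the function names are all illustrative choices, not details from the patent.

```python
def posterior_attack(prior: float,
                     p_alert_given_attack: float,
                     p_alert_given_benign: float,
                     p_text_given_attack: float,
                     p_text_given_benign: float) -> float:
    """P(attack | alert, text) via Bayes' rule.

    Assumes the alert and the text are conditionally independent
    given attack / benign (a simplifying assumption).
    """
    attack = prior * p_alert_given_attack * p_text_given_attack
    benign = (1.0 - prior) * p_alert_given_benign * p_text_given_benign
    total = attack + benign
    if total == 0.0:
        raise ValueError("evidence has zero probability under both hypotheses")
    return attack / total


def choose_action(p_attack: float,
                  block_threshold: float = 0.9,
                  escalate_threshold: float = 0.5) -> str:
    """Map a posterior to a response; both thresholds are made-up values."""
    if p_attack >= block_threshold:
        return "block_device"
    if p_attack >= escalate_threshold:
        return "escalate_ticket"
    return "no_action"


# Assumed numbers: 1% of such alerts are real attacks a priori.
# Phishing-style thread: far more likely to appear during an attack.
p_phish = posterior_attack(0.01, 0.8, 0.05, 0.7, 0.02)
# Travel email: far more likely to appear when the login is benign.
p_travel = posterior_attack(0.01, 0.8, 0.05, 0.05, 0.6)

action_phish = choose_action(p_phish)
action_travel = choose_action(p_travel)
```

With these made-up inputs, the same 2 a.m. login lands at roughly an 85% attack probability when paired with the phishing thread and roughly 1% when paired with the travel email, which is the whole point of adding the text evidence.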

The language model itself is trained on labeled datasets — emails and transcripts that have been tagged as examples of specific attack types — so it can generalize to new, unseen phishing attempts or social engineering patterns.

What this means for AWS security and enterprise threat response

For AWS and Amazon's security portfolio (which includes services like Amazon GuardDuty and AWS Security Hub), this patent points toward threat detection that is smarter about context. Current automated systems are notoriously noisy: they generate more alerts than security teams can realistically investigate, so many go unexamined. If a language model can filter out false positives by cross-referencing human communication, that would be a real reduction in alert fatigue.

For enterprise customers, this could mean fewer 'cry wolf' notifications and faster automated responses to genuine intrusions — without waiting for a human analyst to read through a thread and make the call. The flip side is that it requires the system to have access to employee communications, which raises data privacy questions that the patent doesn't address.

This is a genuinely clever application of LLMs to a real problem in enterprise security — alert fatigue is one of the most documented and stubborn issues in the industry. Amazon is essentially proposing to give its security tooling the ability to read the room, which is a logical next step for AI-assisted threat detection. Whether the privacy trade-offs are acceptable to enterprise customers is a separate question, but the technical approach is worth taking seriously.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.