Apple Patents a System That Adjusts Avatar Quality Based on Where You're Looking

Apple has described a video-calling system smart enough to know when you're about to look at someone, and to quietly upgrade their avatar quality before your gaze gets there.

What Apple's gaze-aware avatar streaming actually does

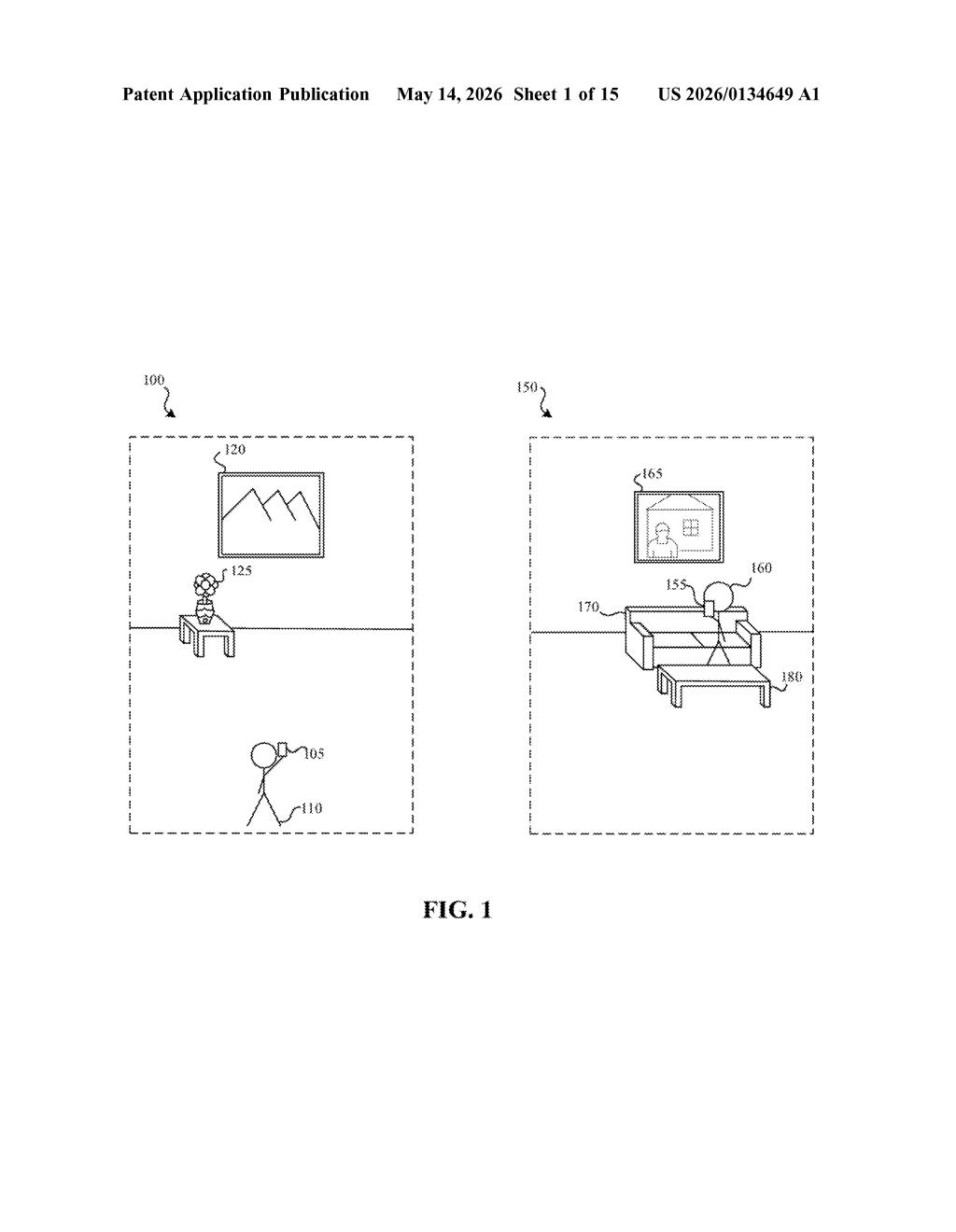

Imagine you're on a video call in a virtual space and two avatars are in front of you, but you're only paying attention to one of them. It would be wasteful to stream the highest-quality video for both. Apple's patent tackles exactly this problem.

Apple's idea is to track where you're looking, or even predict where you're about to look, and use that to decide how much bandwidth and processing power to spend on each avatar. If someone is on the edge of your view and you're not glancing their way, their avatar can run at lower resolution or framerate. The moment signals suggest you're about to look at them, the system fetches a higher-quality stream in advance.

This is less about making calls look better all the time, and more about making sure your device isn't burning resources rendering sharp details you'll never actually see. Think of it as smart bandwidth budgeting, guided by your eyeballs.
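To put rough numbers on the budgeting idea, here is a small Python sketch that splits a fixed bandwidth budget across avatars in proportion to how much attention each one is getting. The patent does not specify any such formula; the function name `split_budget`, the attention weights, and the 6000 kbps figure are all made up for illustration.

```python
def split_budget(total_kbps, attention_weights):
    """Divide a bandwidth budget across avatars in proportion to attention.

    attention_weights maps an avatar name to a non-negative weight
    (hypothetical: a higher weight means the user is more likely looking at it).
    """
    total_weight = sum(attention_weights.values())
    if total_weight <= 0:
        raise ValueError("at least one avatar needs a positive weight")
    return {
        name: total_kbps * weight / total_weight
        for name, weight in attention_weights.items()
    }


# Two avatars: one being watched, one sitting in the periphery.
shares = split_budget(6000, {"watched": 3, "peripheral": 1})
print(shares)
```

With these assumed weights, the watched avatar gets three quarters of the budget and the peripheral one gets the rest, instead of an even split.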

How Apple predicts gaze direction to pre-fetch avatar data

The patent describes a method where a first device (say, an AR headset or phone) is in a live communication session with a second device. It's already receiving streamed avatar data — think of this as the video representation of the other person.

The key step is identifying an "indicium" (a signal or cue) that predicts whether the local user's gaze will be directed toward a given avatar. This could be:

- Eye-tracking data showing the user's current foveal region (the small, sharp-focus zone of your vision)

- Head orientation or body pose as a proxy for attention

- Whether the avatar is inside or outside the user's field of view entirely

Based on that prediction, the device requests a different tier of avatar data from the remote server — higher framerate, higher resolution, or more detailed geometry — before the user's gaze actually arrives. If the user isn't looking and isn't likely to, the system sticks with a leaner data stream.
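To make the tiering concrete, here is a minimal Python sketch of how a client might map those cues to a stream tier. The patent does not publish code, so treat this as one possible reading: the tier names, the `Indicia` fields, and the 5-degree foveal threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    LOW = 0     # reduced resolution and framerate
    MEDIUM = 1  # visible but not the focus
    HIGH = 2    # full resolution, framerate, and geometry


@dataclass
class Indicia:
    in_field_of_view: bool    # is the avatar inside the user's view at all?
    degrees_from_gaze: float  # angle between the gaze direction and the avatar
    predicted_to_look: bool   # does some predictor expect a glance soon?


# Hypothetical threshold for "inside the foveal region".
FOVEAL_DEGREES = 5.0


def choose_tier(ind: Indicia) -> Tier:
    """Pick which tier of avatar data to request for one avatar."""
    if ind.in_field_of_view and ind.degrees_from_gaze <= FOVEAL_DEGREES:
        return Tier.HIGH    # the user is looking at the avatar right now
    if ind.predicted_to_look:
        return Tier.HIGH    # upgrade before the gaze arrives
    if ind.in_field_of_view:
        return Tier.MEDIUM  # visible, but only peripherally
    return Tier.LOW         # out of view and not expected to be looked at


print(choose_tier(Indicia(True, 40.0, False)).name)
```

The second branch is the one that matters for this patent: an avatar nobody is looking at yet can still be bumped to the top tier if a glance is predicted.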

The patent specifically calls out the distinction between current attentive state and future attentive state, which is the more technically interesting piece: the system tries to pre-fetch quality upgrades proactively, not reactively, reducing the perceptible lag when you do glance over.
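One simple way to picture a "future attentive state" is to extrapolate recent gaze movement a fraction of a second ahead and check whether it lands on an avatar. The Python sketch below does that with horizontal gaze angle only. The patent does not say which predictor Apple would use, so linear extrapolation, the function names, and every number here are illustrative assumptions.

```python
def predict_yaw(prev_yaw, curr_yaw, dt, lookahead):
    """Linearly extrapolate gaze yaw (degrees) `lookahead` seconds ahead.

    prev_yaw and curr_yaw are two samples taken `dt` seconds apart.
    """
    velocity = (curr_yaw - prev_yaw) / dt  # degrees per second
    return curr_yaw + velocity * lookahead


def should_prefetch(prev_yaw, curr_yaw, avatar_yaw,
                    dt=0.1, lookahead=0.3, tolerance=5.0):
    """True when gaze is not on the avatar yet but is predicted to reach it."""
    looking_now = abs(curr_yaw - avatar_yaw) <= tolerance
    future_yaw = predict_yaw(prev_yaw, curr_yaw, dt, lookahead)
    looking_soon = abs(future_yaw - avatar_yaw) <= tolerance
    return looking_soon and not looking_now


# Gaze sweeping right at about 100 degrees/second toward an avatar at 40 degrees.
print(should_prefetch(prev_yaw=0.0, curr_yaw=10.0, avatar_yaw=40.0))
# Gaze holding still at 10 degrees: no reason to upgrade the avatar at 40.
print(should_prefetch(prev_yaw=10.0, curr_yaw=10.0, avatar_yaw=40.0))
```

The `lookahead` window is where the latency hiding would come from in this sketch: it has to be at least as long as the time needed to request and receive the better stream, or the upgrade still arrives late.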

What this means for Apple Vision Pro and spatial calling

This patent is clearly aimed at Apple Vision Pro and whatever spatial computing hardware follows it. Rendering multiple photorealistic avatars in real time is expensive — both in compute and network bandwidth — and the Vision Pro's Persona feature already generates detailed face reconstructions during FaceTime calls. Intelligently throttling quality based on gaze is a logical next step for a device that already does eye-tracking.

For you as a user, the payoff would be longer battery life and smoother calls with more participants, without any visible quality drop. For Apple as a platform, it's about making spatial multi-person calls viable without requiring a fiber connection and a wall of compute. The efficiency gains could also benefit lower-end devices trying to participate in the same session.

This is a genuinely clever systems patent, not just an incremental tweak. Predicting gaze direction to pre-fetch quality upgrades — rather than reacting after the fact — is the kind of latency-hiding trick that separates smooth experiences from janky ones in XR. It's squarely aimed at making Vision Pro's Persona calls scale to group settings, and it's the sort of optimization that only makes sense if Apple is serious about spatial video calling as a long-term product bet.

Editorial commentary on a publicly published patent application. Not legal advice.