OpenAI Seeks to Patent a Schema-Based System for Connecting External APIs to AI Chatbots

OpenAI has filed a patent application describing a structured, manifest-driven approach for connecting third-party APIs to a natural language model. In effect, it is a formalized blueprint for how ChatGPT-style plugins are registered, understood, and used by an AI system.

How OpenAI's API plugin registration system works

Imagine you want to let ChatGPT book a restaurant for you through OpenTable, or check your flight status through an airline's app. For that to work, ChatGPT needs to understand what that external service can do, what data it needs, and how to talk to it — without a human writing custom glue code every time.

That's what this patent describes. A developer (or company) that wants its service to work with an AI assistant publishes a small manifest file, a kind of digital business card, at a known web address. The AI system reads that file to learn where the full technical documentation lives, then fetches that too. From those files, along with server definition code the publisher supplies, it auto-generates documentation and code examples so the AI knows how to call the API correctly.
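The two-step lookup can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the patent's implementation: the well-known path `/.well-known/ai-plugin.json` and the `api.url` field are borrowed from the JSON manifest format ChatGPT Plugins used, while `discover` and the in-memory `FAKE_WEB` are hypothetical. The fetcher is passed in so the example runs without network access; in practice it could wrap `urllib.request.urlopen`.

```python
import json
from typing import Callable
from urllib.parse import urljoin

# Assumption: the manifest lives at a fixed, well-known path on the publisher's
# domain. This is the path ChatGPT Plugins used; the patent may differ.
MANIFEST_PATH = "/.well-known/ai-plugin.json"

# A fetcher takes a URL and returns the response body as text.
Fetcher = Callable[[str], str]


def discover(base_url: str, fetch: Fetcher) -> tuple[dict, dict]:
    """Hypothetical two-step discovery: read the manifest, then follow its spec pointer."""
    # Step 1: fetch the small manifest ("business card") from the known address.
    manifest_url = urljoin(base_url, MANIFEST_PATH)
    manifest = json.loads(fetch(manifest_url))

    # Step 2: the manifest points at the full specification. Resolve a relative
    # URL against the manifest's own location, then fetch the spec.
    spec_url = urljoin(manifest_url, manifest["api"]["url"])
    spec = json.loads(fetch(spec_url))
    return manifest, spec


# Usage with an in-memory stand-in for the web (hypothetical service).
FAKE_WEB = {
    "https://example.com/.well-known/ai-plugin.json": json.dumps(
        {"name_for_model": "todo", "api": {"type": "openapi", "url": "/openapi.json"}}
    ),
    "https://example.com/openapi.json": json.dumps({"openapi": "3.0.1", "paths": {}}),
}

manifest, spec = discover("https://example.com", FAKE_WEB.__getitem__)
print(manifest["name_for_model"], spec["openapi"])  # todo 3.0.1
```

Injecting the fetcher keeps the discovery logic separate from transport concerns such as timeouts, caching, or authentication.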

You've probably seen something like this already: it's the architecture behind ChatGPT Plugins, which launched in 2023. This patent appears to formalize and protect that registration-and-discovery mechanism.

How the manifest file drives ChatGPT plugin integration

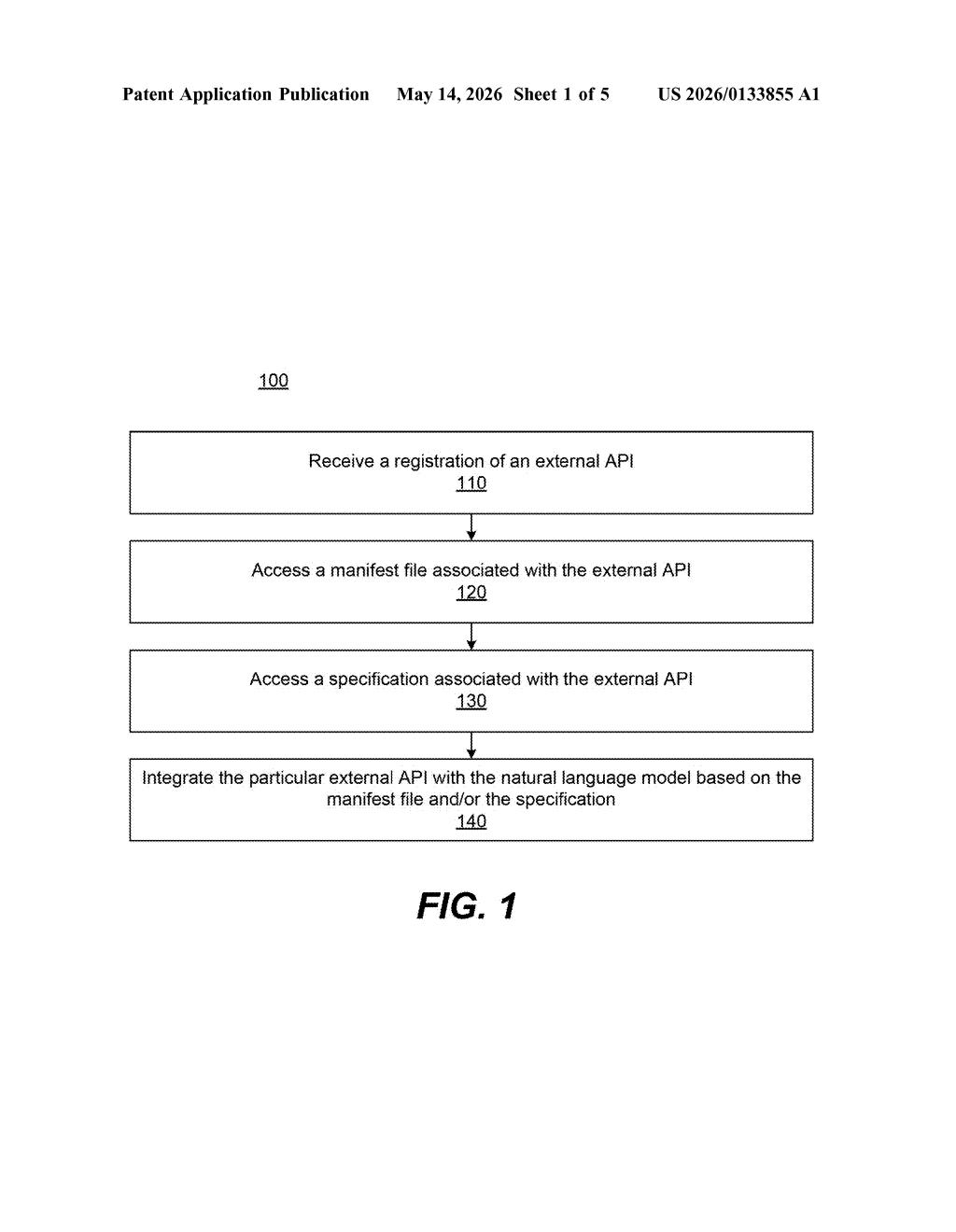

At its core, this patent describes a two-step discovery protocol for wiring external APIs into a natural language model system.

Here's how the flow works:

- A developer registers their API by submitting the URL of a manifest file: a small, structured document (think JSON or YAML) hosted on their own server.

- That manifest contains key parameters for talking to the API and a pointer to a fuller specification file (like an OpenAPI/Swagger spec) hosted at a second URL.

- The AI system fetches both files, then ingests server definition code provided by the publisher.

- Using all of that, the system auto-generates documentation and code examples — essentially teaching itself how to use the new API.
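The generation step can be illustrated with a toy OpenAPI-style spec. The sketch below walks each operation and emits a one-line signature plus an example HTTP call. The spec contents, the `generate_docs` name, and the output format are all hypothetical; the patent's actual documentation and code-example format is not described here.

```python
# A toy OpenAPI-style spec for a hypothetical to-do service.
SPEC = {
    "openapi": "3.0.1",
    "servers": [{"url": "https://api.example.com"}],
    "paths": {
        "/todos": {
            "get": {
                "operationId": "listTodos",
                "summary": "List the user's to-do items",
                "parameters": [
                    {"name": "limit", "in": "query", "required": False,
                     "schema": {"type": "integer"}},
                ],
            },
        },
    },
}

HTTP_METHODS = {"get", "put", "post", "delete", "patch"}


def generate_docs(spec: dict) -> list[str]:
    """Hypothetical generator: one doc entry and one example call per operation."""
    base_url = spec["servers"][0]["url"]
    entries = []
    for path, path_item in spec.get("paths", {}).items():
        for method, op in path_item.items():
            if method not in HTTP_METHODS:
                continue  # skip non-operation keys such as "parameters"
            params = op.get("parameters", [])

            # Signature line: optional parameters are marked with "?".
            parts = []
            for p in params:
                ptype = p.get("schema", {}).get("type", "any")
                optional = "" if p.get("required") else "?"
                parts.append(f"{p['name']}: {ptype}{optional}")
            signature = ", ".join(parts)

            # Example call: query parameters get <placeholder> values.
            query = "&".join(
                f"{p['name']}=<{p['name']}>" for p in params if p["in"] == "query"
            )
            example = f"{method.upper()} {base_url}{path}" + (f"?{query}" if query else "")
            entries.append(
                f"{op['operationId']}({signature}): {op.get('summary', '')}\n"
                f"  example: {example}"
            )
    return entries


for entry in generate_docs(SPEC):
    print(entry)
```

For the toy spec this prints a `listTodos(limit: integer?)` entry followed by the example line `GET https://api.example.com/todos?limit=<limit>`.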

The generated documentation and examples then get folded into the natural language model's integration layer, so the model can correctly invoke that API when a user's request calls for it. The key insight is that the publisher defines everything — the AI system is a consumer, not a co-author, of that API's contract.
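The article does not spell out how the generated material is "folded in." One plausible reading, and it is only an assumption, is a registry that renders each registered API's documentation into text the model reads before it answers. The `Integration` and `IntegrationLayer` classes below are hypothetical. The layer stores the publisher's material without editing it, which mirrors the consumer-not-co-author point.

```python
from dataclasses import dataclass, field


@dataclass
class Integration:
    """One registered API: a model-facing name, a description, and generated docs."""
    name: str
    description: str
    docs: list[str] = field(default_factory=list)


@dataclass
class IntegrationLayer:
    """Hypothetical registry that folds generated docs into the model's context."""
    integrations: dict[str, Integration] = field(default_factory=dict)

    def register(self, integration: Integration) -> None:
        # Store the publisher-derived docs as-is: the system consumes the
        # contract rather than rewriting it.
        self.integrations[integration.name] = integration

    def context_block(self) -> str:
        """Render every registered API as text the model can read before answering."""
        lines = ["Available APIs:"]
        for integ in self.integrations.values():
            lines.append(f"[{integ.name}] {integ.description}")
            lines.extend(f"  - {doc}" for doc in integ.docs)
        return "\n".join(lines)


layer = IntegrationLayer()
layer.register(Integration(
    name="todo",
    description="Manage the user's to-do list.",
    docs=["listTodos(limit: integer?): List the user's to-do items"],
))
print(layer.context_block())
```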

This is architecturally similar to how OpenAPI specs are used in traditional software development to generate documentation and client code, but applied to a model that must understand a user's intent and map it to structured API calls at runtime.
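At runtime, mapping intent to a structured call typically means the model emits an operation name plus arguments, and the surrounding system checks that call against the published contract before anything is sent. The sketch below assumes that shape. The `OPERATIONS` table, the call format, and `build_request` are hypothetical, and the function only builds a request description instead of performing network I/O.

```python
from urllib.parse import urlencode

# Hypothetical operation table, as it might be derived from a publisher's spec.
OPERATIONS = {
    "listTodos": {"method": "GET", "url": "https://api.example.com/todos",
                  "query": {"limit"}, "body": set(), "required": set()},
    "addTodo": {"method": "POST", "url": "https://api.example.com/todos",
                "query": set(), "body": {"text"}, "required": {"text"}},
}


def build_request(call: dict) -> dict:
    """Validate a model-emitted call against the contract and describe the HTTP request."""
    op = OPERATIONS.get(call.get("operation"))
    if op is None:
        raise ValueError(f"unknown operation: {call.get('operation')!r}")

    args = call.get("arguments", {})
    names = set(args)
    missing = op["required"] - names
    unexpected = names - (op["query"] | op["body"])
    if missing or unexpected:
        # Reject rather than guess: the publisher's spec is the source of truth.
        raise ValueError(f"missing={sorted(missing)}, unexpected={sorted(unexpected)}")

    url = op["url"]
    query = {k: v for k, v in args.items() if k in op["query"]}
    if query:
        url += "?" + urlencode(query)
    body = {k: v for k, v in args.items() if k in op["body"]}
    return {"method": op["method"], "url": url, "json": body or None}


print(build_request({"operation": "listTodos", "arguments": {"limit": 5}}))
# {'method': 'GET', 'url': 'https://api.example.com/todos?limit=5', 'json': None}
```

Rejecting unknown or incomplete calls, instead of repairing them, keeps the model from inventing parameters the publisher never defined.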

What this means for developers building on top of ChatGPT

For developers, this formalizes a workflow that was previously ad hoc: instead of OpenAI hand-coding every integration, any publisher can make their service AI-callable by hosting two files at known URLs. That's a meaningful shift toward a self-service plugin ecosystem, and patenting the mechanism gives OpenAI a potential claim on the registration-and-discovery pattern itself.

For users, it's the infrastructure behind features like ChatGPT Plugins and, more broadly, tool use in AI agents. Whenever an AI assistant browses the web, checks your calendar, or queries a database on your behalf, some comparable step usually sits underneath: the system has to know what that external tool does and how to call it. Owning that pattern, at least in patent terms, could matter a lot as agentic AI systems become mainstream.

This is a backend infrastructure patent, not a flashy AI capability, but that's exactly what makes it strategically significant. ChatGPT Plugins have already shipped, and this filing looks like OpenAI locking down the architectural IP behind the plugin registration system before competitors build similar ecosystems. It's quiet, careful IP work, and probably one of the more consequential filings in OpenAI's growing patent portfolio.


Editorial commentary on a publicly published patent application. Not legal advice.