Microsoft Patents a GPT Model That Remembers When — Not Just What — You Did

Most AI models trained on user behavior know what happened — but not how long it took. Microsoft's new patent tackles that gap by baking elapsed time directly into a GPT-style model's training data.

What Microsoft's time-aware activity model actually does

Imagine you log into your bank, pause for a couple of minutes, transfer money, and log out. Now imagine someone else logs in, immediately transfers the maximum amount, and logs out in under a minute. The sequence of actions looks similar, but the timing is completely different, and that difference is a red flag.

That's the problem Microsoft is trying to solve here. Standard AI models trained on sequences of events (clicks, logins, transactions) typically treat those events like words in a sentence — they know the order, but they don't know how much time passed between each one. Microsoft's patent describes a way to fix that by inserting special "time-preserving" markers into the training data that tell the model both the order and the elapsed time between each action.

The result is a single foundational model — think of it like a GPT trained on behavior instead of text — that can then be adapted for specific tasks: detecting phishing, spotting abusive accounts, or predicting what a user is likely to do next. The timing context is baked in from the start, not bolted on afterward.

How time-preserving encodings slot into the GPT training loop

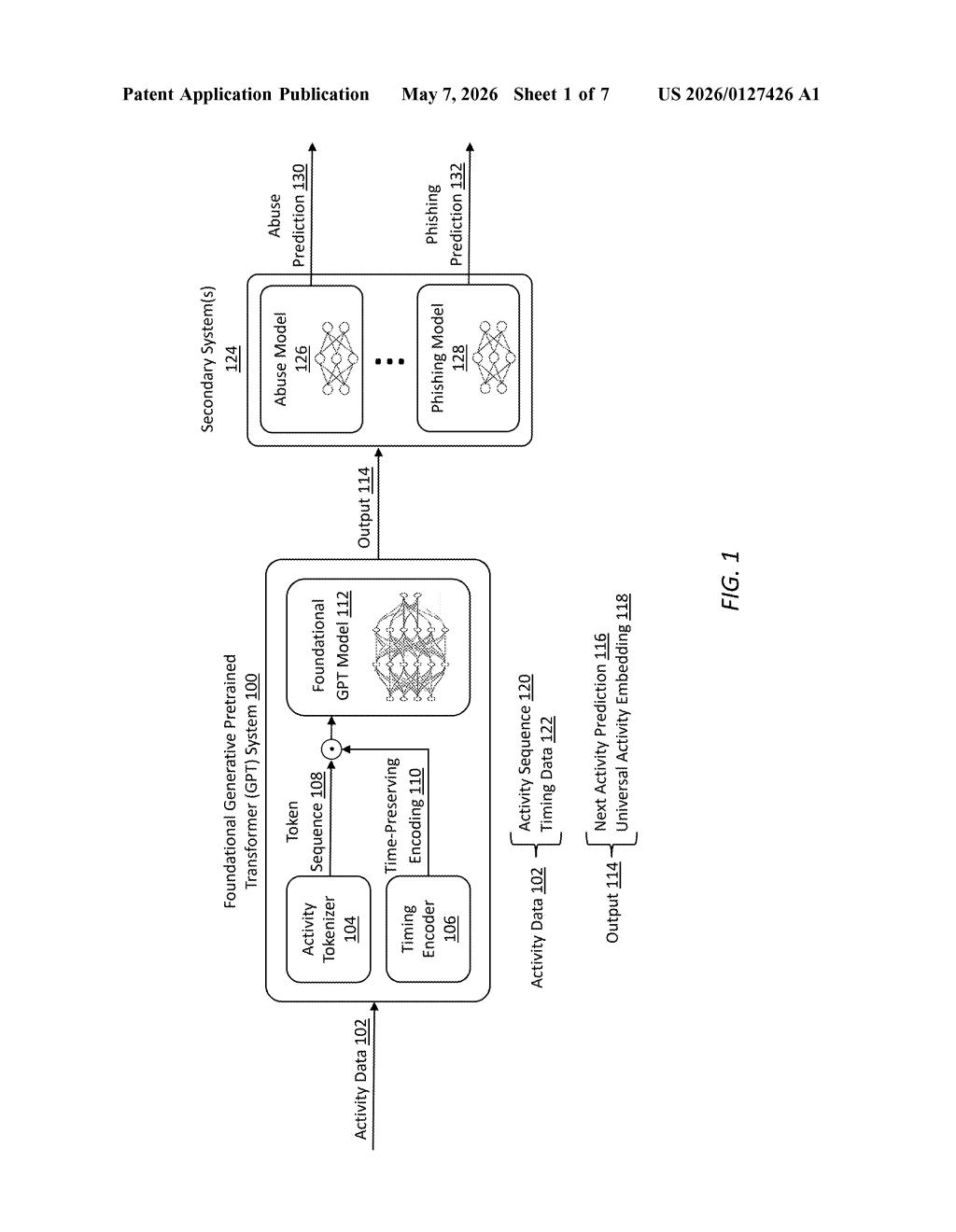

The patent describes a foundational GPT model trained on sequences of user or entity activities, where each activity is converted into a token (similar to how words become tokens in a language model). The key innovation is in how time-preserving encodings are inserted into those sequences before training.
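To make the tokenization step concrete, here is a minimal sketch of turning a session into token IDs while keeping the gap between consecutive events. The `Event` class, the `build_vocab` and `encode` helpers, and the sample session are all hypothetical illustrations; the patent summary does not specify a data format.

```python
from dataclasses import dataclass


@dataclass
class Event:
    action: str       # hypothetical activity label, e.g. "login"
    timestamp: float  # seconds since the start of the session


def build_vocab(events):
    """Assign each distinct action an integer id in first-seen order."""
    vocab = {}
    for e in events:
        vocab.setdefault(e.action, len(vocab))
    return vocab


def encode(events, vocab):
    """Return (token_ids, gaps); gaps[i] is the number of seconds
    since the previous event, with 0.0 for the first event."""
    ordered = sorted(events, key=lambda e: e.timestamp)
    token_ids = [vocab[e.action] for e in ordered]
    gaps = [0.0] + [b.timestamp - a.timestamp
                    for a, b in zip(ordered, ordered[1:])]
    return token_ids, gaps


# Illustrative session: login, transfer 3 s later, logout 42 s after that.
session = [Event("login", 0.0), Event("transfer", 3.0), Event("logout", 45.0)]
vocab = build_vocab(session)
token_ids, gaps = encode(session, vocab)
```

The token IDs alone carry only the order of events; the parallel `gaps` list is the extra signal a time-aware model would need.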

Standard positional encodings in transformer models (the mechanism that tells the model "this token came third in the sequence") only capture order — they don't capture how long it took to get from step two to step three. This patent modifies that mechanism so the encoding also represents the actual elapsed time between consecutive tokens. If a user waited 10 minutes between a login and a file download, the model sees that gap as meaningfully different from a 2-second gap.
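One way to picture the difference is to take the familiar sinusoidal positional encoding and feed it cumulative elapsed time instead of the token index. This is an assumption made for illustration only: the patent summary does not say which formula is used, and the function names below are hypothetical.

```python
import math


def sinusoidal(position, dim, base=10000.0):
    """Sinusoidal encoding for a real-valued position."""
    vec = []
    for i in range(0, dim, 2):
        angle = position / (base ** (i / dim))
        vec.append(math.sin(angle))
        vec.append(math.cos(angle))
    return vec[:dim]


def order_only_encoding(num_tokens, dim):
    """Standard approach: the position is just the token index."""
    return [sinusoidal(float(i), dim) for i in range(num_tokens)]


def time_preserving_encoding(gaps_seconds, dim):
    """Assumed variant: the position is cumulative elapsed time in seconds."""
    encodings, elapsed = [], 0.0
    for gap in gaps_seconds:
        elapsed += gap
        encodings.append(sinusoidal(elapsed, dim))
    return encodings


slow = [0.0, 600.0]  # login, then a download 10 minutes later
fast = [0.0, 2.0]    # login, then a download 2 seconds later

order_slow = order_only_encoding(len(slow), 8)
order_fast = order_only_encoding(len(fast), 8)
time_slow = time_preserving_encoding(slow, 8)
time_fast = time_preserving_encoding(fast, 8)

# Index-based encodings cannot tell the two sessions apart;
# the time-based ones differ at the second token.
same_by_order = order_slow == order_fast
differ_by_time = time_slow[1] != time_fast[1]
```

A production system would likely rescale or bucket the gaps (for example, with a logarithm) so that very long pauses stay distinguishable from one another; that choice is outside what the summary describes.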

During training, the model learns to produce a universal activity embedding — a compact numerical representation of an entity's behavioral pattern over time. That embedding can then be fed into several downstream "secondary systems":

- Abuse prediction — flagging accounts that behave like bots or bad actors

- Phishing prediction — identifying sequences that match known phishing patterns

- Next activity prediction — forecasting what a user is likely to do next

The foundational model is trained once and reused across all these tasks, which is the classic transfer learning approach (train one large general model, then adapt it or reuse its embeddings for specific jobs) applied to behavioral sequence data.
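The "train once, reuse everywhere" pattern can be sketched as one shared embedding feeding several small task-specific scorers. Everything here is a stand-in: mean pooling replaces the actual foundational model, and the head weights are made-up numbers rather than trained values.

```python
import math


def activity_embedding(token_vectors):
    """Stand-in for the foundational model: mean-pool the per-token
    vectors into a single fixed-size embedding."""
    n = len(token_vectors)
    dim = len(token_vectors[0])
    return [sum(v[d] for v in token_vectors) / n for d in range(dim)]


def make_linear_head(weights, bias):
    """Build a tiny logistic scorer that reads the shared embedding."""
    def head(embedding):
        z = sum(w * x for w, x in zip(weights, embedding)) + bias
        return 1.0 / (1.0 + math.exp(-z))
    return head


# Hypothetical weights; real heads would be learned from labeled data.
abuse_head = make_linear_head([0.8, -0.3, 0.1], bias=-0.2)
phishing_head = make_linear_head([-0.4, 0.9, 0.5], bias=0.0)

token_vectors = [[0.2, 0.1, 0.0], [0.9, 0.4, 0.3], [0.1, 0.1, 0.6]]
embedding = activity_embedding(token_vectors)  # computed once per entity

scores = {
    "abuse": abuse_head(embedding),
    "phishing": phishing_head(embedding),
}
```

The point of the design is that the expensive step, producing `embedding`, runs once, while each downstream system only adds a cheap head on top. A next-activity head would follow the same shape but output a distribution over activity tokens.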

Why timing data could sharpen Microsoft's fraud and phishing detection

Behavioral AI models are already widely used for fraud detection, account security, and anomaly detection — but most of them either ignore timing entirely or treat it as a separate input feature bolted on the side. Baking time directly into the token-level encoding is a cleaner architectural choice, and it means the model can learn temporal patterns (like "this action always happens suspiciously fast") as naturally as it learns sequential ones.

For Microsoft, which operates massive user-facing surfaces like Microsoft 365, Microsoft Entra ID (formerly Azure Active Directory), and LinkedIn, a single foundational behavior model that generalizes across products would be a significant operational asset. The abstract explicitly calls out phishing and abuse prediction as target applications. Both are areas where timing signals are genuinely diagnostic, since automated attacks tend to move faster than humans, so the core idea here isn't just theoretically tidy; it's practically useful.

This is a solid, well-scoped patent for a real problem — not flashy, but the kind of infrastructure work that pays off at scale. The time-preserving encoding idea is genuinely clever in that it doesn't require a separate model component to handle timing; it folds the signal directly into the existing positional encoding structure. If Microsoft ships something like this inside Entra ID or Defender, it would be a meaningful improvement over simpler rule-based behavioral detection.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.