Microsoft Patents Block-Based Floating-Point Compression Using Shared Exponents

Floating-point numbers are expensive to store and move around, in part because each one carries its own exponent even when its neighbors sit on the same scale. Microsoft's new patent proposes a smarter way to compress them in bulk by letting groups of numbers share exponents instead of each storing its own.

What Microsoft's shared-exponent compression actually does

Every floating-point number your computer stores, like the weights inside an AI model, works like scientific notation. Besides a sign, it is made up of two parts: a significand (the meaningful digits) and an exponent (the scale, like 'times 10 to the power of 6', except that hardware uses powers of 2). Normally, every single number carries its own exponent, even when nearby numbers are all roughly the same size. That's a lot of wasted space.
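To make the two parts concrete, here is a minimal Python sketch that splits a few similar-sized values into significand and exponent using the standard library's `math.frexp`. The sample weights and the helper name `split_float` are made up for illustration.

```python
import math

def split_float(x: float) -> tuple[float, int]:
    """Return (significand, exponent) such that x == significand * 2**exponent."""
    # math.frexp normalises the significand so that 0.5 <= |significand| < 1
    # for any nonzero x.
    return math.frexp(x)

# Three illustrative weights of roughly the same size.
weights = [0.15625, 0.1875, 0.21875]
parts = [split_float(w) for w in weights]

for w, (significand, exponent) in zip(weights, parts):
    print(f"{w} = {significand} * 2**{exponent}")
```

All three values come out with the same exponent and differ only in their significands, which is exactly the redundancy a shared exponent removes.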

Microsoft's idea is to group numbers into small blocks and let the numbers within each block share a common exponent — or pick from a small set of shared exponents — rather than each storing its own. The result is a compressed version of the dataset that uses fewer bits overall, which means less storage and less time spent shuttling data around inside a computer.
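A rough bit budget shows where the savings come from. The sketch below is an assumption-laden illustration, not the patent's layout: the block size of 32, the 8-bit exponent, the 7-bit significand, and the idea that each element stores a small index into the shared set are all hypothetical choices.

```python
def average_bits_per_element(block_size, mantissa_bits, exponent_bits,
                             shared_exponents=0, sign_bits=1):
    """Average storage cost per element under a hypothetical layout.

    shared_exponents == 0: every element stores its own exponent.
    shared_exponents >= 1: the block stores that many exponents once, and
    each element stores an index selecting one of them.
    """
    if shared_exponents == 0:
        return sign_bits + exponent_bits + mantissa_bits
    # Bits needed to index the shared set: 0 for one exponent, 1 for two, ...
    index_bits = (shared_exponents - 1).bit_length()
    block_overhead = shared_exponents * exponent_bits
    return sign_bits + mantissa_bits + index_bits + block_overhead / block_size

# Hypothetical layout: 32 elements per block, 7-bit significand, 8-bit exponent.
layout = dict(block_size=32, mantissa_bits=7, exponent_bits=8)

private = average_bits_per_element(**layout)                      # own exponent each
one_shared = average_bits_per_element(**layout, shared_exponents=1)
two_shared = average_bits_per_element(**layout, shared_exponents=2)

print(private, one_shared, two_shared)  # 16 8.25 9.5
```

Under these assumptions, moving from one shared exponent to two costs a little over one extra bit per element, while still using far fewer bits than per-element exponents.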

This kind of compression is especially relevant for AI workloads, where you're constantly moving enormous tables of numbers between memory and processors. Shaving bits off each number, at scale, can meaningfully speed things up.

How the block-splitting and exponent-sharing mechanism works

The patent describes a compression pipeline with three main steps.

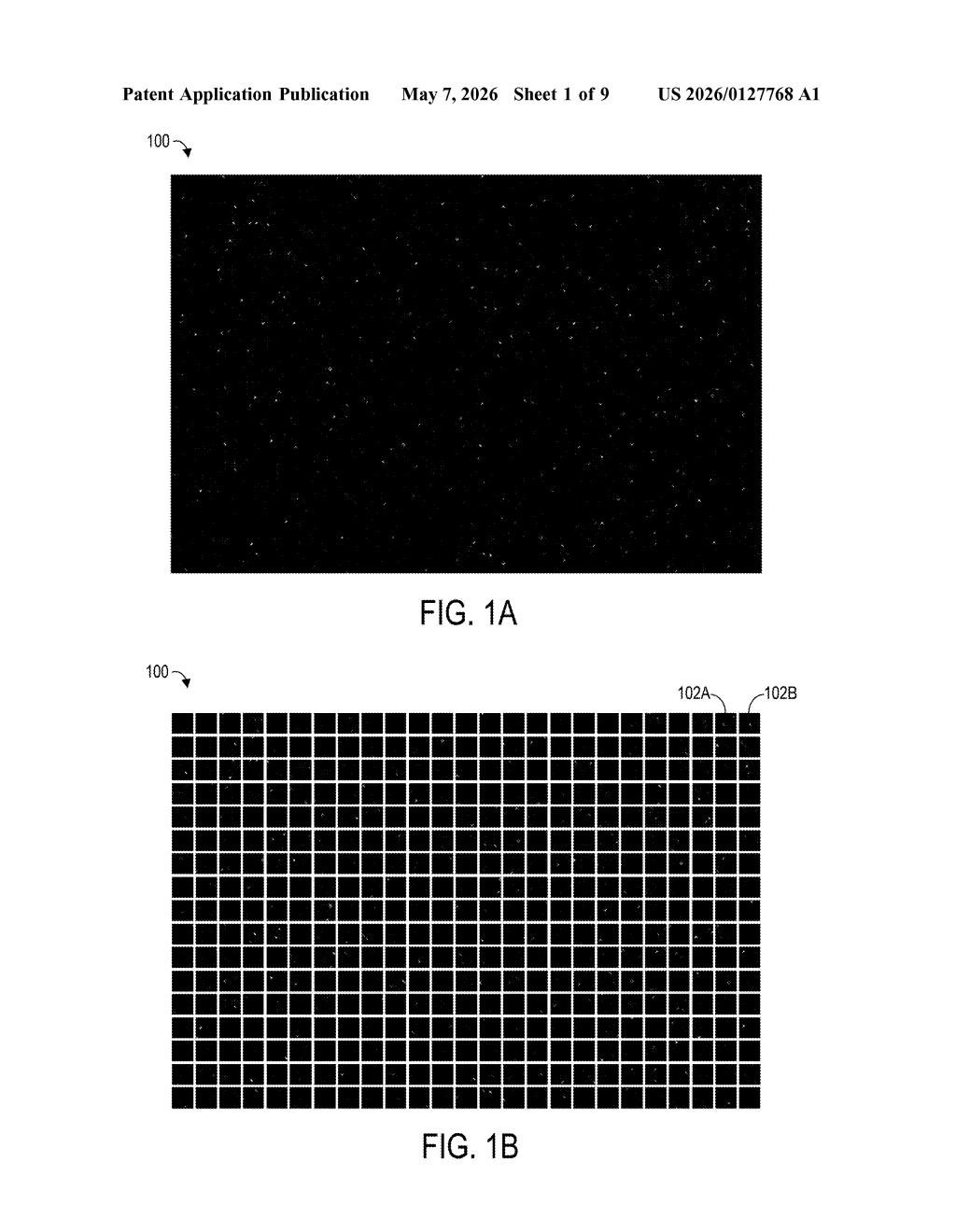

- Block division: The full dataset is split into fixed-size blocks, each containing a predetermined number of data elements (think: a chunk of floating-point weights from a neural network layer).

- Shared exponent selection: For each block, instead of every element carrying its own exponent, the system selects a set of two or more exponents that the whole block can draw from. Each element then picks the exponent from that shared set that best represents its magnitude.

- Mantissa recalculation: With the exponent chosen, each element's mantissa (another name for the significand, the precision-carrying part of the floating-point number) is recalculated to fit the compressed format. The mantissa plus the chosen shared exponent together reconstruct an approximation of the original value.
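The three steps can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the patent's actual method: the exponent-selection heuristic (evenly spaced picks from the exponents present in the block), the signed-integer mantissa encoding, and all function names are hypothetical.

```python
import math

def split_into_blocks(values, block_size):
    """Step 1: cut the dataset into fixed-size blocks (the last may be shorter)."""
    return [values[i:i + block_size] for i in range(0, len(values), block_size)]

def choose_exponent_set(block, k):
    """Step 2 (hypothetical heuristic): pick up to k exponents for the block.

    The largest exponent is always included so every element is covered.
    """
    exps = sorted({math.frexp(v)[1] for v in block if v != 0.0})
    if not exps:
        return [0]          # all-zero block: any exponent works
    if len(exps) <= k:
        return exps
    if k == 1:
        return [exps[-1]]
    step = (len(exps) - 1) / (k - 1)
    return sorted({exps[round(i * step)] for i in range(k)})

def compress_block(block, k, mantissa_bits):
    """Steps 2 and 3: choose shared exponents, then requantise each mantissa."""
    shared = choose_exponent_set(block, k)
    limit = (1 << mantissa_bits) - 1
    encoded = []
    for v in block:
        own = math.frexp(v)[1] if v != 0.0 else shared[0]
        # Smallest shared exponent that still covers this element's magnitude.
        idx = next(i for i, e in enumerate(shared) if e >= own)
        q = round(math.ldexp(v, mantissa_bits - shared[idx]))
        q = max(-limit, min(limit, q))   # clamp if rounding overflowed
        encoded.append((idx, q))
    return shared, encoded

def decompress_block(shared, encoded, mantissa_bits):
    """Rebuild approximate values from (exponent index, integer mantissa) pairs."""
    return [math.ldexp(q, shared[idx] - mantissa_bits) for idx, q in encoded]

values = [0.75, 0.5, 0.01, 0.012, 3.0, 2.5, 0.02, 0.03]
blocks = split_into_blocks(values, block_size=4)
for block in blocks:
    shared, encoded = compress_block(block, k=2, mantissa_bits=4)
    print(shared, decompress_block(shared, encoded, mantissa_bits=4))
```

In the first block, the large values use exponent 0 and the small ones use exponent -6, so 0.01 decodes to about 0.0098 rather than being rounded away.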

The key claim is flexibility: rather than forcing all elements in a block to use a single shared exponent (which is how existing block floating-point schemes work, including the one shared scale per block in the MX formats that Microsoft co-developed with other vendors), this approach allows a small set of exponents per block. That gives each element a better fit, reducing the precision loss that comes from aggressive compression.
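A small experiment illustrates why a second exponent helps when a block mixes large and small values. The block contents, the 4-bit mantissa, and the helper names below are illustrative assumptions, and the exponents are supplied by hand rather than chosen by any method from the patent.

```python
import math

def quantize(value, shared_exponents, mantissa_bits):
    """Round a nonzero value onto the grid of the tightest covering exponent."""
    own = math.frexp(value)[1]
    exponent = min((e for e in shared_exponents if e >= own),
                   default=max(shared_exponents))
    limit = (1 << mantissa_bits) - 1
    q = round(math.ldexp(value, mantissa_bits - exponent))
    q = max(-limit, min(limit, q))
    return math.ldexp(q, exponent - mantissa_bits)

def worst_relative_error(block, shared_exponents, mantissa_bits):
    """Largest relative error in the block (assumes no zero values)."""
    return max(abs(quantize(v, shared_exponents, mantissa_bits) - v) / abs(v)
               for v in block)

# Two values near 1 and two values near 0.01 in the same block.
block = [0.75, 0.5, 0.01, 0.012]

single = worst_relative_error(block, [0], mantissa_bits=4)      # one shared exponent
pair = worst_relative_error(block, [-6, 0], mantissa_bits=4)    # set of two

print(f"single exponent: {single:.3f}, two exponents: {pair:.3f}")
```

With one exponent sized for the large values, the small values round to zero and lose all their information. With two exponents, the worst-case relative error in this toy block drops to a few percent.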

The compressed output uses fewer bits than the input, which the patent explicitly frames as conserving both storage space and memory bandwidth, the bottleneck that limits how fast data can flow between RAM and compute units.

What this means for AI model training and memory bandwidth

Memory bandwidth is one of the hardest walls in modern AI infrastructure. When you're training or running a large model, the GPU or accelerator is often sitting idle waiting for data to arrive from memory. Reducing the bit-width of the numbers in transit directly shrinks that bottleneck — you can move more values per second over the same physical bus.
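The arithmetic behind that claim is simple. The sketch below uses hypothetical figures: a 1 TB/s memory bus, 16-bit uncompressed values, and a compressed format averaging 9.5 bits per value. None of these numbers come from the patent.

```python
def values_per_second(bandwidth_bytes_per_s: float, bits_per_value: float) -> float:
    """How many values fit through a bus of the given bandwidth each second."""
    return bandwidth_bytes_per_s * 8 / bits_per_value

bandwidth = 1e12  # hypothetical 1 TB/s memory bus

uncompressed = values_per_second(bandwidth, 16)    # 16-bit values
compressed = values_per_second(bandwidth, 9.5)     # hypothetical compressed average
speedup = compressed / uncompressed

print(f"{uncompressed:.3g} vs {compressed:.3g} values/s, {speedup:.2f}x")
```

The gain is just the ratio of the bit widths, so it applies only to workloads that really are limited by memory traffic rather than by compute.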

Microsoft's angle here fits neatly into the broader industry push toward low-precision numerical formats for AI, a space where formats like FP8, BF16, and MX (Microscaling) have all gained traction. This patent's twist — allowing a small set of shared exponents per block rather than exactly one — could offer a better precision-vs-compression tradeoff than simpler block floating-point schemes, which matters enormously when you're trying to keep model accuracy intact while cutting memory usage.

This is solid, unglamorous infrastructure work — the kind that quietly makes AI training faster and cheaper without showing up in any keynote. The 'set of two or more exponents per block' detail is the genuinely interesting part: it's a more flexible middle ground between per-element full precision and the blunt single-shared-exponent approach. Whether it's novel enough to survive a prior art challenge from the existing MX format literature is a different question, but the engineering intent is clear and commercially motivated.


Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.