Microsoft Patents an AI Security Agent That Investigates Cyber Threats on Its Own

Microsoft has patented a security agent that doesn't wait for a human analyst to decide what to investigate next — it asks an AI, gets a plan, executes it, and keeps looping until it understands the threat.

What Microsoft's autonomous threat-hunting agent actually does

Imagine your company's security system fires an alert: suspicious login from an unknown IP. Normally, a human analyst has to dig through logs, cross-reference threat databases, and manually piece together what happened. That takes time — time attackers use to move deeper into your network.

Microsoft's patented system hands that investigation to an AI agent. The agent takes the initial alert, feeds it to a language model along with a list of investigative tools it can use, and asks the model: "What should I do next?" The model picks a next step — say, look up that IP address or pull related log entries — and the agent executes it automatically.

The loop repeats. Each new piece of evidence gets added to what the agent already knows, and the model chooses the next move again and again until the picture is complete. No human needs to babysit each step.

How the LLM loop drives each investigative step

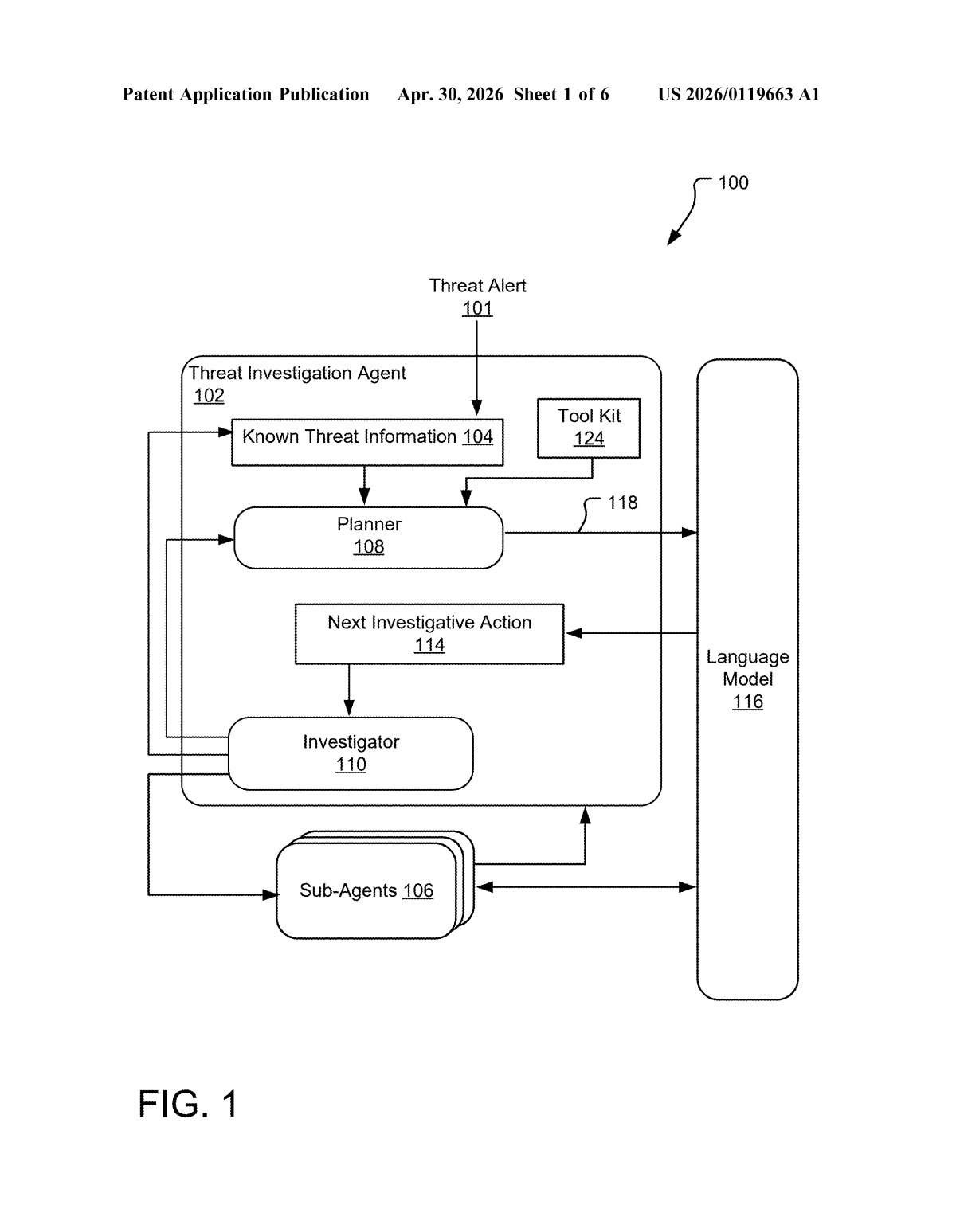

The system centers on a threat investigation agent that runs a continuous feedback loop. At each iteration, it bundles three things into a prompt for a language model (LLM): the current known threat information, a function list (a catalog of investigative tools it can call, like log queries or IP lookups), and instructions telling the model to pick the most appropriate next action.
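To make the three-part prompt concrete, here is a minimal Python sketch of how such a bundle might be assembled. The function name `build_prompt`, the JSON layout, the wording of the instructions, and the two example tools are all assumptions for illustration; the article does not give the patent's actual prompt format.

```python
import json

def build_prompt(known_threat_info, function_list):
    """Bundle instructions, known threat info, and the function list into one prompt string."""
    # Hypothetical instruction wording; the patent's real instructions are not quoted in the article.
    instructions = (
        "You are assisting a security investigation. "
        "Pick the single most appropriate next action from the function list "
        'and reply with JSON of the form {"function": <name>, "arguments": {...}}.'
    )
    return "\n\n".join([
        "INSTRUCTIONS:\n" + instructions,
        "KNOWN THREAT INFORMATION:\n" + json.dumps(known_threat_info, indent=2),
        "FUNCTION LIST:\n" + json.dumps(function_list, indent=2),
    ])

# Illustrative inputs: an alert like the one in the opening example and two made-up tools.
alert = {"alert": "suspicious login", "source_ip": "203.0.113.7"}
functions = [
    {"name": "lookup_ip_reputation", "parameters": {"ip": "string"}},
    {"name": "query_logs", "parameters": {"user": "string", "hours": "integer"}},
]
prompt = build_prompt(alert, functions)
print(prompt)
```

Keeping the three parts as separate labeled sections is just one reasonable layout; it makes it easy to swap in updated threat information on each pass without touching the instructions or the tool catalog.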

The LLM returns a decision — essentially a function call — and the agent executes it. The result (say, a list of related events or a threat-reputation score) becomes additional threat event information, which gets folded back into the known threat context. Then the whole process repeats.
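The loop itself can be sketched in a few lines of Python. This is a toy under stated assumptions, not Microsoft's implementation: `fake_llm` is a scripted stand-in for the language model, the two tools return canned data, and the `"finish"` action and the `max_steps` cap are invented stopping rules, since the article only says the loop runs until the picture is complete.

```python
def fake_llm(context):
    """Scripted stand-in for the model: picks the next action from what is still unknown."""
    known = context["known"]
    if "ip_reputation" not in known:
        return {"function": "lookup_ip_reputation", "arguments": {"ip": known["source_ip"]}}
    if "related_events" not in known:
        return {"function": "query_logs", "arguments": {"ip": known["source_ip"]}}
    return {"function": "finish", "arguments": {}}  # hypothetical stop signal

def lookup_ip_reputation(ip):
    # Canned result; a real tool would query a threat-intelligence source.
    return {"ip_reputation": "malicious" if ip == "203.0.113.7" else "unknown"}

def query_logs(ip):
    # Canned result; a real tool would run a log query.
    return {"related_events": [f"failed login from {ip}", f"successful login from {ip}"]}

TOOLS = {"lookup_ip_reputation": lookup_ip_reputation, "query_logs": query_logs}

def investigate(alert, llm, tools, max_steps=10):
    """Ask the model for an action, run it, fold the result into the context, repeat."""
    known = dict(alert)
    steps = []
    for _ in range(max_steps):
        decision = llm({"known": known, "functions": sorted(tools)})
        name = decision["function"]
        if name == "finish":
            break
        result = tools[name](**decision["arguments"])
        known.update(result)  # new evidence becomes part of the known threat context
        steps.append(name)
    return known, steps

known, steps = investigate(
    {"alert": "suspicious login", "source_ip": "203.0.113.7"}, fake_llm, TOOLS
)
print(steps)
print(known)
```

The step cap is worth having in any version of this loop: without it, a model that never decides it is done would keep the agent running indefinitely.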

The patent describes a Planner / Investigator architecture:

- Planner: manages the high-level reasoning loop and prompt preparation
- Investigator: holds the toolkit of callable functions and executes the chosen action
- Sub-agents: specialized agents that can be delegated specific sub-tasks
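One way to picture that division of labor is the Python sketch below. The class and method names (`Planner.step`, `Investigator.execute`, `SubAgent.run`) are hypothetical, and exposing a sub-agent as just another callable in the Investigator's toolkit is an assumption; the article does not say how the patent wires sub-agents in.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

@dataclass
class Investigator:
    """Holds the toolkit and executes whichever action was chosen."""
    tools: Dict[str, Callable[..., dict]] = field(default_factory=dict)

    def function_list(self) -> List[str]:
        return sorted(self.tools)

    def execute(self, name: str, arguments: dict) -> dict:
        return self.tools[name](**arguments)

@dataclass
class SubAgent:
    """A specialized helper; this toy version only returns a canned summary."""
    name: str

    def run(self, user: str) -> dict:
        return {f"{self.name}_summary": f"reviewed logins for {user}"}

@dataclass
class Planner:
    """Owns the reasoning step; delegates execution to the Investigator."""
    choose: Callable[[dict, List[str]], dict]  # stand-in for the LLM call
    investigator: Investigator

    def step(self, known: dict) -> dict:
        decision = self.choose(known, self.investigator.function_list())
        result = self.investigator.execute(decision["function"], decision["arguments"])
        return {**known, **result}

# Assumed wiring: the sub-agent is registered as one more tool the Investigator can call.
identity_agent = SubAgent("identity")
investigator = Investigator(tools={"delegate_identity": identity_agent.run})
planner = Planner(
    choose=lambda known, fns: {"function": fns[0], "arguments": {"user": known["user"]}},
    investigator=investigator,
)
state = planner.step({"user": "alice"})
print(state)
```

The point of the split is that the Planner never touches a tool directly, so new tools or sub-agents can be added to the Investigator without changing the reasoning code.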

This is a classic ReAct-style agentic loop (Reasoning + Acting), a pattern where the model reasons about what to do, the system acts on that reasoning, and the result feeds back into the next reasoning step. What's notable here is the structured function-list approach, which limits the LLM's choices to actions the system has actually implemented and can execute.
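A function list only works as a constraint if the agent checks the model's reply against it before running anything. The sketch below shows one plausible check in Python. The catalog contents, the JSON reply shape, and the `parse_decision` helper are assumptions for illustration, not details from the patent.

```python
import json

# Hypothetical catalog: function name -> the argument names it accepts.
CATALOG = {
    "lookup_ip_reputation": {"ip"},
    "query_logs": {"user", "hours"},
}

def parse_decision(raw_reply, catalog):
    """Return (name, arguments) if the reply names a catalogued function, else raise ValueError."""
    try:
        decision = json.loads(raw_reply)
    except json.JSONDecodeError as exc:
        raise ValueError("reply is not valid JSON") from exc
    if not isinstance(decision, dict):
        raise ValueError("reply must be a JSON object")
    name = decision.get("function")
    arguments = decision.get("arguments", {})
    if name not in catalog:
        raise ValueError(f"unknown function: {name!r}")
    if not isinstance(arguments, dict):
        raise ValueError("arguments must be a JSON object")
    unexpected = set(arguments) - catalog[name]
    if unexpected:
        raise ValueError(f"unexpected arguments: {sorted(unexpected)}")
    return name, arguments

good = '{"function": "lookup_ip_reputation", "arguments": {"ip": "203.0.113.7"}}'
print(parse_decision(good, CATALOG))

invented = '{"function": "wipe_disk", "arguments": {}}'
try:
    parse_decision(invented, CATALOG)
except ValueError as err:
    print("rejected:", err)
```

With a gate like this, an action the model makes up is rejected before execution instead of being passed to a tool that does not exist.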

What this means for enterprise security teams

For enterprise security teams, alert fatigue is a genuine crisis — analysts are buried under thousands of alerts a day, most of which require repetitive, procedural investigation steps. An autonomous agent that handles the initial triage and fact-gathering loop could meaningfully reduce the time between "alert fired" and "we understand what happened." That gap is where breaches go from bad to catastrophic.

This fits squarely into Microsoft's Security Copilot product strategy, which is already built around using LLMs to assist security analysts. A fully autonomous investigation agent is the logical next step — moving from AI-as-assistant to AI-as-first-responder. Expect this kind of architecture to show up in Microsoft Sentinel or Defender within the next few product cycles.

This is a genuinely interesting patent because it's not just slapping an LLM onto a dashboard. It describes a real feedback-loop architecture where the AI actively drives an investigation forward. The structured function-list constraint is a smart safety guardrail: it gives the agent a fixed catalog to check the model's choice against, so an action the model invents can be rejected rather than run. Whether Microsoft can make this reliable enough for production security environments is the real question, but the design thinking here is solid.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.