Microsoft Patents a Unified Contrastive Learning System for Vision AI

Training a computer vision model that's good at *everything* (object detection, image captioning, visual search) usually means training many separate models. Microsoft's latest patent describes a single foundation model that is pre-trained once, then adapted to each task.

How Microsoft trains one vision model to handle many tasks

Imagine you want an AI that can describe photos, find objects in images, and answer questions about pictures — all at once. Normally, you'd train separate AI systems for each job, which is expensive and slow. Microsoft's patent describes a way to build one general-purpose vision AI that learns from a massive collection of image-and-caption pairs, then adapts to many different visual tasks.

The trick is a training technique called contrastive learning: the model learns by working out which images and text descriptions belong together and which don't. Over time, it builds a shared internal representation of both pictures and language.

Once that foundation is trained, plug-in modules called extensibility adapters let you tune it for specific jobs — like identifying tumors in medical scans or spotting defects on a factory floor — without retraining everything from scratch.

Inside Microsoft's hierarchical image encoder and contrastive setup

The system has four main components working together.

First, a data curation engine assembles a pre-training database from weakly labeled data — meaning image-text pairs scraped from the web where the captions aren't perfectly accurate or standardized. The system is designed to be robust to this messiness.
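To make "curating weakly labeled data" a little more concrete, here is a minimal sketch of the kind of cleanup such an engine might perform. The article does not describe the patent's actual curation rules, so everything here is an assumption for illustration: the function names, the `min_words` threshold, and the `(image_id, caption)` record layout.

```python
import re
from typing import Iterable, List, Tuple

Pair = Tuple[str, str]  # (image_id, caption); layout is hypothetical


def normalize_caption(caption: str) -> str:
    """Strip tag-like debris, collapse whitespace, and lowercase."""
    text = re.sub(r"<[^>]+>", " ", caption)   # drop leftover HTML tags
    text = re.sub(r"\s+", " ", text).strip()  # collapse runs of whitespace
    return text.lower()


def curate_pairs(pairs: Iterable[Pair], min_words: int = 2) -> List[Pair]:
    """Keep pairs whose cleaned caption has at least `min_words` words.

    Exact duplicate (image_id, cleaned caption) pairs are kept once.
    Both rules are illustrative assumptions, not the patent's method.
    """
    seen = set()
    kept: List[Pair] = []
    for image_id, caption in pairs:
        cleaned = normalize_caption(caption)
        if len(cleaned.split()) < min_words:
            continue  # empty or filename-like caption
        key = (image_id, cleaned)
        if key in seen:
            continue  # duplicate after normalization
        seen.add(key)
        kept.append(key)
    return kept


raw = [
    ("a.jpg", "  A <b>red</b>   car "),
    ("a.jpg", "a red car"),   # duplicate once normalized
    ("b.jpg", "IMG_001"),     # too short to be a useful caption
]
print(curate_pairs(raw))
```

Note that nothing here tries to verify that a caption is *correct*. That matches the article's point: the training setup is meant to tolerate noisy captions rather than depend on filtering them all out.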

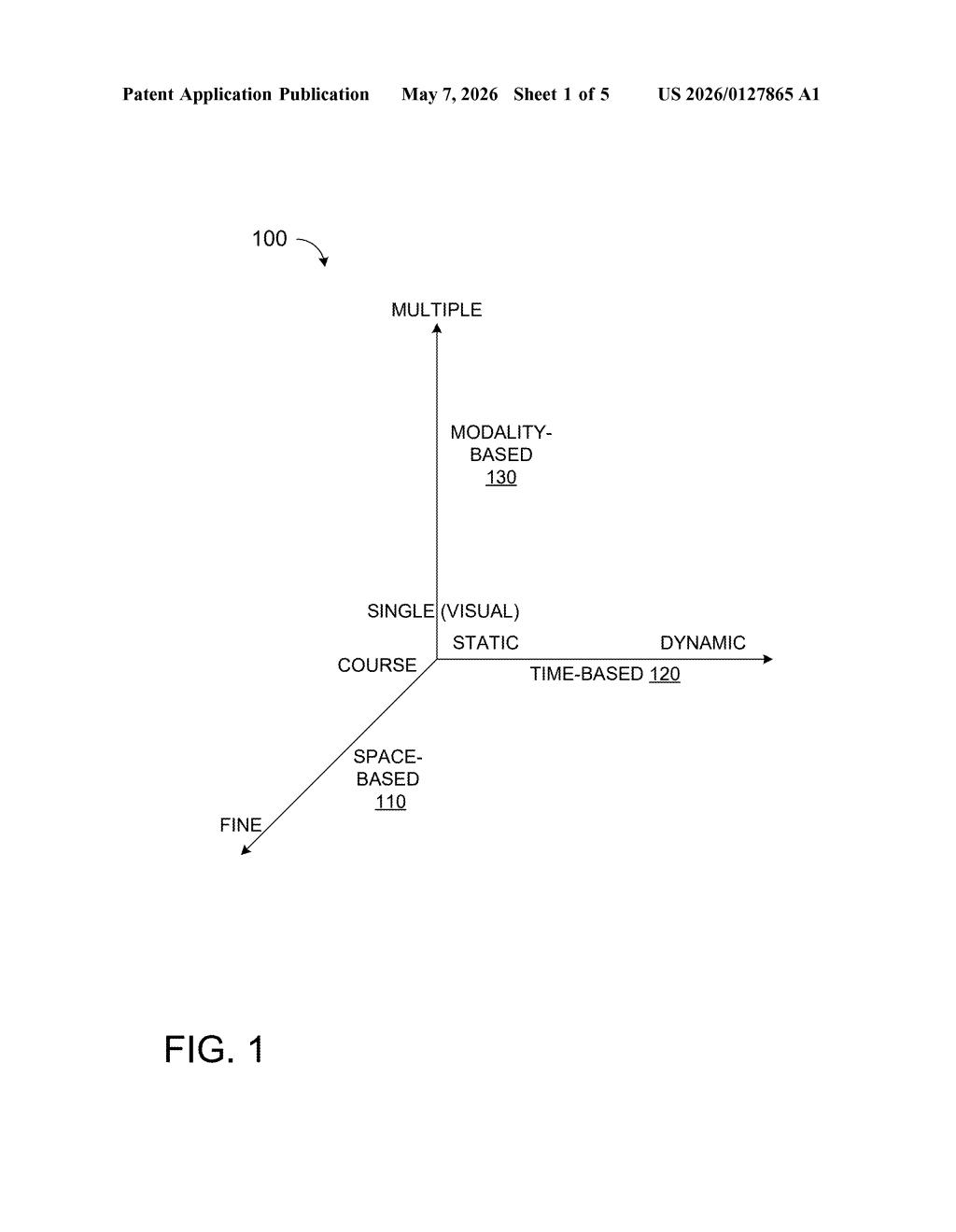

Second, the image encoder uses a hierarchical vision transformer with shifted windows. This is the Swin Transformer architecture, which computes attention within small, non-overlapping local windows rather than across the whole image at once, then shifts the window grid between layers so information can flow across window boundaries. That keeps computation manageable while still capturing fine-grained detail at multiple scales. Convolutional operations generate the initial projection layers, blending classical CNN strengths with transformer flexibility.
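The shifted-window idea is easier to see on a toy grid. The sketch below is a plain-Python illustration, not the patent's implementation: it splits a 4x4 grid of patch ids into 2x2 windows, then applies a cyclic shift and splits again. The helper names are made up for this example.

```python
from typing import List

Grid = List[List[int]]


def window_partition(grid: Grid, window: int) -> List[List[int]]:
    """Split an H x W grid into non-overlapping window x window blocks."""
    h, w = len(grid), len(grid[0])
    assert h % window == 0 and w % window == 0, "grid must tile evenly"
    windows = []
    for top in range(0, h, window):
        for left in range(0, w, window):
            windows.append([grid[r][c]
                            for r in range(top, top + window)
                            for c in range(left, left + window)])
    return windows


def cyclic_shift(grid: Grid, shift: int) -> Grid:
    """Roll the grid up and left by `shift` cells, wrapping around."""
    h, w = len(grid), len(grid[0])
    return [[grid[(r + shift) % h][(c + shift) % w] for c in range(w)]
            for r in range(h)]


# A 4x4 grid of patch ids, attended to in 2x2 windows.
patches = [[r * 4 + c for c in range(4)] for r in range(4)]
regular = window_partition(patches, window=2)
shifted = window_partition(cyclic_shift(patches, shift=1), window=2)

print(regular[0])  # patches grouped together in the unshifted layer
print(shifted[0])  # one patch from each of the four unshifted windows
```

After the shift, the first window holds one patch from each of the four original windows, which is how neighbouring windows get to exchange information in the next layer. In the published Swin design, windows that wrap around the image edge are additionally masked so that patches from opposite sides do not attend to each other; that detail is omitted here.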

Third, a unified image-text contrastive learning module aligns the image and language encoders during training — pushing matching pairs closer together in a shared vector space while pushing mismatched pairs apart. This is the same core idea behind CLIP, but applied here with the Swin backbone.
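For readers who want to see "pull matches together, push mismatches apart" as arithmetic, here is a minimal sketch of a CLIP-style symmetric contrastive loss in plain Python. It assumes the simplest setup, where row `i` of the image batch matches row `i` of the text batch and nothing else. The patent's "unified" objective may define positive pairs differently, and the `temperature` value is just a commonly used default, not a figure from the patent.

```python
import math
from typing import List

Vector = List[float]


def l2_normalize(v: Vector) -> Vector:
    """Scale a vector to unit length so dot products are cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def cross_entropy_row(logits: List[float], target: int) -> float:
    """Return -log softmax(logits)[target], computed in a stable way."""
    peak = max(logits)
    log_sum = peak + math.log(sum(math.exp(x - peak) for x in logits))
    return log_sum - logits[target]


def contrastive_loss(image_embs: List[Vector], text_embs: List[Vector],
                     temperature: float = 0.07) -> float:
    """Symmetric image-text contrastive loss over one batch.

    Assumes image_embs[i] and text_embs[i] are the matching pair.
    """
    images = [l2_normalize(v) for v in image_embs]
    texts = [l2_normalize(v) for v in text_embs]
    n = len(images)
    # sim[i][j]: scaled cosine similarity between image i and text j.
    sim = [[sum(a * b for a, b in zip(img, txt)) / temperature
            for txt in texts] for img in images]
    # Each image should pick out its own caption among all captions...
    image_to_text = sum(cross_entropy_row(sim[i], i) for i in range(n)) / n
    # ...and each caption should pick out its own image among all images.
    text_to_image = sum(
        cross_entropy_row([sim[i][j] for i in range(n)], j) for j in range(n)
    ) / n
    return 0.5 * (image_to_text + text_to_image)


aligned = [[1.0, 0.0], [0.0, 1.0]]
swapped = [[0.0, 1.0], [1.0, 0.0]]
print(contrastive_loss(aligned, aligned))  # matching pairs agree: small loss
print(contrastive_loss(aligned, swapped))  # matching pairs disagree: large loss
```

Training nudges both encoders to lower this number, which is what moves matching images and captions toward each other in the shared vector space.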

Finally, extensibility adapters tap into feature pyramids — multi-scale representations produced at different depths of the transformer — and extend the model into specific task domains without full retraining.
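A feature pyramid is mostly a statement about shapes, so a small shape calculation can help. The sketch below assumes the defaults of the smallest published Swin variant (224-pixel input, 4-pixel patches, 96 starting channels, four stages, each later stage halving resolution and doubling channels). The article does not say which sizes the patent uses, and the adapter table is entirely hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class PyramidLevel:
    stage: int
    height: int
    width: int
    channels: int


def pyramid_shapes(image_size: int = 224, patch_size: int = 4,
                   embed_dim: int = 96, num_stages: int = 4) -> List[PyramidLevel]:
    """Feature-map shape after each stage of a hierarchical encoder.

    Assumes each stage after the first halves the spatial resolution
    and doubles the channel count.
    """
    side = image_size // patch_size
    channels = embed_dim
    levels = []
    for stage in range(1, num_stages + 1):
        levels.append(PyramidLevel(stage, side, side, channels))
        side //= 2
        channels *= 2
    return levels


# Hypothetical adapters: each names the pyramid stages it reads from.
ADAPTER_INPUTS: Dict[str, List[int]] = {
    "classification": [4],   # coarse, semantic features only
    "detection": [2, 3, 4],  # several scales, for small and large objects
}


def adapter_inputs(task: str, levels: List[PyramidLevel]) -> List[PyramidLevel]:
    """Select the pyramid levels a given adapter would consume."""
    wanted = set(ADAPTER_INPUTS[task])
    return [lvl for lvl in levels if lvl.stage in wanted]


levels = pyramid_shapes()
for lvl in levels:
    print(lvl)
print([lvl.stage for lvl in adapter_inputs("detection", levels)])
```

The point of the design is that the expensive encoder producing these levels stays as it is, while each adapter is a comparatively small module that reads whichever scales suit its task.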

What this means for Microsoft's Azure AI vision services

Foundation models are increasingly the default way big tech companies build AI: train once at massive scale, then fine-tune cheaply for many downstream applications. Microsoft's patent formalizes an architecture for doing this in computer vision specifically, with explicit hooks (extensibility adapters) for enterprise use cases like medical imaging, manufacturing inspection, or retail product recognition.

For users of Azure AI Vision or Copilot's visual features, this kind of architecture is what makes it possible to get a capable, customizable vision model without waiting months for a bespoke training run. It also signals Microsoft is investing in a Swin-based alternative to architectures like OpenAI's CLIP or Google's PaLI for production vision workloads.

This is solid, methodical AI infrastructure work rather than a flashy consumer moment; it describes the plumbing that makes scalable vision AI possible. Neither the Swin Transformer backbone nor contrastive learning is new on its own, but packaging them into a clean, extensible system with formal adapter hooks is genuinely useful and worth watching in the context of Microsoft's Azure AI roadmap.

Editorial commentary on a publicly published patent application. Not legal advice.