Microsoft Patents Motion-Compensated Compression for 3D Point Cloud Video

Your phone's video codec has used motion compensation for decades — storing only what changed between frames instead of every pixel every time. Microsoft is now applying that same logic to 3D point clouds, the format that powers volumetric video, LiDAR scans, and immersive spatial experiences.

What Microsoft's 3D video compression patent actually does

Imagine watching a live 3D hologram of a basketball game. Unlike a flat video, this is a full three-dimensional cloud of millions of tiny dots — called voxels — that together form the players, the court, the ball. Sending all of that data every single frame would be enormous. You'd need a firehose of bandwidth just to stream a few seconds.

Microsoft's patent borrows a classic video compression trick: instead of resending the entire 3D scene every frame, only send what changed. It looks at a previous frame stored in memory, predicts what the current frame probably looks like based on movement, and only encodes the difference. This is called inter-frame coding, and it's the same reason your Netflix stream doesn't consume terabytes per hour.

The patent also adds a smart fallback: if motion compensation isn't efficient for a particular chunk of the scene, the system can switch to intra-frame mode — encoding that chunk independently, from scratch — and uses a cost-benefit calculation to pick the better option automatically. There's even a custom error-correction filter tuned specifically for the quirks of 3D voxel data.

How inter-frame prediction works on voxel blocks

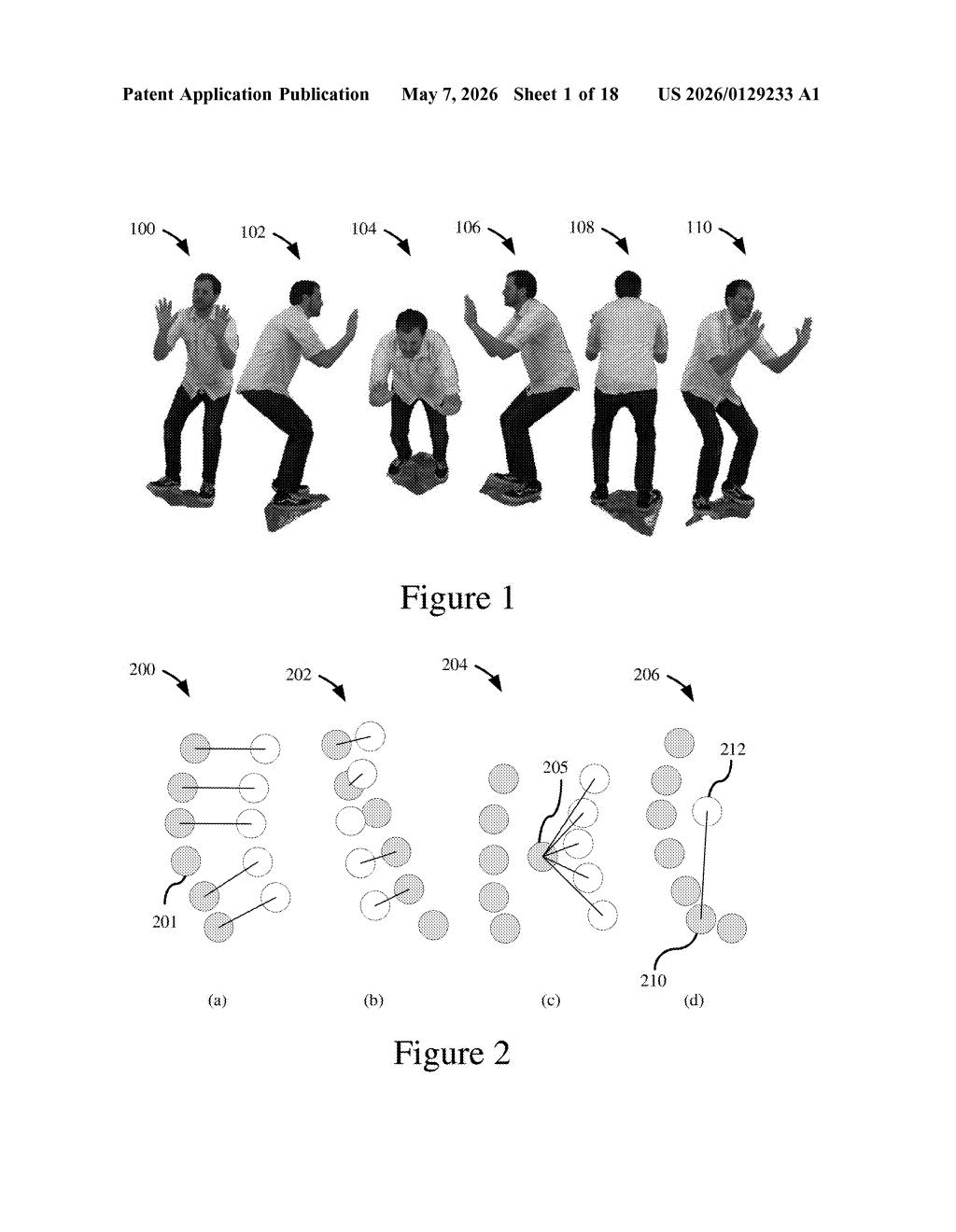

The patent describes a codec system for dynamic point cloud video — 3D scenes captured as sequences of voxel frames, like a time-series of LiDAR or depth-camera snapshots.

The core mechanism works like this:

- Block partitioning: Each 3D frame is divided into rectangular blocks of voxels (x × y × z regions, each dimension at least 2 units wide). Only occupied blocks — those containing actual scene geometry — are processed.

- Mode signaling: Each block gets a syntax element in the bitstream declaring whether it's encoded in intra-frame mode (self-contained, no reference to other frames) or inter-frame mode (predicted from a previously encoded reference frame).

- Inter-frame prediction: For inter-coded blocks, the encoder finds a matching predicted block from a reference frame buffer (think of it as a decoded frame held in memory), computes the 3D difference between the current block and that prediction, and encodes only the residual — the delta. The decoder reconstructs the block by applying that delta back onto the reference.

- Rate-distortion optimization: The system evaluates a threshold comparing encoding cost vs. quality loss for both modes, and picks whichever is more efficient for each block.

A voxel-distortion-correction filter is applied post-decode to clean up compression artifacts specific to 3D voxel data — errors that standard 2D video filters aren't designed to handle.

What this means for volumetric video and spatial computing

Volumetric video — 3D content you can walk around inside in AR or VR — is one of the most data-hungry media formats ever conceived. Without efficient compression, streaming real-time point clouds at scale is essentially impossible on current infrastructure. This patent addresses that bottleneck directly, adapting the motion-compensation techniques that made flat video streaming viable and applying them to the third dimension.

For Microsoft, this connects naturally to its investments in Azure spatial computing, HoloLens technology, and its broader push into mixed reality and 3D collaboration tools. If volumetric video ever becomes a mainstream format — for sports broadcasts, remote presence, or industrial digital twins — codec efficiency like this is the unsexy prerequisite that makes it practical. You may never think about it, but it's the kind of infrastructure work that determines whether a technology actually ships.

This is foundational codec engineering — not a flashy AI trick, but the kind of painstaking compression work that determines whether an entire media format survives contact with real networks. Microsoft filing this now, with spatial computing heating up, suggests they're quietly building the plumbing for volumetric video well before most people agree on what volumetric video even is. That's smart positioning, even if the patent itself reads like a graduate-level signal processing textbook.

Get one Big Tech patent every Sunday

Plain English, intelligent commentary, no hype. Free.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.