Google Patents a Machine Learning System for Smarter Image Inpainting

Google is patenting a more principled way to train AI models that remove unwanted objects from photos — the kind of 'erase and fill' magic you already see in Pixel's Magic Eraser. The twist is in how the training pipeline itself is structured.

What Google's AI image-repair training actually does

Imagine you take a photo and there's a stranger walking through the background. You want them gone — and you want the wall, grass, or sky behind them to look like they were never there. That's called image inpainting, and AI has gotten surprisingly good at it.

Google's patent describes a smarter way to teach an AI to do this. Instead of just showing the model a damaged photo and hoping it guesses right, the system uses a paired training setup: it takes a perfect original photo, deliberately adds something unwanted (like a smudge or an object), and then trains the AI to reconstruct exactly what the original looked like. That way, there's a clear "right answer" to measure against.
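To make the paired setup concrete, here is a minimal Python sketch of how one training example could be built. The function name `make_training_pair`, the rectangular patch, and the tiny 8x8 test image are illustrative assumptions, not details taken from the patent.

```python
import numpy as np

def make_training_pair(clean, patch, top, left):
    """Paste `patch` onto a copy of `clean`.

    Returns (corrupted, mask, target): the damaged image, a mask that is 1
    where the unwanted content sits, and the untouched original as the
    "right answer".
    """
    h, w = patch.shape[:2]
    corrupted = clean.copy()
    corrupted[top:top + h, left:left + w] = patch
    mask = np.zeros(clean.shape[:2], dtype=np.float32)
    mask[top:top + h, left:left + w] = 1.0
    return corrupted, mask, clean

# Hypothetical data: a flat grey "photo" and a black 3x3 "unwanted object".
clean = np.full((8, 8, 3), 0.5, dtype=np.float32)
patch = np.zeros((3, 3, 3), dtype=np.float32)
corrupted, mask, target = make_training_pair(clean, patch, top=2, left=4)
```

Because the clean image is kept alongside the damaged one, every pixel inside the mask has a known correct value to score the model against.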

The result is a model that learns to fill gaps accurately, not just plausibly. Because the model is variational, it learns a distribution over possible fills rather than one fixed answer, which should help it make reasonable guesses on scenes it hasn't seen before.

How the dual-encoder VAE learns to fill in the gaps

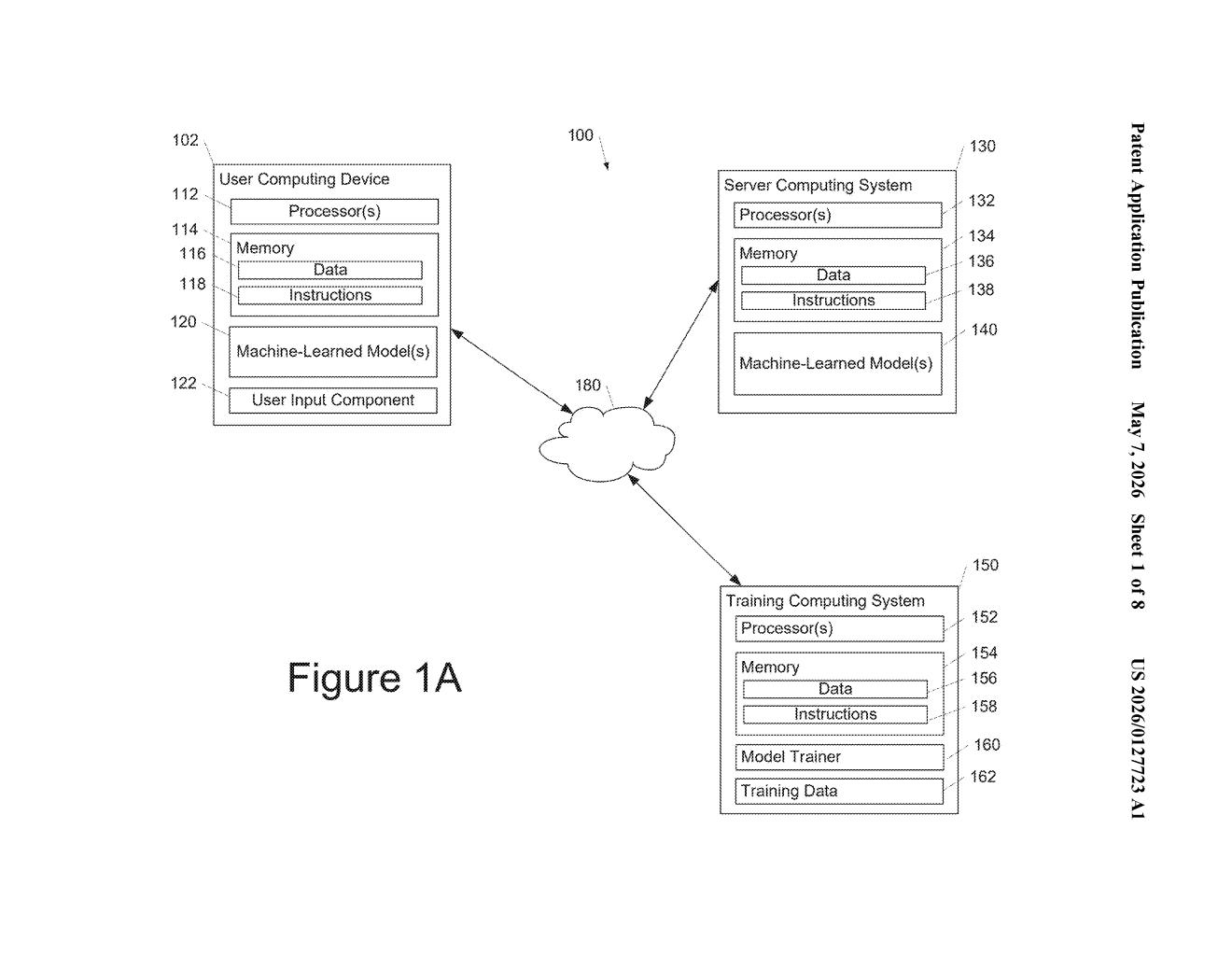

The system trains a conditional variational autoencoder (CVAE) — a type of AI architecture that learns to encode images into compressed representations and then reconstruct them. What makes it conditional is that the reconstruction is guided by additional context, in this case a mask showing where the unwanted content sits.

The training pipeline uses two separate encoder models running in parallel, followed by a decoder:

- Encoder 1 processes the damaged image (with the unwanted content added in) plus the mask, generating a compressed "embedding" — essentially a summary of what the model sees.

- Encoder 2 processes the original clean image plus the mask, producing "distribution values": the parameters of a probability distribution (in a typical VAE, a mean and a variance) that capture what the correct fill should look like.

- A decoder then combines both encoder outputs to generate the repaired image, specifically filling in the masked region.
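The data flow through these three parts can be sketched in a few lines of Python. This is a toy under stated assumptions, not the patent's architecture: single linear layers stand in for real networks, the "distribution values" are assumed to be a mean and log-variance, the latent code is drawn with the standard VAE reparameterization trick, and all names and sizes (`W_enc1`, `EMB`, `LATENT`, and so on) are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
H, W, EMB, LATENT = 8, 8, 16, 4   # hypothetical sizes, greyscale images
PIXELS = H * W

def init(n_in, n_out):
    # Small random weights standing in for trained parameters.
    return rng.normal(0.0, 0.1, size=(n_in, n_out))

W_enc1 = init(2 * PIXELS, EMB)          # Encoder 1: damaged image + mask
W_enc2 = init(2 * PIXELS, 2 * LATENT)   # Encoder 2: clean image + mask
W_dec = init(EMB + LATENT, PIXELS)      # Decoder

def encode_damaged(damaged, mask):
    x = np.concatenate([damaged.ravel(), mask.ravel()])
    return np.tanh(x @ W_enc1)                      # embedding

def encode_ground_truth(clean, mask):
    x = np.concatenate([clean.ravel(), mask.ravel()])
    mean, log_var = np.split(x @ W_enc2, 2)         # assumed "distribution values"
    return mean, log_var

def decode(embedding, z, damaged, mask):
    logits = np.concatenate([embedding, z]) @ W_dec
    pred = (1.0 / (1.0 + np.exp(-logits))).reshape(H, W)
    # Use predicted pixels only inside the mask; keep known pixels as they are.
    return mask * pred + (1.0 - mask) * damaged

def forward_train(damaged, clean, mask):
    embedding = encode_damaged(damaged, mask)
    mean, log_var = encode_ground_truth(clean, mask)
    # Reparameterization: sample z from N(mean, exp(log_var)).
    z = mean + np.exp(0.5 * log_var) * rng.standard_normal(LATENT)
    return decode(embedding, z, damaged, mask), mean, log_var

# Hypothetical example: grey image with a black 3x3 blob to remove.
clean = np.full((H, W), 0.5)
mask = np.zeros((H, W))
mask[2:5, 2:5] = 1.0
damaged = clean.copy()
damaged[2:5, 2:5] = 0.0
repaired, mean, log_var = forward_train(damaged, clean, mask)
```

Compositing the prediction back through the mask is one simple way to guarantee that only the flagged region changes; the patent may handle this differently.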

During training, the system compares its predicted output against the ground-truth original using loss functions (mathematical measures of how wrong the prediction was), then adjusts the model's internal parameters to get closer to the right answer next time. Once trained, the model can operate without the ground-truth encoder. That matters because a real photo has no clean original to feed it, so at that point the model relies purely on what it learned.
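The article does not say which loss functions the patent uses. As a hedged illustration, a common choice for conditional VAEs combines a reconstruction error over the masked pixels with a KL-divergence term that keeps the learned distribution close to a standard normal. The function names and the `beta` weight below are assumptions for the sketch.

```python
import numpy as np

def masked_reconstruction_loss(pred, target, mask):
    """Mean squared error, counted over masked pixels only."""
    n = max(float(mask.sum()), 1.0)
    return float((mask * (pred - target) ** 2).sum() / n)

def kl_to_standard_normal(mean, log_var):
    """KL( N(mean, exp(log_var)) || N(0, I) ), summed over latent dimensions."""
    return float(0.5 * np.sum(np.exp(log_var) + mean ** 2 - 1.0 - log_var))

def cvae_loss(pred, target, mask, mean, log_var, beta=1.0):
    # beta trades off reconstruction accuracy against the KL penalty (assumed).
    recon = masked_reconstruction_loss(pred, target, mask)
    return recon + beta * kl_to_standard_normal(mean, log_var)

# Hypothetical greyscale example: prediction is off by 1.0 on four masked pixels.
target = np.zeros((4, 4))
pred = np.ones((4, 4))
mask = np.zeros((4, 4))
mask[1:3, 1:3] = 1.0
loss = cvae_loss(pred, target, mask, mean=np.zeros(2), log_var=np.zeros(2))
```

Under this assumed setup, the KL term is also what would make it reasonable to drop the ground-truth encoder later: if training keeps its output near a standard normal, the latent code can be sampled from that standard normal when no clean image is available.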

What this means for AI photo editing tools

Tools like Google's Magic Eraser on Pixel phones already do object removal, but the quality varies — sometimes the filled region looks obviously artificial. A more rigorously trained inpainting model, especially one calibrated with ground truth data at training time, could mean cleaner, more realistic results in everyday photo editing.

Beyond photos, the patent explicitly notes the system can handle a variety of data types — which hints at potential applications in video, audio, or other media. If this architecture makes it into Google Photos, Gemini-powered editing tools, or Android's camera stack, the practical payoff for users could be noticeably better automatic cleanup on their everyday shots.

This is solid, methodical AI engineering — not a flashy concept patent but a real training architecture with specific, defensible design choices. The dual-encoder setup is a genuinely clever way to inject ground truth signal during training while keeping inference practical. Worth paying attention to if you follow computational photography or Google's Pixel camera roadmap.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.