Google Patents a System That Splits 3D Rendering Between Your Device and the Cloud

Rendering a rich 3D scene is expensive — your phone can only do so much. Google is patenting a system that dynamically offloads parts of that work to the cloud, so you get a smoother experience without your device melting.

What Google's hybrid 3D rendering system actually does

Imagine you're exploring a detailed 3D map of a city — spinning around buildings, zooming into storefronts, watching shadows shift in real time. That kind of rendering is genuinely hard work, and your phone or laptop might not be up to all of it on its own.

Google's patent describes a system that looks at a set of rendering criteria — things like your device's current processing load, battery level, or network speed — and decides, on the fly, which parts of a 3D scene your device should draw and which parts should be handled by a remote cloud server. The two systems work together to produce the final image you see on screen.

The result is that you could get a consistently high-quality 3D experience even on a mid-range phone, because the heavy lifting can be quietly shifted to Google's servers whenever your device needs a break. It's a bit like cloud game streaming, where a data center renders the frames and your device mostly just decodes and displays the resulting video. The difference is that here the split is partial and can change from moment to moment.

How Google's system decides where to render each visual element

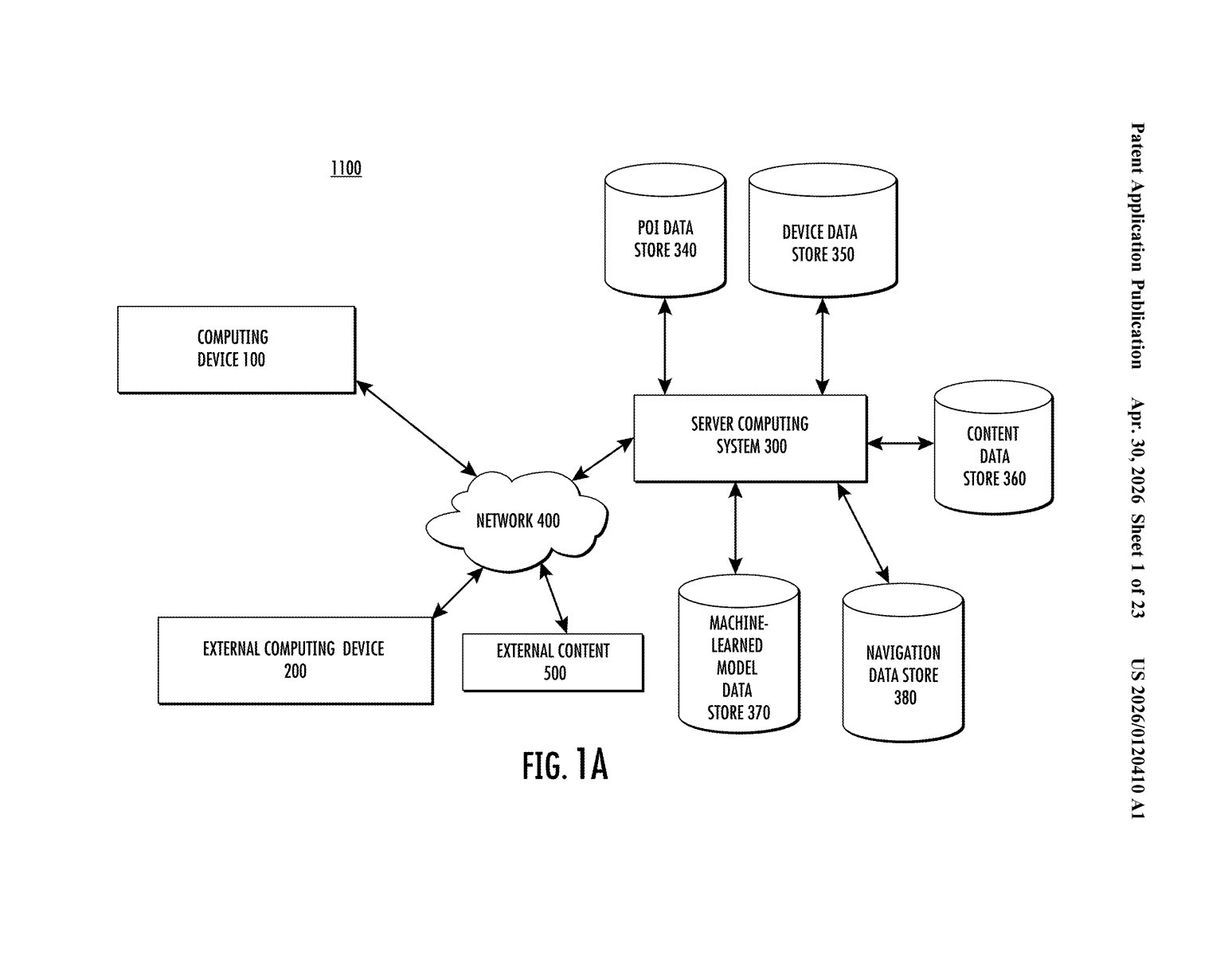

The patent describes a first computing system (your local device) that coordinates with a second computing system (a remote server) to render the visual elements that make up an interactive 3D scene.

The key mechanism is selective rendering assignment: the local device evaluates one or more rendering criteria — the patent doesn't enumerate them exhaustively, but these could include GPU load, available memory, battery state, or network latency — and uses those criteria to decide, per visual element or group of elements, where the rendering should happen.
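To make the idea concrete, here is a minimal sketch of what a per-element decision could look like. Everything in it is an assumption for illustration: the `RenderingCriteria` fields, the `choose_target` function, and every threshold are hypothetical and do not come from the patent, which leaves the criteria open-ended.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    LOCAL = "local"
    REMOTE = "remote"


@dataclass(frozen=True)
class RenderingCriteria:
    """Hypothetical snapshot of device state; the fields are illustrative only."""
    gpu_load: float            # 0.0 (idle) to 1.0 (saturated)
    battery_level: float       # 0.0 (empty) to 1.0 (full)
    network_latency_ms: float  # round-trip time to the remote server


def choose_target(criteria: RenderingCriteria, element_cost: float) -> Target:
    """Pick where one visual element is rendered.

    element_cost is an assumed 0.0-1.0 estimate of how expensive the element is.
    All thresholds below are made up for this sketch.
    """
    # A slow link makes remote rendering a poor fit for an interactive scene.
    if criteria.network_latency_ms > 150:
        return Target.LOCAL
    # Offload if the element would push the GPU past saturation.
    if criteria.gpu_load + element_cost > 1.0:
        return Target.REMOTE
    # Offload expensive elements when the battery is running low.
    if criteria.battery_level < 0.2 and element_cost > 0.3:
        return Target.REMOTE
    return Target.LOCAL
```

In this sketch the network check comes first on purpose: if the server is too far away to respond in time, the other criteria stop mattering. A real system could weigh the criteria very differently.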

The workflow looks roughly like this:

- The device receives or maintains a set of visual elements (think: geometry, textures, lighting data) for a 3D scene of a specific location.

- A decision engine checks the rendering criteria and assigns each element or batch to either the local GPU or the remote server.

- Both systems produce rendered visual elements — essentially completed image layers — which are then composited and displayed together on the device's screen.
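The three steps above can be sketched end to end as follows. This is a toy model under stated assumptions, not the patent's method: the greedy cost budget in `assign`, the stubbed `render` function, and the back-to-front ordering in `composite` are all hypothetical choices made to keep the example short.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VisualElement:
    name: str
    cost: float   # assumed relative rendering cost
    depth: float  # assumed distance from the camera; larger means farther away


@dataclass(frozen=True)
class RenderedLayer:
    name: str
    depth: float
    rendered_by: str  # "local" or "remote"


def assign(elements, local_budget):
    """Split elements into (local, remote) batches under a local cost budget.

    The cheapest elements stay on the device; this greedy policy is an assumption.
    """
    local, remote, spent = [], [], 0.0
    for element in sorted(elements, key=lambda e: e.cost):
        if spent + element.cost <= local_budget:
            local.append(element)
            spent += element.cost
        else:
            remote.append(element)
    return local, remote


def render(batch, where):
    """Stand-in for real rendering: tags each element with the system that drew it."""
    return [RenderedLayer(e.name, e.depth, where) for e in batch]


def composite(layers):
    """Order layers back to front (painter's algorithm) for display."""
    return sorted(layers, key=lambda layer: layer.depth, reverse=True)


scene = [
    VisualElement("skyline", cost=5.0, depth=900.0),
    VisualElement("storefront", cost=2.0, depth=20.0),
    VisualElement("street_sign", cost=1.0, depth=10.0),
]
local_batch, remote_batch = assign(scene, local_budget=3.0)
frame = composite(render(local_batch, "local") + render(remote_batch, "remote"))
for layer in frame:
    print(f"{layer.name}: {layer.rendered_by}")
# prints:
# skyline: remote
# storefront: local
# street_sign: local
```

Here the expensive, distant skyline goes to the server while the cheap nearby elements stay on the device, and the device stacks the resulting layers from farthest to nearest.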

The claim is broad: it covers any configuration in which at least one of the two systems does rendering work, so a frame drawn entirely on the device, entirely on the server, or by any mix of the two would all fall within it. That breadth suggests Google is staking out territory around the general concept of adaptive, hybrid rendering rather than a single narrow implementation.

What this means for Google Maps, Street View, and AR

Google runs some of the most 3D-heavy consumer products in existence — Google Maps (with its photorealistic city models), Street View, Google Earth, and its growing AR navigation features. All of those products are constrained today by what your device can render in real time. A patent like this points toward a future where those constraints are softened by seamlessly leaning on Google's server infrastructure.

For you as a user, the practical upside is richer visuals on cheaper hardware, and less battery drain when your phone isn't doing all the work. For Google, it's a way to push the visual fidelity of its location products without waiting for phones to catch up — and it keeps more compute running on Google's own cloud, which is a strategic win regardless of the hardware you're holding.

This is a solid, practically motivated patent — not a moonshot. Google already operates the infrastructure that would make this real, and the products that would benefit from it are obvious. The claim is broad enough to matter commercially, but the underlying idea (split rendering between client and server) has existed in game streaming and enterprise visualization for years. The interesting question is how fine-grained the per-element decision logic actually gets.


Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.