Google Patents a Two-Stage Prompt Filtering System for Better AI Responses

What if your AI assistant quietly ran your question through a gauntlet of self-generated follow-up prompts — and only showed you the answer that survived the cut? That's essentially what Google is patenting here.

How Google's layered prompt system picks better answers

Imagine you ask a smart assistant a question. Instead of just firing back the first answer it generates, it secretly brainstorms a bunch of different ways to interpret and expand your question, throws out the weak ones, tries to answer each of the good ones, and then filters those answers again before picking the best one to show you.

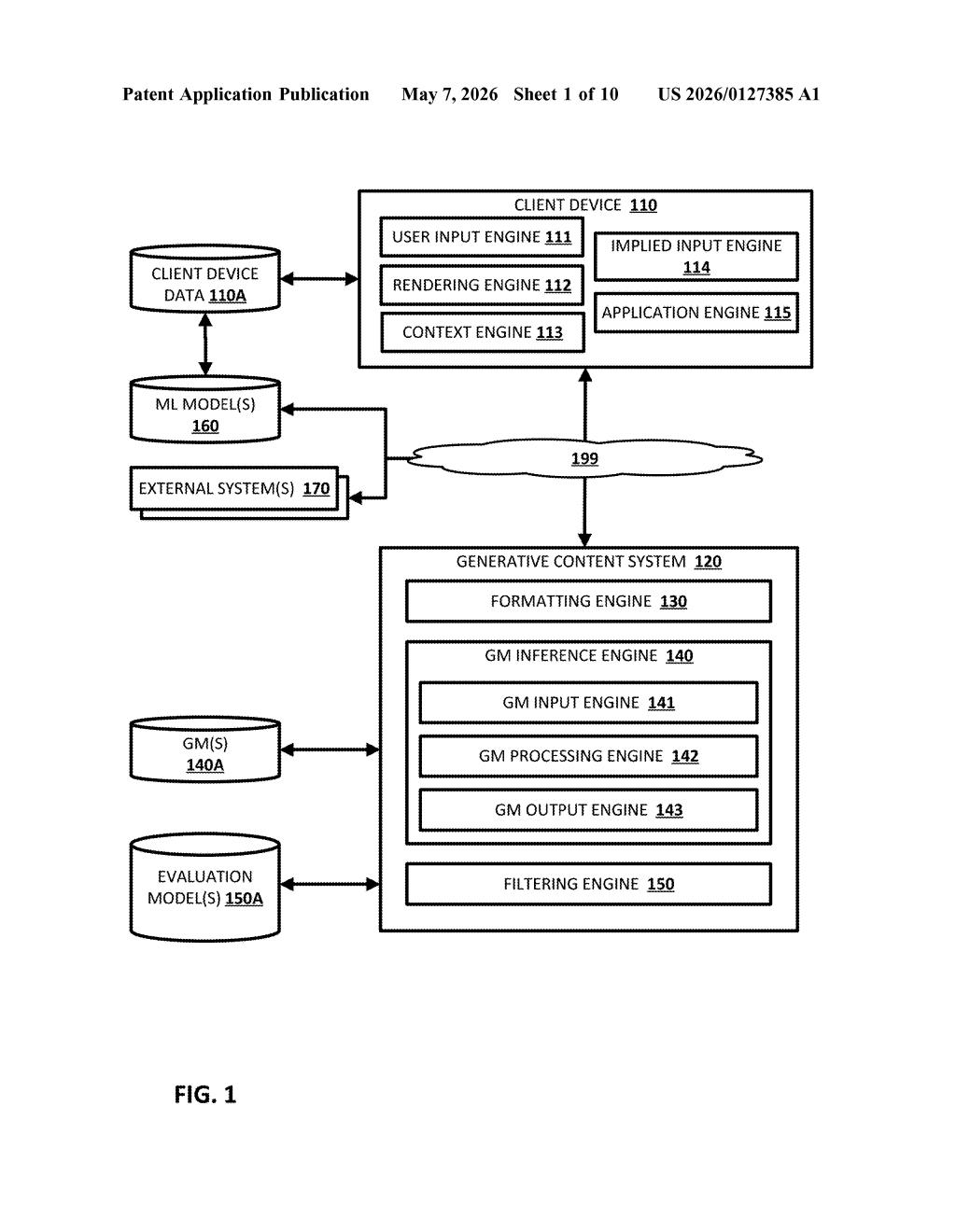

That's the core idea behind this Google patent. Your original question becomes a base prompt, which gets handed to an AI model. That model doesn't answer directly — it generates a set of extended prompts, basically richer or more specific reframings of what you asked. A filtering step trims that list, and then the AI (possibly a second, different model) generates candidate answers for each surviving prompt.

A final filtering pass picks the best answer from that pool. The result you see has passed through two rounds of filtering before reaching you: one on the expanded prompts and one on the candidate answers. The goal is to make responses more accurate, safer, or better aligned with what you actually wanted.

How the base prompt fans out into filtered candidates

The patent describes a pipeline with several distinct stages, each involving either generation or filtering:

- Base prompt construction: The system takes a user's natural language input and builds a structured base prompt from it — essentially a formatted version of the question ready for model consumption.

- Extended prompt generation: A first generative model (GM) processes that base prompt and outputs a plurality of extended prompts — multiple variations or elaborations on the original query. Think of this as the AI brainstorming different angles on your question.

- Filtering round one: Not all extended prompts are used. A filtering step winnows the set down to a subset — removing low-quality, redundant, or off-topic expansions before any answer generation happens.

- Candidate response generation: For each surviving extended prompt, either the same first GM or a separate second GM generates a candidate response. The two-model option is interesting: a cheaper model could handle prompt expansion while a more capable model writes the answers, or vice versa.

- Filtering round two: The candidate responses are filtered again, and the surviving output(s) become the final response delivered to the user.

The dual-filter architecture is the key structural novelty here. Pruning at the prompt level avoids spending compute on answers to weak prompts, and pruning at the response level keeps weak answers from reaching the user.
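To make the stages concrete, here is a minimal Python sketch of the flow described above. It is an illustration under stated assumptions, not the patent's implementation: the function names (`build_base_prompt`, `run_pipeline`), the prompt template, the fallback when every extended prompt is rejected, and the toy stand-ins for the models and filters are all hypothetical. The patent summary here does not say how the filters score anything.

```python
from typing import Callable, List


def build_base_prompt(user_input: str) -> str:
    """Wrap raw user text in a simple template (the format is an assumption)."""
    return f"Question: {user_input.strip()}"


def run_pipeline(
    user_input: str,
    expand: Callable[[str], List[str]],  # first GM: base prompt -> extended prompts
    keep_prompt: Callable[[str], bool],  # filtering round one
    respond: Callable[[str], str],       # first or second GM: prompt -> candidate
    score: Callable[[str], float],       # filtering round two (higher is better)
) -> str:
    base = build_base_prompt(user_input)
    extended = expand(base)
    kept = [p for p in extended if keep_prompt(p)]
    if not kept:
        kept = [base]  # assumption: fall back to the base prompt if nothing survives
    candidates = [respond(p) for p in kept]
    return max(candidates, key=score)


# Toy stand-ins so the sketch runs without any real model (all hypothetical).
def toy_expand(base: str) -> List[str]:
    return [base + " Explain briefly.", base + " Give an example.", "Unrelated prompt."]


def toy_keep(prompt: str) -> bool:
    return prompt.startswith("Question:")  # drop off-topic expansions


def toy_respond(prompt: str) -> str:
    return f"Answer to [{prompt}]"


def toy_score(response: str) -> float:
    return 1.0 if "example" in response else 0.0  # placeholder quality signal


final = run_pipeline("What is a base prompt?", toy_expand, toy_keep, toy_respond, toy_score)
print(final)
```

With these toy functions, the off-topic expansion is dropped in round one and never reaches the answering step, which is the compute saving the two-stage design is after. In a real system each callable would wrap a model call or a learned scorer.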

What this means for Google's AI assistant quality

For Google, this is squarely about response quality and alignment — making sure AI answers are closer to what users actually mean, not just what they literally typed. The filtering steps act as a kind of automated quality control layer that doesn't require human reviewers in the loop. That's important at the scale Google operates, where billions of queries flow through products like Search, Assistant, and Gemini.

For you as a user, the implication is subtler: responses might feel more on-point without you knowing why. The tradeoff is latency and compute cost — running multiple model passes per query is expensive. How Google balances speed against quality here will determine whether this architecture shows up in real-time consumer products or stays confined to higher-latency, higher-stakes use cases.
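A rough way to see the cost side of that tradeoff is to count model calls per query. The sketch below uses a deliberately simple cost model that is an assumption, not something from the patent: one call produces all extended prompts, each candidate response costs one call, and the filters themselves are treated as free. The function name `model_calls` is hypothetical.

```python
def model_calls(num_extended: int, num_kept: int) -> dict:
    """Count generative-model calls per user query under a simple cost model.

    Assumptions (not from the patent): a single call yields all extended
    prompts, each candidate response is one call, and filtering costs nothing.
    """
    if not 0 <= num_kept <= num_extended:
        raise ValueError("num_kept must be between 0 and num_extended")
    return {
        "single_pass": 1,                           # answer the question directly
        "with_prompt_filter": 1 + num_kept,         # expand, then answer survivors only
        "without_prompt_filter": 1 + num_extended,  # expand, then answer everything
    }


print(model_calls(num_extended=8, num_kept=3))
```

Under these assumptions, eight extended prompts filtered down to three means four model calls instead of nine, but still four times the cost of a single direct answer. If the filters are themselves model calls, the real numbers would be higher.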

This is a solid, pragmatic engineering patent rather than a conceptual leap — the idea of iterative prompt refinement and multi-stage filtering is well understood in the AI research community, and it's been explored in published work on chain-of-thought and self-consistency methods. What Google is doing here is productizing and systematizing that idea into a deployable pipeline. It's worth watching because it signals Google's intent to bake response-quality mechanisms directly into infrastructure, not just into model training.


Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.