Google Patents a Self-Correcting Listening Mode for Its Voice Assistant

When Google's voice assistant mishears you, it usually just acts on the wrong word. This patent describes a system that knows when it's probably wrong — and quietly shifts into a focused mode to hear you correctly.

What Google's phonetic self-correction actually does

Imagine you ask your voice assistant to "call Renata" and it hears "call the nada." It finds no matching contact, the call never happens, and you have to start over. The assistant had no idea it made a mistake. That's the problem Google is trying to fix here.

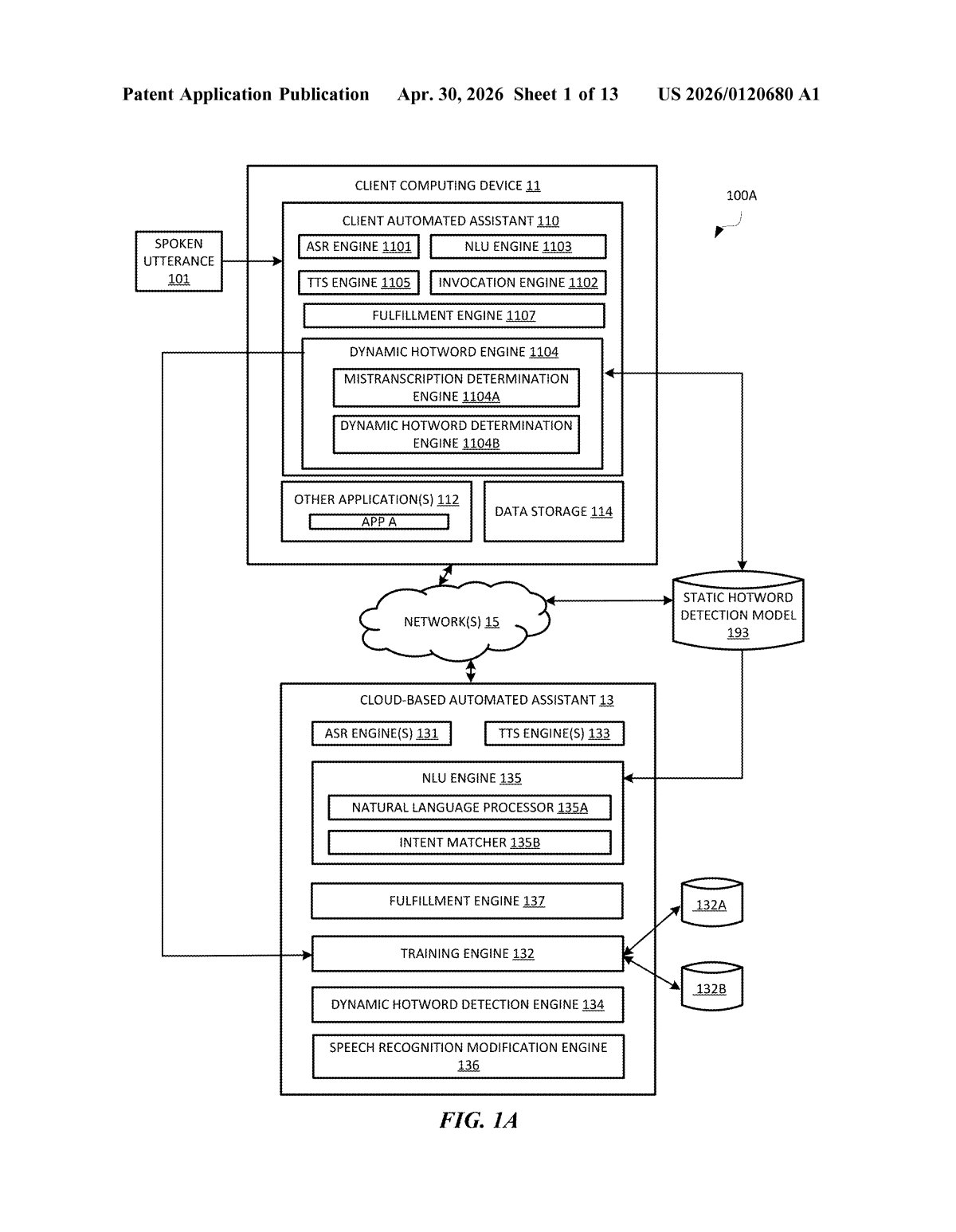

This patent describes a system where the assistant recognizes that a word or phrase in your request might have been misheard. Instead of acting on a bad transcription, it pauses and compiles a short list of words that sound similar to what it thinks it heard. Then it enters a kind of "narrow listening" mode, waiting for you to say one of those candidates — or pick one from a list on screen.

Once you confirm or say the right word, the assistant carries out the action that matches your actual intent — not the garbled version it first transcribed. It's like giving the assistant a second ear, but a smarter one.

How the assistant narrows its listening after a mis-transcription

The system works in three broad stages, which run each time the assistant transcribes a spoken request. The second and third stages only kick in if the first one flags a likely error.

Stage 1 — Error Detection: After transcribing a spoken request, the assistant evaluates whether any phrase in the output is a likely mis-transcription. The patent doesn't specify a single detection method, but the implication is that confidence scores from the speech recognition model are a key signal — if a word scores below a threshold, it's flagged as potentially wrong.
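To make the thresholding idea concrete, here is a minimal Python sketch. It is an illustration only, not the patent's method: the `Token` class, the `flag_suspect_tokens` helper, the per-word confidence values, and the 0.6 cutoff are all assumptions invented for this example.

```python
from dataclasses import dataclass


@dataclass
class Token:
    text: str
    confidence: float  # hypothetical per-word score in [0, 1]


def flag_suspect_tokens(tokens, threshold=0.6):
    """Return the tokens whose confidence falls below the threshold.

    The threshold is an assumed value; the patent does not give one.
    """
    return [t for t in tokens if t.confidence < threshold]


# Made-up scores for the misheard request "call the nada".
transcript = [Token("call", 0.97), Token("the", 0.41), Token("nada", 0.38)]
suspects = flag_suspect_tokens(transcript)
print([t.text for t in suspects])  # ['the', 'nada']
```

In this toy example, "call" is trusted while "the nada" is flagged as the phrase that needs a second look.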

Stage 2 — Candidate Generation: Once a suspect phrase is identified, the system generates phonetically similar candidate phrases (words or phrases that sound alike — think "Renata" vs. "the nada"). These candidates are drawn from contextually relevant sources, such as the user's contacts list or known app names, which helps keep the list short and useful.
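A rough sketch of candidate generation might look like the following. Real systems would compare pronunciations (phoneme sequences), which the patent does not spell out here; this example swaps in plain spelling similarity from Python's standard `difflib` module as a crude stand-in. The `similar_candidates` function, the contact names, and the 0.5 cutoff are hypothetical.

```python
from difflib import SequenceMatcher


def normalize(phrase):
    """Lowercase and keep letters only, so 'the nada' becomes 'thenada'."""
    return "".join(ch for ch in phrase.lower() if ch.isalpha())


def similar_candidates(suspect, vocabulary, min_score=0.5):
    """Rank vocabulary entries by spelling similarity to the suspect phrase.

    Spelling similarity is an assumed proxy for phonetic similarity.
    """
    scored = []
    for entry in vocabulary:
        score = SequenceMatcher(None, normalize(suspect), normalize(entry)).ratio()
        if score >= min_score:
            scored.append((score, entry))
    scored.sort(reverse=True)  # best match first
    return [entry for _, entry in scored]


contacts = ["Miguel", "Renata", "Ronald"]  # made-up contacts list
print(similar_candidates("the nada", contacts))
```

Drawing the vocabulary from a small, relevant source such as contacts is what keeps the candidate list short: here "Renata" ranks first, while an unrelated name like "Miguel" falls below the cutoff.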

Stage 3 — Phonetically Restricted Listening: The assistant enters what the patent calls a phonetically restricted listening state — essentially a mode where it filters incoming audio against only the candidate phrases. This dramatically narrows the recognition space, making it much easier to resolve the ambiguity. The user can either say the correct word aloud or select a candidate from a UI prompt. The confirmed phrase then triggers the intended action rather than the misheard one.
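The restricted state can be pictured as a matcher that only ever considers the short candidate list. The sketch below is an assumption-laden illustration: it works on text rather than audio, uses `difflib` spelling similarity in place of acoustic matching, and the `resolve_in_restricted_mode` name and 0.8 acceptance threshold are invented for the example.

```python
from difflib import SequenceMatcher


def resolve_in_restricted_mode(heard, candidates, min_score=0.8):
    """Match a follow-up utterance against the candidate list only.

    Returns the best-matching candidate, or None if nothing is close
    enough. Anything outside the candidate list can never be returned.
    """
    best, best_score = None, 0.0
    for candidate in candidates:
        score = SequenceMatcher(None, heard.lower(), candidate.lower()).ratio()
        if score > best_score:
            best, best_score = candidate, score
    return best if best_score >= min_score else None


candidates = ["Renata", "Ronald"]  # hypothetical output of the previous stage
confirmed = resolve_in_restricted_mode("renata", candidates)
if confirmed is not None:
    print(f"call {confirmed}")  # the action uses the confirmed phrase
```

Because the matcher can only return one of the candidates or nothing, an unrelated follow-up such as "pizza" is rejected rather than being forced into a wrong match. A tap on an on-screen list would bypass the matcher and supply the confirmed candidate directly.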

What this means for Google Assistant's reliability

Voice assistants have always struggled with proper nouns — names, places, app titles — precisely because these words don't follow predictable phonetic patterns and vary enormously across accents. A system that catches its own errors and asks for targeted clarification, rather than acting blindly or forcing a full re-prompt, is a meaningful upgrade to the conversational loop.

For you as a user, this could mean fewer frustrated repeated commands and less of that familiar "sorry, I didn't catch that" dead end. Google is also strategically positioning Assistant to compete with more capable AI voice interfaces — and self-aware error recovery is the kind of reliability improvement that makes voice feel less like a party trick and more like a dependable tool.

This is a focused, well-scoped fix for a real and persistent problem in voice assistants. It's not glamorous AI research; it's the kind of careful engineering that actually makes products more trustworthy. If Google ships this, it will matter most in the moments users have stopped thinking about: the ones where they give up on voice and reach for the keyboard instead.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.