Nvidia Patents a Multi-Head Neural Network for Reading Traffic Lights

Reading a traffic light sounds trivially easy — until you're the AI responsible for a two-ton vehicle and the signal is partially obscured, blinking, or showing an arrow. Nvidia's latest patent tackles that messy real-world problem with a specialized multi-head neural network.

What Nvidia's traffic light classifier actually does

Imagine you're driving and you see a traffic light. In a split second, your brain registers the color, whether it's blinking, whether it's an arrow or a circle, and whether it even applies to your lane. That's a lot of parallel processing — and it turns out teaching a computer to do the same thing isn't as simple as pointing a camera at the light.

Nvidia's patent describes a deep learning system that breaks traffic light recognition into multiple specialized tasks handled by separate "heads" on the same neural network. One head might focus on the light's color, another on its shape or orientation, another on whether it's flashing. A final "fusion head" then combines all those individual reads into a single, confident classification your self-driving car can actually act on.

The practical payoff: instead of one overloaded model trying to do everything at once, you get a team of specialists feeding their findings to a decision-maker. This is multi-task learning, a well-established pattern in machine learning, and applying it to traffic signals is a sensible engineering choice for an autonomous vehicle system.

How the component heads and fusion head divide the work

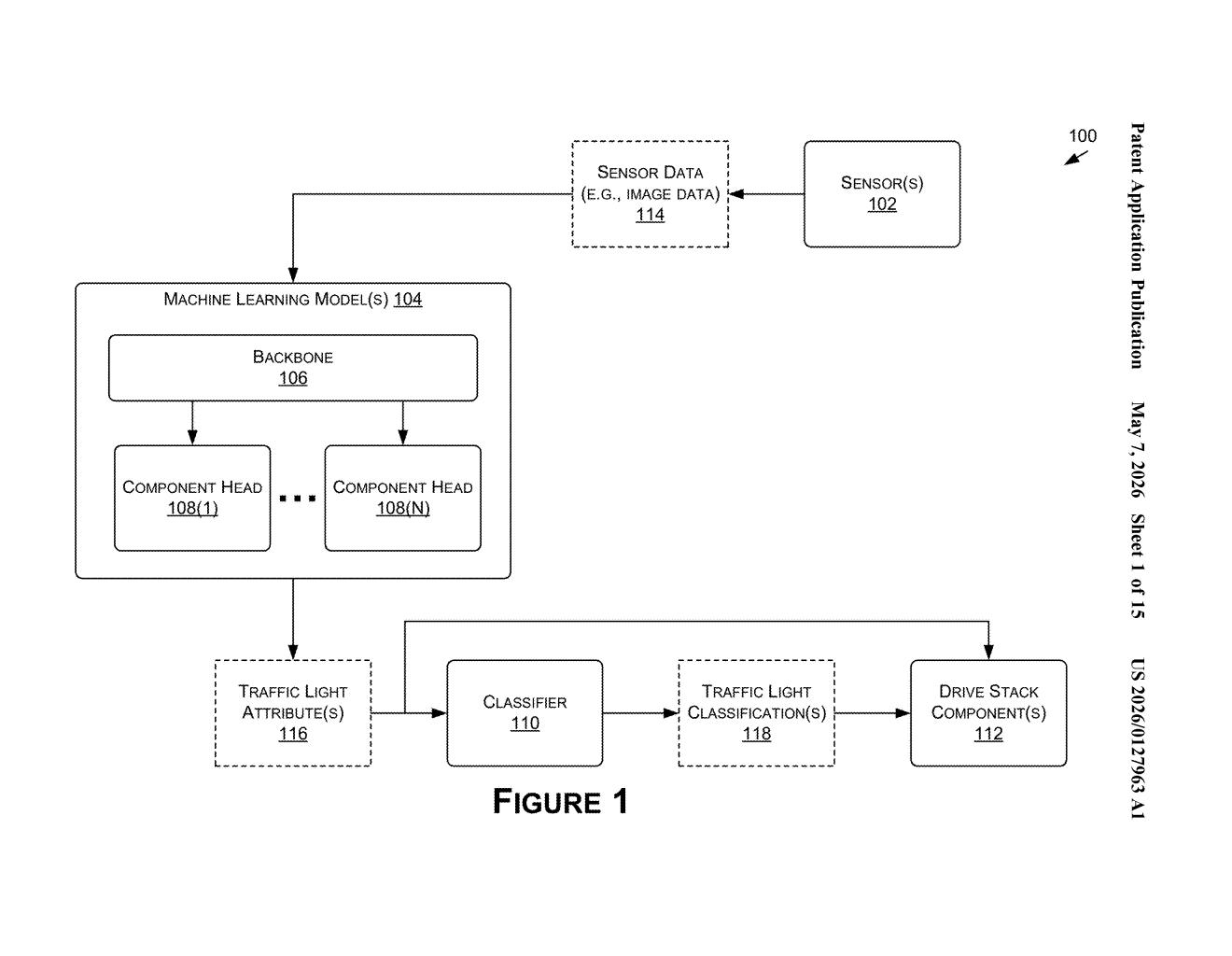

The patent describes a pipeline that starts with a camera image and ends with a vehicle control decision. Here's how the pieces fit together:

- Backbone network: A shared feature extractor (think of it as the "eyes" of the system) processes the raw image and produces a rich feature representation used by all downstream heads.

- Component heads: Multiple specialized sub-networks, each trained to detect a specific attribute of the traffic light — such as color state (red/yellow/green), signal type (arrow vs. circle), or whether the light is flashing. Each head is independently optimized for its narrow task.

- Fusion head: A final network layer that ingests the outputs from all component heads — either the attribute predictions themselves or a combined feature vector (a concatenated numerical summary of what each head learned) — and produces a unified traffic light classification.

- Drive stack integration: The classification is passed directly to the vehicle's control layer, which uses it to decide whether to stop, go, or yield.
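To make the data flow concrete, here is a minimal forward-pass sketch of the backbone, component heads, and fusion head described above. It is an illustration under stated assumptions, not Nvidia's implementation: the label sets, the layer sizes, the `MultiHeadClassifier` name, and the choice to fuse attribute predictions (rather than a combined feature vector) are all hypothetical, and the weights are random and untrained.

```python
import math
import random

# Hypothetical label sets; the article names these attributes only as examples.
COLORS = ["red", "yellow", "green"]
SHAPES = ["circle", "arrow"]
FLASHING = ["steady", "flashing"]
# Hypothetical fused classes a drive stack might consume.
FUSED = ["stop", "proceed", "proceed_with_caution"]


def linear(weights, bias, x):
    """Dense layer: one output value per weight row."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def init_layer(rng, n_out, n_in):
    """Random weights and zero biases; a stand-in for trained parameters."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


class MultiHeadClassifier:
    def __init__(self, n_pixels, n_features=8, seed=0):
        rng = random.Random(seed)
        self.backbone = init_layer(rng, n_features, n_pixels)
        self.heads = {
            "color": init_layer(rng, len(COLORS), n_features),
            "shape": init_layer(rng, len(SHAPES), n_features),
            "flashing": init_layer(rng, len(FLASHING), n_features),
        }
        fused_in = len(COLORS) + len(SHAPES) + len(FLASHING)
        self.fusion = init_layer(rng, len(FUSED), fused_in)

    def forward(self, pixels):
        # Shared backbone: one feature vector reused by every head (ReLU applied).
        features = [max(0.0, v) for v in linear(*self.backbone, pixels)]
        # Component heads: each outputs a distribution over its own attribute.
        head_probs = {
            name: softmax(linear(*layer, features))
            for name, layer in self.heads.items()
        }
        # Fusion head: concatenate the head outputs, then classify once more.
        fused_input = [p for name in ("color", "shape", "flashing") for p in head_probs[name]]
        fused_probs = softmax(linear(*self.fusion, fused_input))
        return head_probs, fused_probs


# Usage: a fake 12-value "image" stands in for real camera pixels.
model = MultiHeadClassifier(n_pixels=12)
head_probs, fused_probs = model.forward([0.1] * 12)
label = FUSED[fused_probs.index(max(fused_probs))]
```

The point of the sketch is the shape of the computation: the expensive backbone runs once per image, every head reads the same features, and only the fused label would be handed to the drive stack.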

The multi-head architecture matters because traffic lights vary enormously across geographies, intersection layouts, and lighting conditions. Training one monolithic classifier to handle all that variation is harder than training several focused heads on a shared backbone and letting a fusion head reconcile their outputs. The patent also explicitly covers training, not just inference, meaning Nvidia is claiming the end-to-end learning process, not just the runtime behavior.
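What might training all of those heads together look like? The article does not say which loss the patent uses, so the sketch below assumes a common multi-task recipe: a cross-entropy term per component head plus one for the fusion head, combined as a weighted sum. The function names, the weights, and the example probabilities are all hypothetical.

```python
import math


def cross_entropy(probs, target_index):
    """Negative log-likelihood of the correct class (clamped to avoid log(0))."""
    return -math.log(max(probs[target_index], 1e-12))


def joint_loss(head_probs, head_targets, fused_probs, fused_target,
               head_weight=1.0, fusion_weight=1.0):
    """Assumed multi-task objective: weighted sum of head and fusion losses."""
    head_term = sum(
        cross_entropy(head_probs[name], head_targets[name]) for name in head_targets
    )
    fusion_term = cross_entropy(fused_probs, fused_target)
    return head_weight * head_term + fusion_weight * fusion_term


# Usage: made-up predictions for one labeled example.
head_probs = {"color": [0.7, 0.2, 0.1], "flashing": [0.9, 0.1]}
head_targets = {"color": 0, "flashing": 0}
loss = joint_loss(head_probs, head_targets, fused_probs=[0.8, 0.1, 0.1], fused_target=0)
```

Because every term depends on the shared backbone, minimizing a sum like this pushes the backbone toward features that are useful to all heads at once, which is the usual argument for training them jointly.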

What this means for Nvidia's self-driving AI stack

Nvidia's DRIVE platform is already used by dozens of automakers and robotaxi developers. A robust, production-ready traffic light classifier is one of the foundational building blocks any autonomous vehicle stack needs, because getting the answer wrong, or getting it too late, at an intersection is a safety-critical failure. Filing this patent signals that Nvidia is deepening its IP position around the perception layer of autonomy, not just the chip hardware it's famous for.

For you as a consumer or developer, this is the kind of unglamorous but essential work that separates demo-grade autonomy from something you'd trust at a busy urban intersection. A car that can confidently parse a flashing yellow arrow in the rain is meaningfully safer than one that can't — and this architecture is Nvidia's proposed answer to that challenge.

This is solid, applied autonomy engineering: not a moonshot, but the careful perception work that production systems depend on. Multi-head fusion is a well-understood pattern in computer vision, and applying it to traffic light classification fits Nvidia's DRIVE platform ambitions. It's worth tracking as part of Nvidia's broader push to own the software and IP stack, not just the silicon.

Editorial commentary on a publicly published patent application. Not legal advice.