Nvidia Patents a Projected-Target System for Calibrating In-Cabin Sensors

Getting an in-car camera to know exactly where the driver's eyes <em>should</em> be looking is harder than it sounds — and Nvidia thinks a targeted light projector is the cleanest way to solve it.

What Nvidia's projected-target sensor calibration actually does

Imagine you're fitting a new security camera in a room and you need it to know precisely where the "danger zones" are — the doorway, the safe, the window. You could painstakingly hand-measure everything, or you could use a laser pointer to mark each corner and let the camera learn from those reference dots. That's essentially what Nvidia's patent describes, but inside a car.

Modern vehicles are packed with cameras and sensors that watch the driver and passengers — tracking eye movements, head positions, and whether you're paying attention to the road. For those systems to work correctly, the sensors need an accurate map of the cabin zones they're supposed to monitor. Nvidia's approach uses a target projector (think a precise light-pointer device) to dot each boundary point of those zones, recording the exact 3D coordinates as it goes.

Once the projector has walked the perimeter of each monitoring region, that spatial data gets translated into the sensor's own coordinate system — so the camera or radar knows precisely what it's watching. It's a calibration shortcut that could make in-cabin safety systems faster to set up and more accurate from day one.

How the projector maps 3D gaze-region boundaries to sensor coordinates

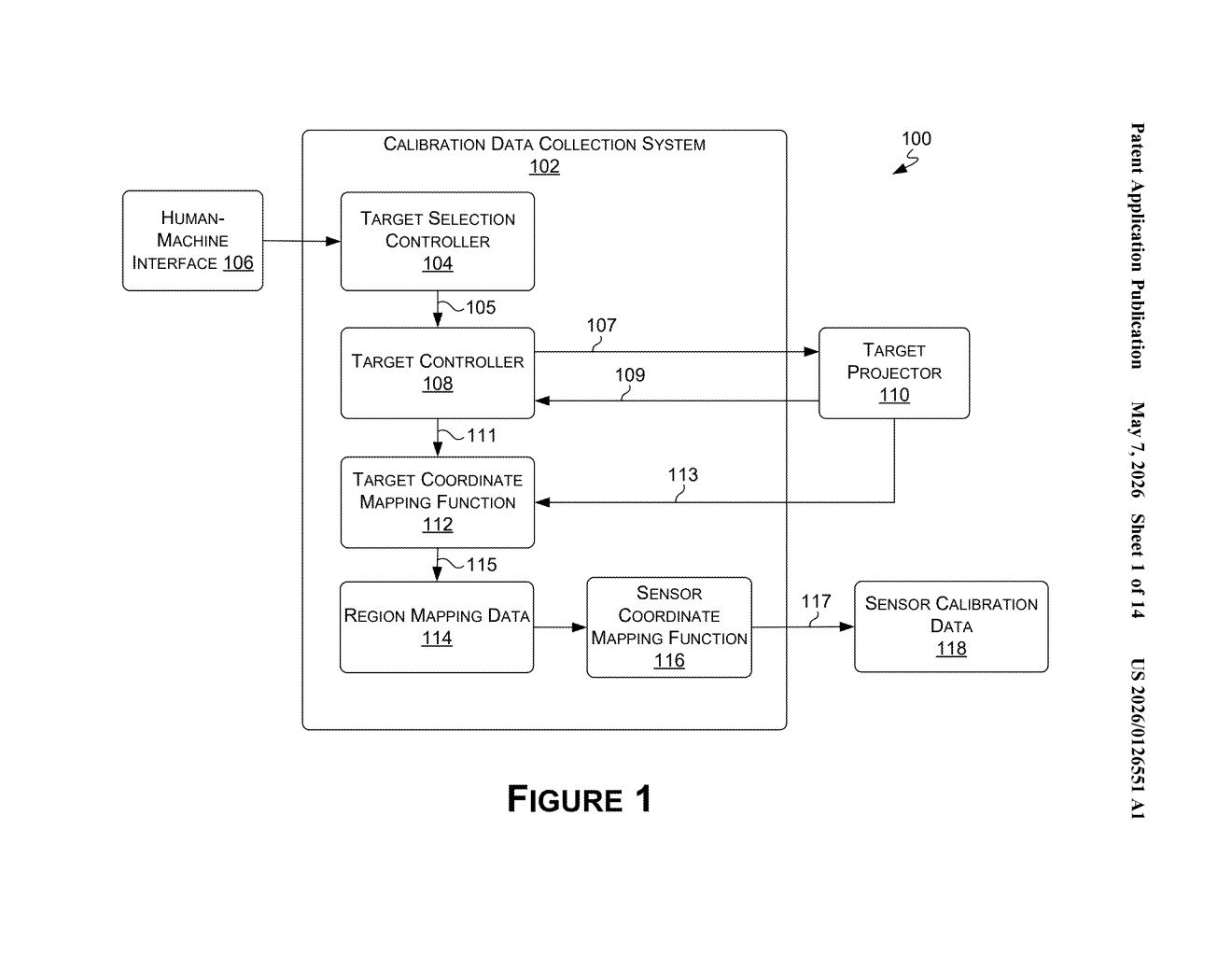

The patent describes a calibration pipeline with three main stages:

- Projection and marking: A target projector aims a visible light point at predefined boundary positions of so-called gaze regions — the areas in the cabin (driver's head zone, passenger seat, rear seat, etc.) that occupant-monitoring sensors are configured to watch.

- 3D coordinate capture: As the projector hits each boundary point, the system records the 3D position of that projected target in the projector's own coordinate system — essentially building a spatial map of every corner and edge of each monitoring zone.

- Coordinate transformation and sensor calibration: That map is then mathematically transformed so it's expressed in the coordinate system of the target sensor. The transformation is either rigid-body (rotating and translating coordinates from one reference frame to another while preserving distances) or, more generally, affine (which also allows scaling and shear). The sensor is then calibrated against this translated map.
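
To make the third stage concrete, here is a minimal sketch of a rigid-body transformation applied to one gaze region's boundary points. It is an illustration only, not the patent's implementation: the function names (`rotation_z`, `to_sensor_frame`), the corner coordinates, and the projector-to-sensor pose (a 90-degree yaw plus an offset) are all made-up assumptions. Each point is mapped as p_sensor = R · p_projector + t.

```python
import math

# Illustrative sketch only: every name and number here is a hypothetical
# assumption, not a value or API taken from the patent.

def rotation_z(yaw_rad):
    """Return a 3x3 rotation matrix for a rotation of yaw_rad about the z-axis."""
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    return [[c, -s, 0.0],
            [s, c, 0.0],
            [0.0, 0.0, 1.0]]

def to_sensor_frame(point, rotation, translation):
    """Apply p_sensor = R * p_projector + t to a single 3D point."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )

# Boundary corners of one gaze region, as recorded in the projector's
# coordinate system (metres, made-up values).
region_projector = [
    (0.0, 0.0, 1.0),
    (0.5, 0.0, 1.0),
    (0.5, 0.25, 1.0),
    (0.0, 0.25, 1.0),
]

# Assumed pose of the projector frame relative to the sensor frame.
R = rotation_z(math.pi / 2)
t = (0.1, -0.2, 0.0)

# The same region, now expressed in the sensor's coordinate system.
region_sensor = [to_sensor_frame(p, R, t) for p in region_projector]

for corner in region_sensor:
    print(tuple(round(v, 3) for v in corner))
```

Because a rigid-body transformation only rotates and shifts, the region keeps its size and shape: the 0.5 m edge between the first two corners is still 0.5 m long in the sensor frame. An affine transformation would use a general 3x3 matrix in place of `R` and would not guarantee that.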

The key insight is that the projector acts as a trusted spatial reference device. Rather than manually measuring cabin geometry with rulers or relying on factory CAD data that may not match real-world assembly tolerances, the system measures ground-truth 3D boundary data inside the actual cabin. That should make it adaptable to different vehicle models and sensor mounting positions without re-engineering the calibration workflow from scratch.

What this means for in-cabin driver and occupant monitoring

Driver monitoring systems (DMS) are becoming mandatory in new vehicles under regulations in the EU and increasingly elsewhere, and occupant monitoring systems (OMS) are spreading alongside them. They're what alert you when you're drowsy or distracted, and some also check whether occupants are belted in. The accuracy of those systems depends heavily on how well the sensors are calibrated to the actual geometry of each car's interior. A sloppy calibration means false alerts, missed events, or a system that simply doesn't work reliably.

For Nvidia, which supplies the DRIVE platform powering in-cabin AI in vehicles from several automakers, a faster and more precise calibration method could be a real competitive advantage. It could reduce time on the manufacturing line, improve consistency across vehicle variants, and make post-repair recalibration (for example, after a sensor gets replaced at a dealership) much more straightforward for technicians.

This is unglamorous but genuinely useful engineering. Sensor calibration is one of those problems that doesn't get headlines but quietly determines whether a safety feature works or doesn't. Tackling it with a projector-based spatial-reference approach is the kind of practical, production-line-friendly thinking that separates shipping hardware from demo hardware.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.