OpenAI Patents a System for Forcing AI Responses Into Structured Formats

When you ask an AI to return JSON and it returns... a paragraph of prose instead, that's a real problem for production software. OpenAI is patenting a method to make sure that never happens.

What OpenAI's structured output enforcement actually does

Imagine you're building an app that asks an AI to fill out a form — customer name, order total, shipping address — and return it as clean, machine-readable data. The AI is helpful, but sometimes it writes a friendly sentence instead of a structured list. Your app breaks. This is one of the most common headaches developers face when using AI in real products.

OpenAI's patent application describes a system where you send a request to an AI model along with a schema: a blueprint that defines exactly what the output must look like. The model is then constrained to produce only output that matches that blueprint, with no improvisation allowed.

Think of it like giving a fill-in-the-blanks form instead of a blank sheet of paper. The AI can still be creative within each field, but it can't go off-script and hand you a cover letter when you asked for a spreadsheet.

How the schema constrains the generative engine's output

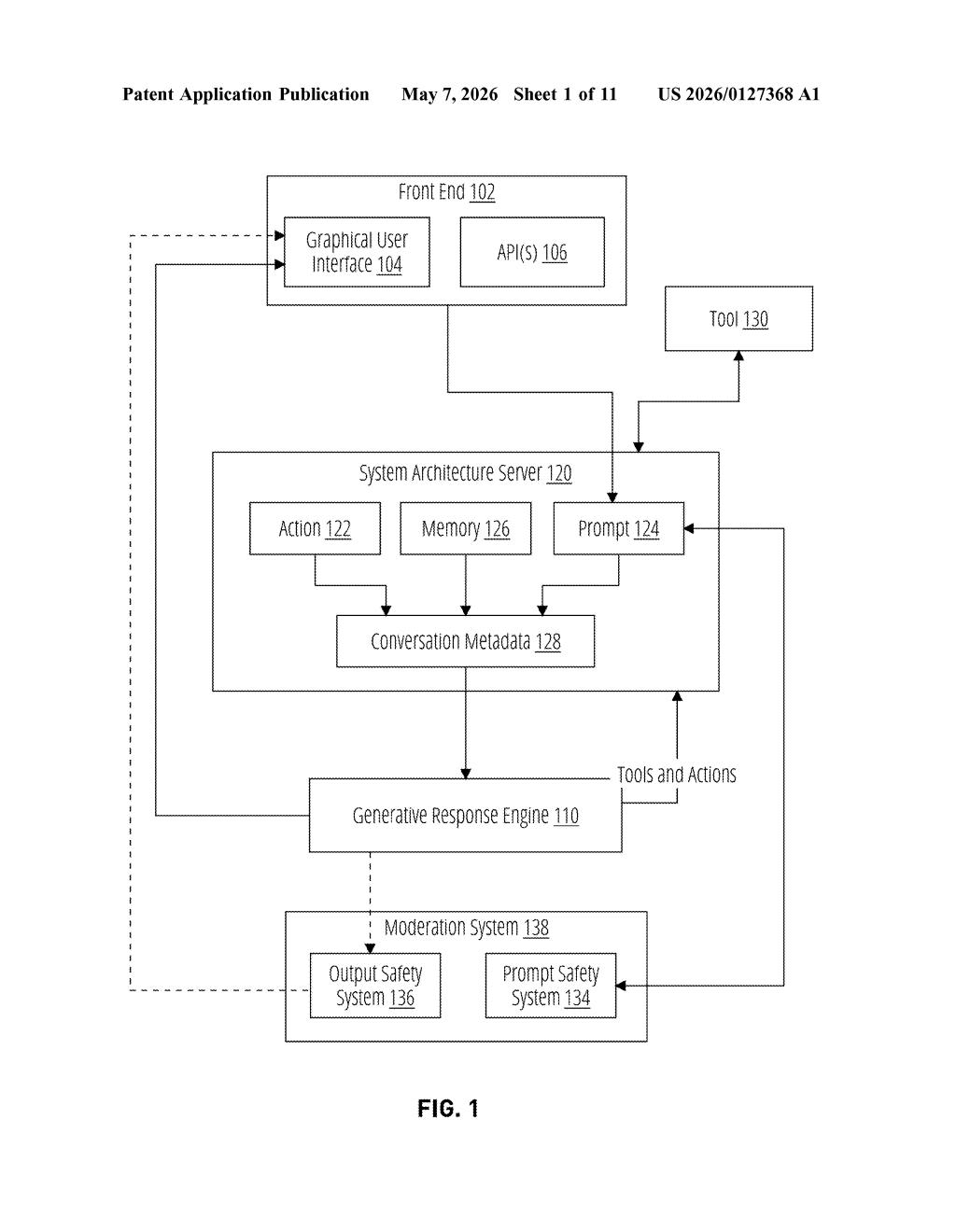

The patent describes a generative response engine — essentially a large language model — that receives a request bundled with a schema. A schema is a formal definition of a data structure: think JSON Schema, which might specify that a response must be an object with a "name" field (string), an "age" field (integer), and a "tags" field (array of strings).
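As a rough illustration, the schema described above could be written as a JSON Schema like the one below, shown here as a Python dict. The `required` and `additionalProperties` settings and the `conforms` helper are assumptions added for this example, not details from the patent, and the helper understands only the few keywords used here.

```python
import json

# The "name" / "age" / "tags" structure from the paragraph above.
PERSON_SCHEMA = {
    "type": "object",
    "properties": {
        "name": {"type": "string"},
        "age": {"type": "integer"},
        "tags": {"type": "array", "items": {"type": "string"}},
    },
    "required": ["name", "age", "tags"],   # assumption: every field is mandatory
    "additionalProperties": False,          # assumption: no extra fields allowed
}


def conforms(value, schema):
    """Check a value against a tiny, hand-picked subset of JSON Schema."""
    kind = schema["type"]
    if kind == "string":
        return isinstance(value, str)
    if kind == "integer":
        # bool is a subclass of int in Python, so exclude it explicitly
        return isinstance(value, int) and not isinstance(value, bool)
    if kind == "array":
        return isinstance(value, list) and all(
            conforms(item, schema["items"]) for item in value
        )
    if kind == "object":
        if not isinstance(value, dict):
            return False
        props = schema.get("properties", {})
        if any(key not in value for key in schema.get("required", [])):
            return False
        if schema.get("additionalProperties") is False and any(
            key not in props for key in value
        ):
            return False
        return all(conforms(value[key], props[key]) for key in value if key in props)
    return False


good = json.loads('{"name": "Ada", "age": 36, "tags": ["math", "engines"]}')
print(conforms(good, PERSON_SCHEMA))                                        # True
print(conforms({"name": "Ada", "age": "36", "tags": []}, PERSON_SCHEMA))    # False
```

A check like this runs after the model has already answered. The patent's claim is about making a failed check impossible in the first place.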

The core claim is that the engine generates output guaranteed to conform to that schema. This goes beyond prompt engineering (just telling the model "please return JSON"): it implies a structural enforcement mechanism, likely operating at the token-generation level. In that approach, the model's next-token probabilities are filtered during inference so that only tokens that keep the output on a valid path toward a conforming structure can be selected. The technique is usually called constrained decoding or grammar-based sampling.

- The request includes both the content prompt and a formal schema definition.

- The engine generates a response token by token, constrained to valid completions of the schema.

- A safety system is also referenced in the patent diagram, suggesting moderation still runs in parallel.

This appears to be the architecture behind OpenAI's publicly available Structured Outputs feature in the API, which launched in 2024.
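To make the token-filtering idea concrete, here is a toy sketch of constrained decoding. Everything in it is invented for illustration: the six-token vocabulary, the made-up scores in `toy_scores`, and the hand-written `allowed_tokens` rules for a single fixed shape, `{"age": <digits>}`. It is not OpenAI's implementation. A real system would derive the allowed-token sets from the schema rather than hard-code them.

```python
import json


def toy_scores(prefix):
    """Hypothetical stand-in for a model's next-token scores (made-up numbers)."""
    digits_so_far = sum(tok.isdigit() for tok in prefix)
    return {
        "Sure!": 5.0,                        # the model's favourite: chatty prose
        " The age is": 4.0,
        '{"age":': 1.0,
        "4": 3.0 - 2.0 * digits_so_far,
        "2": 2.0 - 0.4 * digits_so_far,
        "}": 0.5 + 1.0 * digits_so_far,      # closing gets likelier as digits pile up
    }


def allowed_tokens(prefix):
    """Tokens that keep the output on a valid path toward {"age": <digits>}."""
    if not prefix:
        return {'{"age":'}                  # must open the object first
    if prefix[-1] == "}":
        return set()                        # structure is complete: stop
    if prefix[-1] == '{"age":':
        return {"4", "2"}                   # at least one digit is required
    return {"4", "2", "}"}                  # more digits, or close the object


def constrained_decode(max_steps=10):
    """Greedy decoding with every schema-breaking token masked out."""
    prefix = []
    for _ in range(max_steps):
        allowed = allowed_tokens(prefix)
        if not allowed:
            break
        scores = toy_scores(prefix)
        masked = {tok: s for tok, s in scores.items() if tok in allowed}
        prefix.append(max(masked, key=masked.get))
    return "".join(prefix)


unconstrained_first = max(toy_scores([]), key=toy_scores([]).get)
print(unconstrained_first)      # Sure!
output = constrained_decode()
print(output)                   # {"age":42}
print(json.loads(output))       # {'age': 42}
```

Left alone, the toy model's top pick is prose. With the mask applied, prose tokens are never candidates, so the result parses no matter how strongly the model favours them.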

What this means for developers building on top of AI APIs

For developers, reliable structured output is the difference between an AI feature that ships and one that stays in prototype. Every time an AI returns malformed JSON or ignores a required field, someone has to write error-handling code, retry logic, or worse — hand-check outputs. Guaranteed schema conformance removes an entire category of production bugs.
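For a sense of what that defensive code tends to look like, here is a minimal sketch of a parse-check-retry loop. The field names come from the order-form example earlier in this piece. `make_flaky_model` is a hypothetical stand-in for a real API call, and its canned replies are invented for the demo.

```python
import json

REQUIRED_FIELDS = {"customer_name", "order_total", "shipping_address"}


def parse_order(raw):
    """Return the parsed order dict, or None if the reply is unusable."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None                      # prose or malformed JSON
    if not isinstance(data, dict) or not REQUIRED_FIELDS.issubset(data):
        return None                      # valid JSON, but a required field is missing
    return data


def get_order(call_model, prompt, max_attempts=3):
    """Call the model until a reply parses, up to max_attempts times."""
    for attempt in range(1, max_attempts + 1):
        order = parse_order(call_model(prompt))
        if order is not None:
            return order, attempt
    raise ValueError(f"no usable reply after {max_attempts} attempts")


def make_flaky_model():
    """Hypothetical model: prose first, then a missing field, then a good reply."""
    replies = iter([
        "Sure! The order is for Ada Lovelace, total $42.50.",
        '{"customer_name": "Ada Lovelace", "order_total": 42.5}',
        '{"customer_name": "Ada Lovelace", "order_total": 42.5, '
        '"shipping_address": "12 Example St"}',
    ])
    return lambda prompt: next(replies)


order, attempts = get_order(make_flaky_model(), "Extract the order as JSON.")
print(attempts)                  # 3
print(order["order_total"])      # 42.5
```

With guaranteed schema conformance, the retry loop and the missing-field check become unnecessary. Checks on whether the values themselves are correct would still be the application's job.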

From a competitive standpoint, structured output is now a baseline expectation for enterprise AI APIs. By patenting the underlying enforcement technique, OpenAI is staking a formal claim on the mechanism — not just the product feature. Whether that claim is broad enough to matter depends heavily on how the patent office interprets the scope, but it signals OpenAI is treating core API infrastructure as protectable IP, not just a developer convenience.

This application is essentially OpenAI filing paperwork around a feature that already exists and that competitors like Google and Anthropic also offer. The independent claim as written is very broad ('receive schema, generate conforming output'), which will almost certainly face prior art scrutiny during examination. It's more of a flag-planting exercise than a genuinely novel disclosure.

Editorial commentary on a publicly published patent application. Not legal advice.