Meta Patents a Real-Time Mesh Overlay That Keeps AR Headset Users From Walking Into Walls

Meta has filed a patent application for a system that watches for real-world objects getting too close to your AR headset and renders a visible 3D mesh of them directly into your virtual view, so you see the wall before you walk into it.

What Meta's collision-boundary mesh actually does

Imagine you're deep in an AR experience — maybe watching a virtual presentation or playing a game — and you slowly drift toward your actual living-room couch without realizing it. Normally, you'd just bump into it. Meta's patent describes a system designed to prevent exactly that.

The headset's cameras continuously scan the physical environment around you. When a real object — a table, a wall, a person — gets within a set danger distance, the headset generates a 3D mesh (think: a wireframe or semi-transparent shell) of that object and projects it right into your AR view at the correct position and angle. It looks like the object is inside your virtual world, because in a sense it now is.

As you move, the mesh is redrawn from your new viewpoint, so it always reflects the object's true location in the room. Step closer and the mesh stays accurate. Step away past the safe threshold, and it can fade out. It's a spatial safety net that tries to keep the virtual and physical worlds from colliding, literally.

How the stereo cameras build and track the safety mesh

The system is built around a concept called a predefined interaction boundary — a configurable distance threshold that, when breached by a nearby physical object, triggers the mesh rendering pipeline.
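
To make the threshold idea concrete, here is a minimal Python sketch of a distance check with a fade-out band. Everything in it is an illustrative assumption rather than something taken from the patent: the function names, the 1.0 m boundary, the 0.5 m fade band, and the linear fade are all hypothetical.

```python
import math

def nearest_distance(user_pos, object_points):
    """Smallest straight-line distance from the user to any sampled point on an object."""
    return min(math.dist(user_pos, p) for p in object_points)

def mesh_opacity(distance_m, boundary_m=1.0, fade_band_m=0.5):
    """Opacity of the safety mesh for an object at the given distance.

    Fully opaque inside the boundary, fully transparent beyond
    boundary + fade band, and a linear ramp in between (an assumed design).
    """
    if distance_m <= boundary_m:
        return 1.0
    if distance_m >= boundary_m + fade_band_m:
        return 0.0
    return 1.0 - (distance_m - boundary_m) / fade_band_m

# Hypothetical scene: the user at the origin and three sampled points on a couch.
user = (0.0, 0.0, 0.0)
couch_points = [(0.8, 0.0, 0.0), (0.8, 0.0, 0.5), (1.2, 0.0, 0.0)]

couch_distance = nearest_distance(user, couch_points)
print(couch_distance, mesh_opacity(couch_distance))
```

A fade band like this is one plausible way to avoid the mesh flickering on and off when you hover right at the boundary; the patent may handle that differently.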

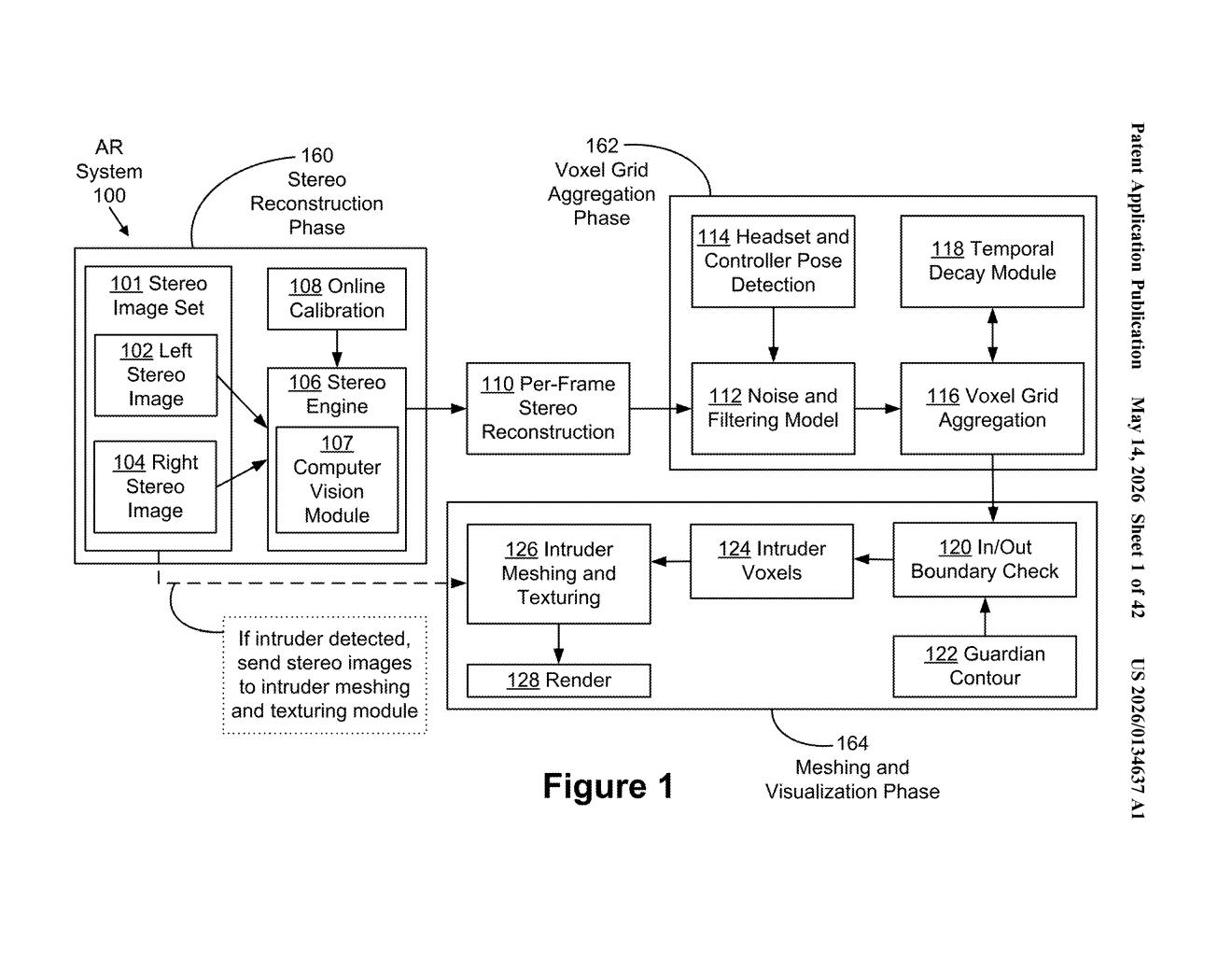

On the hardware side, the headset uses stereo cameras (two cameras whose slight offset lets the system calculate depth, the same way your two eyes do) to capture the environment continuously. A stereo reconstruction engine processes these image pairs frame-by-frame to build a voxel grid — a 3D grid of small cubes representing occupied space, similar to a Minecraft-style spatial map of reality.
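
For a feel of how two offset cameras turn into a voxel grid, the sketch below uses the standard pinhole stereo relation (depth = focal length × baseline / disparity) and then buckets each 3D point into a cube. The camera numbers, the 5 cm voxel size, and all function names are assumptions chosen for illustration; the patent does not specify them.

```python
import math

def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Standard stereo relation: depth = focal length * baseline / disparity."""
    if disparity_px <= 0:
        return None  # no valid stereo match for this pixel
    return focal_px * baseline_m / disparity_px

def back_project(u, v, depth_m, focal_px, cx, cy):
    """Turn pixel (u, v) with a known depth into a 3D point in the camera frame."""
    x = (u - cx) * depth_m / focal_px
    y = (v - cy) * depth_m / focal_px
    return (x, y, depth_m)

def voxel_index(point, voxel_size_m):
    """Integer index of the cube that contains the point."""
    return tuple(math.floor(c / voxel_size_m) for c in point)

# Hypothetical camera parameters and grid resolution.
FOCAL_PX, BASELINE_M, CX, CY = 500.0, 0.1, 320.0, 240.0
VOXEL_SIZE_M = 0.05

# (u, v, disparity) samples; the last one has no valid match and is skipped.
samples = [(320, 240, 48.0), (420, 240, 48.0), (320, 240, 0.0)]

occupied = set()
for u, v, disparity in samples:
    depth = depth_from_disparity(disparity, FOCAL_PX, BASELINE_M)
    if depth is None:
        continue
    point = back_project(u, v, depth, FOCAL_PX, CX, CY)
    occupied.add(voxel_index(point, VOXEL_SIZE_M))

print(sorted(occupied))
```

Storing only the occupied cubes in a set keeps the map sparse, which matters when most of a room is empty air. A real reconstruction engine would compute disparity per pixel from the image pair; here the disparities are simply given.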

The pipeline includes several key stages:

- Online calibration — keeps the camera parameters accurate as conditions change

- Temporal decay module — fades out old voxel data so stale obstacles don't linger in the mesh

- Noise and filtering model — removes spurious detections from the depth data

- In/Out boundary check — classifies voxels as inside or outside the safe zone, flagging "intruder" objects that cross the threshold
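
Two of these stages, temporal decay and the in/out check, are easy to sketch in Python. The version below is only a guess at the general shape: the per-voxel confidence score, the 0.8 decay factor, the 0.2 cutoff, and the function names are all hypothetical, and calibration and noise filtering are left out.

```python
import math

def decay_and_prune(voxels, decay=0.8, min_confidence=0.2):
    """Age every voxel by one frame and drop those whose confidence falls below the cutoff."""
    return {idx: conf * decay
            for idx, conf in voxels.items()
            if conf * decay >= min_confidence}

def observe(voxels, seen_indices):
    """Refresh voxels that the cameras saw again in the current frame."""
    for idx in seen_indices:
        voxels[idx] = 1.0
    return voxels

def find_intruders(voxels, user_pos, boundary_m, voxel_size_m):
    """Indices of voxels whose centers lie inside the interaction boundary."""
    hits = []
    for idx in voxels:
        center = tuple((i + 0.5) * voxel_size_m for i in idx)
        if math.dist(center, user_pos) <= boundary_m:
            hits.append(idx)
    return sorted(hits)

# Hypothetical map: a nearby voxel, a far one, and a stale one about to expire.
voxels = {(0, 0, 10): 1.0, (0, 0, 40): 1.0, (5, 0, 5): 0.24}

voxels = decay_and_prune(voxels)          # the stale voxel drops out
voxels = observe(voxels, [(0, 0, 10)])    # the nearby voxel is seen again
intruders = find_intruders(voxels, (0.0, 0.0, 0.0), boundary_m=1.0, voxel_size_m=0.05)

print(intruders)
```

In this toy run, the stale voxel disappears, the far voxel keeps aging, and only the nearby voxel is flagged as an intruder.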

When an intruder is detected, the system triggers meshing and texturing of the relevant voxels — converting raw spatial data into a smooth, displayable surface. This mesh is then rendered at the correct angle and position on the headset's displays, tracking the user's movement in real time so the overlay never drifts from the actual object's location.
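
The "never drifts" part comes down to re-expressing world-anchored mesh vertices in the headset's frame every time the pose changes. Here is a minimal sketch, assuming a simplified pose (position plus yaw only) and a frame convention of x right, y up, z forward; both are assumptions for illustration, and a real headset tracks full 3D orientation.

```python
import math

def world_to_headset(vertex, head_pos, head_yaw_rad):
    """Express a world-space vertex in the headset frame (x right, y up, z forward).

    Yaw is a rotation about the vertical axis; at yaw 0 the headset looks along world +z.
    """
    dx = vertex[0] - head_pos[0]
    dy = vertex[1] - head_pos[1]
    dz = vertex[2] - head_pos[2]
    c, s = math.cos(head_yaw_rad), math.sin(head_yaw_rad)
    # Apply the inverse of the headset's yaw rotation to the offset.
    return (dx * c - dz * s, dy, dx * s + dz * c)

# A hypothetical mesh vertex on a wall 2 m in front of the starting position.
wall_vertex = (0.0, 0.0, 2.0)

standing_still = world_to_headset(wall_vertex, (0.0, 0.0, 0.0), 0.0)
stepped_forward = world_to_headset(wall_vertex, (0.0, 0.0, 1.0), 0.0)
turned_right = world_to_headset(wall_vertex, (0.0, 0.0, 0.0), math.pi / 2)

print(standing_still, stepped_forward, turned_right)
```

The vertex itself never changes; only its coordinates relative to your head do. Step forward and the wall is 1 m ahead instead of 2 m; turn right and it ends up 2 m to your left.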

What this means for untethered AR headset safety

Passthrough safety systems already exist in devices like the Meta Quest, but they typically work by switching to a camera feed or showing a simple guardian boundary grid. This patent describes something more spatially precise: a persistent, geometry-aware mesh of real objects rendered contextually inside the AR experience, not as an interruption to it. That's a meaningful difference for immersive AR, where breaking presence is costly.

For you as a user, this could mean AR experiences that feel safer in everyday cluttered spaces — not just in cleared living rooms. It also signals Meta's continued investment in the hard perceptual problem of blending real and virtual space without requiring you to stop and look around every few seconds.

This is genuinely useful, unsexy engineering — the kind of safety infrastructure that has to exist before untethered AR becomes something people actually trust in their homes. It's not a headline feature, but it's the sort of foundational patent that will matter a lot if Meta's AR glasses ever move beyond controlled demos into daily use.

Editorial commentary on a publicly published patent application. Not legal advice.