Nvidia Patents a Geometry-Guided Radar Tracking System for Autonomous Machines

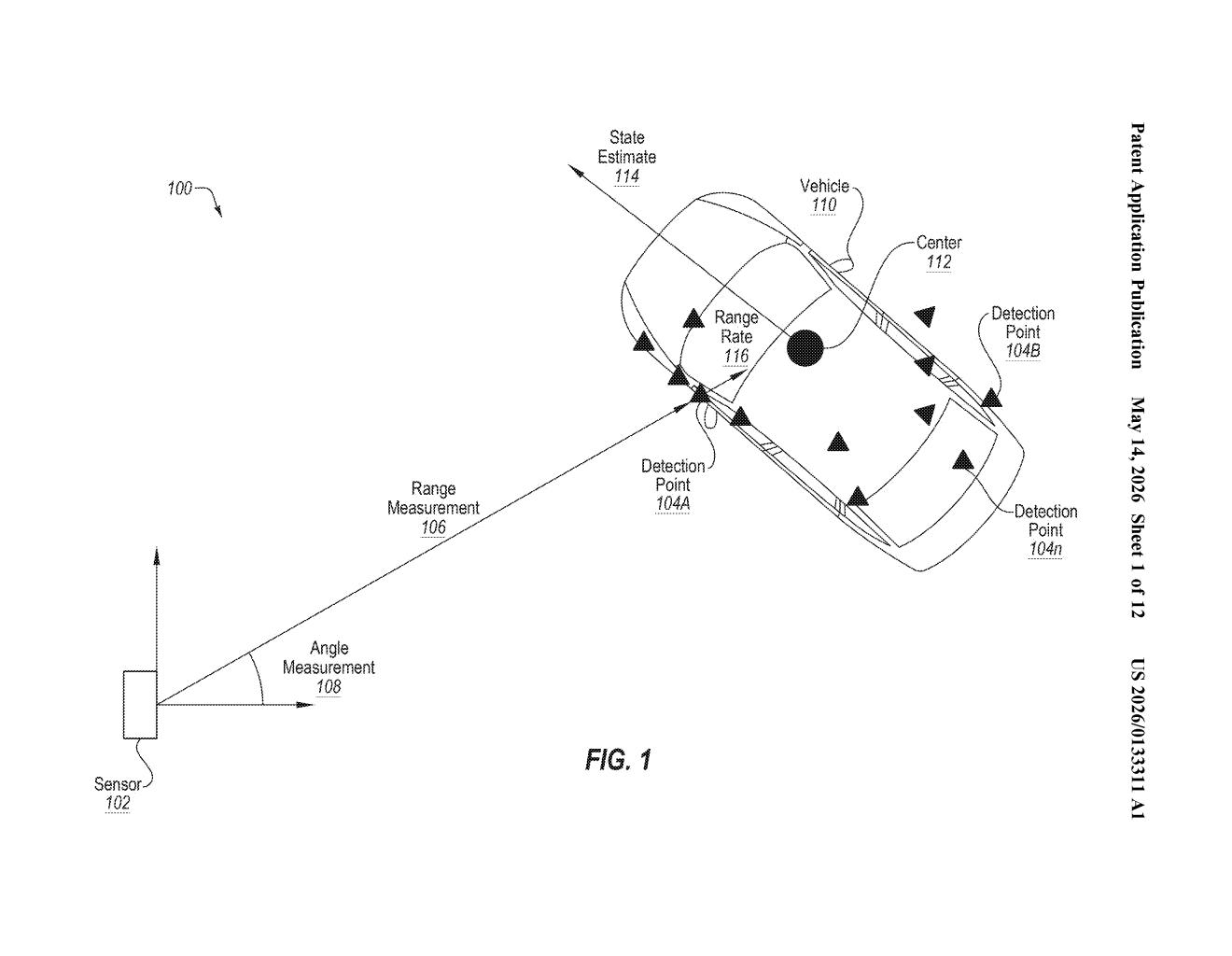

Radar is noisy: a single return might come from anywhere on a truck's surface. Nvidia's patent describes a system that uses an object's predicted geometry to work out which part of the object a radar return is bouncing off, so the tracker can place the object as a whole more precisely.

What Nvidia's radar bounding-shape tracker actually does

Imagine you're trying to follow a delivery truck in front of you using radar. Radar doesn't see the truck as a neat rectangle — it sees a messy scatter of signal reflections bouncing off whatever surfaces happen to face your sensor. One ping might come from the tailgate, another from the side mirror, and a third from somewhere ambiguous. Your tracker can easily get confused.

Nvidia's approach wraps a virtual bounding shape around the detected object — think of it like drawing a box around the truck that represents its estimated size and orientation. The system then uses the edges and corners of that box as reference points. When a new radar return comes in, the system asks: "Which part of that box would produce a reflection in this position?" That answer is used to refine where the tracker thinks the object actually is.

The result is a tighter, more stable position estimate for the object as a whole — even when individual radar hits are scattered or unreliable. Crucially, this estimate then feeds into whatever decisions a machine (like a self-driving car) needs to make next.

How reference edges and state estimates sharpen radar hits

The patent describes a multi-step pipeline for turning raw radar point-cloud data into a reliable object position estimate.

Step 1 — Build the bounding shape: The system first identifies reference portions of a bounding shape (a geometric approximation of the tracked object). These include a first reference edge, a second reference edge, and importantly the corner where those edges intersect. Think of it as knowing the rough outline of a car or truck before you worry about where exactly a radar ping landed.
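To make the geometry concrete, here is a minimal sketch of a 2D oriented box and its reference portions. The names `Box`, `corners`, and `reference_portions` are hypothetical, and choosing the corner nearest the sensor (plus the two edges that meet there) is an assumption made for illustration, not something the patent is confirmed to specify.

```python
import math
from dataclasses import dataclass


@dataclass
class Box:
    """Hypothetical 2D bounding shape: center, size, and heading."""
    cx: float      # center x in the sensor frame (m)
    cy: float      # center y in the sensor frame (m)
    length: float  # extent along the heading direction (m)
    width: float   # extent across the heading direction (m)
    yaw: float     # heading in radians


def corners(box):
    """Return the four box corners in the sensor frame, in perimeter order."""
    c, s = math.cos(box.yaw), math.sin(box.yaw)
    hl, hw = box.length / 2, box.width / 2
    local = [(hl, hw), (hl, -hw), (-hl, -hw), (-hl, hw)]
    return [(box.cx + c * x - s * y, box.cy + s * x + c * y) for x, y in local]


def reference_portions(box, sensor=(0.0, 0.0)):
    """Pick a reference corner and the two edges that meet at it.

    Assumption: the corner closest to the sensor is used, since the two
    faces adjacent to it are the ones most likely to reflect radar energy.
    """
    pts = corners(box)
    i = min(range(4), key=lambda k: math.dist(pts[k], sensor))
    corner = pts[i]
    first_edge = (corner, pts[(i + 1) % 4])
    second_edge = (corner, pts[(i - 1) % 4])
    return first_edge, second_edge, corner
```

Each edge is stored as a pair of endpoints that starts at the shared corner, which keeps the later matching step simple.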

Step 2 — Match radar hits to reference portions: When a radar return comes in (a "position measurement"), the system determines which reference portion on the bounding shape would geometrically correspond to that measurement. This is the key insight: instead of treating a radar point as a raw coordinate, you interpret it relative to the object's known structure.
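One simple way to picture the matching step is a nearest-feature test: project the radar point onto each reference edge and keep whichever feature is closest. This is a sketch under assumptions. The function names, the `corner_tol` threshold, and the nearest-distance rule are illustrative stand-ins rather than the patent's actual association logic.

```python
import math


def closest_point_on_segment(p, a, b):
    """Return the point on segment a-b that is closest to p."""
    ax, ay = a
    bx, by = b
    px, py = p
    dx, dy = bx - ax, by - ay
    denom = dx * dx + dy * dy
    # Projection parameter along the segment, clamped to stay on it.
    t = 0.0 if denom == 0 else ((px - ax) * dx + (py - ay) * dy) / denom
    t = max(0.0, min(1.0, t))
    return (ax + t * dx, ay + t * dy)


def match_reference_portion(measurement, corner, first_end, second_end,
                            corner_tol=0.1):
    """Decide which reference portion a radar point most plausibly hit.

    The two edges run corner -> first_end and corner -> second_end.
    Returns (label, expected_point), where label is "first_edge",
    "second_edge", or "corner". corner_tol (m) is a hypothetical threshold.
    """
    candidates = []
    for label, end in (("first_edge", first_end), ("second_edge", second_end)):
        point = closest_point_on_segment(measurement, corner, end)
        candidates.append((math.dist(measurement, point), label, point))
    _, label, point = min(candidates, key=lambda c: c[0])
    # If the best projection collapses onto the shared corner, call it a corner hit.
    if math.dist(point, corner) <= corner_tol:
        return "corner", corner
    return label, point
```

For a box whose near corner sits at (8, 1), a return at (7.8, 2.0) matches the first edge at the point (8, 2), while a return at (7.9, 0.9) is treated as a corner hit.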

Step 3 — Compute an expected position: Using that reference portion, the system calculates an expected position — where on the object's body the radar return most plausibly originated. This is combined with a state estimate (a running prediction of the object's position, velocity, and heading — similar to a Kalman filter update) to produce a refined position estimate.
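The Kalman comparison can be sketched with a deliberately simplified update. The sketch assumes the state is just the box center with an independent variance per axis, and that the offset from the center to the matched reference portion stays fixed during the update. A real tracker would carry velocity, heading, and a full covariance matrix. `AxisState`, `kalman_update_1d`, and `refine_center` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class AxisState:
    """Estimate of the box center along one axis."""
    mean: float  # estimated coordinate (m)
    var: float   # variance of that estimate (m^2)


def kalman_update_1d(state, innovation, meas_var):
    """Standard scalar Kalman measurement update."""
    gain = state.var / (state.var + meas_var)
    return AxisState(mean=state.mean + gain * innovation,
                     var=(1.0 - gain) * state.var)


def refine_center(center_x, center_y, offset, measurement, meas_var):
    """Refine the box center from one radar return.

    offset is the vector from the box center to the matched reference
    portion, so the expected position of the return is center + offset.
    Only the gap between measured and expected positions moves the
    estimate (assumption: axes are treated independently).
    """
    expected = (center_x.mean + offset[0], center_y.mean + offset[1])
    innovation = (measurement[0] - expected[0], measurement[1] - expected[1])
    return (kalman_update_1d(center_x, innovation[0], meas_var),
            kalman_update_1d(center_y, innovation[1], meas_var))
```

The design point is the innovation. If the return at (7.8, 2.0) were treated as a measurement of the box center at (10, 2), the tracker would see a 2.2 m error and lurch toward the sensor. Anchored to a rear-edge point 2 m behind the center, the same return implies only a 0.2 m error, and with equal state and measurement variances the center shifts by about 0.1 m.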

Step 4 — Act on it: The final position estimate feeds into downstream machine operations — path planning, braking decisions, collision avoidance, or whatever the autonomous system needs to do next.

What this means for self-driving radar reliability

For autonomous vehicles and robotics, the difference between a sloppy radar track and a precise one isn't academic — it directly affects whether a self-driving system holds a safe following distance or misjudges a lane change. Radar works in rain, fog, and darkness where cameras struggle, but its raw data is inherently sparse. A system that can intelligently anchor noisy radar returns to a geometric model of the target object is a meaningful step toward more robust perception.

Nvidia is a dominant supplier of autonomous-vehicle compute platforms (Drive), so this patent slots neatly into its AV stack ambitions. It also signals that Nvidia is doing deep sensor-fusion IP work, not just selling GPUs to other companies building these systems. If radar processing like this makes it into the Drive platform, every automaker licensing Nvidia's stack stands to benefit.

This is solid, unsexy infrastructure work, exactly the kind of perception-layer IP that separates production-ready AV systems from research demos. The geometric bounding-shape trick for disambiguating radar returns is clever and practical, and Nvidia filing it as a continuation (building on a 2023 patent that has already been granted) suggests the company considers this a core piece of its autonomous-driving portfolio, one worth layering protection around.

Editorial commentary on a publicly published patent application. Not legal advice.