Google Patents an AI System That Recolors Photos Using Text Descriptions

Imagine typing "golden hour sunset" and watching a flat daytime photo shift to warm amber tones automatically, with no sliders and no manual color grading. That's what this Google patent filing describes.

How Google's text-to-recolor AI actually changes photo hues

Picture handing someone a photo and saying, "make this look like a foggy autumn morning." Today, a photo editor would spend time manually adjusting hue, saturation, and tone. Google's patent describes a way to let an AI do that from a plain-text prompt — no color picker required.

The system takes your photo and your description, then repeatedly tweaks the image's colors until they match what you wrote. It does this by comparing the recolored image and the text in a shared "understanding space" — a way of measuring how closely your words and the resulting image agree on what the scene should look like.

After enough of these back-and-forth adjustments, the AI lands on a version of the photo whose colors reflect your description. The original content — shapes, faces, objects — stays intact. Only the color information changes.

How the embedding loss loop steers the recoloring model

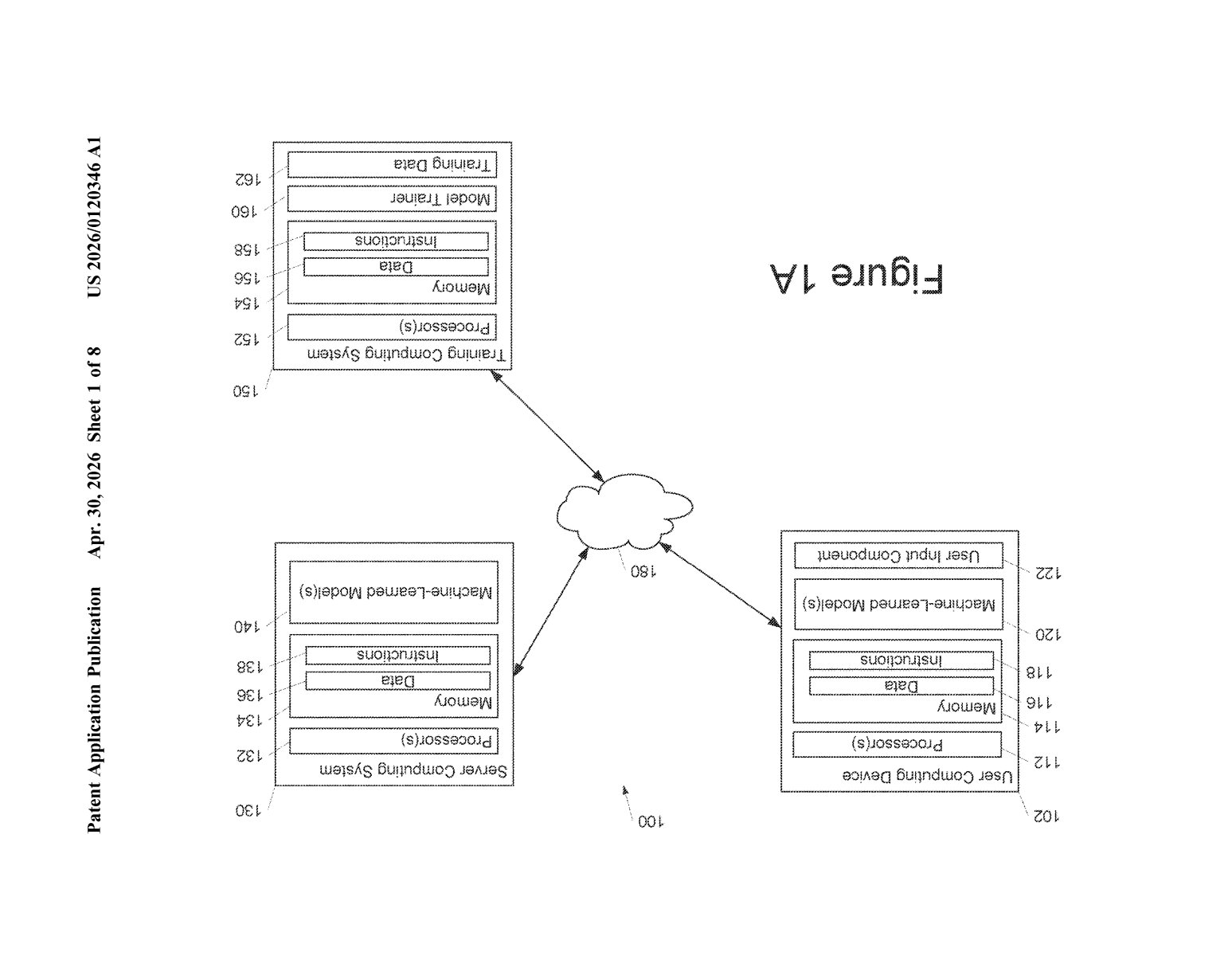

The patent describes a machine-learned recolorizing model that operates in a training-style loop, even at inference time. Here's the core pipeline:

- The system separates an image into its luminance (brightness) and chrominance (color) channels, a common trick that lets you change color without distorting the underlying structure of the image. Recoloring is confined to the chrominance side, which is why shapes and detail survive.

- A machine-learned text encoder converts the input text prompt into a numeric vector (a text embedding — essentially a point in a high-dimensional map of meaning).

- A machine-learned image encoder converts the recolored image into a comparable vector in that same map.

- An embedding loss function measures how far apart those two vectors are — i.e., how well the image's colors match the text description.
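The luminance/chrominance split in the first step can be sketched in a few lines. The article does not say which color space the patent uses, so YCbCr with the BT.601 weights (the JPEG convention) is an assumption here, and the function names are made up for illustration.

```python
def rgb_to_ycbcr(r, g, b):
    """Split an RGB pixel (floats in 0..1) into luminance Y and chrominance (Cb, Cr)."""
    y = 0.299 * r + 0.587 * g + 0.114 * b  # BT.601 luma weights (assumed color space)
    cb = (b - y) / 1.772                   # blue-difference chroma
    cr = (r - y) / 1.402                   # red-difference chroma
    return y, cb, cr


def ycbcr_to_rgb(y, cb, cr):
    """Exact inverse of rgb_to_ycbcr."""
    r = y + 1.402 * cr
    b = y + 1.772 * cb
    g = (y - 0.299 * r - 0.114 * b) / 0.587
    return r, g, b


def recolor_pixel(rgb, cb_shift, cr_shift):
    """Hypothetical recoloring step: move the chroma, keep the luminance."""
    y, cb, cr = rgb_to_ycbcr(*rgb)
    return ycbcr_to_rgb(y, cb + cb_shift, cr + cr_shift)


gray = (0.5, 0.5, 0.5)
warm = recolor_pixel(gray, cb_shift=-0.05, cr_shift=0.05)  # less blue, more red
print("recolored RGB:", warm)
print("luminance before/after:", rgb_to_ycbcr(*gray)[0], rgb_to_ycbcr(*warm)[0])
```

Raising Cr and lowering Cb pushes the neutral gray toward orange while its computed luminance stays the same. A real pipeline would also clip the result to the valid range, which can nudge luminance slightly.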

The recolorizing model's parameters are then adjusted to shrink that gap, iteration by iteration. This is essentially test-time optimization: instead of producing its answer in one fixed forward pass, the model is fine-tuned on the fly for a single image and a single prompt.
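To make the loop concrete, here is a toy sketch, not the patent's implementation. Everything in it is a stand-in chosen for illustration: the "encoders" are a lookup table and a channel average rather than learned networks, the "model" has just two parameters (a Cb shift and a Cr shift), the loss is cosine distance, and gradients come from finite differences instead of backpropagation.

```python
import math

# Hypothetical stand-in for a pretrained text encoder: a lookup table mapping a
# prompt to a vector laid out as (mean luminance, mean Cb, mean Cr).
TOY_TEXT_EMBEDDINGS = {"golden hour sunset": (0.5, -0.10, 0.15)}


def toy_text_encoder(prompt):
    return TOY_TEXT_EMBEDDINGS[prompt]


def toy_image_encoder(pixels):
    """Hypothetical stand-in for an image encoder: average each channel."""
    n = len(pixels)
    return tuple(sum(p[i] for p in pixels) / n for i in range(3))


def recolor(pixels, params):
    """Toy recolorizing model: shift chrominance, leave luminance untouched."""
    d_cb, d_cr = params
    return [(y, cb + d_cb, cr + d_cr) for (y, cb, cr) in pixels]


def embedding_loss(image_emb, text_emb):
    """Cosine distance: 0 when the two vectors point the same way."""
    dot = sum(a * b for a, b in zip(image_emb, text_emb))
    norm_i = math.sqrt(sum(a * a for a in image_emb))
    norm_t = math.sqrt(sum(b * b for b in text_emb))
    return 1.0 - dot / (norm_i * norm_t)


def loss_for(params, pixels, text_emb):
    return embedding_loss(toy_image_encoder(recolor(pixels, params)), text_emb)


def optimize(pixels, prompt, steps=200, lr=0.1, eps=1e-5):
    """Gradient descent on the model parameters for one image and one prompt."""
    text_emb = toy_text_encoder(prompt)
    params = [0.0, 0.0]
    for _ in range(steps):
        grad = []
        for i in range(len(params)):
            up = list(params)
            down = list(params)
            up[i] += eps
            down[i] -= eps
            # Central finite difference in place of backpropagation.
            diff = loss_for(up, pixels, text_emb) - loss_for(down, pixels, text_emb)
            grad.append(diff / (2 * eps))
        params = [p - lr * g for p, g in zip(params, grad)]
    return params, loss_for(params, pixels, text_emb)


pixels = [(0.6, 0.02, -0.01), (0.4, -0.02, 0.01)]  # (Y, Cb, Cr) per pixel
start_loss = loss_for([0.0, 0.0], pixels, toy_text_encoder("golden hour sunset"))
params, final_loss = optimize(pixels, "golden hour sunset")
print("loss before:", start_loss, "after:", final_loss)
print("learned chroma shifts (Cb, Cr):", params)
```

The loss falls as the chroma shifts drift toward the values that line the image embedding up with the text embedding, and the luminance channel is never touched. In a real system the encoders would be large pretrained networks and the recolorizing model would have far more than two parameters, but the shape of the loop is the same.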

The approach leans on a pre-trained multimodal encoder (something like CLIP, which was trained on massive text-image datasets to map both modalities into a shared space). That pre-training is what gives the system its language understanding — it already "knows" what "golden hour" or "overcast day" looks like in color terms.

What this means for AI photo editing and Google Photos

For everyday users, this is the difference between fiddling with color sliders and just describing what you want. It's a natural fit for a product like Google Photos, which already uses AI for scene recognition and editing suggestions — adding text-driven recoloring would be a meaningful leap in how approachable photo editing feels.

More broadly, the technique is notable because it frames recoloring as an optimization problem guided by language rather than a one-shot generative output. That's a meaningful design choice — it prioritizes faithfulness to the original image structure while still giving users expressive control over mood and tone. Whether Google ships this as a standalone feature or folds it into a broader generative editing suite, the underlying approach has real legs.

This is a genuinely clever use of shared embedding spaces. Applying test-time optimization to recoloring is a cleaner, more controllable approach than prompting a diffusion model and hoping the structure survives. It's not a flashy generative AI story, but it's the kind of precise, targeted tool that tends to ship and get used. A patent filing is no guarantee of a product, but Google Photos is the obvious place to watch.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.