Microsoft Patents a System That Lets AI Design Its Own Input Forms

What if an AI could decide, mid-conversation, exactly what form fields it needs to ask you the right questions — and then build that form itself? That's the core idea in this Microsoft patent.

How Microsoft's AI builds its own data-entry forms

Imagine you're using an AI assistant to help draft a contract. Instead of asking you a string of follow-up questions in chat, it pauses and presents you with a tidy form — fields for the party names, dates, payment terms — that it designed on its own, right in that moment. That's the experience this patent is trying to create.

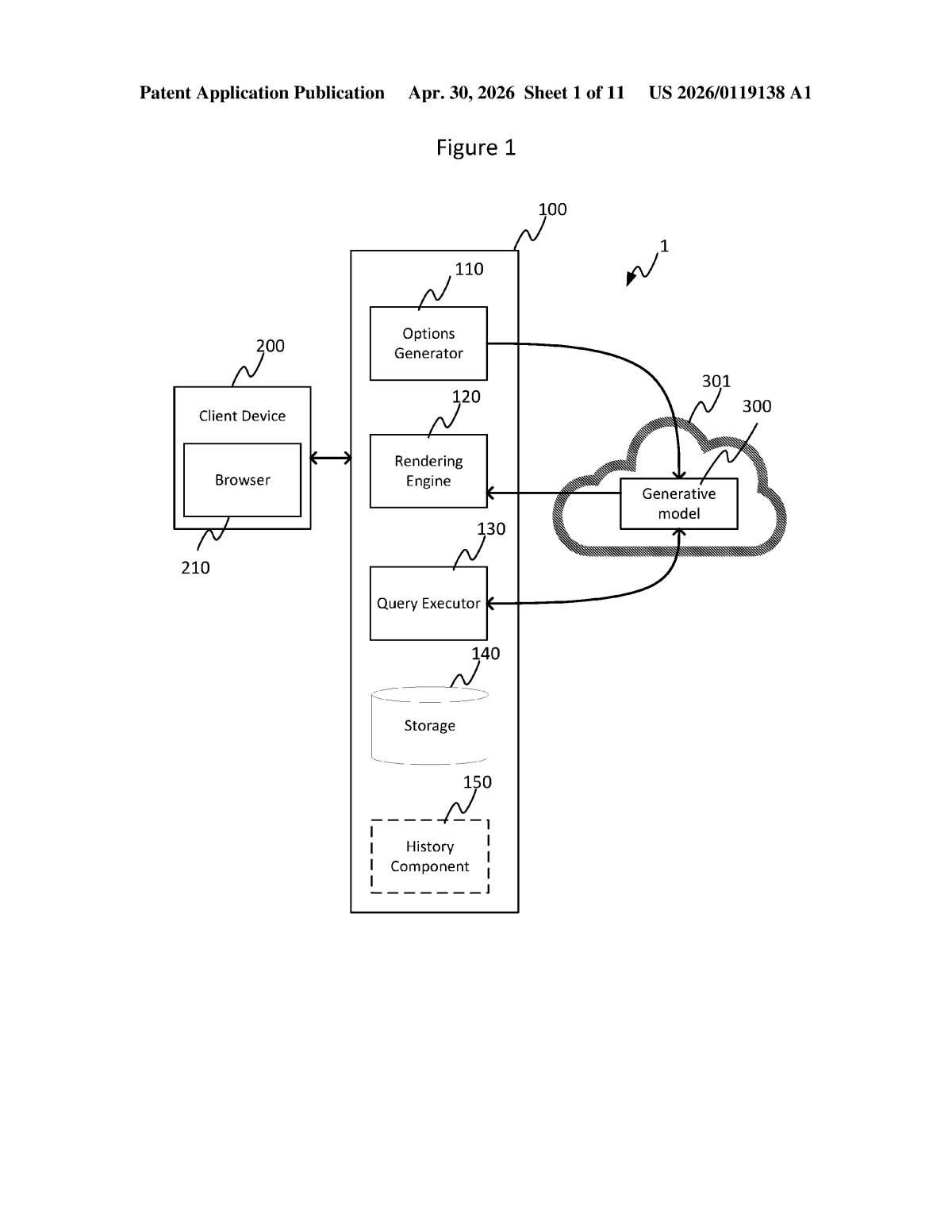

The system works by letting a generative AI model output not just words, but a description of a user interface (specifically, a form) that gets automatically built and displayed to you. The AI decides what inputs it needs, describes the form in a structured format, and the system converts that description into executable code that renders the form on screen.

The key insight is that the AI defines what it needs from you, rather than a human developer hard-coding every possible input screen in advance. That makes the interface dynamic and responsive to context rather than one-size-fits-all.

How the schema turns AI output into executable UI code

The patent describes a three-step pipeline. First, a generative ML model (think a large language model) produces an output that includes a GUI component definition: a structured description of a form or input widget, written according to a predefined schema. The schema is a fixed vocabulary the system understands, so the system can parse whatever conforms to it and flag whatever doesn't.
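To make the schema idea concrete, here is a minimal sketch of what checking a model-produced form definition might look like. Everything in it is an assumption for illustration: the patent summary above doesn't say what the schema looks like, so the `component` and `fields` keys, the four field types, and the `parse_form_definition` name are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical schema: the only field types and keys this sketch accepts.
ALLOWED_FIELD_TYPES = {"text", "date", "number", "select"}
ALLOWED_KEYS = {"name", "label", "type", "options"}


@dataclass(frozen=True)
class Field:
    name: str
    label: str
    type: str
    options: tuple = ()


def parse_form_definition(raw):
    """Check a model-produced definition against the schema.

    Returns a list of Field objects, or raises ValueError when the
    definition strays from the (assumed) schema.
    """
    if not isinstance(raw, dict) or raw.get("component") != "form":
        raise ValueError("only 'form' components are supported")
    raw_fields = raw.get("fields")
    if not isinstance(raw_fields, list) or not raw_fields:
        raise ValueError("'fields' must be a non-empty list")

    fields = []
    for item in raw_fields:
        if not isinstance(item, dict):
            raise ValueError("each field must be an object")
        unknown = set(item) - ALLOWED_KEYS
        if unknown:
            raise ValueError(f"unknown keys: {sorted(unknown)}")
        name, label, kind = item.get("name"), item.get("label"), item.get("type")
        if not isinstance(kind, str) or kind not in ALLOWED_FIELD_TYPES:
            raise ValueError(f"unsupported field type: {kind!r}")
        if not isinstance(name, str) or not name.isidentifier():
            raise ValueError(f"invalid field name: {name!r}")
        if not isinstance(label, str) or not label.strip():
            raise ValueError("label must be a non-empty string")
        options = item.get("options", [])
        if not isinstance(options, list) or not all(isinstance(o, str) for o in options):
            raise ValueError("options must be a list of strings")
        if kind == "select" and not options:
            raise ValueError("select fields need at least one option")
        fields.append(Field(name, label, kind, tuple(options)))
    return fields
```

In the contract example, a definition with `party_a` and `payment_terms` text fields would pass, while one asking for a field of type `script` would be rejected with a `ValueError` rather than handed on to the next step.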

Second, that definition is handed to a code generator, which translates the schema-compliant description into executable code — actual renderable UI, not just text about a UI. Third, that code is executed and the component appears on screen for the user to interact with.
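The code-generation step can be pictured as a plain function from a field list to markup. The sketch below targets HTML purely as an assumption; the patent summary doesn't name a target format, and `render_form` and the dictionary keys it reads are hypothetical. It expects a definition that has already passed schema checks.

```python
from html import escape


def render_form(fields):
    """Translate an already-validated list of field descriptions into an HTML form.

    Each field is a dict with 'name', 'label', 'type', and optional 'options'
    (an assumed shape, not one taken from the patent).
    """
    parts = ["<form>"]
    for field in fields:
        # Escape everything that came from the model before it reaches markup.
        name = escape(field["name"], quote=True)
        label = escape(field["label"], quote=True)
        kind = escape(field["type"], quote=True)
        parts.append(f'  <label for="{name}">{label}</label>')
        if field["type"] == "select":
            parts.append(f'  <select id="{name}" name="{name}">')
            for option in field.get("options", []):
                parts.append(f"    <option>{escape(option)}</option>")
            parts.append("  </select>")
        else:
            parts.append(f'  <input id="{name}" name="{name}" type="{kind}">')
    parts.append('  <button type="submit">Submit</button>')
    parts.append("</form>")
    return "\n".join(parts)
```

Escaping the model's text is a design choice of this sketch, not something the patent summary spells out: the labels are AI-written, so treating them as untrusted input keeps stray markup out of the rendered page.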

The purpose of the form is specifically to refine an input — meaning the AI is using the form to gather more precise information from the user before completing a task. It's a structured clarification loop, not a static data-entry screen.
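One way to picture that clarification loop: the user's original request goes in, the form collects the missing details, and the two are merged into a more precise input for the model. The sketch below is a guess at the shape of that merge, not the patent's method; `refine_input` and its parameters are hypothetical names.

```python
def refine_input(original_request, submitted, required):
    """Merge form answers into the original request to produce a refined input.

    original_request: the user's first, underspecified prompt
    submitted: field name -> value, as returned by the rendered form
    required: field names the model asked for, in display order
    """
    missing = [name for name in required if not submitted.get(name, "").strip()]
    if missing:
        # In a real loop this might trigger another round of the form.
        raise ValueError(f"missing required fields: {missing}")
    details = "\n".join(f"- {name}: {submitted[name].strip()}" for name in required)
    return f"{original_request}\n\nDetails supplied by the user:\n{details}"
```

For the contract example, calling `refine_input("Draft a contract", {"party_a": "Acme", "payment_terms": "net 30"}, ["party_a", "payment_terms"])` returns the original request followed by a two-item list of the supplied details, which can then go back to the model.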

- Predefined schema: constrains what the AI can describe, preventing malformed or unsafe UI definitions

- Code generation layer: bridges the gap between AI-readable description and browser/app-renderable UI

- Execution layer: actually displays the component to the end user in real time
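The three layers above can be wired together in a few lines. This is a rough sketch under stated assumptions: the model's output is taken to be JSON text, the `validate`, `generate`, and `display` callables are hypothetical stand-ins for the layers, and the fall-back-to-plain-chat behavior on failure is this sketch's choice rather than anything described in the patent summary.

```python
import json


def run_pipeline(model_output, validate, generate, display):
    """Run schema check, code generation, and display in order.

    Returns True if a component was displayed, False if the definition
    was rejected and the caller should fall back to a plain-text question.
    """
    try:
        definition = json.loads(model_output)  # model output assumed to be JSON
        validate(definition)                   # schema layer: raises ValueError on bad input
    except ValueError:                         # json.JSONDecodeError is a ValueError subclass
        return False
    code = generate(definition)                # code generation layer
    display(code)                              # execution layer
    return True


if __name__ == "__main__":
    def validate(definition):
        if definition.get("component") != "form":
            raise ValueError("not a form")

    shown = []
    ok = run_pipeline('{"component": "form"}', validate, lambda d: "<form></form>", shown.append)
    print(ok, shown)
```

Keeping the layers as separate callables mirrors the split in the list above: the schema check can reject a definition before any code is generated or shown.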

What self-generating forms mean for AI-powered software

Right now, most screens in a software product are designed by a human developer ahead of time. If an AI assistant needs information that nobody anticipated, it falls back on asking in plain text, which is clunky and easy to misinterpret. This patent removes that bottleneck by letting the AI author its own structured inputs dynamically, so your interaction with AI tools becomes more precise without requiring a developer to anticipate every scenario.

For Microsoft, this fits squarely into its Copilot strategy across Office, Azure, and Windows. If Copilot can generate the exact form it needs — whether you're filling out an expense report, configuring a cloud resource, or authoring a document — the AI becomes a much more capable collaborator, not just a chatbot that guesses at what you mean.

This is a genuinely clever architectural idea: instead of forcing AI to work around static UI, give the AI the ability to extend the UI itself. The predefined schema constraint is the smart part — it keeps the AI's creative latitude bounded so you don't end up with a rogue form trying to collect your bank details. Whether this is novel enough to survive a thorough prior-art search is another question, but the concept is clean and practically useful.


Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.