Apple Patents End-to-End AI Navigation of iPhone Screens Using Vision Language Models

Apple is patenting a system that lets an AI look at your phone screen, understand what's on it, and carry out multi-step tasks, with no hardcoded shortcuts required. This is the kind of architecture a true AI agent for iOS would need.

What Apple's screen-navigating AI actually does

Imagine telling your phone, "Book me a dinner reservation for Saturday at 7pm near downtown" — and your phone actually does it: opening the app, tapping through menus, filling out the form, and confirming the booking. That's the dream this patent is chasing.

Right now, even the most capable voice assistants rely on pre-built integrations with specific apps. Apple's patent describes a fundamentally different approach: an AI that sees your screen like a human does, understands what the buttons and menus mean, and then figures out what to tap, swipe, or type — all from a single natural-language instruction you give it.

The system processes both your words and a snapshot of the current UI at the same time, then translates its understanding into real actions on the device. Think of it as giving your phone eyes and a brain that can read any app's interface, not just ones it's been specifically taught.

How the vision-language model reads and acts on the UI

The patent describes a pipeline with four key stages:

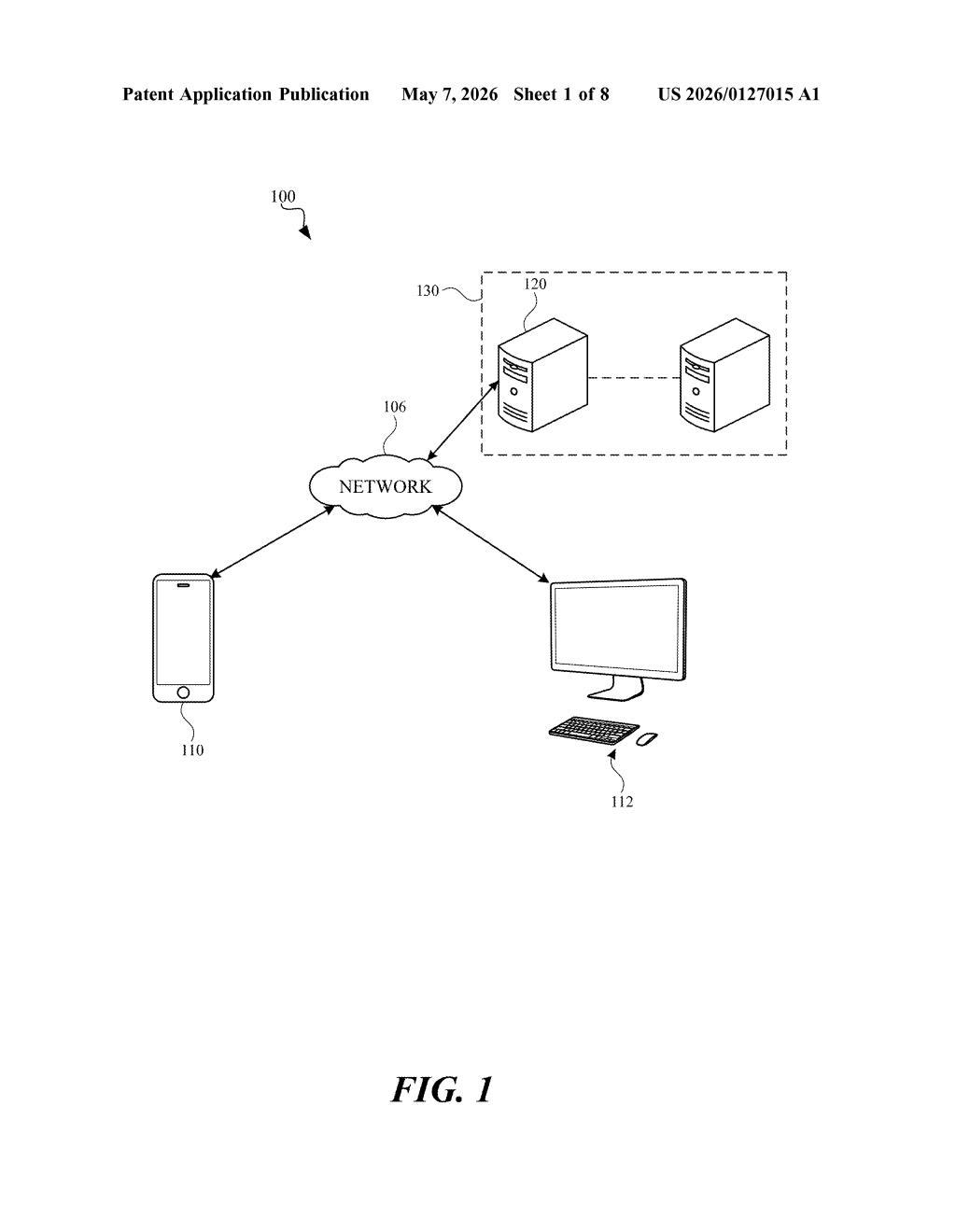

- Receiving inputs: The system takes in two things simultaneously — a language instruction (what you said or typed) and a visual input (a screenshot or live render of the current UI screen).

- Separate tokenization: The text and image are converted into tokens (the atomic units AI models process) independently before being fed into the model. Tokenizing them separately lets each modality use an encoding suited to it, rather than forcing one to conform to the other's format.

- Multi-modal LLM processing: A vision-language model (a large language model extended to understand images, similar in concept to GPT-4o or Gemini) ingests both token streams and produces action outputs — structured decisions about what to do next on the screen.

- Action execution: Those action outputs are converted into executable commands — actual tap coordinates, swipe gestures, text input, or button presses — that the operating system carries out on the live UI.
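To make the four stages concrete, here is a minimal Python sketch of the data flow. Everything in it is an assumption for illustration: the patent does not publish an API, so `tokenize_text`, `tokenize_image`, `stub_model`, `to_command`, and the command strings are hypothetical names, and the "model" is a trivial rule standing in for a real vision-language model.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ImageToken:
    """One square patch of the screenshot (hypothetical token format)."""
    left: int
    top: int
    size: int
    mean: float  # average brightness of the patch


@dataclass(frozen=True)
class Action:
    """Structured action output (hypothetical schema)."""
    kind: str  # "tap" or "type"
    x: int = 0
    y: int = 0
    text: str = ""


def tokenize_text(instruction: str) -> List[str]:
    # Toy text tokenizer: lowercase and split on whitespace.
    return instruction.lower().split()


def tokenize_image(pixels: List[List[int]], patch: int) -> List[ImageToken]:
    # Toy image tokenizer: cut the grid into patch x patch tiles, row by row.
    height, width = len(pixels), len(pixels[0])
    tokens = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            values = [
                pixels[r][c]
                for r in range(top, min(top + patch, height))
                for c in range(left, min(left + patch, width))
            ]
            tokens.append(ImageToken(left, top, patch, sum(values) / len(values)))
    return tokens


def stub_model(text_tokens: List[str], image_tokens: List[ImageToken]) -> Action:
    # Stand-in for the vision-language model, NOT a real one:
    # "type ..." instructions produce text input, anything else
    # taps the center of the brightest patch.
    if "type" in text_tokens:
        typed = " ".join(text_tokens[text_tokens.index("type") + 1:])
        return Action(kind="type", text=typed)
    target = max(image_tokens, key=lambda t: t.mean)
    half = target.size // 2
    return Action(kind="tap", x=target.left + half, y=target.top + half)


def to_command(action: Action) -> str:
    # Convert the structured action into an executable command string.
    if action.kind == "tap":
        return f"tap {action.x} {action.y}"
    if action.kind == "type":
        return f"type {action.text!r}"
    raise ValueError(f"unsupported action kind: {action.kind}")
```

The point of the sketch is the shape of the pipeline: the two inputs are tokenized by separate functions, only the model sees both token streams, and the model's output is a structured action that a final step turns into a command.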

The claim covers the full loop: perceive, reason, act. Notably, it's end-to-end, meaning there's no separate object-detection step or app-specific API call in the middle. The model maps directly from what it sees and what it is told to what it does.
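For a multi-step task, that perceive-reason-act cycle would repeat until the job is done. The sketch below shows one way such a loop could look, under loud assumptions: `run_agent`, the `"stop"` sentinel, the screen names, and the toy app are all hypothetical, and the policy is a lookup table rather than a real model.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical toy app: (current screen, action) -> next screen.
TRANSITIONS: Dict[Tuple[str, str], str] = {
    ("home", "tap:open_app"): "search",
    ("search", "type:downtown"): "results",
    ("results", "tap:reserve"): "confirmed",
}

# Hypothetical policy table standing in for the model's reasoning.
POLICY: Dict[str, str] = {
    "home": "tap:open_app",
    "search": "type:downtown",
    "results": "tap:reserve",
}


def toy_policy(instruction: str, screen: str) -> str:
    # A real system would condition on the instruction and a screenshot;
    # this stand-in only looks at the screen name.
    return POLICY.get(screen, "stop")


def toy_apply(screen: str, action: str) -> str:
    # Unknown actions leave the screen unchanged.
    return TRANSITIONS.get((screen, action), screen)


def run_agent(
    instruction: str,
    start: str,
    policy: Callable[[str, str], str],
    apply_action: Callable[[str, str], str],
    max_steps: int = 10,
) -> Tuple[str, List[str]]:
    screen = start                               # perceive the initial screen
    trace: List[str] = []
    for _ in range(max_steps):                   # cap steps so the loop always ends
        action = policy(instruction, screen)     # reason
        if action == "stop":
            break
        trace.append(action)
        screen = apply_action(screen, action)    # act, then perceive the new screen
    return screen, trace
```

Calling `run_agent("Book dinner near downtown", "home", toy_policy, toy_apply)` walks the toy app from the home screen to the confirmation screen in three actions. The step cap is a design choice of this sketch, not something the article attributes to the patent.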

What this means for Siri and on-device AI agents

The biggest limitation of today's AI assistants, Siri included, is that they only work where engineers have manually built integrations. In principle, this architecture removes that ceiling. If the AI can see and interpret any UI, it can operate any app without a purpose-built integration or a dedicated plugin. That's a qualitative leap in what an on-device assistant can accomplish.

For you, this could mean delegating genuinely complex, multi-app workflows — not just setting timers or playing music. Apple has been investing heavily in on-device AI (Apple Intelligence), and a capable UI-navigation agent would be the missing piece that makes those investments feel like something you actually use every day.

This is one of the more consequential AI patents Apple has filed in recent memory. The shift from app-specific integrations to a general-purpose screen-understanding agent is exactly the architectural bet that separates a gimmick assistant from a genuinely useful one. The team listed as inventors includes serious AI researchers, which suggests this isn't a paper exercise.


Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.