Google Patents a System That Builds 3D Maps From a Quick Video Walkthrough

Point a phone camera at your living room, walk around for a minute, and get back a full 3D model. That's the core idea behind Google's latest patent — and it's more clever than it sounds.

What Google's video-to-3D mapping system actually does

Imagine you want to create a 3D map of a room — maybe for a real estate listing, a warehouse inventory system, or just to show a friend the layout of your apartment. Today, that usually requires expensive lidar scanners or tedious manual photography from dozens of precise angles. Google's new patent describes a much simpler approach: you just walk through the space with a camera, and the system figures out the rest.

Here's the key insight: your casual video walkthrough tells the system where the important spots are. It then automatically identifies specific scan nodes — optimal locations along your path — and generates precise instructions for capturing higher-quality images at those spots. Think of it like a scout going ahead of a survey crew to flag exactly where to plant the tripods.

The final 3D model is built by combining your original casual video with the targeted high-quality scans the system directed. You do the easy part; the software handles the geometry.

How Google picks scan nodes and assembles the 3D model

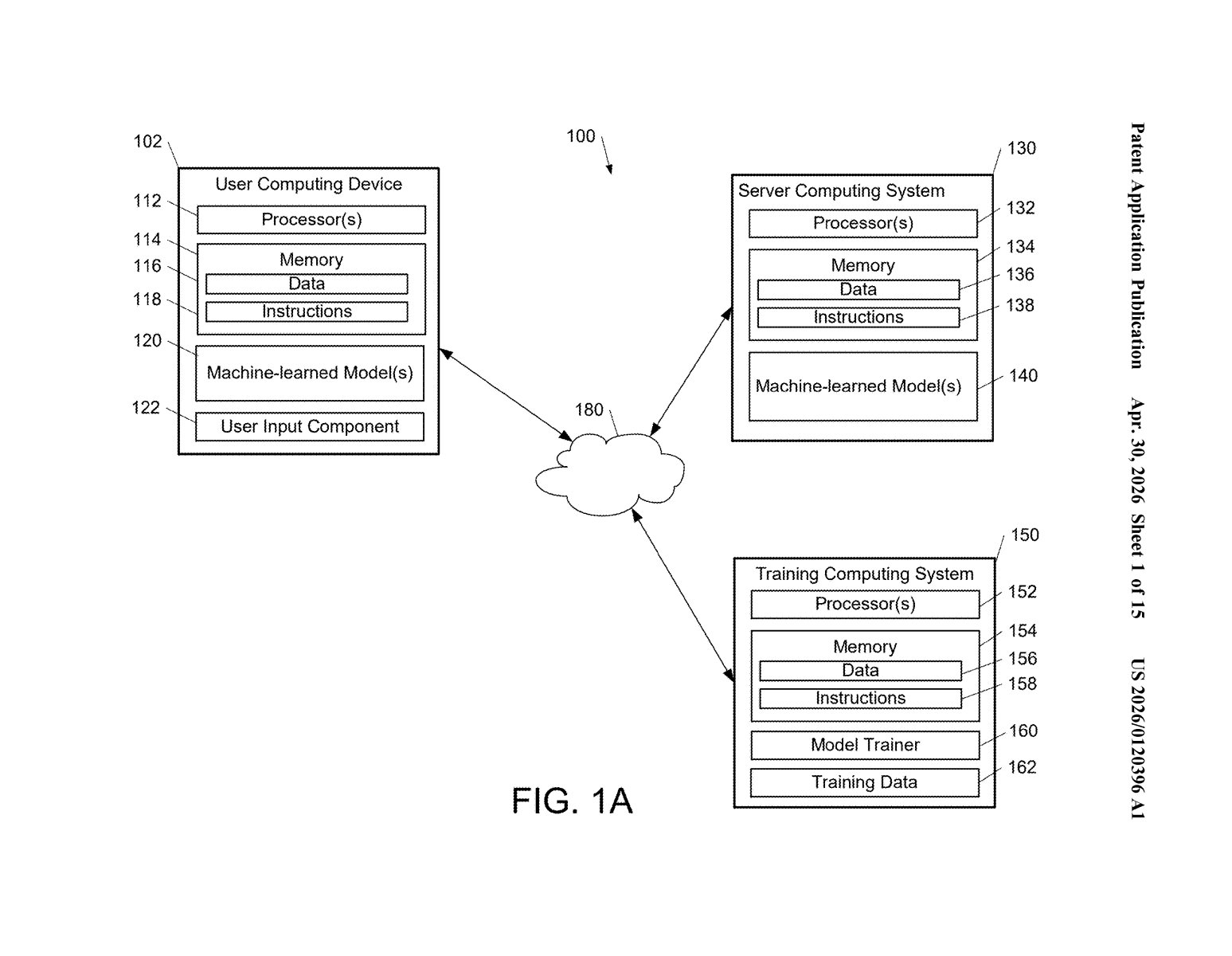

The patent describes a two-phase pipeline. In the first phase, the system ingests a set of ordinary 2D images — essentially frames pulled from a casual video walk — and analyzes them to understand the geometry of the space and the camera's path through it.

From that initial analysis, it determines a set of scan nodes: specific positions along the path where additional, higher-fidelity images should be captured. These aren't random — they're algorithmically selected locations that will best fill in gaps in the 3D reconstruction. The system then generates explicit capture instructions tied to each node (think: "stand here, point the camera at this angle").
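To make the node-selection step concrete, here is a minimal Python sketch. The description above does not say how the patent actually chooses nodes, so the greedy rule, the `coverage` score, the `gap_xy` target, and every name and threshold below are assumptions for illustration only.

```python
import math
from dataclasses import dataclass


@dataclass
class PathSample:
    """One position on the walkthrough path (all fields are hypothetical)."""
    x: float
    y: float
    coverage: float   # 0..1: how well the rough pass reconstructed this area
    gap_xy: tuple     # centre of the weakest-reconstructed region seen from here


@dataclass
class ScanNode:
    x: float
    y: float
    heading_deg: float  # direction to point the camera, 0 = +x axis

    def instruction(self) -> str:
        return (f"Stand at ({self.x:.1f}, {self.y:.1f}) m "
                f"and face {self.heading_deg:.0f} degrees")


def plan_scan_nodes(path, coverage_threshold=0.6, min_spacing=2.0):
    """Greedy pick: worst-covered samples first, keeping nodes min_spacing apart."""
    weak = sorted((s for s in path if s.coverage < coverage_threshold),
                  key=lambda s: s.coverage)
    nodes = []
    for s in weak:
        # Skip samples that sit too close to a node we already chose.
        if all(math.hypot(s.x - n.x, s.y - n.y) >= min_spacing for n in nodes):
            heading = math.degrees(
                math.atan2(s.gap_xy[1] - s.y, s.gap_xy[0] - s.x))
            nodes.append(ScanNode(s.x, s.y, heading))
    return nodes
```

Calling `plan_scan_nodes(path)` on a list of `PathSample` objects returns the chosen nodes, and `node.instruction()` turns each one into a "stand here, face this way" prompt. A real system would presumably weigh much more than a single coverage number, but the shape is the same: score the path, pick sparse positions, attach an instruction to each.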

In the second phase, those targeted scans are captured by guiding a human operator, an autonomous robot, or another imaging device back through the space. The system then fuses:

- The original casual walkthrough images

- The directed high-quality scans at each node

...into a single reconstructed 3D representation of the physical space.
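As a rough illustration of what "fusing" two sources can look like, the Python sketch below merges two sets of 3D points on a voxel grid and lets a directed scan override the rough pass wherever both land in the same cell. This is not the patent's method. It assumes both point sets are already aligned in one coordinate frame, and the function name, the labels, and the 5 cm voxel size are all hypothetical.

```python
import math


def fuse_points(rough_points, scan_points, voxel_size=0.05):
    """Merge two point sets on a voxel grid.

    Returns {voxel_index: (point, source)}. A directed-scan point replaces a
    rough-pass point that falls in the same voxel; otherwise the first point
    seen in a voxel is kept.
    """
    def voxel_index(point):
        return tuple(math.floor(c / voxel_size) for c in point)

    fused = {}
    # The rough walkthrough fills in the broad shape of the space.
    for p in rough_points:
        fused.setdefault(voxel_index(p), (p, "rough"))
    # Directed scans take priority wherever they overlap the rough pass.
    for p in scan_points:
        key = voxel_index(p)
        if key not in fused or fused[key][1] == "rough":
            fused[key] = (p, "scan")
    return fused


rough = [(0.01, 0.01, 0.01), (1.01, 1.01, 1.01)]
scans = [(0.02, 0.02, 0.02)]
model = fuse_points(rough, scans)
```

In this toy run the model keeps two voxels: one carried over from the rough pass and one where the higher-quality scan wins. The rough data still covers the areas no directed scan reached.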

The approach is notable because it treats the rough walkthrough not as the final input, but as a planning document — a cheap first pass that makes the expensive, precise scanning more efficient.

What this means for Google Maps and indoor navigation

For Google, the most obvious application is Google Maps indoor mapping and Street View-style coverage of spaces that are currently hard to capture at scale — stores, offices, transit stations, homes. A system that lets anyone with a phone do a rough walkthrough, then automates the precision scanning, could dramatically lower the cost and effort of building that kind of coverage.

For you as a user, this could eventually mean getting accurate indoor navigation in malls or airports, or having a landlord share an actual 3D walkthrough of an apartment rather than cherry-picked photos. It also connects to Google's broader AR ambitions — high-quality 3D spatial maps are the foundational layer for augmented reality overlays that know exactly where they are in a room.

This is a genuinely practical piece of computer vision engineering. The two-phase design (a rough pass first, then directed precision scanning) is an elegant solution to a real cost problem in spatial mapping. It's not a flashy AI announcement, but it's the kind of infrastructure patent that could quietly underpin Google Maps features used by hundreds of millions of people.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.