Meta Patents a System That Fills AI Assistant Wait Time With Personalized Content

While Meta's AI assistant is off processing your request, you're just... waiting. This patent describes a system that turns those dead seconds into a personalized content feed — and the longer the wait, the richer the content it serves you.

What Meta's idle-time content system actually does

Imagine you ask your AI assistant to do something that takes a few seconds to complete — maybe look something up, or generate something. Right now, you just stare at a loading screen. Meta's patent describes a smarter approach: fill that wait with content tailored to you, and matched to how long you'll be waiting.

The key insight is that not all waits are equal. A two-second pause gets you something quick to glance at, such as a short notification or a simple card. A thirty-second wait unlocks richer content, like a friend's Story or a longer video clip. The system estimates the expected idle time when you submit the request, before the wait begins, then fetches content sized to fit that window.

The content is also personalized using context from whatever you were just doing, so it is meant to be relevant to your interests and current activity rather than random filler.

How Meta matches content length to predicted wait time

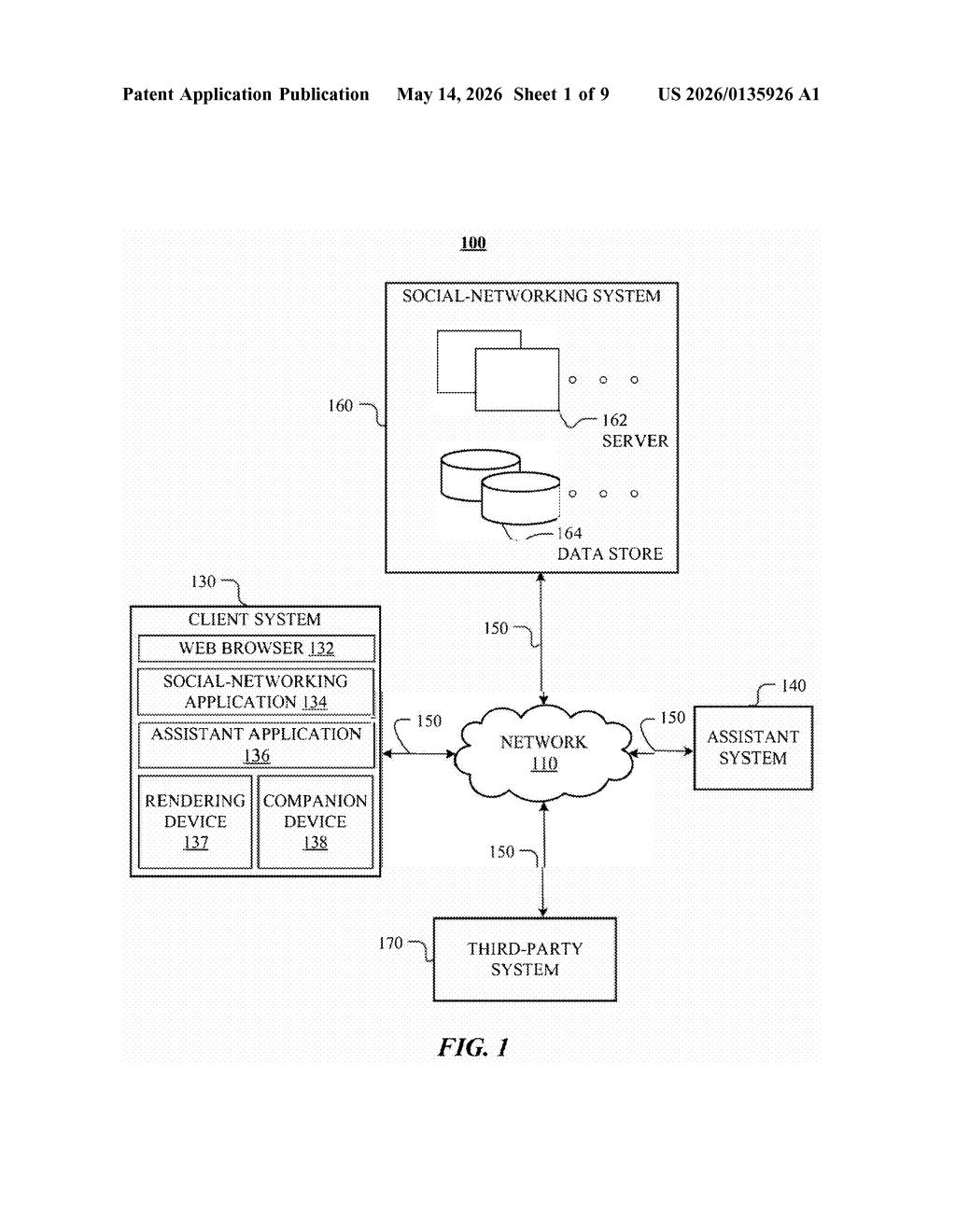

The patent describes a server-side system tied to an assistant platform — think Meta AI running on Ray-Ban smart glasses or the Meta app. When a user submits a task, the system calculates a predicted idle time (the window between task submission and result delivery) based on the nature of the request.

It then determines a level of interactivity for the content it will serve during that window. Shorter idle times map to low-interaction content (a quick glance, a static card), while longer idle times unlock higher-interaction content (a friend's Stories post, a short-form video) that warrants sustained attention.

- Idle time calculation: derived from the task type and user input at submission time, not retroactively

- Content retrieval: pulls from a personalized pool filtered by interactivity level and contextual signals from the task

- Client-side rendering: instructions are sent to the device to present the content during the wait window
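
The first two steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Meta's implementation: the task types, latency estimates, the 5-second and 20-second cutoffs, and every name in it (`predict_idle_time`, `interactivity_level`, `select_content`, `ContentItem`) are hypothetical, and the client-side rendering step is left out.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical latency estimates (seconds) per task type; not from the patent.
ESTIMATED_IDLE_SECONDS = {"lookup": 2.0, "summarize": 10.0, "generate_image": 30.0}
DEFAULT_IDLE_SECONDS = 5.0


@dataclass(frozen=True)
class ContentItem:
    title: str
    level: str         # "low", "medium", or "high" interactivity
    topics: frozenset  # topics used for contextual matching


def predict_idle_time(task_type: str) -> float:
    """Estimate the wait at submission time from the task type alone."""
    return ESTIMATED_IDLE_SECONDS.get(task_type, DEFAULT_IDLE_SECONDS)


def interactivity_level(idle_seconds: float) -> str:
    """Map a predicted wait to a level; the 5 s and 20 s cutoffs are assumed."""
    if idle_seconds < 5:
        return "low"
    if idle_seconds < 20:
        return "medium"
    return "high"


def select_content(pool, task_type, context_topics) -> Optional[ContentItem]:
    """Filter the pool by interactivity level, then rank by topic overlap."""
    level = interactivity_level(predict_idle_time(task_type))
    candidates = [item for item in pool if item.level == level]
    if not candidates:
        return None
    wanted = set(context_topics)
    return max(candidates, key=lambda item: len(item.topics & wanted))


# Hypothetical personalized pool for one user.
POOL = [
    ContentItem("Notification card", "low", frozenset({"news"})),
    ContentItem("Friend's Story", "medium", frozenset({"travel"})),
    ContentItem("Recipe reel", "high", frozenset({"food"})),
    ContentItem("Short-form video", "high", frozenset({"travel"})),
]
```

With this pool, a quick `lookup` task resolves to the low-interaction card, while a slow `generate_image` task submitted in a travel context resolves to the travel video. A real system would presumably learn the latency estimates from observed task durations instead of hard-coding them.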

The claim structure explicitly covers dynamic adjustment across multiple points in time — if a second task has a longer idle window than a first, the system escalates to content with a proportionally greater expected user interaction time. This suggests the system can adapt across an entire session, not just per-request.
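
The escalation behavior can be illustrated with a small sketch. It assumes a hypothetical catalog in which each item carries an expected interaction time, and it picks the longest item that still fits each idle window. The catalog, the durations, and the names `pick_for_window` and `plan_session` are illustrative only, and the sketch guarantees a non-decreasing relationship between window and content length, not strict proportionality.

```python
from typing import List, Optional, Tuple

# Hypothetical catalog: (title, expected interaction time in seconds).
CATALOG: List[Tuple[str, float]] = [
    ("Static card", 2.0),
    ("Friend's Story", 10.0),
    ("Short-form video", 25.0),
]


def pick_for_window(idle_seconds: float) -> Optional[Tuple[str, float]]:
    """Return the longest catalog item that fits the window, or None."""
    fitting = [entry for entry in CATALOG if entry[1] <= idle_seconds]
    if not fitting:
        return None
    return max(fitting, key=lambda entry: entry[1])


def plan_session(idle_windows: List[float]) -> List[Optional[Tuple[str, float]]]:
    """Pick content independently for each task's predicted idle window."""
    return [pick_for_window(window) for window in idle_windows]


# Three tasks in one session with progressively longer predicted waits.
session_plan = plan_session([3.0, 12.0, 40.0])
```

Because each pick depends only on the window length, a later task with a longer predicted wait never gets content shorter than an earlier task with a shorter wait.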

What this means for Ray-Ban glasses and Meta AI users

For Meta, idle time is wasted real estate. On a device like Ray-Ban smart glasses, where the assistant already holds your attention through the display or audio channel, every second of wait time is a missed engagement opportunity. This patent is essentially a framework for monetizing attention during the gaps your AI assistant creates.

For you as a user, it could genuinely improve the experience: a well-timed Story from a friend during a ten-second wait is less annoying than a spinner. But it also means Meta has sought patent protection for algorithmically filling every idle moment with content from its social graph. Whether that's a feature or a concern depends on how much you trust Meta's personalization engine.

This is a strategically interesting patent for Meta specifically because it sits at the intersection of AI assistant UX and social feed engagement — two things Meta desperately wants to unify. It's not technically novel in a deep sense (it's essentially adaptive content scheduling), but as a product layer on top of Meta AI it makes a lot of sense. The Ray-Ban glasses use case practically writes itself.

Editorial commentary on a publicly published patent application. Not legal advice.