Microsoft Patents an AI System That Reads Security Logs and Summarizes Threats Automatically

Security logs are famously unreadable: thousands of raw lines that analysts have to sift through to find the one event that matters. Microsoft's newly published patent application describes a system that ingests those logs, detects anomalies automatically, and then uses a generative AI model to write a plain-English summary of what went wrong.

What Microsoft's hybrid-embedding threat detector actually does

Imagine your company's servers generate millions of log entries every day — login attempts, file accesses, network connections. Most of it is noise. But buried in that noise might be a hacker quietly moving through your systems. Finding that needle in the haystack is what security analysts spend their lives doing.

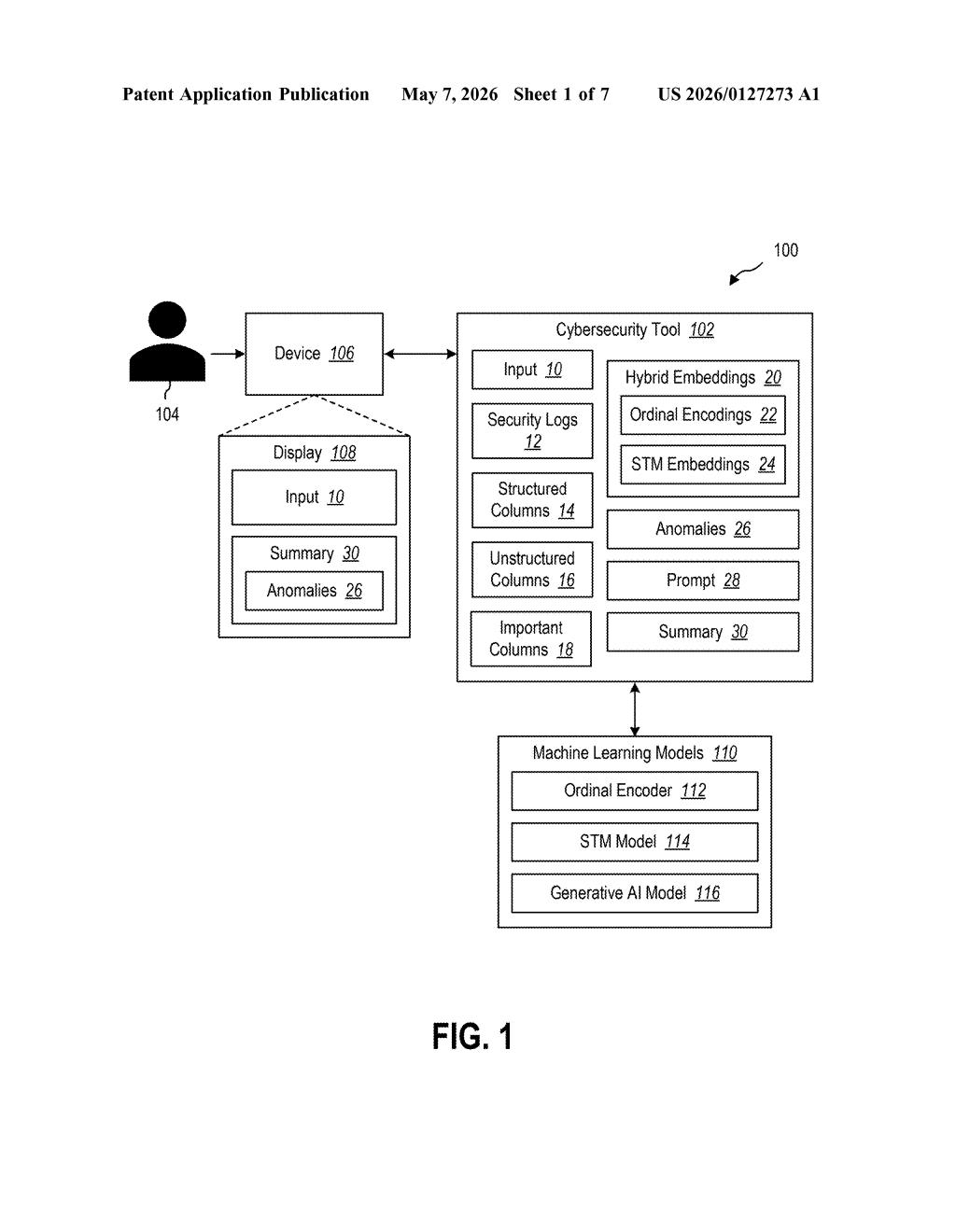

Microsoft's patent describes a tool that does much of that grunt work automatically. It takes those raw logs — which contain a mix of numbers, categories, and free-form text — and converts them into a unified mathematical representation called a hybrid embedding. That combined representation is fed into a machine learning model that flags anything that looks out of the ordinary.

Once an anomaly is flagged, the system doesn't just throw an alert number at the analyst. It constructs a prompt and sends it to a generative AI model, which writes a human-readable summary of the threat. That summary then goes to a "security mitigation agent" — software (or a person) responsible for actually fixing the problem.

How hybrid embeddings catch anomalies in raw log data

The core innovation here is the hybrid embedding approach. Security logs are messy: some fields are structured (user IDs, IP addresses, event codes), while others are unstructured text (error messages, file paths). Treating them uniformly is hard.
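Here is a minimal Python sketch that makes the mixed-format problem concrete by splitting one log record into categorical fields and a free-text string. It assumes logs arrive as JSON lines and uses a hypothetical field schema (`CATEGORICAL_FIELDS`, `TEXT_FIELDS`). The patent's actual input format and field names are not described here.

```python
import json

# Hypothetical schema for illustration only; not taken from the patent.
CATEGORICAL_FIELDS = ("user_id", "source_ip", "event_code")
TEXT_FIELDS = ("message", "file_path")


def split_log_entry(raw_line):
    """Split one JSON log line into categorical fields and a free-text string."""
    record = json.loads(raw_line)
    # Categorical values are kept as strings so they can be ordinal-encoded later.
    structured = {k: str(record[k]) for k in CATEGORICAL_FIELDS if k in record}
    # Free-text fields are joined into one string for a text-embedding model.
    text = " ".join(str(record[k]) for k in TEXT_FIELDS if k in record)
    return structured, text


raw = ('{"user_id": "u123", "source_ip": "10.0.0.5", "event_code": "FILE_READ", '
       '"message": "Permission denied", "file_path": "/etc/shadow"}')
structured, text = split_log_entry(raw)
print(structured)  # {'user_id': 'u123', 'source_ip': '10.0.0.5', 'event_code': 'FILE_READ'}
print(text)        # Permission denied /etc/shadow
```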

The system handles this by applying two different encoding strategies in parallel:

- Ordinal encodings: a technique that maps each value of a categorical field (like an event type label) to an integer code a model can process

- STM (Sentence Transformer Model) embeddings: a technique borrowed from natural language processing (NLP) that converts free-text fields into dense vectors capturing their semantic meaning

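The two encodings can be sketched in plain Python as follows. This is an illustration under stated assumptions, not Microsoft's implementation. `fit_ordinal_encoder`, `ordinal_encode`, and `text_embedding` are hypothetical helpers. `text_embedding` substitutes a hashed bag-of-words vector so the example runs with only the standard library. A real sentence transformer, such as one loaded through the `sentence-transformers` library with `SentenceTransformer("all-MiniLM-L6-v2").encode(texts)` (an example model choice, not one named in the patent), would produce vectors that reflect meaning rather than shared words.

```python
import hashlib
import math


def fit_ordinal_encoder(values):
    """Assign each distinct category an integer code (sorted order, from 0)."""
    return {v: i for i, v in enumerate(sorted(set(values)))}


def ordinal_encode(value, mapping):
    """Look up a category's code; categories unseen during fitting get -1."""
    return mapping.get(value, -1)


def text_embedding(text, dim=16):
    """Stand-in for a sentence-transformer model: a hashed bag-of-words vector.

    Each lowercased token is hashed into one of `dim` buckets and the counts
    are L2-normalised. Unlike a real STM, this reflects only shared words,
    not semantic similarity.
    """
    vec = [0.0] * dim
    for token in text.lower().split():
        digest = hashlib.sha256(token.encode("utf-8")).digest()
        vec[int.from_bytes(digest[:4], "little") % dim] += 1.0
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else vec


mapping = fit_ordinal_encoder(["login_success", "login_failure", "file_read", "login_success"])
print(mapping)                                   # {'file_read': 0, 'login_failure': 1, 'login_success': 2}
print(ordinal_encode("login_failure", mapping))  # 1
vec = text_embedding("Failed password for admin from 10.0.0.5")
print(len(vec))                                  # 16
```

Reserving -1 for unseen categories is only one option. The article does not say how the patented system handles event types it has never seen.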
These two representations are merged into a single hybrid embedding per log entry, which a downstream machine learning model then scans for anomalies — patterns that deviate significantly from normal behavior.
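The merge-and-scan step might look something like the minimal sketch below. The article says only that a downstream machine learning model flags anomalies, so the detector here is a stand-in chosen for brevity: a per-dimension z-score baseline fitted on known-normal rows, with a hypothetical threshold of 3.0. All function names are illustrative.

```python
import math


def hybrid_embedding(ordinal_codes, text_vector):
    """Concatenate ordinal-encoded fields and a text vector into one feature row."""
    return [float(c) for c in ordinal_codes] + [float(x) for x in text_vector]


def fit_baseline(rows):
    """Per-dimension mean and standard deviation over known-normal rows."""
    n, dims = len(rows), len(rows[0])
    means = [sum(r[d] for r in rows) / n for d in range(dims)]
    # A constant dimension has std 0; fall back to 1.0 to avoid dividing by zero.
    stds = [math.sqrt(sum((r[d] - means[d]) ** 2 for r in rows) / n) or 1.0
            for d in range(dims)]
    return means, stds


def anomaly_score(row, means, stds):
    """Root-mean-square z-score of a row against the baseline."""
    zs = [(x - m) / s for x, m, s in zip(row, means, stds)]
    return math.sqrt(sum(z * z for z in zs) / len(zs))


def is_anomalous(row, means, stds, threshold=3.0):
    """Flag rows whose score exceeds a (hypothetical) fixed threshold."""
    return anomaly_score(row, means, stds) > threshold


normal = [hybrid_embedding([2], [0.5, 0.5]),
          hybrid_embedding([2], [0.6, 0.4]),
          hybrid_embedding([2], [0.4, 0.6])]
means, stds = fit_baseline(normal)
print(is_anomalous(hybrid_embedding([2], [0.5, 0.5]), means, stds))  # False
print(is_anomalous(hybrid_embedding([1], [1.0, 0.0]), means, stds))  # True
```

A production system would more likely use a trained detector such as an isolation forest or an autoencoder. The z-score baseline simply keeps the idea of "distance from normal behavior" visible in a few lines.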

When an anomaly is detected, the system dynamically constructs a prompt (a structured set of instructions) and passes it to a generative AI model, for instance something in the GPT family. The GenAI model returns a plain-language summary of what the anomaly looks like and why it's suspicious. That summary is routed to a security mitigation agent, which could be an automated remediation system or a human analyst responsible for taking corrective action.
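Here is a minimal sketch of the prompt-and-route step. It assumes the generative model and the mitigation agent are both exposed as plain callables. `Anomaly`, `build_prompt`, `summarize_and_route`, and the prompt wording are all hypothetical. `generate` stands in for whatever GenAI API the real system calls and is faked here so the example runs offline.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Anomaly:
    """Hypothetical record for one flagged log entry."""
    entry_id: str
    score: float
    fields: Dict[str, str] = field(default_factory=dict)


def build_prompt(anomaly: Anomaly, context_lines: List[str]) -> str:
    """Assemble instructions, the flagged entry's fields, and nearby raw log lines."""
    field_text = "\n".join(f"- {k}: {v}" for k, v in sorted(anomaly.fields.items()))
    context = "\n".join(context_lines)
    return (
        "You are assisting a security analyst. In plain English, summarize what "
        "this anomalous log entry shows and why it may be suspicious.\n\n"
        f"Entry ID: {anomaly.entry_id}\n"
        f"Anomaly score: {anomaly.score:.2f}\n"
        f"Fields:\n{field_text}\n\n"
        f"Surrounding log lines:\n{context}\n"
    )


def summarize_and_route(anomaly: Anomaly, context_lines: List[str],
                        generate: Callable[[str], str],
                        agent: Callable[[str, Anomaly], None]) -> str:
    """Send the prompt to a generative model, then hand the summary to an agent."""
    summary = generate(build_prompt(anomaly, context_lines))
    agent(summary, anomaly)
    return summary


def fake_model(prompt: str) -> str:
    # Offline stand-in for a real GenAI call.
    return "Repeated failed logins for admin from 10.0.0.5."


received = []
summary = summarize_and_route(
    Anomaly("evt-42", 5.03, {"user_id": "admin", "source_ip": "10.0.0.5"}),
    ["Failed password for admin from 10.0.0.5"] * 3,
    generate=fake_model,
    agent=lambda s, a: received.append((a.entry_id, s)),
)
print(received)  # [('evt-42', 'Repeated failed logins for admin from 10.0.0.5.')]
```

Passing `generate` and `agent` in as functions keeps the sketch neutral about whether the agent triggers automated remediation or opens a ticket for a human analyst, both of which the article allows.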

What this means for enterprise security operations teams

Security operations centers (SOCs) are drowning in alerts — a well-documented industry problem called "alert fatigue." Analysts miss real threats because they're buried under thousands of low-quality notifications. A system that not only detects anomalies but explains them in plain language could meaningfully reduce the cognitive load on your security team.

This fits squarely into Microsoft's broader push to embed AI into its Sentinel and Defender security product lines. The patent's mention of a "security mitigation agent" that receives the output summary suggests Microsoft is positioning this as a component in a larger agentic security workflow — where AI doesn't just flag problems but initiates responses.

This isn't a flashy moonshot — it's a practical, well-scoped engineering patent for a real and painful problem in enterprise security. The hybrid embedding approach for mixed-format log data is the genuinely interesting technical contribution here; the GenAI summarization layer is almost table stakes at this point. If Microsoft ships this inside Sentinel or Copilot for Security, it could quietly become one of the more useful AI features in the enterprise security stack.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.