Microsoft Patents NVMe Command Batching for Faster Network Storage

Microsoft has applied for a patent on a way to make cloud storage behave more like local storage: a hardware controller that bundles commands together and routes them intelligently before they ever hit the network.

What Microsoft's storage acceleration trick actually does

Imagine your app keeps asking for files stored on a remote server — one small request at a time. Each round trip takes time, and those delays add up fast, especially in a data center where thousands of apps are doing the same thing.

Microsoft's patent describes a smarter approach: a hardware controller that sits between your app and the remote storage, collects a sequence of related storage commands, and fires them all off as a single bundled package. Think of it like batching your grocery list instead of making a separate trip to the store for each item.
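To put rough numbers on the grocery-list idea, here is a back-of-the-envelope sketch. The figures (8 commands, a 0.5 ms round trip) are made-up assumptions for illustration, not values from the patent, and the model counts only network wait time.

```python
def total_latency_ms(num_commands: int, round_trip_ms: float, batched: bool) -> float:
    """Network wait only; ignores processing time on either end (a simplifying assumption)."""
    # Unbatched: one round trip per command. Batched: one round trip for the whole bundle.
    round_trips = min(1, num_commands) if batched else num_commands
    return round_trips * round_trip_ms


# Hypothetical workload: 8 small requests over a link with a 0.5 ms round trip.
unbatched = total_latency_ms(8, 0.5, batched=False)
fused = total_latency_ms(8, 0.5, batched=True)
print(f"one at a time: {unbatched} ms, bundled: {fused} ms")
```

Under these assumptions the wait scales with the number of round trips rather than the number of commands, which is the whole appeal of bundling.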

The system also handles the routing side of things. Before sending anything, it checks in with a broker — a kind of traffic coordinator — to find out exactly which storage node it should talk to. That way, each request lands directly where it needs to go, skipping unnecessary middlemen.

How the NVMe controller fuses and routes storage commands

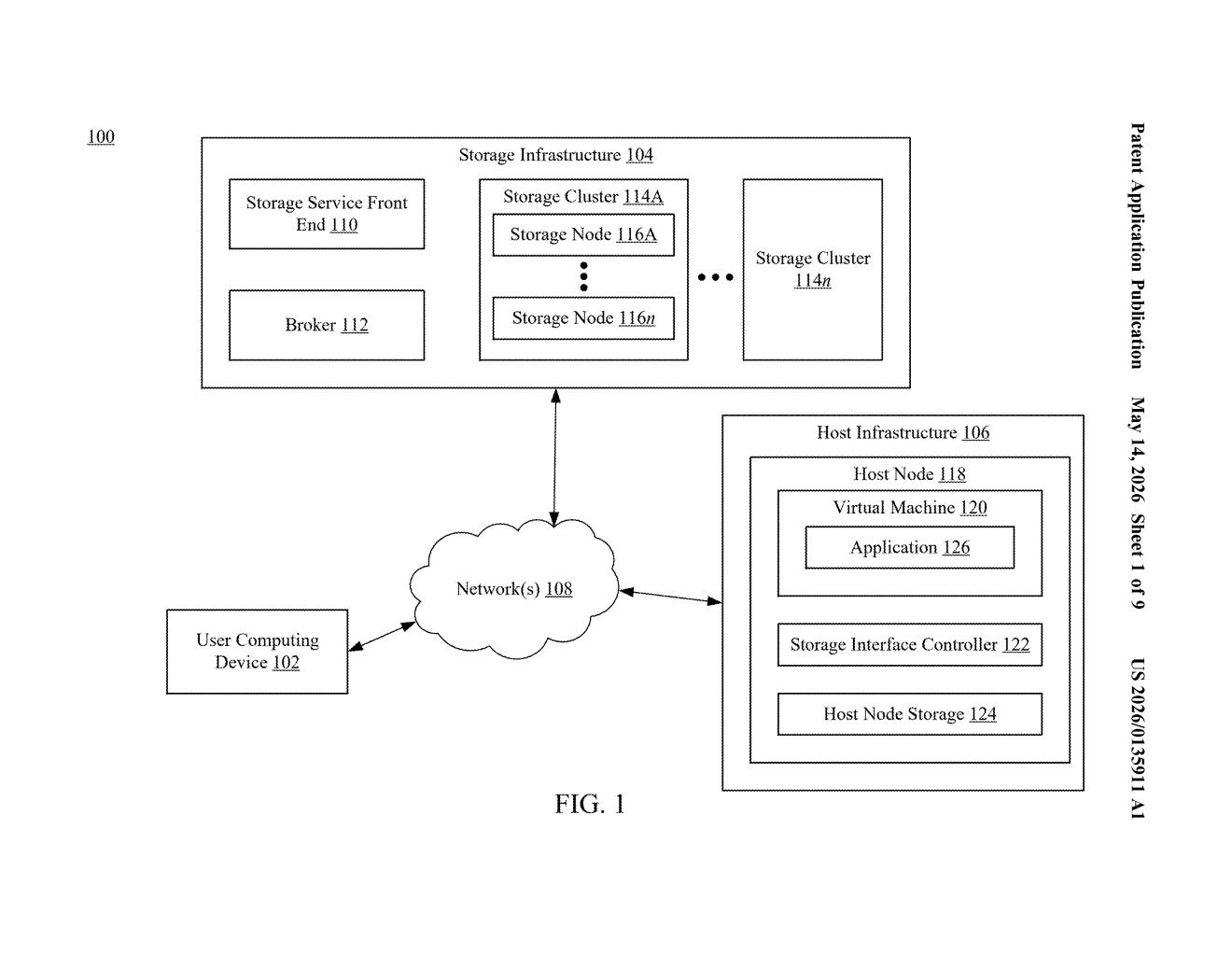

The patent describes a storage interface controller, specifically an NVMe controller, that has been extended to work with remote, network-attached storage systems instead of just local drives. (NVMe, or Non-Volatile Memory Express, is the modern protocol used to talk to fast flash storage.)

Here's how the flow works:

- An application sends a series of commands, each tagged to indicate its position: first, intermediate, or last in the sequence.

- The controller collects these and generates a single fused command — one bundled payload preserving the original order.

- Before transmitting, the controller contacts a broker at a known endpoint (think: a directory service for storage nodes) using a session token to authenticate the connection.

- The broker responds with the specific network address of the target storage node, which maps directly to a storage object.

- The fused command is then sent straight to that node.
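The steps above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the patent's implementation: the class names (`Broker`, `Controller`, `FusedCommand`), the opcode strings, the token, and the node address are all hypothetical, and the real logic would live in controller hardware rather than software.

```python
from dataclasses import dataclass
from enum import Enum


class Position(Enum):
    FIRST = "first"
    INTERMEDIATE = "intermediate"
    LAST = "last"


@dataclass(frozen=True)
class Command:
    opcode: str          # hypothetical label, e.g. "read-header"
    position: Position   # tag indicating where the command sits in the sequence


@dataclass(frozen=True)
class FusedCommand:
    opcodes: tuple       # original commands, in their original order


class Broker:
    """Hypothetical in-memory stand-in for the broker at a known endpoint."""

    def __init__(self, valid_tokens, object_to_node):
        self._valid_tokens = set(valid_tokens)
        self._object_to_node = dict(object_to_node)

    def resolve(self, session_token, storage_object):
        # Authenticate with the session token, then return the node's address.
        if session_token not in self._valid_tokens:
            raise PermissionError("unknown session token")
        return self._object_to_node[storage_object]


class Controller:
    """Buffers tagged commands and fuses them once the LAST one arrives."""

    def __init__(self, broker, session_token):
        self._broker = broker
        self._session_token = session_token
        self._pending = []

    def submit(self, command, storage_object):
        if not self._pending and command.position is not Position.FIRST:
            raise ValueError("sequence must start with a FIRST command")
        if self._pending and command.position is Position.FIRST:
            raise ValueError("previous sequence is not finished")
        self._pending.append(command)
        if command.position is not Position.LAST:
            return None  # keep collecting
        fused = FusedCommand(tuple(c.opcode for c in self._pending))
        self._pending = []
        node = self._broker.resolve(self._session_token, storage_object)
        return node, fused  # where to send, and the single bundled payload


broker = Broker({"token-123"}, {"object-a": "10.0.0.7:4420"})
controller = Controller(broker, "token-123")
results = [
    controller.submit(Command("read-header", Position.FIRST), "object-a"),
    controller.submit(Command("read-index", Position.INTERMEDIATE), "object-a"),
    controller.submit(Command("read-data", Position.LAST), "object-a"),
]
print(results[-1])
```

Only the third call returns anything: the first two are buffered, and the last one triggers both the fusion and the broker lookup, so a single payload goes to a single resolved address.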

The key insight is moving the batching and routing logic into the hardware controller layer rather than the application or OS. That means less software overhead and potentially much lower latency for workloads that hammer remote storage repeatedly.

What this means for cloud and enterprise storage latency

For cloud infrastructure and enterprise data centers, storage latency is one of the most stubborn bottlenecks. If Microsoft can push NVMe-style command fusion down into a dedicated controller chip, workloads like databases, virtual machines, and AI training jobs, which often issue large volumes of small, related storage requests, could see lower latency and higher throughput without changing a line of application code.

This fits squarely into Microsoft's Azure infrastructure strategy. Azure runs massive fleets of storage nodes, and any hardware-level acceleration that reduces per-request overhead at scale translates directly into cost savings and competitive performance benchmarks. It also aligns with the industry-wide push toward computational storage — offloading smarts from the CPU to purpose-built controllers closer to the data path.

This is infrastructure plumbing, not a flashy AI patent, but it's the kind of low-level optimization that quietly shapes how a cloud platform performs. The NVMe-over-network angle is real engineering work, and the command-fusion mechanism is a genuinely clever way to cut round-trip overhead. Worth paying attention to if you follow data center hardware.

Editorial commentary on a publicly published patent application. Not legal advice.