Nvidia Patents an AI System That Maps Traffic Lights to Specific Lanes

Knowing a traffic light is red is only half the problem for a self-driving car — it also needs to know which lane that red light actually applies to. Nvidia's new patent tackles exactly that, using a machine learning model trained entirely on geometry and rules, not camera footage.

How Nvidia's lane-to-light AI works without camera images

Imagine you're at a complex intersection with five lanes and three traffic lights hanging overhead. As a human driver, you've learned through experience — and a lot of context clues — which light governs your lane. For a self-driving car, that mapping is surprisingly hard to get right, especially in unusual or first-time road configurations.

Nvidia's patent describes an AI system that figures out which traffic light belongs to which lane. The clever part: it doesn't rely on camera images at all. Instead, it uses geometric and semantic data — things like where a traffic light is physically positioned and what type of road lane it's near — to make the association.

The model is trained on synthetically generated data based on real traffic regulations about where lights are supposed to be placed. That means Nvidia can build a large, accurate training dataset without needing to manually label thousands of real-world intersection photos.

How the ML model scores light-to-lane associations

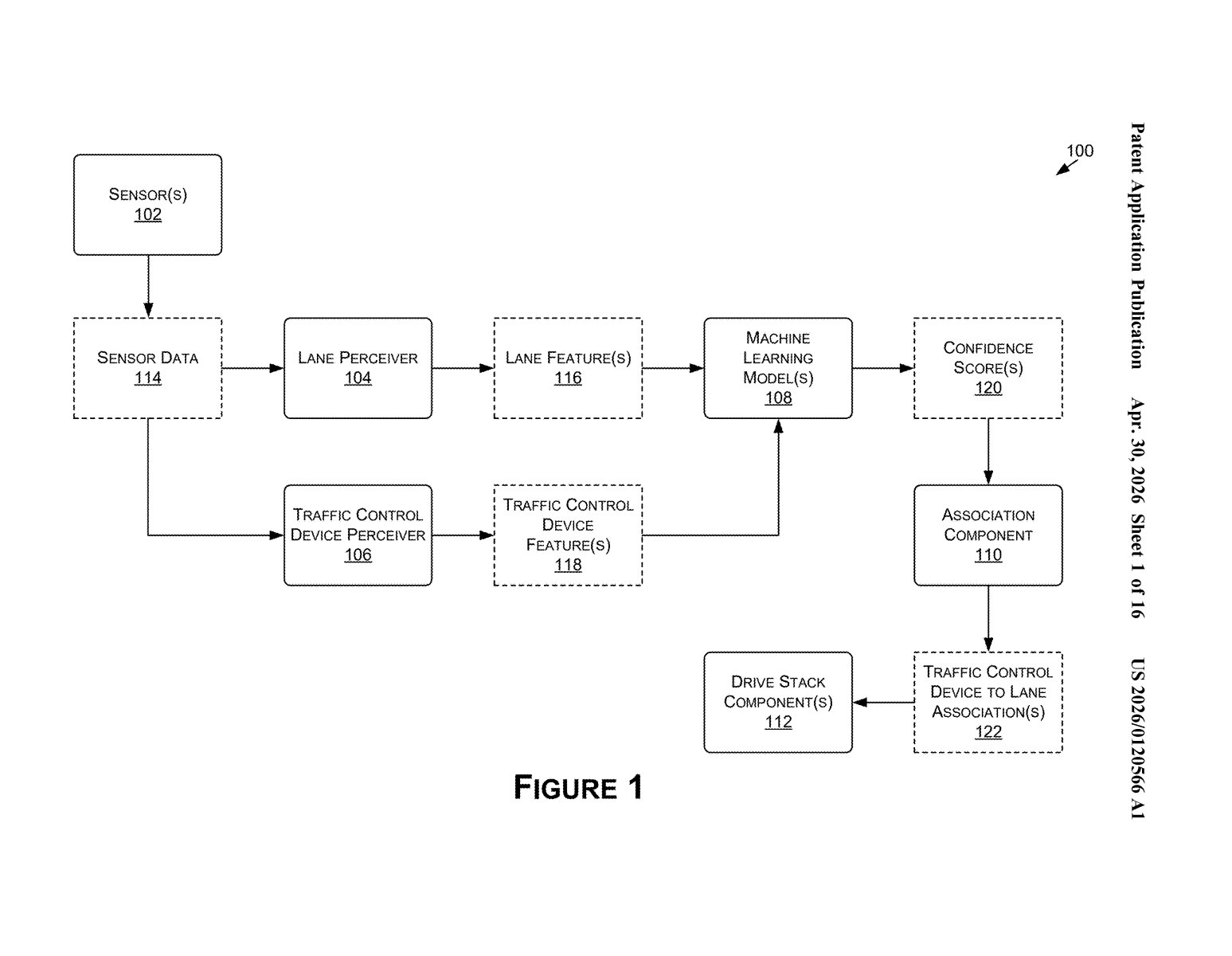

At its core, the patent describes a machine learning pipeline that takes two types of input and scores how likely each traffic light is to govern each lane.

The first input is lane segment features — geometric information (like the shape, direction, and position of each lane) and semantic information (like whether it's a turn lane or a through lane). The second input is traffic light features — where the light is physically located, what direction it faces, and what kind of signal it is.

The ML model then outputs a set of confidence scores (essentially, probability estimates for each possible light-to-lane pairing). The system picks the highest-confidence associations and passes them to the vehicle's drive stack — the software layer that actually controls acceleration, braking, and steering.
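To make the input-to-output flow concrete, here is a minimal Python sketch. It is not Nvidia's model: the `LaneSegment` and `TrafficLight` fields, the hand-written `pair_score` function, and its weights are all hypothetical stand-ins for whatever the trained network actually learns. It only illustrates the shape of the pipeline described above: features in, one confidence score per light-lane pair, highest score wins.

```python
import math
from dataclasses import dataclass


@dataclass
class LaneSegment:
    lane_id: str
    x: float            # lateral position of the lane centre at the stop line (m)
    heading_deg: float  # direction of travel
    kind: str           # semantic type, e.g. "through" or "left_turn"


@dataclass
class TrafficLight:
    light_id: str
    x: float            # lateral position of the light (m)
    facing_deg: float   # direction the light faces
    kind: str           # signal type, e.g. "circular" or "left_arrow"


def pair_score(lane: LaneSegment, light: TrafficLight) -> float:
    """Hypothetical stand-in for the learned model: higher means more plausible."""
    lateral_gap = abs(lane.x - light.x)
    # A governing light should face oncoming traffic (about 180 degrees from travel).
    facing_error = abs((light.facing_deg - lane.heading_deg) % 360 - 180)
    # Assumed semantic rule: arrow signals go with turn lanes, circular with the rest.
    type_match = 1.0 if (lane.kind == "left_turn") == (light.kind == "left_arrow") else 0.0
    return -0.5 * lateral_gap - 0.05 * facing_error + 2.0 * type_match


def associate(lanes, lights):
    """Return {lane_id: (best_light_id, confidence)} using a softmax over lights."""
    result = {}
    for lane in lanes:
        scores = [pair_score(lane, light) for light in lights]
        top = max(scores)
        exps = [math.exp(s - top) for s in scores]  # subtract max for stability
        total = sum(exps)
        probs = [e / total for e in exps]
        best = max(range(len(lights)), key=probs.__getitem__)
        result[lane.lane_id] = (lights[best].light_id, probs[best])
    return result


lanes = [
    LaneSegment("left", x=-3.5, heading_deg=90.0, kind="left_turn"),
    LaneSegment("through", x=0.0, heading_deg=90.0, kind="through"),
]
lights = [
    TrafficLight("arrow", x=-3.0, facing_deg=270.0, kind="left_arrow"),
    TrafficLight("main", x=0.5, facing_deg=270.0, kind="circular"),
]
pairs = associate(lanes, lights)
```

In this toy intersection the left-turn lane pairs with the arrow signal and the through lane with the circular one. In a real system the chosen pairs, along with their confidences, would be what gets handed to the drive stack.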

A key design choice here is the use of synthetically generated training data derived from traffic placement regulations. Because real-world ground-truth labels for light-lane associations are expensive to collect, Nvidia sidesteps that problem by generating artificial but regulation-compliant scenarios. The rule-based ground truth teaches the model what a correct association looks like, while the ML layer handles the messiness of real-world variation.
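The synthetic-data idea can be sketched in a few lines of Python. The placement rule used here (each light is mounted within one metre of the centre of the lane it governs) and the constants `LANE_WIDTH_M` and `MAX_OFFSET_M` are invented for illustration; the article does not say which regulations or generator the patent relies on. The point is only that when a rule dictates where lights go, the correct label comes for free.

```python
import random

LANE_WIDTH_M = 3.5   # assumed lane width, for illustration only
MAX_OFFSET_M = 1.0   # hypothetical rule: light sits within 1 m of its lane centre


def make_scenario(rng: random.Random, n_lanes: int) -> list[dict]:
    """Build one synthetic intersection and label every lane-light pair."""
    lanes = [{"lane_id": i, "x": i * LANE_WIDTH_M} for i in range(n_lanes)]
    # Place one light per lane according to the rule, with random jitter.
    lights = [
        {"light_id": lane["lane_id"],
         "x": lane["x"] + rng.uniform(-MAX_OFFSET_M, MAX_OFFSET_M)}
        for lane in lanes
    ]
    examples = []
    for lane in lanes:
        for light in lights:
            examples.append({
                "lateral_gap": abs(lane["x"] - light["x"]),
                # Ground truth follows from how the scenario was constructed.
                "label": int(lane["lane_id"] == light["light_id"]),
            })
    return examples


rng = random.Random(0)  # fixed seed so the dataset is reproducible
dataset = [ex for _ in range(100) for ex in make_scenario(rng, rng.randint(2, 5))]
positives = [ex for ex in dataset if ex["label"] == 1]
negatives = [ex for ex in dataset if ex["label"] == 0]
```

Every example is labeled without a human looking at a single photo, which is the scaling advantage the patent is after. A production generator would presumably vary far more than lateral offset, such as lane types, light orientation, and intersection geometry.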

What this means for autonomous driving reliability

For autonomous vehicles, misidentifying which traffic light applies to your lane isn't a minor inconvenience — it's a safety-critical failure. A car that brakes for a light meant for the adjacent left-turn lane, or worse, proceeds through a light it thinks is green but isn't, creates real danger. This system is aimed squarely at reducing that class of error.

The image-free approach is also practically significant. Camera-based perception can degrade in bad weather, glare, or at night. By grounding lane-light associations in geometry and map data rather than live image feeds, Nvidia's approach adds a layer to the decision-making stack that is less sensitive to weather and lighting, which is exactly the kind of redundancy that mature autonomous driving systems need.

This is unglamorous but genuinely important work. The light-to-lane association problem is one of those things that sounds trivial until you're actually building a self-driving system and realize intersections are wildly inconsistent. The synthetic training data angle is smart engineering: it lets Nvidia scale without a massive labeling operation. This reads like something heading directly into the next iteration of Nvidia's Drive platform.


Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.