Nvidia Patents a Three-Agent AI Pipeline for Automatic Code Modification

Instead of asking one AI model to rewrite your code from scratch, Nvidia's new patent proposes splitting the job across three specialized models — one to spot problems, one to plan fixes, and one to actually apply them.

How Nvidia's three-AI code editor actually works

Imagine handing a messy codebase to a single AI assistant and asking it to clean everything up. That works, sometimes — but a single model trying to identify bugs, figure out a fix strategy, and rewrite the code all at once is juggling a lot.

Nvidia's patent describes a smarter division of labor. A first AI model reads your code and flags what needs to change. A second model takes that list and builds a step-by-step plan for how to apply the changes. Then a third model actually rewrites the code, guided by that plan.

Think of it as the difference between one person doing everything and a team in which a reviewer, a project manager, and a developer each handle their specialty. Each stage stays focused, which Nvidia is betting leads to better results than a single model trying to do it all.

How the three language models divide the work

The patent describes a multi-agent language model pipeline specifically designed for automated code modification — things like bug fixing, refactoring, or optimization.

The three-model architecture works like this:

- Model 1 (the identifier): Analyzes the input source code and produces a list of suggested modifications — what needs to change and why.

- Model 2 (the planner): Takes both the original code and the modification list, then generates a structured plan — essentially a roadmap for how to apply the changes in sequence without breaking things.

- Model 3 (the editor): Receives the original code plus the plan, and produces the final modified code.
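
The data flow in the three bullets above can be sketched in Python. This is a minimal illustration, not the patent's implementation: the `LanguageModel` type, the prompt wording, and the `run_pipeline` function are all assumptions made for this example, and the toy models are plain functions standing in for real language model calls.

```python
from typing import Callable

# Hypothetical stand-in for any text-in, text-out language model call.
LanguageModel = Callable[[str], str]


def run_pipeline(
    source: str,
    identifier: LanguageModel,
    planner: LanguageModel,
    editor: LanguageModel,
) -> str:
    """Chain three models: identify changes, plan them, then apply them."""
    # Model 1 sees only the original code.
    modifications = identifier(f"List needed changes for:\n{source}")
    # Model 2 sees the original code plus the modification list.
    plan = planner(
        f"Code:\n{source}\n\nChanges:\n{modifications}\n\nWrite an ordered plan."
    )
    # Model 3 sees the original code plus the plan.
    return editor(f"Code:\n{source}\n\nPlan:\n{plan}\n\nReturn the modified code.")


# Toy models so the sketch runs without any external service.
def toy_identifier(prompt: str) -> str:
    return "- rename variable x to total"


def toy_planner(prompt: str) -> str:
    return "1. Replace every use of x with total"


def toy_editor(prompt: str) -> str:
    # Pull the code out from between "Code:\n" and "\n\nPlan:" and apply the rename.
    code = prompt.split("Code:\n", 1)[1].split("\n\nPlan:", 1)[0]
    return code.replace("x", "total")


result = run_pipeline("x = 1\nprint(x)", toy_identifier, toy_planner, toy_editor)
```

Passing the models in as plain callables keeps each stage swappable, so the same skeleton would work whether the three roles are filled by three different models or by one model given three different prompts.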

The key insight is task decomposition: breaking a complex operation into smaller, more tractable subtasks, each handled by a model specialized for it. In large language model research, this is a known technique for improving reliability, on the premise that a model constrained to one job tends to make fewer errors than one trying to solve the whole problem end-to-end.

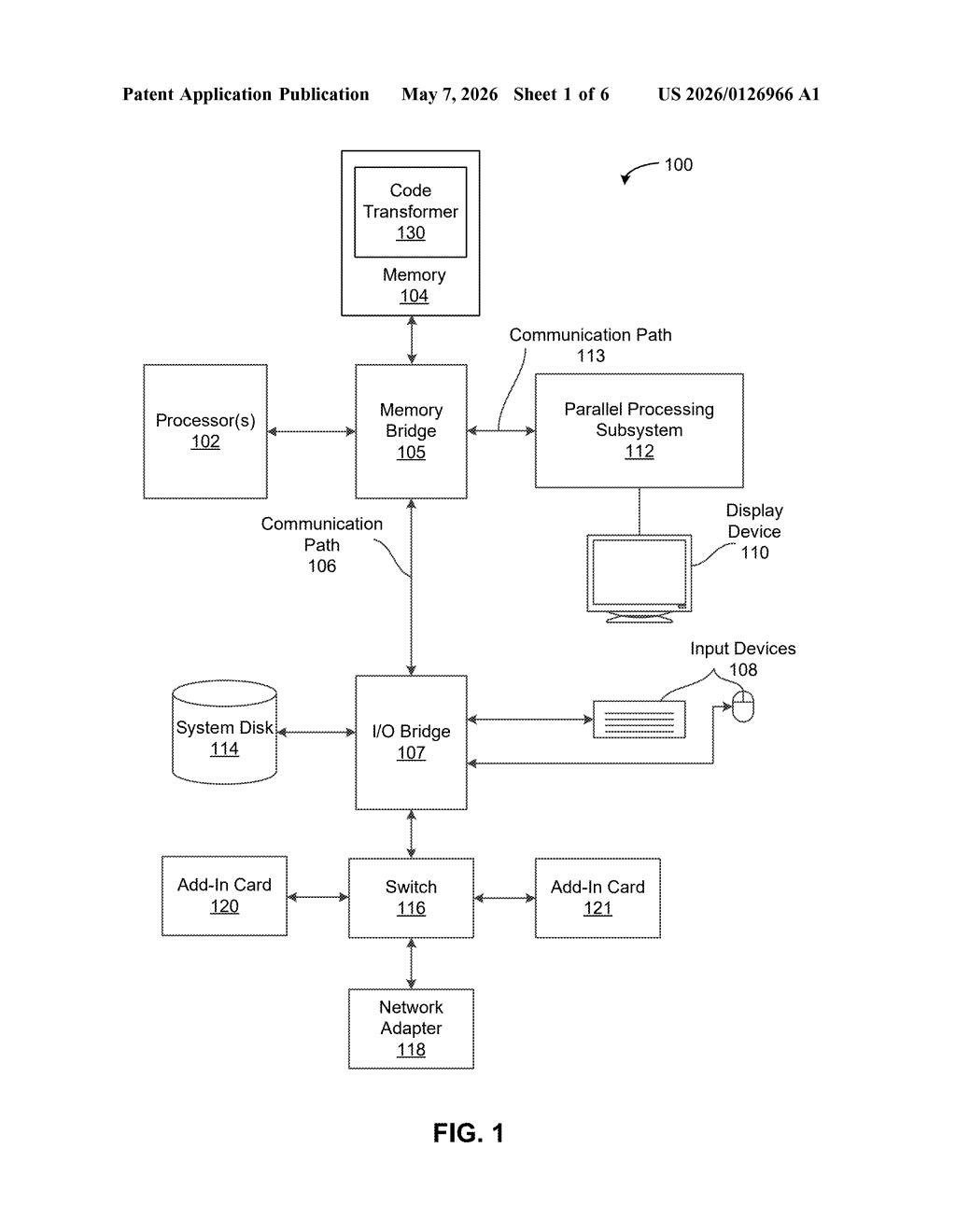

The patent is classified under USPC 717/104, a subclass of class 717, which covers software development, installation, and management. The inventors are from Nvidia's research side, suggesting this is closer to applied ML infrastructure than a product-ready feature.

What this means for AI-assisted software development

For developers, the practical pitch is reliability. Single-model code rewrites can go sideways because the model loses track of context or makes inconsistent changes across a large file. By separating the decision about what to change, the plan for how to change it, and the edit itself, Nvidia's approach tries to make each step more predictable.
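
One way to picture that predictability: when the stages hand each other explicit intermediate outputs, those outputs can be inspected before the next stage runs. The sketch below is an assumption for illustration only. The patent as summarized here does not describe a validation step, and the `Modification` and `PlanStep` types and the `unplanned` helper are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Modification:
    """Hypothetical record for one change suggested by the first model."""
    id: str
    description: str


@dataclass(frozen=True)
class PlanStep:
    """Hypothetical record for one step in the second model's plan."""
    order: int
    modification_id: str
    action: str


def unplanned(mods: list[Modification], plan: list[PlanStep]) -> list[str]:
    """Return ids of suggested modifications that no plan step addresses."""
    covered = {step.modification_id for step in plan}
    return [m.id for m in mods if m.id not in covered]


mods = [
    Modification("M1", "close the file handle after reading"),
    Modification("M2", "rename ambiguous variable d"),
]
plan = [PlanStep(1, "M1", "wrap the read in a with-block")]

# M2 was suggested but never planned, so the gap is visible before any edit is made.
missing = unplanned(mods, plan)
```

A single end-to-end rewrite offers no equivalent checkpoint, because the decision about what to change and the edit itself happen in the same step.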

This also fits neatly into Nvidia's broader push into AI software tooling. The company isn't just selling GPUs to run AI; it's increasingly building the AI workflows that run on those GPUs. A code-modification agent pipeline would be a natural fit for developer-facing products like NIM microservices or future updates to its AI coding tools.

The three-agent split is a sensible engineering approach with real precedent in multi-agent AI research, and it's worth watching because it comes from Nvidia, a company with the compute and model-training resources to make this work at scale. That said, the core idea (chaining specialized models together for complex tasks) is well-trodden territory, so the defensibility of this patent will depend heavily on implementation details the abstract doesn't fully reveal.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.