Apple Patents Eye-Tracking Filter That Corrects Gaze Data at Lens Boundaries

When you wear a headset and glance toward the edge of a lens, the eye tracker can report a gaze point on a part of the display you can't actually see. A recently published Apple patent application describes a filter that corrects it.

What Apple's lens-boundary gaze correction actually does

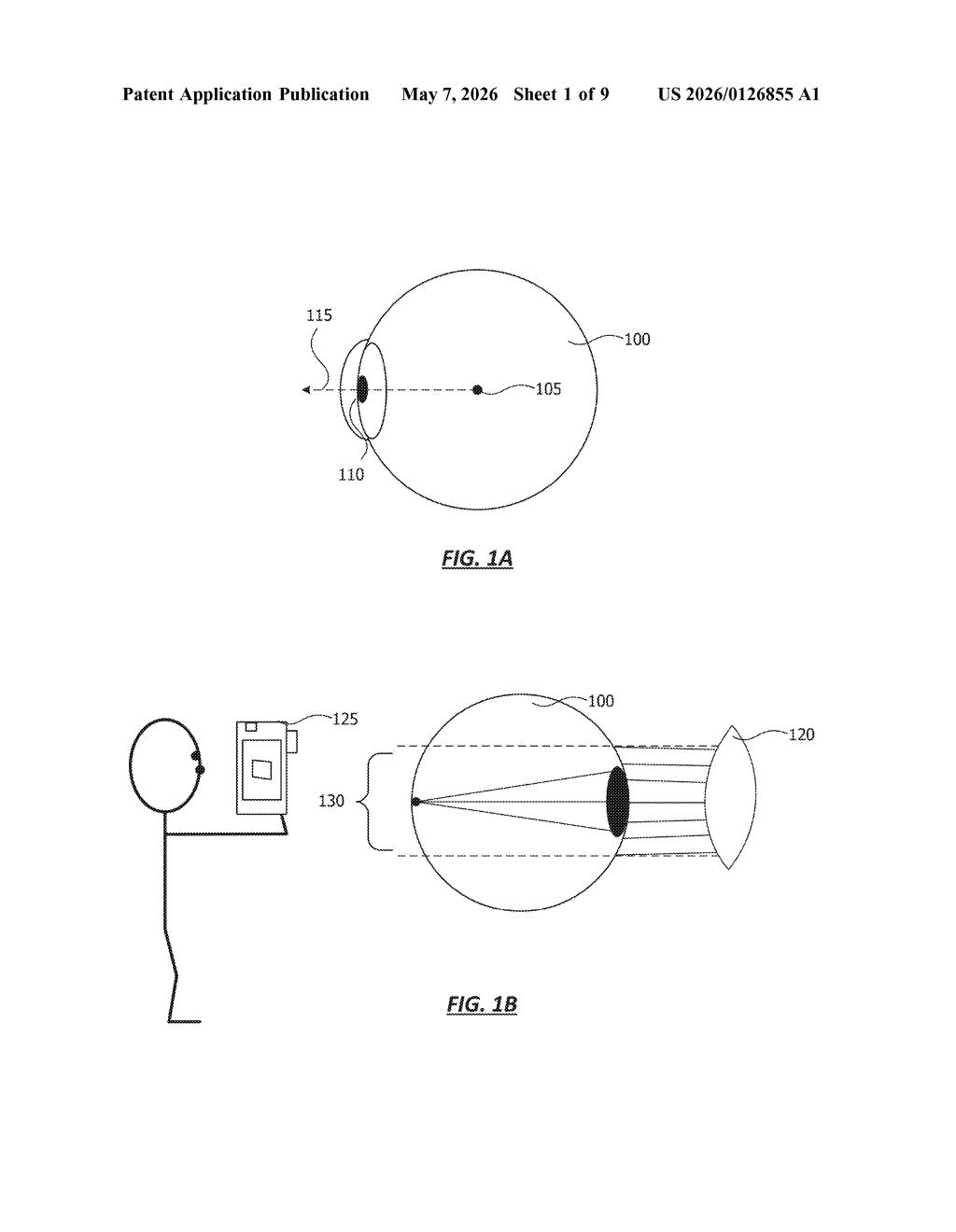

Imagine you're wearing Apple Vision Pro and you glance toward the very edge of your field of view. Your eye might be pointing at a spot on the screen that's actually outside the visible area of the lens — think of it like trying to read text that's behind the frame of your glasses. The eye tracker dutifully records that gaze position, but since you can't actually see that pixel, the data is misleading.

Apple's patent describes a system that catches these out-of-bounds gaze readings and replaces them with the nearest valid point inside the lens's visible region. Instead of throwing away the data or freezing the tracker, it finds the closest pixel you could see and recalculates your gaze angle from there.

The same logic applies to pupil position: if your pupil drifts outside a defined bounding box around the lens, the system substitutes a corrected position back inside that box. The result is smoother, more reliable eye tracking even when your eyes are near the edges of what the optics can capture.

How Apple snaps out-of-bounds gaze angles back into view

The patent describes two closely related correction mechanisms, both aimed at keeping eye-tracking data valid when the raw readings fall outside what the lens physically allows.

Gaze-direction correction: The system continuously tracks where your gaze is pointed on the display. If the targeted pixel lands outside the visibility region — the portion of the screen actually visible through the lens — it doesn't discard the data. Instead, it identifies a replacement pixel inside the visible region that is geometrically closest to the original gaze target, then recomputes a corrected gaze angle using your eye position and the new pixel's coordinates. This corrected angle is then fed into downstream eye-tracking functions.
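
To make the gaze-direction step concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the method from the patent: it models the visibility region as a circle on the display plane, works in millimetres, and treats the eye as sitting at a fixed perpendicular distance from the display. The names `Point`, `nearest_visible_point`, `gaze_angles`, and `corrected_gaze` are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Point:
    """A position on the display plane, in millimetres (assumed units)."""
    x: float
    y: float


def nearest_visible_point(target: Point, center: Point, radius: float) -> Point:
    """Return the point inside the visibility region closest to `target`.

    Assumption: the region is a circle. The article does not specify
    the actual shape used in the patent.
    """
    dx = target.x - center.x
    dy = target.y - center.y
    dist = math.hypot(dx, dy)
    if dist <= radius:
        return target  # already visible, nothing to correct
    scale = radius / dist  # pull the target back onto the circle's edge
    return Point(center.x + dx * scale, center.y + dy * scale)


def gaze_angles(eye: Point, eye_distance: float, point: Point) -> tuple[float, float]:
    """Yaw and pitch in degrees from the eye to a point on the display.

    Assumption: `eye` is the eye's lateral offset and `eye_distance` is
    its perpendicular distance from the display plane.
    """
    yaw = math.degrees(math.atan2(point.x - eye.x, eye_distance))
    pitch = math.degrees(math.atan2(point.y - eye.y, eye_distance))
    return yaw, pitch


def corrected_gaze(eye: Point, eye_distance: float, target: Point,
                   center: Point, radius: float) -> tuple[Point, tuple[float, float]]:
    """Swap in the nearest visible point, then recompute the gaze angle."""
    visible = nearest_visible_point(target, center, radius)
    return visible, gaze_angles(eye, eye_distance, visible)
```

With a region of radius 25 centered at the origin, a gaze target at (30, 40) is pulled back to (15, 20), and the angle is recomputed from the eye to that point rather than to the original target.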

Pupil-position correction: A parallel mechanism works at the pupil level. The system defines a bounding box around each lens — essentially a rectangle representing the valid range of pupil positions the optics can meaningfully read. If a detected pupil position falls outside that box (say, because the user shifted the headset or glanced hard to one side), it substitutes the nearest valid position within the box before continuing.
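
The pupil-level filter can be sketched the same way. This is a hedged illustration that assumes the bounding box is an axis-aligned rectangle in lens-centered coordinates; `BoundingBox` and `clamp_pupil` are hypothetical names, and the numbers in the usage note are made up. For a rectangle like this, clamping each axis independently gives the nearest valid position.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BoundingBox:
    """Valid pupil range around one lens (assumed axis-aligned)."""
    x_min: float
    x_max: float
    y_min: float
    y_max: float


def clamp_pupil(x: float, y: float, box: BoundingBox) -> tuple[float, float, bool]:
    """Return the nearest position inside `box` and whether it was corrected."""
    cx = min(max(x, box.x_min), box.x_max)  # clamp horizontally
    cy = min(max(y, box.y_min), box.y_max)  # clamp vertically
    corrected = (cx, cy) != (x, y)
    return cx, cy, corrected
```

For a box spanning -5 to 5 horizontally and -4 to 4 vertically, a pupil reading at (7, 1) comes back as (5, 1) with the corrected flag set, while a reading at (0, 0) passes through unchanged.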

Together, these filters prevent bad edge-case data from propagating into:

- Foveated rendering pipelines (which sharpen only where you look)

- UI interaction logic (scroll, select, focus)

- Calibration routines that build a personal gaze model

What this means for Vision Pro eye-tracking accuracy

Eye tracking in a headset isn't just a cool feature. It's the engine behind foveated rendering, the technique that lets Vision Pro render full detail only where you're actually looking, which cuts GPU load substantially. If the gaze data is corrupted by lens-edge artifacts, the sharp region can land in the wrong place, producing visible blur or pop-in exactly where your attention is focused, which defeats the whole purpose.

For you as a user, this kind of filtering is invisible when it works — which is exactly the point. It's the difference between a headset that feels glitchy at the periphery and one that just works. As Apple pushes Vision Pro (and any future lighter successors) toward more everyday use, robust edge-case handling like this becomes foundational plumbing, not an optional polish pass.

This is classic Apple infrastructure work: not a headline feature, but the kind of careful engineering that separates a product that feels polished from one that feels prototype-y. Gaze data near lens boundaries is a known weak spot for headsets with near-eye lenses, and a clean software-side fix like this is smarter than trying to solve it purely in optics. It's worth paying attention to because it suggests Apple is hardening the eye-tracking stack, not just shipping a v1 and moving on.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.