Apple Patents a Linked Display System That Senses How Two Devices Overlap

Apple is exploring a system where two devices — think an iPad laid on top of a MacBook — can detect exactly how they're overlapping and then share their screens and inputs as one unified experience.

What Apple's overlapping linked-display mode actually does

Imagine sliding your iPad partway over your MacBook's keyboard. Instead of treating them as two separate gadgets, what if your Mac knew exactly how much of the iPad was covering it — and the two devices instantly started acting like a single, larger display?

That's the core idea in this Apple patent. When two devices are placed near or on top of each other, they enter what the patent calls a linked mode. In that mode, one device's keyboard or touchpad can control what's showing on the other device's screen, and content can be arranged across both displays based on how they're physically positioned.

To pull this off, Apple describes using capacitive sensors — the same kind of touch-sensing tech in your phone's screen — to precisely measure how two devices are overlapping. That position data drives everything: what content appears where, and which device is in charge of input.

How capacitive sensors map two overlapping Apple devices

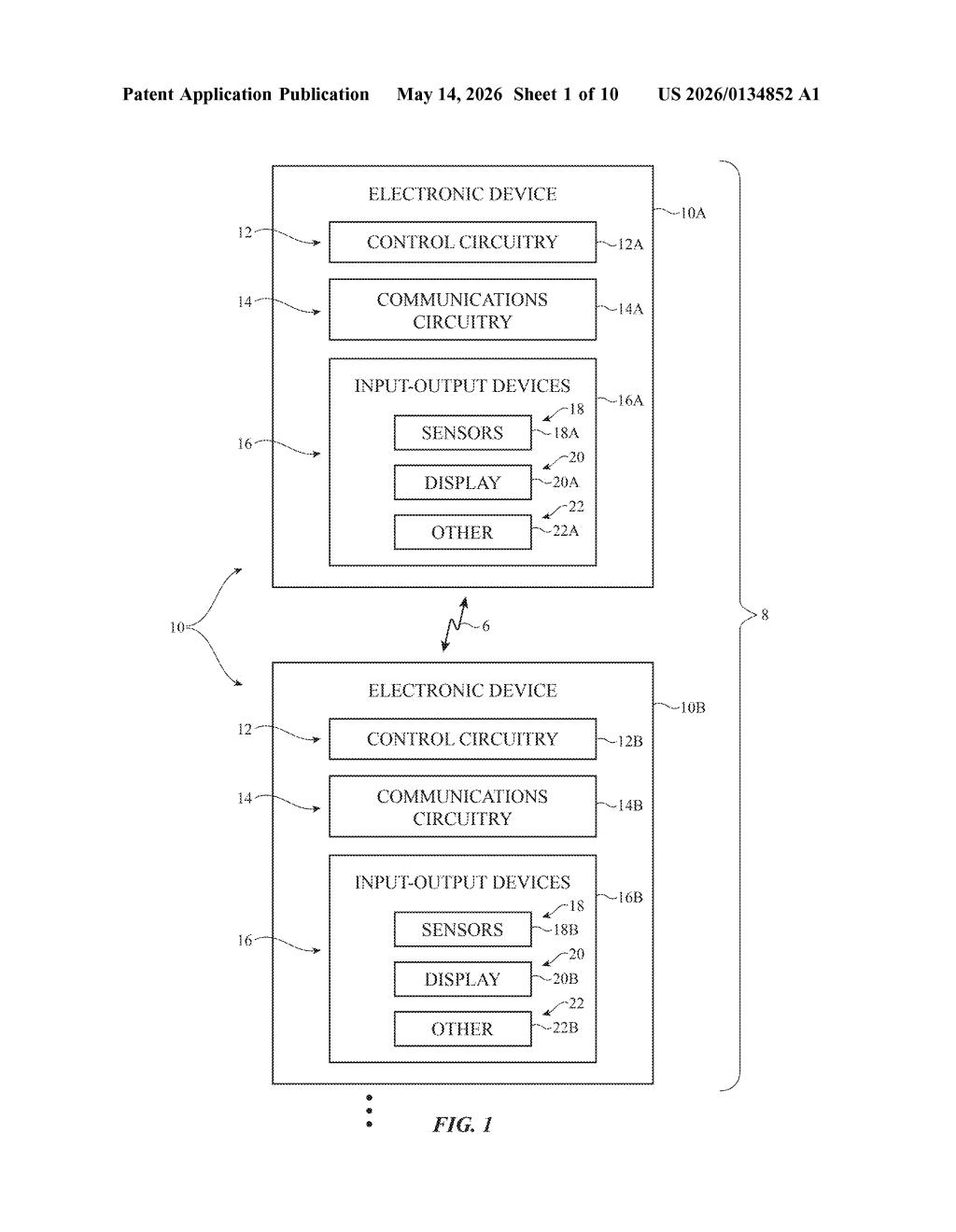

The patent describes a linked mode of operation between two separate computer systems — each with its own processor — that kicks in when the devices are placed adjacent to or overlapping one another.

Once linked, the system works like this:

- One device's processor takes on a coordinating role, sending display instructions to the other device's processor over a wireless connection.

- Input gathered on one device (keyboard, trackpad, touch) can control content rendered on the other device's screen.

- A capacitive sensor (or similar position-sensing hardware) measures the precise relative position of the two devices — how far they overlap, at what angle, and so on.

- That positional data adjusts how content is split, scaled, or continued across both displays in real time.
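
To make the last two bullets concrete, here is a minimal Python sketch of the geometry involved. It is an illustration only, not the patent's method: the `Rect` type, the shared millimetre coordinate frame, and both function names are assumptions, and the sketch ignores rotation even though the patent also mentions angle.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rect:
    """Axis-aligned device outline in a shared frame (hypothetical units: mm)."""
    x: float       # left edge
    y: float       # top edge
    width: float
    height: float

    @property
    def area(self) -> float:
        return self.width * self.height


def overlap(a: Rect, b: Rect) -> Optional[Rect]:
    """Return the rectangle where a and b overlap, or None if they do not."""
    left = max(a.x, b.x)
    top = max(a.y, b.y)
    right = min(a.x + a.width, b.x + b.width)
    bottom = min(a.y + a.height, b.y + b.height)
    if right <= left or bottom <= top:
        return None
    return Rect(left, top, right - left, bottom - top)


def covered_fraction(lower: Rect, upper: Rect) -> float:
    """Fraction of the lower device's outline hidden by the upper device."""
    region = overlap(lower, upper)
    return 0.0 if region is None else region.area / lower.area


# Hypothetical example: a tablet slid partway over a laptop.
laptop = Rect(0, 0, 300, 200)
tablet = Rect(200, 50, 250, 180)
print(overlap(laptop, tablet))
print(covered_fraction(laptop, tablet))
```

A layout engine could use the overlap rectangle to decide which part of the lower screen is hidden and move content out of that region, which is roughly the behavior the bullets describe.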

The claim structure puts the intelligence on the first device's processor, which both produces its own display content and remotely controls what the second device shows, all driven by the live position measurement. The two systems talk over a wireless link, so no cable is required. Wi-Fi or Bluetooth are the likely candidates, and the link would need low latency for the combined display to feel responsive.
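
The coordinating role could look something like the following Python sketch, in which the first device turns a position measurement into a display instruction and serializes it for the wireless link. Everything here is hypothetical: the patent does not publish a message format, and `PositionSample`, `DisplayInstruction`, `build_instruction`, `encode`, and the JSON encoding are invented for illustration.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class PositionSample:
    """Hypothetical sensor reading: second device relative to the first."""
    offset_x_mm: float
    offset_y_mm: float
    angle_deg: float


@dataclass(frozen=True)
class DisplayInstruction:
    """Hypothetical instruction telling the second device what to render."""
    canvas_origin_x: int   # where its screen starts on the shared canvas (px)
    canvas_origin_y: int
    rotation_deg: float    # rotation to apply so content lines up


def build_instruction(sample: PositionSample, pixels_per_mm: float) -> DisplayInstruction:
    """Convert a physical offset into shared-canvas pixel coordinates."""
    return DisplayInstruction(
        canvas_origin_x=round(sample.offset_x_mm * pixels_per_mm),
        canvas_origin_y=round(sample.offset_y_mm * pixels_per_mm),
        rotation_deg=sample.angle_deg,
    )


def encode(instruction: DisplayInstruction) -> bytes:
    """Serialize the instruction for transport (JSON is an assumed choice)."""
    return json.dumps(asdict(instruction), sort_keys=True).encode("utf-8")


# Hypothetical example: the second device sits 120 mm right and 15.5 mm above.
sample = PositionSample(offset_x_mm=120.0, offset_y_mm=-15.5, angle_deg=2.0)
payload = encode(build_instruction(sample, pixels_per_mm=4.0))
print(payload.decode("utf-8"))
```

Keeping the conversion on the first device matches the claim structure described above: the second device only needs to apply the instruction it receives, not interpret raw sensor data.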

What this means for iPad, Mac, and multi-device setups

Apple already ships a feature called Sidecar that lets an iPad act as a second display for a Mac, but it doesn't know or care how the two devices are physically positioned relative to each other. This patent adds a spatial awareness layer — the system would react to how you've physically arranged the devices, not just that you've connected them.

For you as a user, that could mean content automatically flowing to fill the combined screen real estate, or the two displays behaving as one continuous surface when you physically slide them together. The inclusion of John Ternus, Apple's SVP of Hardware Engineering, among the inventors suggests this isn't a fringe research filing, though an inventor credit alone doesn't guarantee the idea will ship.

The missing dimension this patent adds to Apple's multi-device ecosystem is physical spatial awareness. Sidecar and Universal Control are useful but static: they don't adapt to how you've positioned your hardware. A system that senses overlap and adjusts content layout accordingly would make multi-device setups feel a lot more natural, which makes this filing worth watching.

Editorial commentary on a publicly published patent application. Not legal advice.