Apple Patents a Smarter AR Plane Detection System Using Semantic ML

Getting AR objects to stick convincingly to real-world surfaces is harder than it looks — and Apple's latest patent targets one of the trickiest parts: figuring out where a floor ends and a wall begins.

What Apple's semantic plane-estimation system actually does

Imagine you're using an AR app to place a virtual lamp next to your couch. For the lamp to look real, your phone needs to understand the geometry of the room — not just that there's a flat surface, but which flat surface it is, how far it extends, and where it transitions into a wall.

Apple's patent describes a system that uses machine learning to analyze camera images and label what it sees (floor, table, wall, ceiling) while also estimating which direction each surface is facing. By combining those two pieces of information, it can group surface patches into coherent planes and extend them intelligently, even into areas the camera hasn't directly observed.

The patent also describes a specific trick: using the edges of detected horizontal planes (like a floor) as a cue for finding nearby vertical planes (like a wall). That means your AR app gets a more complete, room-scale understanding of the space around you — without requiring you to wave your phone at every corner.

How semantic labels and surface normals extend 3D planes

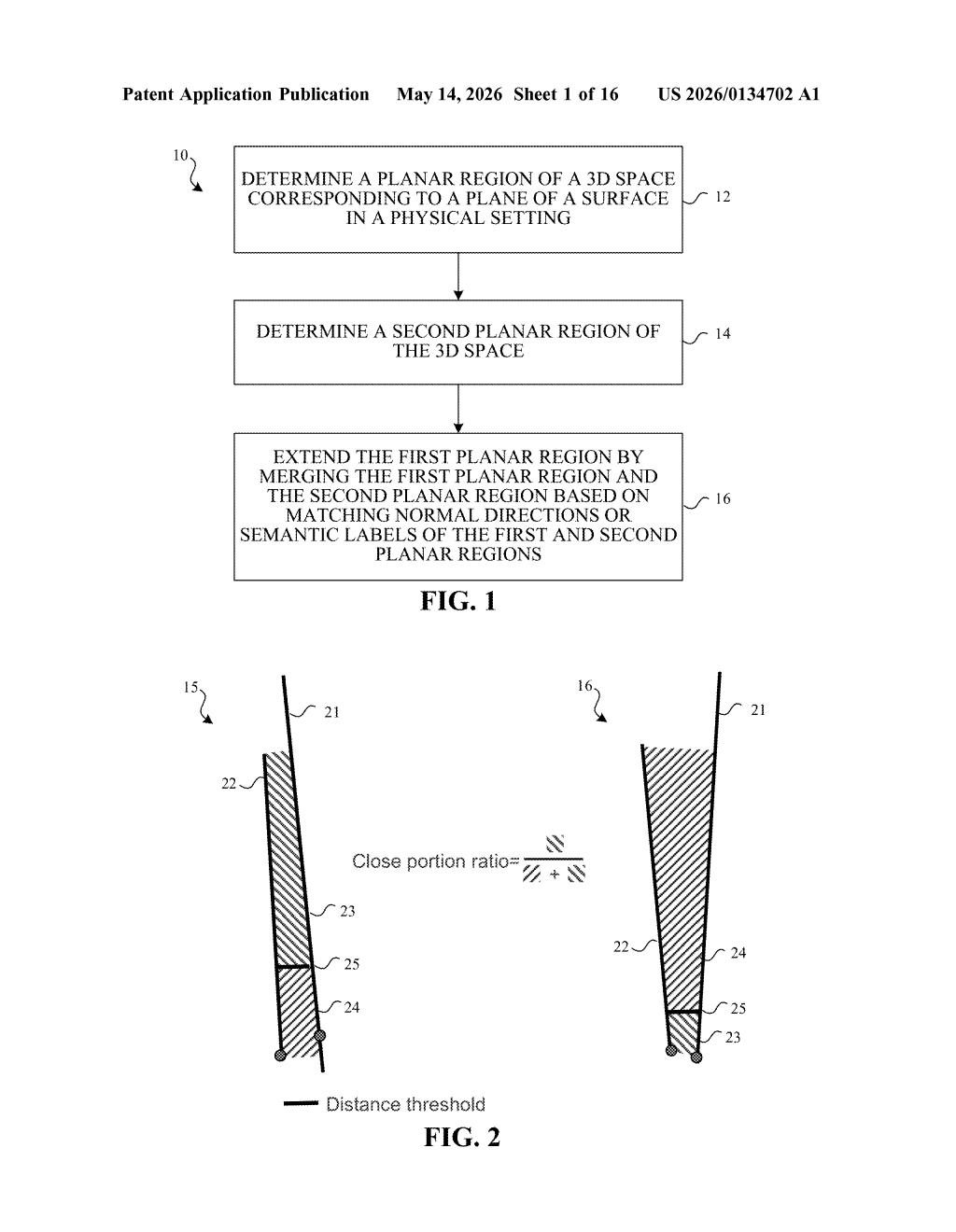

The system works in two broad phases: surface segmentation and plane extension.

In the first phase, a machine learning model processes camera images to produce two outputs simultaneously: a semantic segmentation map (a pixel-by-pixel labeling of what's in the scene — floor, wall, table, etc.) and a surface normal map (a per-pixel estimate of which direction each surface is facing in 3D space). Combining these two outputs lets the system identify surface segments — coherent patches that share both a semantic label and a similar orientation.
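
To make the "shared label plus similar orientation" idea concrete, here is a minimal sketch in plain Python. It is not the patent's method: it assumes the model's two outputs arrive as small 2D grids (one of label strings, one of unit normal vectors), and it uses a simple flood fill with a hypothetical 15-degree angle threshold to grow each segment.

```python
import math
from collections import deque


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def segment_surfaces(labels, normals, max_angle_deg=15.0):
    """Group 4-connected pixels that share a label and a similar unit normal.

    `labels` and `normals` are hypothetical stand-ins for the semantic map
    and the surface normal map; normals are assumed to be unit length.
    """
    height, width = len(labels), len(labels[0])
    cos_min = math.cos(math.radians(max_angle_deg))
    assigned = [[False] * width for _ in range(height)]
    segments = []
    for r in range(height):
        for c in range(width):
            if assigned[r][c]:
                continue
            assigned[r][c] = True
            pixels, queue = [], deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if not (0 <= ny < height and 0 <= nx < width) or assigned[ny][nx]:
                        continue
                    # A neighbor joins only if BOTH cues agree.
                    same_label = labels[ny][nx] == labels[y][x]
                    similar = dot(normals[ny][nx], normals[y][x]) >= cos_min
                    if same_label and similar:
                        assigned[ny][nx] = True
                        queue.append((ny, nx))
            segments.append({"label": labels[r][c], "pixels": pixels})
    return segments
```

Requiring both cues is the point: two pixels labeled "table" that face different directions (a tabletop and the table's side) end up in different segments, and so do a floor and a rug-free patch of wall that happen to sit next to each other.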

In the second phase, those surface segments are matched against initial planar regions already detected in 3D space (think: the rough planes ARKit or a similar system has already found). When a segment matches a known plane closely enough, it's merged in, extending the plane's footprint into areas that might have been previously undetected or partially occluded.
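
One way to picture "matches closely enough" is a three-part test: same label, similar orientation, and small distance to the plane. The sketch below is an illustration under assumptions, not the claimed procedure. The dictionary layout, the `matches` and `extend_planes` names, and the thresholds (10 degrees, 5 cm) are all hypothetical; a plane is represented as a unit normal `n` and an offset `d` such that points on it satisfy n·p = d.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def matches(plane, segment, max_angle_deg=10.0, max_dist=0.05):
    """Return True if a segment plausibly belongs to an existing plane."""
    if not segment["points"] or plane["label"] != segment["label"]:
        return False
    # Orientation check: unit normals must point in nearly the same direction.
    if dot(plane["normal"], segment["normal"]) < math.cos(math.radians(max_angle_deg)):
        return False
    # Distance check: the segment's 3D points must lie close to the plane.
    dists = [abs(dot(plane["normal"], p) - plane["offset"]) for p in segment["points"]]
    return sum(dists) / len(dists) <= max_dist


def extend_planes(planes, segments):
    """Merge each matching segment's points into the first plane it matches."""
    for seg in segments:
        for plane in planes:
            if matches(plane, seg):
                plane["points"].extend(seg["points"])
                break
    return planes
```

With a floor plane at height 0, a "floor" segment lying a centimeter off the plane would be merged, while a "floor"-labeled patch half a meter up, or a "table" patch at floor height, would be left out.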

The independent claim highlights a particularly clever sub-technique:

- Detect the extent (boundary) of a known horizontal plane (e.g., a floor)

- Use semantic segmentation to find vertical segments in the same image

- Find where the horizontal plane's edge meets those vertical segments

- Use that boundary to select and construct a vertical plane (e.g., a wall)
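
The four steps above can be sketched roughly as follows. This is a simplified illustration, not Apple's implementation: `contact_pixels` stands in for steps 2 and 3 by finding floor pixels that touch wall pixels in a label grid, and `vertical_plane_from_edge` stands in for step 4 by building a vertical plane through a floor edge. Both function names, the "floor"/"wall" label strings, and the y-up convention are assumptions, and the edge endpoints are assumed to have already been lifted into 3D.

```python
import math


def contact_pixels(labels, horizontal="floor", vertical=("wall",)):
    """Floor pixels that are 4-adjacent to a vertical-surface pixel."""
    height, width = len(labels), len(labels[0])
    hits = []
    for y in range(height):
        for x in range(width):
            if labels[y][x] != horizontal:
                continue
            for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                if 0 <= ny < height and 0 <= nx < width and labels[ny][nx] in vertical:
                    hits.append((y, x))
                    break
    return hits


def vertical_plane_from_edge(p0, p1, interior_point, up=(0.0, 1.0, 0.0)):
    """Build a vertical plane (unit normal, offset) through a floor edge.

    The normal is horizontal, perpendicular to the edge, and flipped so it
    points toward `interior_point` (for example, the floor's centroid).
    """
    edge = tuple(b - a for a, b in zip(p0, p1))
    # Cross product of up and edge: horizontal and perpendicular to the edge.
    n = (up[1] * edge[2] - up[2] * edge[1],
         up[2] * edge[0] - up[0] * edge[2],
         up[0] * edge[1] - up[1] * edge[0])
    length = math.sqrt(sum(v * v for v in n))
    if length == 0.0:
        raise ValueError("edge must have a nonzero horizontal extent")
    n = tuple(v / length for v in n)
    to_interior = tuple(b - a for a, b in zip(p0, interior_point))
    if sum(a * b for a, b in zip(n, to_interior)) < 0:
        n = tuple(-v for v in n)
    offset = sum(a * b for a, b in zip(n, p0))
    return n, offset
```

For a floor edge running along the x axis at z = 2, with the floor's centroid at z = 1, the resulting plane is z = 2 with its normal facing back into the room, which is where a wall would be expected.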

The system also includes a stability-based filtering step — only extending planes when the evidence is reliable enough — which helps avoid jittery or spurious geometry in the final AR scene.
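
The article's source doesn't spell out how stability is measured, so the following is only one plausible reading: treat a plane extension as reliable when it has been supported in most of the last few frames. The `StabilityFilter` class, its sliding window, and the default thresholds are all assumptions made for illustration.

```python
from collections import defaultdict, deque


class StabilityFilter:
    """Allow an extension only if it was supported in enough recent frames."""

    def __init__(self, window=5, min_hits=4):
        self.min_hits = min_hits
        # One fixed-length history of match results per plane id.
        self.history = defaultdict(lambda: deque(maxlen=window))

    def update(self, plane_id, matched):
        """Record this frame's result and return True if the plane is stable."""
        frames = self.history[plane_id]
        frames.append(bool(matched))
        return sum(frames) >= self.min_hits
```

A filter like this trades a few frames of latency for fewer planes that pop in and out, which is the kind of jitter the filtering step is meant to suppress.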

What this means for AR surface tracking on Apple devices

Accurate, stable plane detection is the unglamorous backbone of almost every AR experience. If your phone thinks a wall starts six inches too far to the left, virtual furniture looks wrong, shadows fall incorrectly, and object occlusion breaks down. Apple's Vision Pro, iPhone AR apps, and any future headset all depend on getting this geometry right, and this patent suggests Apple is pushing that capability to be more semantically aware — not just geometrically aware.

For developers building RealityKit or ARKit experiences, a more robust plane-detection backend means less manual scene-setup work and fewer edge cases where planes fail to appear or snap to the wrong surface. For you as a user, it's the difference between an AR app that feels like a magic trick and one that feels like a glitchy tech demo.

This is solid infrastructure work, exactly the kind of patent that never gets a press release but quietly makes every AR demo look better. The semantic-plus-normals combination isn't a brand-new idea in computer vision research, but a patent application covering a specific pipeline for consumer hardware suggests Apple may be working toward a shipping implementation. Worth paying attention to if you follow ARKit or Vision Pro development.

Editorial commentary on a publicly published patent application. Not legal advice.