Google Patents a Smarter Warp Motion Signaling System for Video Compression

Google is filing patents around a codec trick called 'Warp Delta Mode' — a way to compress video more efficiently by reusing and refining complex motion models across frames instead of re-encoding them from scratch every time.

How Google's warp delta mode shrinks video streams

Imagine you're watching a video of a drone slowly panning over a city. The camera's motion follows a consistent, sweeping arc — the same basic movement carries over from one frame to the next. A smart video encoder should be able to say 'this frame moves almost exactly like the last one, with just a small tweak' rather than fully describing the motion again.

That's the core idea here. Google's patent describes a system where the encoder builds a warp reference list — essentially a short menu of previously used motion models — and lets each new block of video just point to the closest match on that list, then transmit only the small difference (the 'delta') needed to fine-tune it. Your video decoder reconstructs the full motion by combining the reference model with that tiny correction.

The practical payoff is fewer bits spent describing how things move, which means better quality video at the same file size — or the same quality at a smaller file size. It's the kind of incremental-but-meaningful improvement that defines modern codec work.

How the warp reference list and delta encoding interact

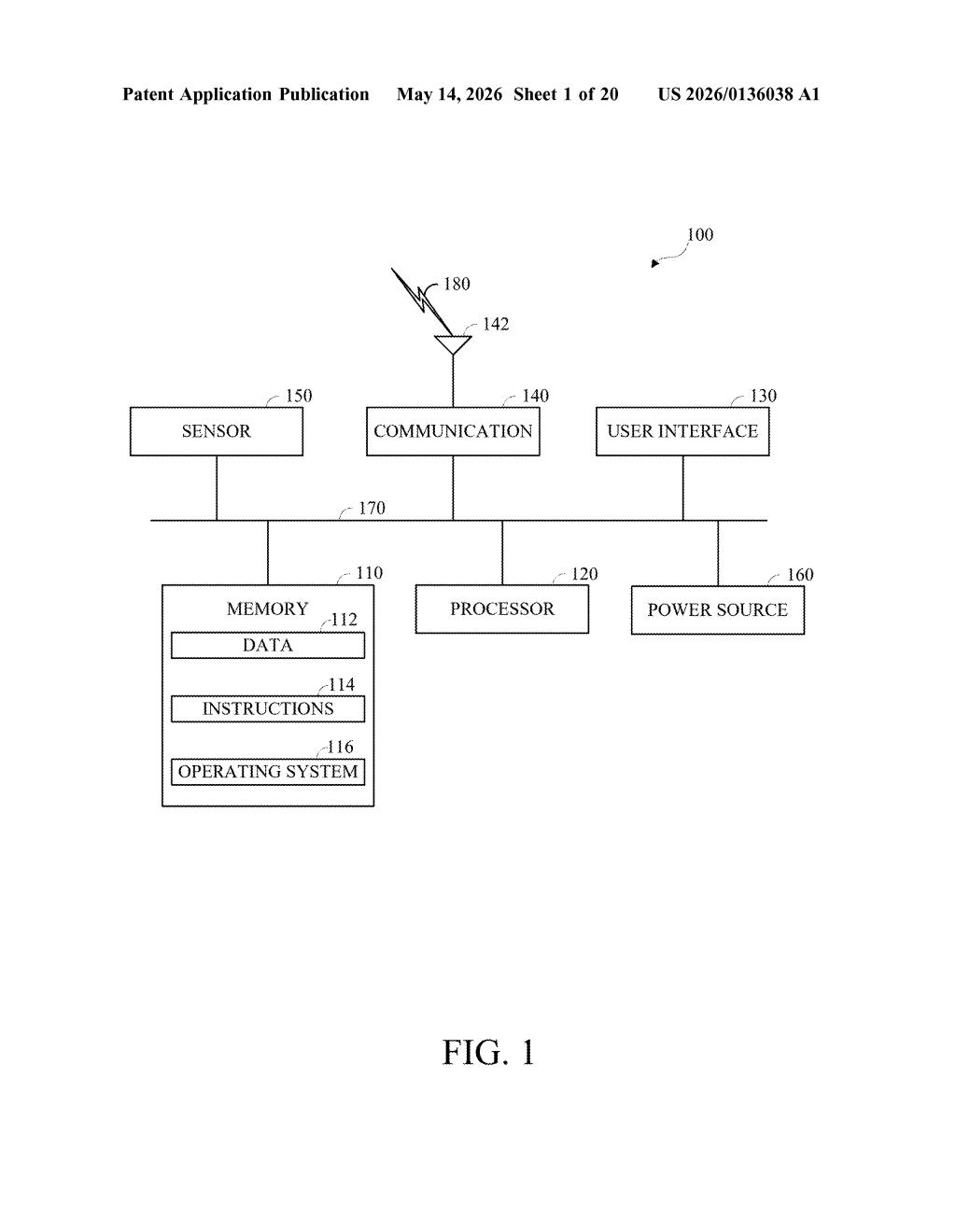

The patent describes a video decoding method that uses what Google calls 'Warp Delta Mode' to reconstruct video frames more efficiently.

Here's the core pipeline the decoder follows:

- It builds a warp reference list (WRL) — a catalog of candidate warp motion model parameters (mathematical descriptions of how a region of pixels is moving, including rotation, zoom, and shear, not just simple up/down/left/right translation).

- It reads a warp reference list index (WRI) from the compressed bitstream — a compact pointer that says 'use entry N from the reference list as your starting point.'

- It reads small differential warp parameters (the 'delta') also stored in the bitstream, then adds them to the referenced model to get the final, precise motion description for the current block.

- It uses that motion description to generate a predicted block, combines it with the separately decoded residual data (the leftover pixel error), and produces the reconstructed block.
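The four steps above can be sketched in a few lines of Python. This is a simplified illustration, not the patent's method: the `WarpModel` layout, the function names, the floating-point arithmetic, and the nearest-neighbour sampling are all assumptions made for readability. Real codecs use fixed-point parameters and sub-pixel interpolation filters.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class WarpModel:
    """Six-parameter affine model (hypothetical layout):
    x' = a*x + b*y + tx,  y' = c*x + d*y + ty
    """
    a: float
    b: float
    tx: float
    c: float
    d: float
    ty: float


def apply_delta(ref: WarpModel, delta: Sequence[float]) -> WarpModel:
    """Add the signalled differential parameters to the referenced model."""
    da, db, dtx, dc, dd, dty = delta
    return WarpModel(ref.a + da, ref.b + db, ref.tx + dtx,
                     ref.c + dc, ref.d + dd, ref.ty + dty)


def predict_block(ref_frame: List[List[int]], model: WarpModel,
                  x0: int, y0: int, size: int) -> List[List[int]]:
    """Fetch each predicted pixel from the warped position in the reference frame."""
    height, width = len(ref_frame), len(ref_frame[0])
    block = []
    for y in range(y0, y0 + size):
        row = []
        for x in range(x0, x0 + size):
            # Nearest-neighbour sampling, clamped to the frame (a simplification).
            sx = int(round(model.a * x + model.b * y + model.tx))
            sy = int(round(model.c * x + model.d * y + model.ty))
            sx = min(max(sx, 0), width - 1)
            sy = min(max(sy, 0), height - 1)
            row.append(ref_frame[sy][sx])
        block.append(row)
    return block


def decode_block(wrl: List[WarpModel], wri: int, delta: Sequence[float],
                 ref_frame: List[List[int]], residual: List[List[int]],
                 x0: int, y0: int) -> List[List[int]]:
    """Look up WRL[WRI], refine it with the delta, predict, then add the residual."""
    model = apply_delta(wrl[wri], delta)
    pred = predict_block(ref_frame, model, x0, y0, len(residual))
    # Clip to the 8-bit sample range after adding the residual.
    return [[min(max(p + r, 0), 255) for p, r in zip(prow, rrow)]
            for prow, rrow in zip(pred, residual)]


# Toy input: a 4x4 reference frame and a one-entry list holding the identity model.
ref_frame = [[10 * r + c for c in range(4)] for r in range(4)]
wrl = [WarpModel(1, 0, 0, 0, 1, 0)]
# The delta shifts the model one pixel to the right (tx goes from 0 to 1).
recon = decode_block(wrl, 0, (0, 0, 1, 0, 0, 0), ref_frame, [[1, -1], [0, 0]], 0, 0)
```

The point of the sketch is the data flow: only the index and the six small delta values travel in the bitstream, while the full model lives in a list that the decoder rebuilds on its own side.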

The key insight is that warp motion models are expensive to signal in full: an affine model carries six parameters, where a plain translational motion vector carries two. By maintaining a shared list and transmitting only corrections, the encoder spends far fewer bits per block. This is analogous to motion vector prediction in H.264/AVC, HEVC and AV1, where a motion vector is coded as a predictor plus a small difference, but applied to the higher-order, affine-style motion models that modern codecs increasingly rely on.
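To make the bit savings concrete, here is a rough encoder-side sketch that picks the list entry with the cheapest delta. Everything in it is an assumption for illustration: the parameter values are made up, parameters are treated as fixed-point integers with 65536 standing for 1.0, and costs are estimated with zeroth-order Exp-Golomb code lengths. The patent does not say which entropy code is used, and real codecs typically use adaptive arithmetic coding instead.

```python
from typing import List, Sequence, Tuple


def unsigned_exp_golomb_bits(code_num: int) -> int:
    """Bit length of a zeroth-order Exp-Golomb code for a non-negative integer."""
    return 2 * (code_num + 1).bit_length() - 1


def signed_exp_golomb_bits(value: int) -> int:
    """Bit length of the signed variant (0, 1, -1, 2, -2, ... map to 0, 1, 2, 3, 4, ...)."""
    mapped = 2 * value - 1 if value > 0 else -2 * value
    return unsigned_exp_golomb_bits(mapped)


def cost_bits(params: Sequence[int]) -> int:
    """Estimated cost of sending every parameter as a signed Exp-Golomb code."""
    return sum(signed_exp_golomb_bits(p) for p in params)


def choose_reference(target: Sequence[int],
                     wrl: List[Sequence[int]]) -> Tuple[int, Tuple[int, ...], int]:
    """Return (index, delta, estimated bits) for the cheapest entry in the list."""
    best = None
    for index, ref in enumerate(wrl):
        delta = tuple(t - r for t, r in zip(target, ref))
        bits = unsigned_exp_golomb_bits(index) + cost_bits(delta)
        if best is None or bits < best[2]:
            best = (index, delta, bits)
    return best


# Hypothetical values: the model the encoder wants, and two earlier models in the list.
target = (65540, 12, -300, -9, 65530, 128)
wrl = [
    (65536, 0, 0, 0, 65536, 0),          # identity model
    (65538, 10, -296, -8, 65531, 130),   # a model used by an earlier block
]
index, delta, delta_bits = choose_reference(target, wrl)
full_bits = cost_bits(target)
```

With these made-up numbers the second entry wins, because every component of its delta is a single-digit value. Comparing `delta_bits` with `full_bits` shows the gap between refining a nearby model and sending all six parameters outright.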

What this means for next-gen video codec efficiency

Modern video codecs like AV1 (which Google helped design) already support affine and warp-based motion models, but signaling those models cheaply is still an active area of work. This patent targets exactly that gap: reducing the overhead of transmitting complex motion descriptors, which matters more as codecs push toward higher resolutions and content with lots of camera motion.

Google is a founding member of the Alliance for Open Media, the consortium that develops AV1, and it runs one of the world's largest video delivery operations in YouTube. A technique like this fits naturally into the next-generation codec work that will eventually follow AV1 (likely AV2), where squeezing out every bit of compression efficiency matters enormously at YouTube's scale.

This is solidly useful, unglamorous codec engineering. Warp delta mode is a logical extension of established prediction techniques, and it is the kind of incremental gain that separates a good codec from a great one at scale. It's not a headline idea, but if this lands in AV2 it could quietly save enormous amounts of bandwidth across billions of video streams.

Editorial commentary on a publicly published patent application. Not legal advice.