Nvidia Patents a Stochastic Texture Filtering System for Faster, Sharper GPU Rendering

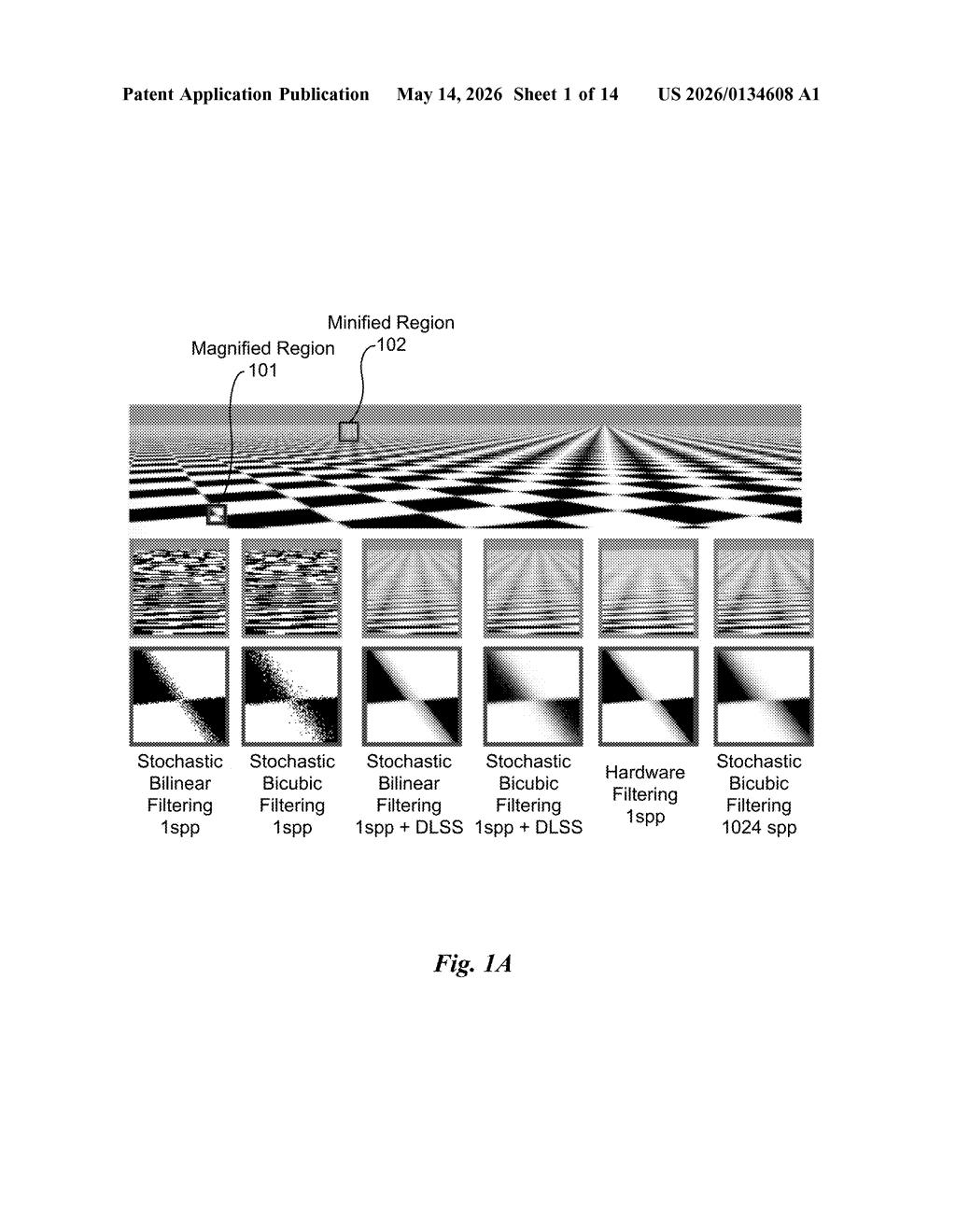

Nvidia wants to replace one of the oldest tricks in GPU texture rendering — weighted color blending — with something more elegant: deliberate randomness. The surprising part is that it's faster and looks better.

How Nvidia uses randomness to sharpen game textures

Imagine you're looking at a brick wall in a video game. The GPU has to figure out what color each pixel on your screen should be by sampling a texture map — basically a stored image that gets stretched and wrapped around 3D geometry. Normally it grabs several nearby color samples and blends them together with a weighted average. That's called filtering, and it's been done roughly the same way for decades.

Nvidia's patent proposes replacing that blending step with a clever use of randomness. Instead of averaging multiple texel samples (the individual color dots in a texture), the GPU picks just one sample per pixel per frame — but it picks that sample using a probability distribution tuned to mimic what the full blend would have looked like.

The trick is that if you're rendering many frames per second and the random picks are well-calibrated, the average result over time converges to the correct filtered image. Any single frame is noisier than a fully filtered one, but pair the technique with a temporal reconstruction pass like DLSS and the GPU does far less texture work per frame, ideally without a visible loss in quality.

How stochastic sampling replaces traditional texel blending

The patent describes two distinct techniques depending on the type of filter being used.

For discrete filters (like bicubic filtering, which samples a 4×4 grid of nearby texels and weights them by distance), the system normally computes a weighted average across multiple texels. Instead, Nvidia's approach assigns each texel a probability proportional to its weight, then randomly selects just one texel using those probabilities. The expected value of that random pick equals the full weighted average, so accumulating picks over many frames converges to the same filtered result with a fraction of the texel reads per frame.
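To make the discrete case concrete, here is a minimal Python sketch, not the patent's implementation. It works in one dimension with four texels instead of a 4×4 grid, and it assumes cubic B-spline weights because they are never negative, which is what lets them double as probabilities. The function names and texel values are made up for illustration.

```python
import random

def cubic_bspline_weights(t):
    """Weights for the 4 texels around a sample point.

    t in [0, 1) is the fractional offset between the two middle texels.
    The four weights are non-negative and sum to 1.
    """
    return [
        (1 - t) ** 3 / 6,
        (3 * t**3 - 6 * t**2 + 4) / 6,
        (-3 * t**3 + 3 * t**2 + 3 * t + 1) / 6,
        t**3 / 6,
    ]

def filtered(texels, weights):
    """Traditional filtering: read every texel and blend."""
    return sum(w * c for w, c in zip(weights, texels))

def stochastic_fetch(texels, weights, rng):
    """Stochastic filtering: read ONE texel, chosen with probability
    proportional to its filter weight."""
    return rng.choices(texels, weights=weights, k=1)[0]

rng = random.Random(0)                  # fixed seed so runs are repeatable
texels = [0.2, 0.9, 0.4, 0.1]           # hypothetical grayscale texel values
weights = cubic_bspline_weights(0.3)

reference = filtered(texels, weights)   # 4 reads per pixel
picks = 20000                           # stands in for many frames
estimate = sum(stochastic_fetch(texels, weights, rng) for _ in range(picks)) / picks

print(f"full blend: {reference:.3f}, mean of single picks: {estimate:.3f}")
```

Each call to `stochastic_fetch` touches one texel instead of four, and the mean of the picks drifts toward the full blend as the count grows. Filters with negative weights (some sharper bicubic variants have them) would need extra handling that this sketch leaves out.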

For continuous filters (where there's no fixed grid of samples), the system skips weight computation entirely. Instead, it perturbs (shifts by a tiny random amount) the texture coordinates themselves, drawing that shift from a filter-specific probability distribution (a statistical curve that encodes where the filter would normally sample most heavily). A single texel at the new coordinates is then fetched.
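The continuous case can be sketched the same way. The example below is an illustration under stated assumptions rather than anything taken from the patent text: it uses a one-dimensional texture, picks a Gaussian as the filter, and treats texel `i` as covering the interval from `i` to `i + 1`. The helper names and the `sigma` value are hypothetical.

```python
import math
import random

def nearest_fetch(texture, x):
    """Read the single texel that contains coordinate x (clamped at the edges)."""
    i = min(max(int(math.floor(x)), 0), len(texture) - 1)
    return texture[i]

def perturbed_fetch(texture, x, sigma, rng):
    """Shift the coordinate by a random offset drawn from the filter's
    distribution (a Gaussian here), then do one unfiltered read."""
    return nearest_fetch(texture, x + rng.gauss(0.0, sigma))

rng = random.Random(1)                   # fixed seed so runs are repeatable
texture = [0.0] * 4 + [1.0] * 4          # a hard black-to-white edge at x = 4.0
frames = 20000

# Sample exactly on the edge: half the jittered reads land on each side,
# so the long-run average settles near mid-gray.
mean = sum(perturbed_fetch(texture, 4.0, 0.75, rng) for _ in range(frames)) / frames
print(f"average at the edge over {frames} frames: {mean:.3f}")
```

No filter weights are computed anywhere. The shape of the filter lives entirely in the distribution the offsets are drawn from, which is the point of the perturbation approach.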

- Stochastic sampling — picking one sample randomly, weighted by importance

- Coordinate perturbation — jittering UV coordinates instead of blending multiple lookups

- Temporal integration — spreading the randomness across frames so the result averages out correctly over time

The patent explicitly notes compatibility with image reconstruction systems like DLSS (Deep Learning Super Sampling), which already operate on temporally accumulated frames — making this a natural fit.
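To see why temporal accumulation matters, the sketch below feeds one noisy single-texel pick per frame into a running history. It is a deliberate simplification: DLSS is a learned reconstruction and does not work this way internally, so the exponential moving average, the `alpha` blend factor, and the texel values here are all assumptions chosen only to show noise averaging out across frames.

```python
import random

def accumulate(history, sample, alpha):
    """Blend this frame's noisy sample into the running history."""
    return (1.0 - alpha) * history + alpha * sample

rng = random.Random(2)                   # fixed seed so runs are repeatable
texels = [0.2, 0.9, 0.4, 0.1]            # hypothetical texel values
weights = [0.1, 0.4, 0.4, 0.1]           # hypothetical filter weights (sum to 1)

# What a traditional filter would output for this pixel.
target = sum(w * c for w, c in zip(weights, texels))

# Frame 0 starts the history with a single stochastic pick.
history = rng.choices(texels, weights=weights, k=1)[0]
for _ in range(2000):
    sample = rng.choices(texels, weights=weights, k=1)[0]   # one texel read
    history = accumulate(history, sample, alpha=0.01)

print(f"accumulated: {history:.3f}, full blend: {target:.3f}")
```

A smaller `alpha` gives a smoother result but reacts more slowly when the scene changes, which is the usual trade-off in any temporal technique.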

What this means for real-time rendering and DLSS

Texture filtering is one of the most frequent operations a GPU performs: it happens for virtually every textured pixel of every rendered frame. Shaving even a small amount of work off that path can compound into meaningful frame-rate headroom, especially at high resolutions. By reducing multi-sample texture fetches to a single stochastic fetch, Nvidia could free up memory bandwidth and shader cycles to reinvest in ray tracing, AI inference, or higher resolutions.

This also fits neatly into Nvidia's broader strategy of leaning on temporal reconstruction — DLSS, DLAA, and similar techniques — to let the GPU do less per-frame work while maintaining or improving perceived quality. If stochastic texture filtering ships in a future driver or hardware feature, you might get better-looking textures at the same frame rate, or the same quality at higher frame rates, without any change to the game itself.

This is a genuinely clever piece of rendering research dressed up as a patent. The core insight, that a well-designed random process can replace an expensive deterministic average when frames are temporally integrated, is elegant and has a clear implementation path in Nvidia's existing DLSS ecosystem. It's not flashy on the surface, but it's exactly the kind of low-level GPU efficiency work that can show up in a driver update and quietly make games look better.

Editorial commentary on a publicly published patent application. Not legal advice.