IBM Patents a Version-Control System for Security Configs in Container Platforms

Managing security configurations across hundreds of containers is a known headache in modern cloud infrastructure. IBM's new patent tries to bring version control — think Git, but for security policies — directly into the orchestration layer.

What IBM's versioned pod security system actually does

Imagine your company runs dozens of microservices in the cloud, each running in one or more containers grouped into a "pod" (the smallest unit Kubernetes deploys). Now imagine you need to roll out a new security policy, but you can't break the services that depend on the old one. That's a real, painful problem for platform engineers today.

IBM's patent describes a system where multiple versions of security information can coexist inside a container orchestration platform (like Kubernetes). Instead of every pod getting the same security config, a set of rules, called destination rules, decides which version each pod gets when it's deployed.

This means you could run version 1 of a security policy for stable production services while quietly rolling out version 2 to a test group, all without touching the pods themselves. It's a cleaner, more controlled way to manage security upgrades at scale.

How destination rules route security versions to pods

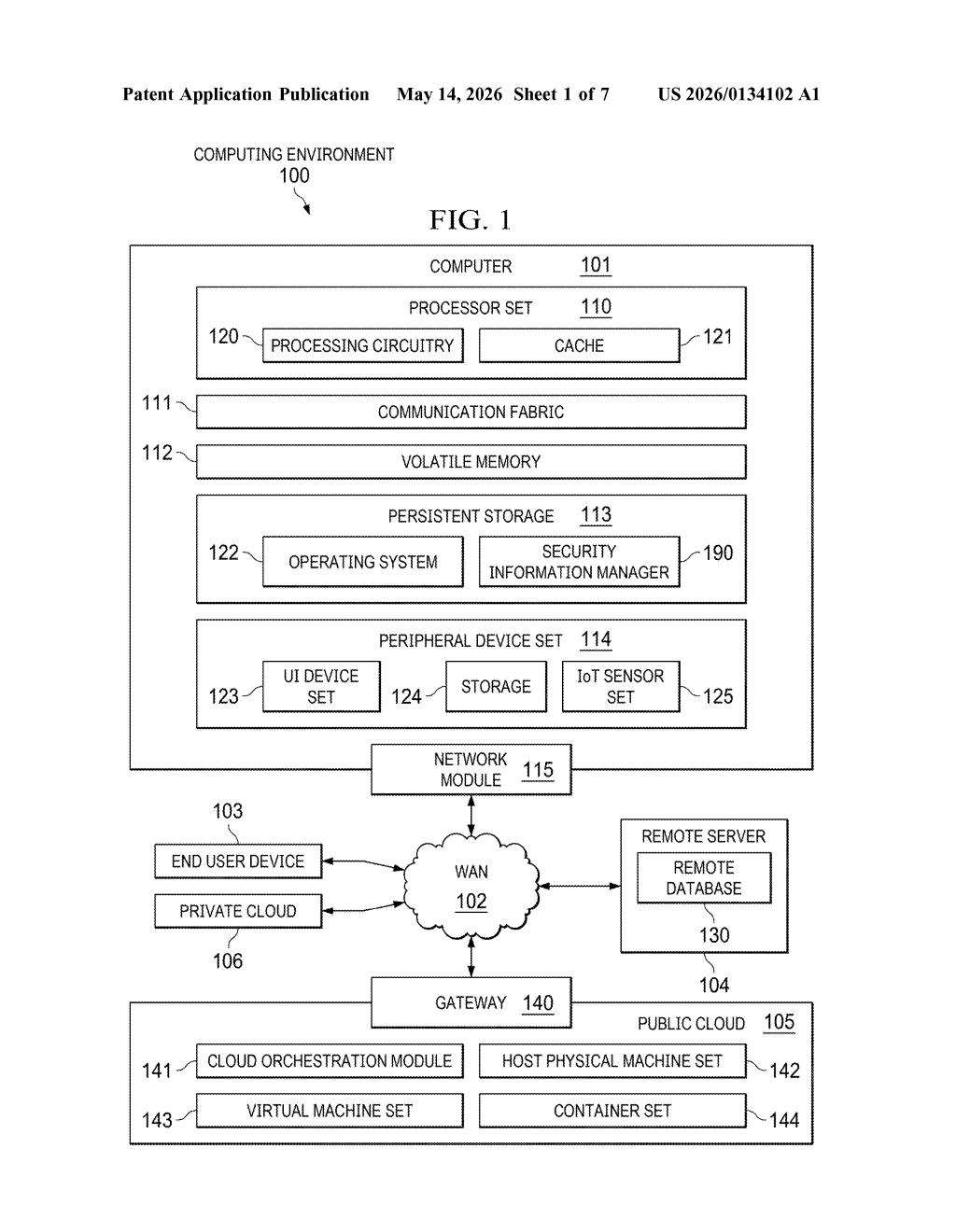

The patent describes a computer-implemented method that sits inside an orchestration platform (the kind of system, like Kubernetes, that manages containerized applications at scale).

Here's the flow:

- A processor set receives a request to register a new version of security information — things like certificates, access policies, or mTLS (mutual TLS, a protocol where both sides of a connection verify each other) settings — for a group of pods.

- That version gets stored in a metadata database alongside other existing versions, building up a versioned library of security configurations.

- A destination rule — essentially a routing instruction that says "pod type X gets security version Y" — is also stored in the same database.

- When a pod is actually deployed, it consults the destination rules and pulls the appropriate security version automatically.

The key architectural idea is separating the security configuration data from the routing logic that decides who gets which version. This is similar to how feature flags work in software releases — you can ship new behavior to select consumers without forcing everyone to upgrade at once.
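The flow above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the patent's implementation: the class names, the glob-style pod matching, the first-match-wins ordering, and the field values (`certs-2023`, `STRICT`, and so on) are all hypothetical, and an in-memory object stands in for the metadata database.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class SecurityVersion:
    """One registered version of security information (all fields illustrative)."""
    version: str
    mtls_mode: str    # e.g. "STRICT" or "PERMISSIVE"
    cert_secret: str  # hypothetical name of a certificate secret


@dataclass(frozen=True)
class DestinationRule:
    """Routing instruction: pods matching `pod_pattern` get `version`."""
    pod_pattern: str
    version: str


@dataclass
class MetadataStore:
    """In-memory stand-in for the metadata database."""
    versions: dict = field(default_factory=dict)
    rules: list = field(default_factory=list)

    def register_version(self, sec: SecurityVersion) -> None:
        # New versions are stored alongside existing ones, never overwriting them.
        if sec.version in self.versions:
            raise ValueError(f"version {sec.version!r} already registered")
        self.versions[sec.version] = sec

    def add_rule(self, rule: DestinationRule) -> None:
        # A rule may only point at a version that has been registered.
        if rule.version not in self.versions:
            raise KeyError(f"unknown security version {rule.version!r}")
        self.rules.append(rule)

    def resolve(self, pod_name: str) -> SecurityVersion:
        # First matching rule wins; this ordering is an assumption of the sketch.
        for rule in self.rules:
            if fnmatchcase(pod_name, rule.pod_pattern):
                return self.versions[rule.version]
        raise LookupError(f"no destination rule matches pod {pod_name!r}")


store = MetadataStore()
store.register_version(SecurityVersion("v1", "PERMISSIVE", "certs-2023"))
store.register_version(SecurityVersion("v2", "STRICT", "certs-2024"))
store.add_rule(DestinationRule("checkout-canary-*", "v2"))  # test group
store.add_rule(DestinationRule("*", "v1"))                  # everyone else

print(store.resolve("checkout-canary-7f9c").version)  # v2
print(store.resolve("billing-5d4b").version)          # v1
```

Note that the security data (`SecurityVersion`) and the routing logic (`DestinationRule`) live in separate records, so moving a pod group to a new version only means adding or changing a rule.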

What this means for Kubernetes security management

For platform and DevSecOps teams, security configuration drift is a persistent risk — one pod running an outdated TLS policy can become a liability. IBM's approach makes it easier to stage security rollouts, audit which pods are running which policy version, and roll back without a full redeployment.
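To make the audit and rollback idea concrete, here is a rough Python sketch. Everything in it is an assumption for illustration: the pod names, the `rules` and `deployed` dictionaries, and the `audit`, `set_version`, and `rollback` helpers are hypothetical. It also only models re-pointing a rule; whether running pods pick up the change without a restart is not something this article can confirm from the patent.

```python
from collections import defaultdict

# Hypothetical destination rules: pod group -> security policy version.
rules = {"checkout": "v2", "billing": "v1"}
rule_history = []  # (group, previous_version) pairs, newest last

# Hypothetical record of the version each running pod pulled at deploy time.
deployed = {
    "checkout-7f9c": ("checkout", "v2"),
    "checkout-a1b2": ("checkout", "v1"),  # deployed before the rule changed
    "billing-5d4b": ("billing", "v1"),
}


def audit(deployed, rules):
    """Return, per group, the pods whose running version differs from the rule."""
    drift = defaultdict(list)
    for pod, (group, running) in deployed.items():
        if running != rules[group]:
            drift[group].append(pod)
    return dict(drift)


def set_version(group, version):
    """Point a group at a new version, remembering the old one."""
    rule_history.append((group, rules[group]))
    rules[group] = version


def rollback(group):
    """Restore the most recent previous version recorded for a group."""
    for i in range(len(rule_history) - 1, -1, -1):
        recorded_group, previous = rule_history[i]
        if recorded_group == group:
            del rule_history[i]
            rules[group] = previous
            return previous
    raise LookupError(f"no earlier version recorded for {group!r}")


drift = audit(deployed, rules)
print(drift)  # {'checkout': ['checkout-a1b2']}

set_version("billing", "v2")
restored = rollback("billing")
print(restored, rules["billing"])  # v1 v1
```

Because the rule, not the pod spec, records which version applies, both the drift report and the rollback operate on the routing data alone.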

This is squarely aimed at enterprise Kubernetes environments, where IBM already has a significant footprint through Red Hat OpenShift. If this system were integrated into OpenShift or IBM Cloud, you'd get finer-grained control over security policy versioning without needing third-party tooling like Istio's destination rules bolted on as an afterthought.

This is solid, unglamorous infrastructure work: the kind of thing that doesn't make headlines but genuinely reduces operational risk in large Kubernetes deployments. The concept of routing different security policy versions to different pods using a destination-rule model is sensible and draws from patterns already familiar in service mesh tooling. It's not a paradigm shift, but it addresses a real gap, and IBM is trying to close it cleanly.

Editorial commentary on a publicly published patent application. Not legal advice.