IBM Patents a Non-Disruptive Fault Isolation System for Server I/O Hardware

When a network card or storage adapter inside a server cluster goes haywire, the usual fix is painful: bring things down, pull the card, restart. IBM's new patent application describes a way to wall off the broken component automatically and keep everything else running while you investigate.

How IBM quietly quarantines a broken I/O adapter

Imagine a busy data center where dozens of servers share a pool of network and storage adapters. One of those adapters starts misbehaving — throwing errors, corrupting traffic. Normally, fixing it means interrupting all the servers that depend on it, which is expensive and disruptive.

IBM's patent describes a smarter approach. A central coordinator — called a root complex — sits between the servers and the adapters. The moment a switch detects a problem with one adapter's port, it sends an alert. The root complex then tells all attached servers: "stop sending traffic through that adapter, now." The adapter is effectively frozen in place.

While everything is on pause, one designated server quietly inspects the adapter — checking the link, pulling diagnostic data — to figure out what went wrong. After a set waiting period, the root complex signals a reset. If the adapter keeps failing past a set threshold, the system flags it for physical replacement. The whole process happens without rebooting any of the servers.

How downstream port containment coordinates the freeze-and-reset cycle

The patent describes a protocol built around downstream port containment (DPC), a standard PCIe mechanism that lets a switch automatically halt all traffic below one of its downstream ports after an uncorrectable error by taking that port's link down. Think of it as a circuit breaker for a single slot on a PCIe switch.
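To make the circuit-breaker analogy concrete, here is a toy Python model of a downstream port with DPC-like behavior. It is a hypothetical sketch, not how the patent or any switch implements DPC: real containment happens in switch hardware and is controlled through the DPC extended capability registers. The class and method names (`DownstreamPort`, `report_uncorrectable_error`, `forward`, `release`) are invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class DownstreamPort:
    """Toy model of a PCIe switch downstream port with DPC-like containment.

    Hypothetical illustration only; real DPC lives in switch hardware.
    """
    port_id: int
    contained: bool = False
    delivered: list = field(default_factory=list)

    def report_uncorrectable_error(self) -> None:
        # Like a breaker tripping: an uncorrectable error puts the port
        # into containment, and it stops forwarding traffic.
        self.contained = True

    def forward(self, tlp: str) -> bool:
        # While contained, traffic toward the adapter is dropped rather
        # than delivered, so corrupted data cannot spread further.
        if self.contained:
            return False
        self.delivered.append(tlp)
        return True

    def release(self) -> None:
        # Recovery software clears containment once the fault is handled.
        self.contained = False


# Usage: traffic flows, the breaker trips, then it is reset.
port = DownstreamPort(port_id=3)
port.forward("write A")
port.report_uncorrectable_error()
port.forward("write B")  # dropped while contained
port.release()
port.forward("write C")
```

Only "write A" and "write C" reach the adapter; "write B" arrives during containment and is discarded.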

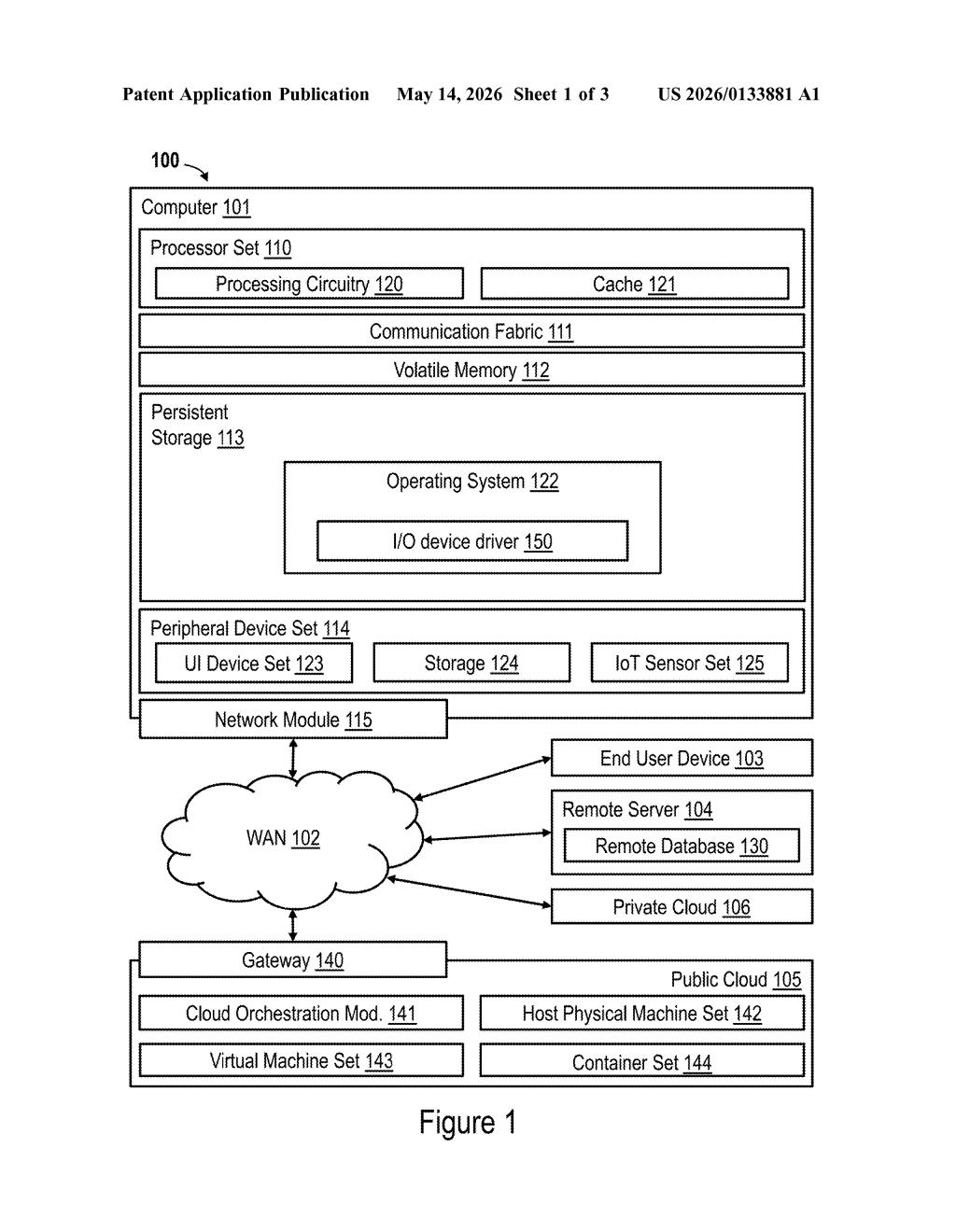

The key actor here is the root complex, the hardware and firmware at the top of the I/O hierarchy, which in this design bridges multiple server platforms and multiple I/O switches. When a switch detects a fault and triggers DPC on a port, it sends a message signaled interrupt (MSI) up to the root complex. An MSI is delivered as an in-band memory write over the PCIe link rather than on a dedicated interrupt wire, so the alert travels the same path as ordinary I/O.

The root complex then orchestrates a four-step response:

- Broadcast a containment notification to all attached server platforms so they stop queuing I/O to the affected adapter

- Allow a designated controlling server to inspect the link and collect diagnostic metadata from the adapter during the freeze window

- Hold containment for a defined interval to ensure all in-flight transactions drain

- Release containment and signal both the adapter and the servers to resume, triggering an adapter reset
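The four steps above can be sketched as a single handler on the root complex that runs when the switch's DPC interrupt arrives. This is a simplified, sequential model under stated assumptions, not the patent's implementation: the class names, the `on_dpc_msi` handler, the diagnostics format, and the `hold_seconds` interval are all hypothetical, and real hardware would handle these steps asynchronously.

```python
import time
from dataclasses import dataclass, field


@dataclass
class Server:
    name: str
    paused_adapters: set = field(default_factory=set)

    def on_containment(self, adapter_id: str) -> None:
        # Stop queuing I/O to the contained adapter.
        self.paused_adapters.add(adapter_id)

    def on_resume(self, adapter_id: str) -> None:
        self.paused_adapters.discard(adapter_id)


@dataclass
class Adapter:
    adapter_id: str
    link_up: bool = False  # DPC has already taken the link down
    resets: int = 0

    def read_diagnostics(self) -> dict:
        # Hypothetical stand-in for link checks and metadata collection.
        return {"adapter": self.adapter_id, "link_up": self.link_up}

    def reset(self) -> None:
        self.resets += 1
        self.link_up = True


class RootComplex:
    def __init__(self, servers, controller, hold_seconds=0.1):
        self.servers = servers
        self.controller = controller  # the designated controlling server
        self.hold_seconds = hold_seconds
        self.diagnostics = []

    def on_dpc_msi(self, adapter: Adapter) -> None:
        """Handle the MSI a switch sends after triggering DPC on a port."""
        # 1. Broadcast the containment notification to every server.
        for server in self.servers:
            server.on_containment(adapter.adapter_id)
        # 2. The controlling server inspects the frozen adapter.
        self.diagnostics.append((self.controller.name, adapter.read_diagnostics()))
        # 3. Hold containment so in-flight transactions can drain.
        time.sleep(self.hold_seconds)
        # 4. Release: reset the adapter, then let the servers resume.
        adapter.reset()
        for server in self.servers:
            server.on_resume(adapter.adapter_id)


# Usage: two hosts share one adapter that trips DPC once.
servers = [Server("host-a"), Server("host-b")]
rc = RootComplex(servers, controller=servers[0], hold_seconds=0.01)
nic = Adapter("nic0")
rc.on_dpc_msi(nic)
```

After the handler returns, the adapter has been reset once, both hosts have resumed, and the diagnostics captured by `host-a` show the link as down at inspection time.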

If the same adapter trips DPC more times than a configurable containment threshold, the controlling server fences the adapter entirely and marks the field-replaceable unit (FRU — the physical component) for swap-out.
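The threshold rule can be sketched as a per-adapter counter. This is a hypothetical illustration: the patent describes a configurable containment threshold, but the `ContainmentTracker` class, its method names, and the choice to keep a fenced adapter fenced permanently are assumptions made for the example.

```python
from collections import Counter


class ContainmentTracker:
    """Counts DPC trips per adapter and fences repeat offenders (hypothetical)."""

    def __init__(self, threshold: int):
        self.threshold = threshold
        self.events = Counter()
        self.fenced = set()
        self.frus_to_replace = []

    def record_containment(self, adapter_id: str) -> bool:
        """Record one DPC trip; return True if the adapter may be reset and reused."""
        if adapter_id in self.fenced:
            return False  # already fenced: never hand it back to the servers
        self.events[adapter_id] += 1
        if self.events[adapter_id] > self.threshold:
            # More trips than the threshold allows: fence the adapter and
            # flag its FRU for physical replacement.
            self.fenced.add(adapter_id)
            self.frus_to_replace.append(adapter_id)
            return False
        return True


# Usage: with a threshold of 2, the third trip fences the adapter.
tracker = ContainmentTracker(threshold=2)
results = [tracker.record_containment("hba1") for _ in range(4)]
```

Here `results` is `[True, True, False, False]`: the first two trips are recovered by reset, the third exceeds the threshold and fences `hba1`, and the fourth is refused outright. The FRU is flagged only once.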

What this means for enterprise uptime and hot-swap reliability

For large enterprise or cloud environments running IBM's Power-based infrastructure — think financial services firms, government systems, telcos — even a few seconds of unplanned downtime per adapter can cascade into SLA violations and data integrity problems. IBM's approach lets the platform absorb a hardware fault without forcing a cluster-wide restart, which is the kind of resilience that matters at scale.

The patent also bakes in root-cause analysis during the fault window, not after. That means your operations team gets diagnostics automatically collected at the moment of failure, rather than trying to reconstruct what happened from logs after the fact. It's a subtle but meaningful shift from reactive repair to structured, automated triage.

This is solidly useful infrastructure engineering — exactly the kind of unsexy reliability work that actually keeps enterprise systems running. It won't make headlines at a product launch, but the combination of automated containment, in-situ diagnostics, and threshold-based hardware flagging is a well-thought-out system. IBM's Power server and storage customers will recognize the problem immediately.

Editorial commentary on a publicly published patent application. Not legal advice.