Sony's New Patent Wants to Replace Your Controller With Your Body and Voice

Sony is exploring a way to let a machine learning model watch you, listen to you, and track your movements — then translate all of that into game inputs, no physical controller needed.

How Sony's multi-sensor AI replaces your controller

Imagine playing a game by waving your hand, saying a command, or just moving your body — and the game responds as if you'd pressed a button on a traditional controller. That's the basic idea behind this Sony patent.

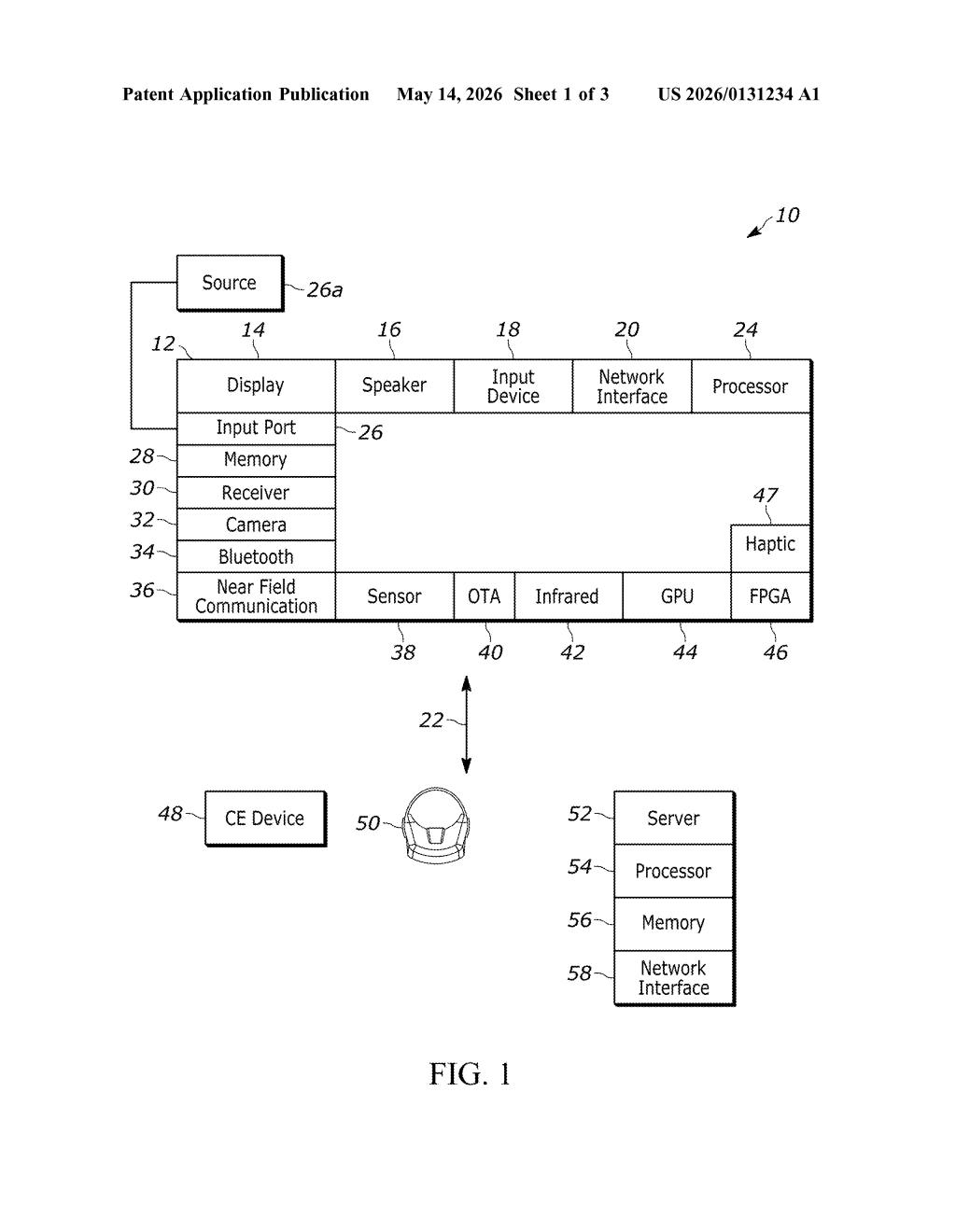

The system pulls in three types of data at once: video from a camera, motion sensor readings (think accelerometers or depth sensors), and audio. It feeds all of that into an AI model, which figures out what "player input" you intended — then passes that instruction directly into the game engine.

It's essentially a smart interpreter sitting between your body and the game. You do something physical or vocal, the AI decides what game action that maps to, and the game reacts. Sony already has a history with camera-based control through PlayStation Camera and PlayStation Move, so this feels like a natural — if significantly more AI-driven — evolution of that thinking.

How the ML model fuses image, motion, and audio inputs

At its core, the patent describes a multi-modal input pipeline — a system that doesn't rely on any single sensor but combines three streams simultaneously:

- Image data — visual frames from a camera, likely capturing player pose or gestures

- Motion data — readings from accelerometers, gyroscopes, or depth sensors tracking physical movement

- Audio data — microphone input that could capture voice commands or even ambient sound cues

All three streams are fed together into a machine learning model (the patent doesn't specify the architecture, but multi-input neural networks are the obvious candidate). The model processes the combined input and outputs a player input — meaning it produces something structurally equivalent to a button press, joystick move, or other standard game command.
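As a rough illustration of what fusing the three streams could look like, here is a minimal Python sketch. It assumes each stream has already been reduced to a short feature vector, concatenates the three vectors, and scores a small set of actions with a single linear layer. The `PlayerInput` actions, the `SensorFrame` fields, and the hand-picked `WEIGHTS` and `BIASES` are all hypothetical: the patent does not specify an architecture, and a real model would be trained rather than hand-weighted.

```python
import math
from dataclasses import dataclass
from enum import Enum


class PlayerInput(Enum):
    # Hypothetical action set; the patent does not enumerate outputs.
    NONE = 0
    JUMP = 1
    ATTACK = 2


@dataclass
class SensorFrame:
    # Each field stands in for features already extracted from one stream.
    image: list[float]   # e.g. pose features from a camera frame
    motion: list[float]  # e.g. accelerometer or gyroscope readings
    audio: list[float]   # e.g. microphone features


def softmax(scores: list[float]) -> list[float]:
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def infer_player_input(frame, weights, biases):
    # Fuse by concatenating the three modalities into one vector.
    fused = frame.image + frame.motion + frame.audio
    # One linear layer: a score per candidate action.
    scores = [
        sum(w * x for w, x in zip(row, fused)) + b
        for row, b in zip(weights, biases)
    ]
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    return list(PlayerInput)[best], probs[best]


# Toy, hand-picked parameters (assumption): one row per PlayerInput member.
WEIGHTS = [
    [0.0, 0.0, 0.0, 0.0, 0.0, 0.0],  # NONE
    [2.0, 0.0, 2.0, 0.0, 0.0, 0.0],  # JUMP: raised hand + upward motion
    [0.0, 2.0, 0.0, 0.0, 2.0, 0.0],  # ATTACK: arm swing + shout
]
BIASES = [1.0, 0.0, 0.0]  # default to NONE when nothing is happening

jump_frame = SensorFrame(image=[1.0, 0.0], motion=[1.0, 0.0], audio=[0.0, 0.0])
action, confidence = infer_player_input(jump_frame, WEIGHTS, BIASES)
print(action.name, round(confidence, 2))
```

Concatenating features before a single model is only one way to fuse modalities; the patent text summarized here does not say which approach Sony has in mind.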

That output is then handed directly to the game, which treats it like any other controller input. The game itself doesn't need to know the input came from an AI sensor fusion system rather than a DualSense controller — the interface is standardized.
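The idea that the game cannot tell where an input came from can be sketched in Python as a shared interface. Everything named here (`InputEvent`, `InputSource`, `GamepadSource`, `SensorFusionSource`, the `"cross"` button label, and the toy classifier) is a hypothetical stand-in for illustration, not an actual PlayStation API.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Optional, Protocol


@dataclass(frozen=True)
class InputEvent:
    button: str    # hypothetical label such as "cross"
    pressed: bool


class InputSource(Protocol):
    def poll(self) -> List[InputEvent]: ...


class GamepadSource:
    """Stand-in for a physical controller: replays queued button events."""

    def __init__(self, queued: Iterable[InputEvent]) -> None:
        self._queued = list(queued)

    def poll(self) -> List[InputEvent]:
        events, self._queued = self._queued, []
        return events


class SensorFusionSource:
    """Stand-in for the ML path: a classifier turns raw frames into events."""

    def __init__(
        self,
        classify: Callable[[dict], Optional[str]],
        frames: Iterable[dict],
    ) -> None:
        self._classify = classify
        self._frames = list(frames)

    def poll(self) -> List[InputEvent]:
        events = []
        for frame in self._frames:
            button = self._classify(frame)
            if button is not None:
                events.append(InputEvent(button, pressed=True))
        self._frames = []
        return events


def run_game_tick(source: InputSource, state: dict) -> dict:
    # The game only sees InputEvent objects, never the sensors behind them.
    for event in source.poll():
        if event.button == "cross" and event.pressed:
            state["jumps"] += 1
    return state


def toy_classifier(frame: dict) -> Optional[str]:
    # Hypothetical rule standing in for a trained model.
    return "cross" if frame.get("voice") == "jump" else None


pad_state = run_game_tick(
    GamepadSource([InputEvent("cross", True)]), {"jumps": 0}
)
ai_state = run_game_tick(
    SensorFusionSource(toy_classifier, [{"voice": "jump"}, {"voice": ""}]),
    {"jumps": 0},
)
print(pad_state == ai_state)
```

Because `run_game_tick` depends only on the `InputSource` protocol, swapping the controller for the sensor-driven source requires no change to game code, which is the property the paragraph above describes.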

The key idea here is dynamic encoding. The mapping from sensor data to player input is presumably learned and adaptive rather than rule-based, so the ML model can generalize across different gestures, environments, and audio conditions instead of relying on a fixed lookup table of recognized moves.
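To make the contrast with a fixed lookup table concrete, here is a small Python sketch. It compares an exact-match table against a nearest-centroid classifier, a deliberately simple learned model chosen for illustration only; the patent does not say which technique it uses. The two-number gesture features, the labels, and the training examples are toy values made up for this example.

```python
import math

# Hypothetical gesture features: (hand height, hand speed), both scaled 0..1.
LOOKUP_TABLE = {(0.9, 0.1): "jump", (0.2, 0.9): "attack"}


def lookup_input(features):
    # Rule-based: only exact, pre-registered feature values are recognized.
    return LOOKUP_TABLE.get(features)


def fit_centroids(examples):
    # Average the feature vectors of each label to get one centroid per label.
    sums = {}
    for feats, label in examples:
        total, count = sums.get(label, ((0.0,) * len(feats), 0))
        sums[label] = (tuple(t + f for t, f in zip(total, feats)), count + 1)
    return {
        label: tuple(t / count for t in total)
        for label, (total, count) in sums.items()
    }


def nearest_centroid(features, centroids):
    # Learned: pick the label whose centroid is closest to the new sample.
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))


# Toy training data (assumption): a few labeled examples per gesture.
TRAINING = [
    ((0.9, 0.1), "jump"),
    ((0.8, 0.2), "jump"),
    ((0.2, 0.9), "attack"),
    ((0.3, 0.8), "attack"),
]
centroids = fit_centroids(TRAINING)

sloppy_jump = (0.75, 0.25)  # a variation never seen during training
print(lookup_input(sloppy_jump))                 # the table has no entry for it
print(nearest_centroid(sloppy_jump, centroids))  # the model still picks a label
```

The table returns nothing for a gesture it has not seen exactly, while even this very simple model maps the variation to the nearest known action.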

What this means for controller-free PlayStation gaming

For players, the immediate implication is controller-free gaming that's smarter than prior camera gimmicks. Earlier motion-control systems (PlayStation Move, Kinect) often felt brittle in practice, because games typically matched player movement against predefined gesture libraries. An ML-based approach could theoretically handle natural, varied movements and even learn over time.

For Sony's broader strategy, this patent sits at the intersection of accessibility and next-generation input design. A system like this could let players with limited hand mobility use their voice and body more fluidly, or it could underpin future PlayStation hardware where a traditional controller is optional. It also signals that Sony is actively patenting AI-native input methods — which could become important as AR and VR headsets make handheld controllers increasingly awkward.

This is a genuinely interesting input-design patent, not a routine filing. Fusing camera, motion, and audio into a single ML inference pipeline for real-time game control is non-trivial, and Sony's track record with physical controllers and camera accessories gives this some real-world credibility. Whether it ships as a PlayStation feature or stays a research experiment depends heavily on latency: a game running at 60 frames per second has roughly 16.7 ms per frame, so sensor capture and ML inference have to fit inside a very tight budget. That is a hard engineering problem, but the concept is worth tracking.

Editorial commentary on a publicly published patent application. Not legal advice.