Intel Patents a Way to Make Your Car's CPU Work Harder When It Matters

Your car's processor doesn't need to work the same way when you're parked as when you're navigating a highway at 70 mph. Intel's new patent proposes a system that dynamically reshuffles CPU resources — cores, cache, memory bandwidth — based on what driving mode the vehicle is in and how critical each task actually is.

How Intel's vehicle processor adjusts to driving conditions

Imagine you're cruising on a highway with adaptive cruise control active. Your car's computer is simultaneously running navigation, sensor fusion, and music streaming — but not all of those tasks are equally urgent. Intel's patent describes a system that knows the difference.

The idea is straightforward: when the vehicle switches modes — say, from a parked state to active highway driving — the processor reshuffles how it divides up its resources. Safety-critical tasks get more CPU cores, more memory bandwidth, and higher operating frequency. Background tasks like infotainment get less.

This is essentially a priority scheduler that's aware of the physical state of the vehicle, not just software load. Your car's chip could automatically tighten up for a demanding merge and relax again once you're parked.
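To make the mode-switch idea concrete, here is a minimal sketch of a fixed pool of cores being re-divided when the driving mode changes. Everything in it is an illustrative assumption rather than something taken from the patent: the eight-core budget, the mode names, the per-task weights, and the `split_cores` helper are all hypothetical.

```python
TOTAL_CORES = 8  # hypothetical core budget shared by all tasks

# Hypothetical weights: how much each task matters in each driving mode.
WEIGHTS = {
    "parked": {"sensor_fusion": 1, "navigation": 1, "music_streaming": 2},
    "highway": {"sensor_fusion": 5, "navigation": 2, "music_streaming": 1},
}


def split_cores(mode, total=TOTAL_CORES):
    """Divide `total` cores across tasks in proportion to their weight in `mode`."""
    weights = WEIGHTS[mode]
    weight_sum = sum(weights.values())
    # Start with each task's proportional share, rounded down.
    shares = {task: total * w // weight_sum for task, w in weights.items()}
    # Rounding down can leave a few cores unassigned; give them to the
    # highest-weighted tasks first.
    leftover = total - sum(shares.values())
    for task in sorted(weights, key=weights.get, reverse=True)[:leftover]:
        shares[task] += 1
    return shares


for mode in ("parked", "highway"):
    print(mode, split_cores(mode))
```

With these made-up weights, sensor fusion goes from two cores while parked to five on the highway, and music streaming drops from four to one. The total stays at eight in both modes.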

How driving mode and QoS scores drive resource allocation

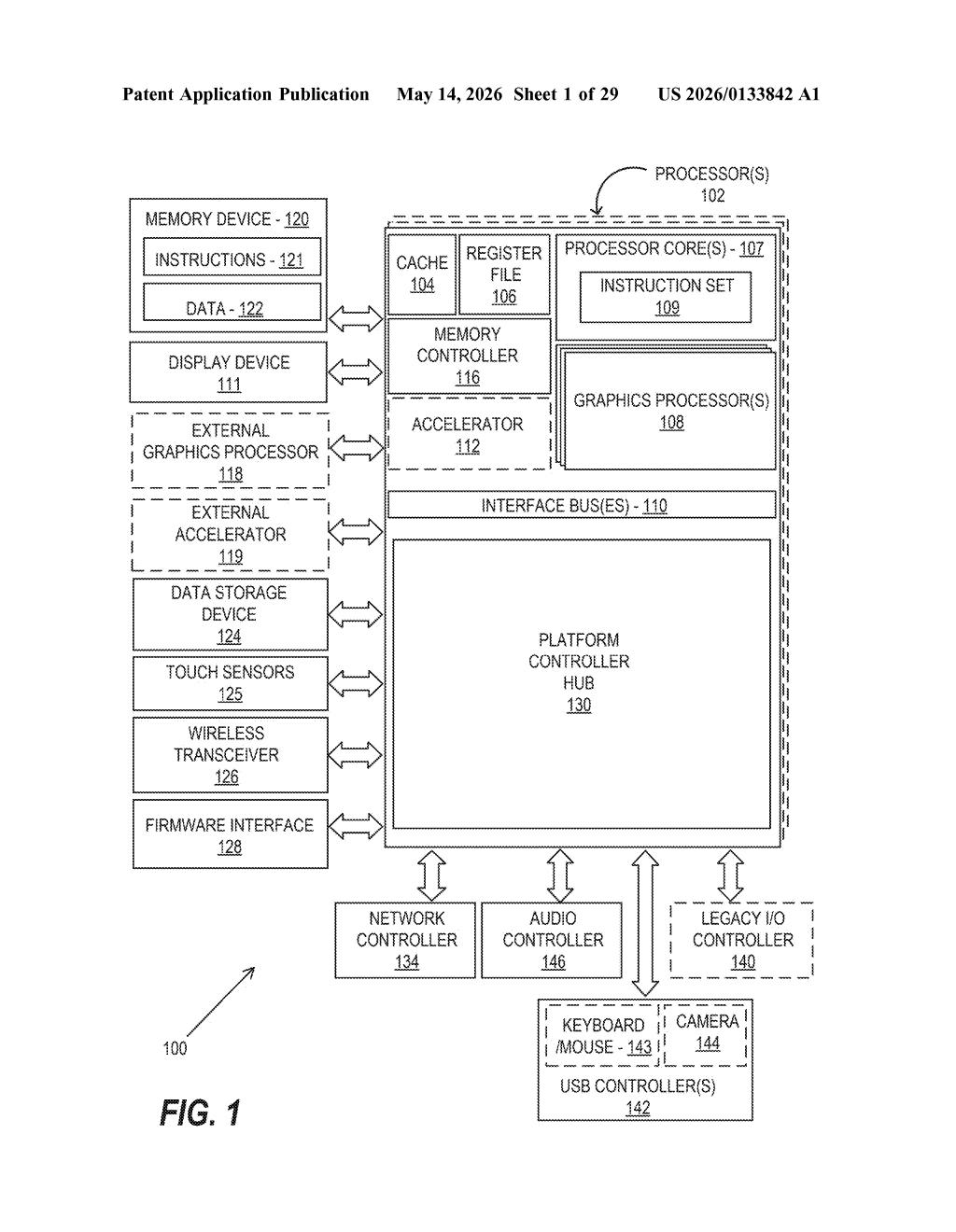

The patent covers a resource allocation system embedded in a vehicle's processor. When a task needs to run, the system consults two inputs: the current vehicle mode of operation (parked, highway driving, autonomous navigation, etc.) and the task's Quality of Service (QoS) — a priority score that reflects how time-sensitive or safety-critical the task is.

Based on those two factors, the system then adjusts a bundle of hardware-level parameters:

- Register allocation: how much of the CPU's fastest internal storage a task gets
- Cache allocation: how much of the high-speed memory buffer is reserved for the task
- Core count: how many processor cores are assigned to the task
- Memory allocation and bandwidth: how much RAM and data throughput the task can consume
- Processor operating frequency: essentially, how fast the chip runs for that task

The core innovation is tying these allocations to vehicle context rather than just software-level load metrics. A task that deserves 4 cores while the car is executing an autonomous lane change might only warrant 1 core while the vehicle is idle in a parking lot.
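One way to picture the two-input lookup is a function that takes the vehicle mode and a task's QoS level and returns a bundle of hardware settings. The sketch below is a guess at the general shape, not the patent's actual method: the `VehicleMode` values, the 1-to-4 QoS scale, the `MODE_CEILING` numbers, and the linear scaling rule are all assumptions made for illustration. Register allocation is left out to keep it short.

```python
from dataclasses import dataclass
from enum import Enum


class VehicleMode(Enum):
    PARKED = "parked"
    HIGHWAY = "highway"
    AUTONOMOUS = "autonomous"


@dataclass(frozen=True)
class Allocation:
    cores: int
    cache_kb: int
    mem_bandwidth_pct: int
    freq_mhz: int


MAX_QOS = 4  # hypothetical scale: 1 = background task, 4 = safety-critical

# Hypothetical per-mode ceilings: the most a single task can get in that mode.
MODE_CEILING = {
    VehicleMode.PARKED: Allocation(1, 256, 10, 800),
    VehicleMode.HIGHWAY: Allocation(2, 1024, 30, 1600),
    VehicleMode.AUTONOMOUS: Allocation(4, 2048, 50, 2400),
}


def allocate(mode, qos):
    """Scale the mode's ceiling by the task's QoS level (integer 1..MAX_QOS)."""
    if not 1 <= qos <= MAX_QOS:
        raise ValueError(f"qos must be between 1 and {MAX_QOS}")
    ceiling = MODE_CEILING[mode]

    def scale(value):
        # Integer scaling, never dropping below one unit of any resource.
        return max(1, value * qos // MAX_QOS)

    return Allocation(
        cores=scale(ceiling.cores),
        cache_kb=scale(ceiling.cache_kb),
        mem_bandwidth_pct=scale(ceiling.mem_bandwidth_pct),
        freq_mhz=scale(ceiling.freq_mhz),
    )


print(allocate(VehicleMode.AUTONOMOUS, 4))  # safety-critical task while driving itself
print(allocate(VehicleMode.PARKED, 4))      # the same task while parked
```

Under these assumed numbers, the same top-priority task gets four cores in autonomous mode and one core when parked, which mirrors the example above. A real design would presumably use a richer policy than a single linear scale.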

What this means for in-car compute and ADAS workloads

Modern vehicles — especially those with advanced driver assistance systems (ADAS) or full self-driving stacks — are running increasingly complex compute workloads on shared silicon. A processor that doesn't distinguish between a parking sensor task and a collision-avoidance task is leaving both safety and efficiency on the table.

For Intel, which has pursued the automotive market through its Mobileye acquisition and its broader automotive SoC roadmap, this kind of context-aware scheduling is a natural layer to add. If you're an automaker evaluating in-vehicle compute platforms, a chip that natively understands vehicle state, with no custom middleware needed to track it, is a meaningful selling point.

This is solid systems engineering applied to a real problem in automotive computing, but it's not a dramatic leap. Dynamic resource allocation based on workload priority has been a staple of server and mobile chip design for years — the novel angle here is anchoring priority to vehicle operating mode rather than purely to software signals. It's incremental, practical, and likely to show up quietly in an Intel automotive SoC spec sheet rather than a keynote.

Editorial commentary on a publicly published patent application. Not legal advice.