Nvidia Patents a Synthetic Data Pipeline for 3D Road Surface Detection

Training a self-driving AI to understand road surfaces in three dimensions requires enormous amounts of labeled data — and getting that data in the real world is slow, expensive, and dangerous. Nvidia's answer is to skip the real world entirely and build the training data in simulation.

How Nvidia teaches AI to 'see' road surfaces in 3D

Imagine trying to teach a computer to understand every bump, slope, and dip in a road — not just as a flat image, but as a full 3D surface. To do that well, you need thousands of perfectly labeled examples showing exactly what the road looks like in three dimensions. Collecting that kind of data from real cars on real roads is a logistical nightmare.

Nvidia's patent describes a system that builds all of that training data inside a virtual simulation. The system runs a simulated world, renders virtual camera images from it, and automatically creates matching depth maps (which describe how far away each point is) and segmentation masks (which identify exactly which pixels are road). No human labelers required.

The result is a clean dataset that a deep neural network can learn from — so that when the trained model is deployed in a real car, it can accurately reconstruct the 3D shape of the road surface in front of it.

How the simulated pipeline builds ground-truth road geometry

The pipeline has two main outputs for every simulated frame it generates: input training data and ground truth training data.

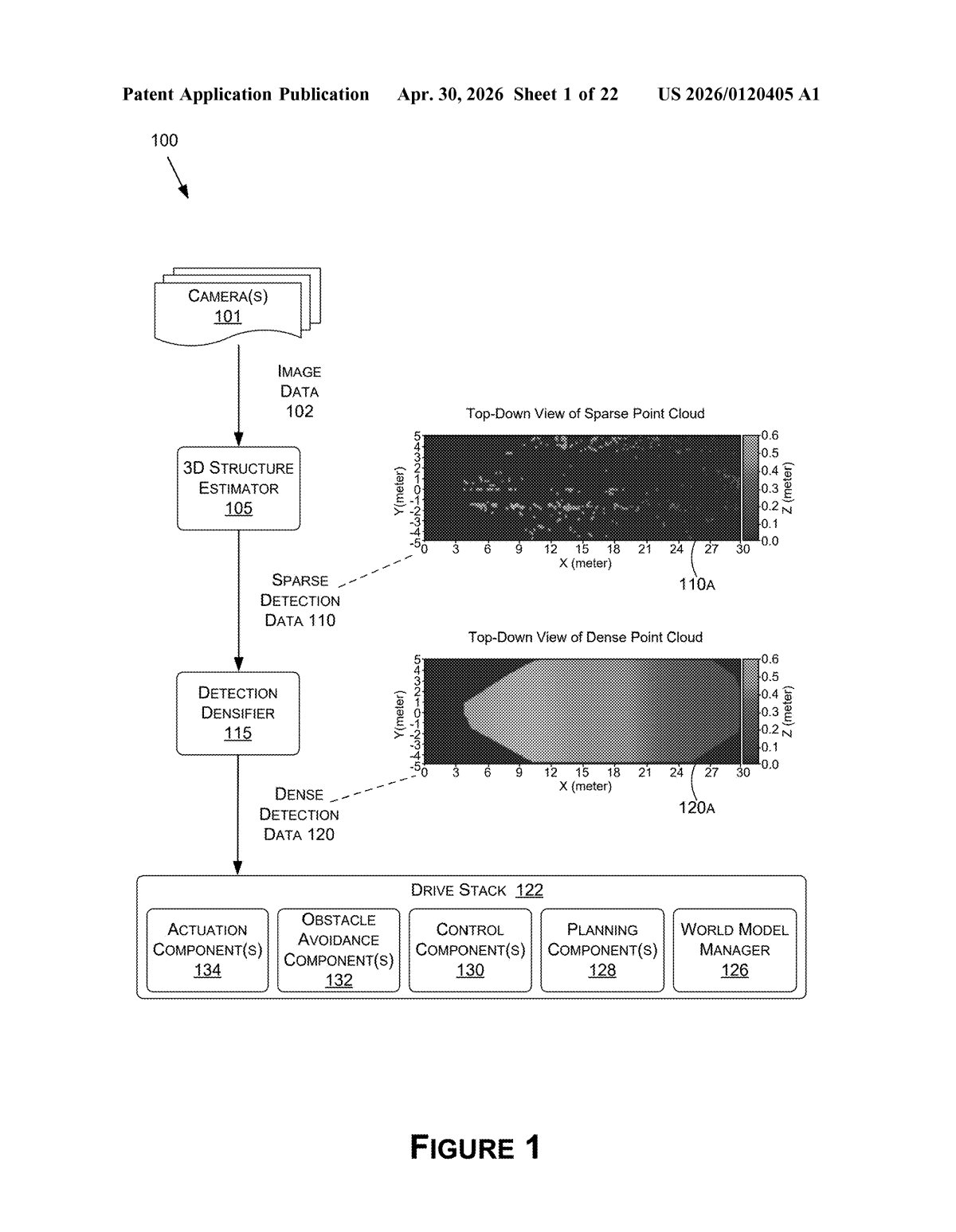

For the input side, the system takes a rendered virtual image and runs 3D structure estimation on it — essentially the same kind of algorithm a real autonomous vehicle would use to infer road geometry from camera or sensor input. This produces a point cloud (a sparse, dotted representation of 3D space) that mimics what a real sensor would generate.
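To make "sparse and noisy" concrete, here is a minimal Python sketch. It does not implement 3D structure estimation, which this article does not detail. Instead it fakes a comparable output by randomly dropping points from a dense set and jittering the ones that remain. The function name `simulate_sparse_cloud` and the parameters `keep_fraction` and `noise_std` are hypothetical, chosen only for illustration.

```python
import random
from typing import List, Tuple

Point3D = Tuple[float, float, float]


def simulate_sparse_cloud(
    dense_points: List[Point3D],
    keep_fraction: float = 0.1,
    noise_std: float = 0.05,
    seed: int = 0,
) -> List[Point3D]:
    """Stand-in for a sensor-like point cloud (NOT the patent's method).

    Keeps each point with probability `keep_fraction` and adds
    zero-mean Gaussian noise to every coordinate of the kept points.
    """
    rng = random.Random(seed)  # seeded so the result is reproducible
    sparse: List[Point3D] = []
    for x, y, z in dense_points:
        if rng.random() < keep_fraction:
            sparse.append(
                (
                    x + rng.gauss(0.0, noise_std),
                    y + rng.gauss(0.0, noise_std),
                    z + rng.gauss(0.0, noise_std),
                )
            )
    return sparse
```

Lowering `keep_fraction` or raising `noise_std` makes the input harder, which is the kind of degradation the trained model is meant to see through.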

For the ground truth side, the system uses the simulation's built-in knowledge — the depth map (exact per-pixel distance to objects) and a segmentation mask (a pixel-level label saying 'this is road, this is not road') — to generate a dense representation of the road surface. Dense here means complete and continuous, not the sparse point cloud you'd get from a real sensor.
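One way to picture the ground-truth step is the sketch below, which turns a depth map and a road mask into one 3D point per road pixel. It assumes a standard pinhole camera model and that the depth value is measured along the camera's optical axis. Neither assumption comes from the patent, and the function name and the intrinsics `fx`, `fy`, `cx`, `cy` are hypothetical.

```python
from typing import List, Tuple

Point3D = Tuple[float, float, float]


def unproject_road_pixels(
    depth: List[List[float]],
    road_mask: List[List[bool]],
    fx: float,
    fy: float,
    cx: float,
    cy: float,
) -> List[Point3D]:
    """Back-project every road pixel to a 3D point in camera coordinates.

    depth[v][u] is the assumed z-depth at pixel column u, row v.
    road_mask[v][u] is True where the pixel is labeled as road.
    """
    points: List[Point3D] = []
    for v, (depth_row, mask_row) in enumerate(zip(depth, road_mask)):
        for u, (z, is_road) in enumerate(zip(depth_row, mask_row)):
            if not is_road:
                continue  # non-road pixels are left out of the surface
            x = (u - cx) * z / fx  # pinhole model: horizontal offset
            y = (v - cy) * z / fy  # pinhole model: vertical offset
            points.append((x, y, z))
    return points
```

Because the simulator knows depth and class for every pixel, the result has a point for each road pixel, which is what makes it dense compared with the sensor-like input.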

A post-processor then pairs these two outputs into matched training examples:

- Input: noisy, sparse point cloud (like what a real car sees)

- Ground truth: clean, complete 3D surface (what you want the model to learn to predict)
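The pairing step can be sketched as a small data structure plus a matching function. This is an illustration under stated assumptions, not the patent's post-processor: it assumes each output is keyed by a frame number, and `TrainingPair` and `pair_outputs` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Point3D = Tuple[float, float, float]


@dataclass(frozen=True)
class TrainingPair:
    frame_id: int
    input_cloud: List[Point3D]   # sparse, noisy points
    ground_truth: List[Point3D]  # dense road surface points


def pair_outputs(
    inputs: Dict[int, List[Point3D]],
    truths: Dict[int, List[Point3D]],
) -> List[TrainingPair]:
    """Match input and ground truth by frame id.

    Frames that are missing either side are skipped, so every
    returned pair is complete.
    """
    shared_ids = sorted(inputs.keys() & truths.keys())
    return [TrainingPair(fid, inputs[fid], truths[fid]) for fid in shared_ids]
```

Keying on the frame keeps each noisy input tied to the surface rendered from the same simulated moment, which is the whole point of the pairing.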

The deep neural network (DNN) trains on thousands of these simulated pairs, learning to turn a sparse point cloud into accurate dense 3D road geometry, so that it can do the same with real sensor data once deployed.
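Training on such pairs needs some measure of how far a prediction is from the ground truth. The article does not say which loss the patent uses, so the following is only an assumed example: a mean absolute error over road pixels, with the prediction and ground truth both represented as height grids. The name `masked_l1_loss` and the grid representation are hypothetical.

```python
from typing import List


def masked_l1_loss(
    predicted: List[List[float]],
    target: List[List[float]],
    road_mask: List[List[bool]],
) -> float:
    """Mean absolute height error, counted only where road_mask is True."""
    total = 0.0
    count = 0
    for pred_row, target_row, mask_row in zip(predicted, target, road_mask):
        for pred, truth, is_road in zip(pred_row, target_row, mask_row):
            if is_road:
                total += abs(pred - truth)
                count += 1
    # With no road pixels there is nothing to penalize.
    return total / count if count else 0.0
```

Masking the error to road pixels means the model is not penalized for whatever it predicts over sidewalks, vehicles, or sky.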

What this means for autonomous vehicle training at scale

For autonomous vehicles, understanding road geometry in 3D (not just 'there's a road here' but 'the road curves and slopes like this') is critical for safe navigation, especially at speed or in poor conditions. A major bottleneck has been getting enough labeled training data. Synthetic pipelines like this one let Nvidia (and its automotive customers) generate labeled training data at whatever scale their compute allows, without sending a test vehicle out to collect it or paying people to annotate it.

This also feeds directly into Nvidia's DRIVE platform strategy: the more capable their simulation and training tooling, the harder it becomes for automakers to switch to a competitor's stack. A robust synthetic data pipeline is infrastructure-level leverage in the AV industry.

This is unglamorous but genuinely important work. The hard part of autonomous driving has always been data, not algorithms — and patents like this one show Nvidia systematically closing that gap through simulation. It's less exciting than a new chip announcement, but it may matter more in practice.


Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.