Nvidia Patents a Self-Correcting Sim-to-Real Training Loop for Robots

Getting a robot to work in simulation is hard enough. Getting it to work the same way in the real world, where physics is messy and unpredictable, is the part that usually breaks everything. Nvidia's new patent application takes aim at exactly that gap.

How Nvidia teaches robots to learn from real-world failures

Imagine you're teaching someone to catch a ball using only a video game. The game's physics are close to real life but not perfect: perhaps the ball moves a little too fast, or gravity feels slightly off. When your trainee steps into the real world, those small differences add up and they keep dropping the ball.

Nvidia's proposed system tackles this by watching what actually happens when a robot attempts a task in the real world, then going back and adjusting the simulation to better match reality. Think of it as the simulation learning from the robot's mistakes, not just the other way around.

Once the simulation is updated, the robot retrains on it — and the cycle repeats. Each round, the simulation gets closer to the real world, and the robot gets better at the task. Over time, you end up with both a more accurate simulation and a robot that can actually do the job.

How the sim parameters update after each real-world attempt

The core idea is a sim-to-real feedback loop — a process where a robot's real-world performance is used to calibrate the virtual environment it was trained in.

Here's the sequence:

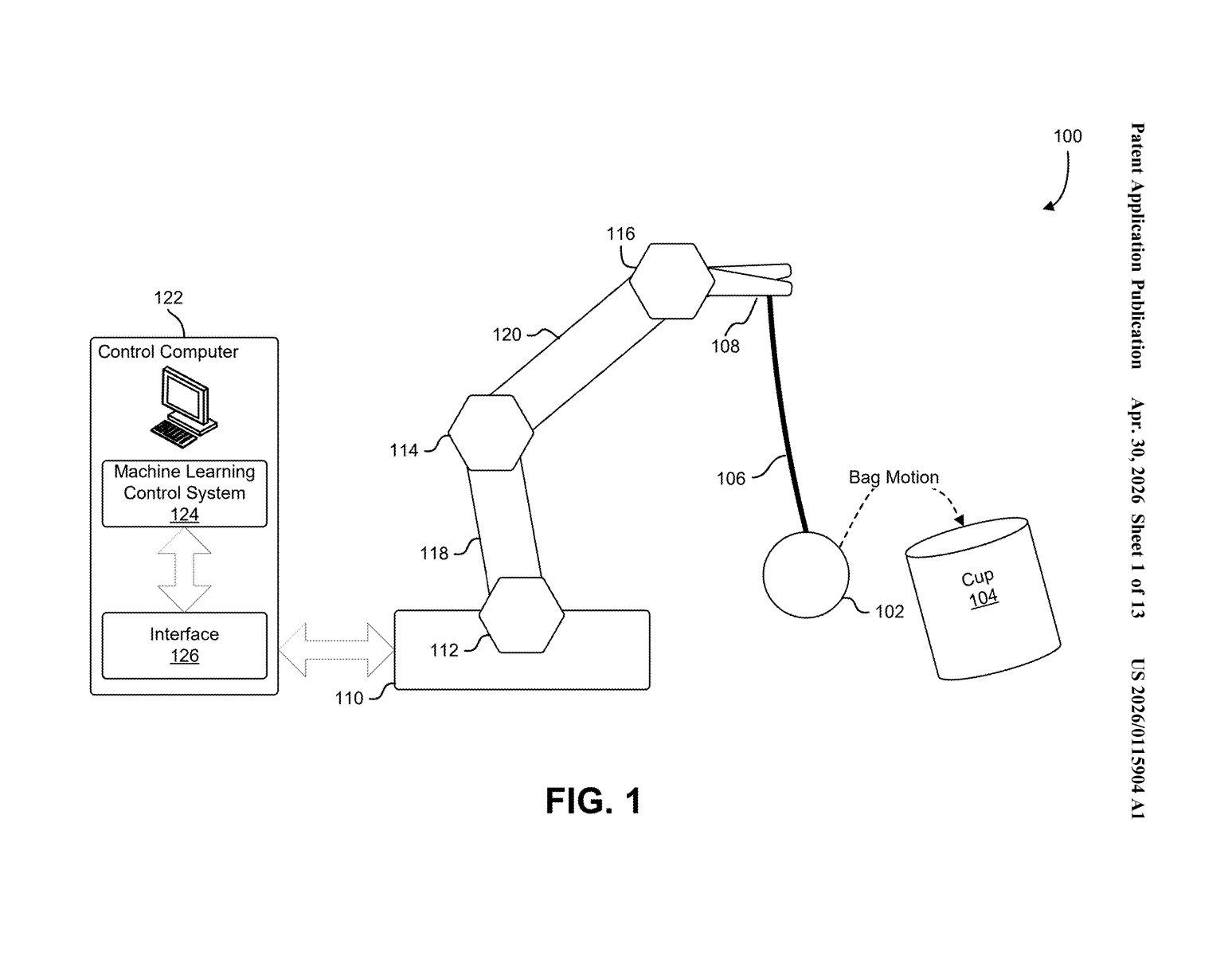

- The system starts by training a machine-learning controller inside a simulation, where physical properties (like friction, object weight, or joint stiffness) are initialized with best-guess values and allowed ranges.

- The trained robot then attempts the task in the real world, and sensors capture what actually happened — how the object moved, how much force was applied, etc.

- Those measured outcomes are compared against what the simulation predicted. The gap between the two is used to adjust the simulation's parameters — nudging things like surface friction or mass until the simulated result matches the real one.

- The robot is then retrained on the updated simulation, and the whole loop runs again.
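
The four steps above can be sketched as a toy loop. Everything here is a hypothetical stand-in, not Nvidia's implementation: the "simulator" is a one-line friction model of a pushed block (slide distance = v^2 / (2 * mu * g)), the "real world" is the same model with a hidden friction of 0.42 plus sensor noise, "training" is simply solving for the push speed that hits a target distance, and calibration is a brute-force grid search over the allowed friction range. All function names are illustrative.

```python
import math
import random

G = 9.81  # gravitational acceleration, m/s^2


def simulate_slide(push_speed: float, friction: float) -> float:
    """Hypothetical toy simulator: distance a pushed block slides before stopping."""
    return push_speed ** 2 / (2 * friction * G)


def real_world_slide(push_speed: float, rng: random.Random,
                     true_friction: float = 0.42) -> float:
    """Stand-in for the physical robot: hidden true friction plus sensor noise."""
    return simulate_slide(push_speed, true_friction) + rng.gauss(0.0, 0.005)


def train_controller(friction: float, target: float) -> float:
    """Stand-in for policy training: the push speed that hits `target` in simulation."""
    return math.sqrt(2 * friction * G * target)


def calibrate(observations, low: float, high: float, steps: int = 2000) -> float:
    """Grid-search the friction whose simulated outcomes best match the measurements."""
    best_mu, best_err = low, float("inf")
    for i in range(steps + 1):
        mu = low + (high - low) * i / steps
        err = sum((simulate_slide(v, mu) - d) ** 2 for v, d in observations)
        if err < best_err:
            best_mu, best_err = mu, err
    return best_mu


def sim_to_real_loop(target: float = 0.5, rounds: int = 5, seed: int = 0):
    """Run the train / deploy / calibrate cycle; return (friction_estimate, miss) per round."""
    rng = random.Random(seed)
    friction, low, high = 0.8, 0.1, 1.0  # best-guess value and allowed range
    observations, history = [], []
    for _ in range(rounds):  # step 4: repeat the whole loop
        speed = train_controller(friction, target)      # step 1: train in simulation
        measured = real_world_slide(speed, rng)         # step 2: attempt the real task
        observations.append((speed, measured))
        friction = calibrate(observations, low, high)   # step 3: shrink the sim-to-real gap
        history.append((friction, abs(measured - target)))
    return history
```

Calling `sim_to_real_loop()` returns one `(friction_estimate, miss_distance)` pair per round. The first real attempt overshoots badly because the initial friction guess of 0.8 is too high. After a single calibration the estimate lands near the hidden 0.42, and later attempts hit the target to within roughly the sensor noise. A real system would swap the grid search for a proper optimizer and the analytic controller for a learned policy.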

The key technical insight is treating simulation parameters not as fixed values but as ranges with uncertainty (similar in spirit to Bayesian inference — the idea that you start with a best guess and update it as you see evidence). As more real-world attempts happen, those ranges narrow, and the simulation becomes a tighter and tighter model of physical reality.
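
Treating a parameter as a range with uncertainty can be illustrated with a grid-based Bayesian update over a single friction coefficient. This is a minimal sketch under stated assumptions, not the estimator described in the patent: it reuses a hypothetical pushed-block model, assumes Gaussian sensor noise with a known standard deviation, and starts from a flat prior over an allowed range of 0.1 to 1.0.

```python
import math
import random

G = 9.81  # gravitational acceleration, m/s^2


def simulate_slide(push_speed, friction):
    """Hypothetical toy simulator: slide distance of a pushed block."""
    return push_speed ** 2 / (2 * friction * G)


def posterior_update(grid, weights, push_speed, measured, noise_std):
    """Multiply prior weights by a Gaussian likelihood of the measurement, then renormalize."""
    new = []
    for mu, w in zip(grid, weights):
        residual = measured - simulate_slide(push_speed, mu)
        new.append(w * math.exp(-0.5 * (residual / noise_std) ** 2))
    total = sum(new)
    return [w / total for w in new]


def summarize(grid, weights):
    """Mean and standard deviation of the current belief about friction."""
    mean = sum(m * w for m, w in zip(grid, weights))
    var = sum(w * (m - mean) ** 2 for m, w in zip(grid, weights))
    return mean, math.sqrt(var)


def run(true_friction=0.42, noise_std=0.02, attempts=10, seed=1):
    """Simulate real-world attempts and track how the friction belief narrows."""
    rng = random.Random(seed)
    grid = [0.1 + 0.9 * i / 999 for i in range(1000)]  # allowed range [0.1, 1.0]
    weights = [1.0 / len(grid)] * len(grid)             # flat prior over that range
    history = [summarize(grid, weights)]
    for _ in range(attempts):
        speed = rng.uniform(1.0, 3.0)  # hypothetical push speed for this attempt
        measured = simulate_slide(speed, true_friction) + rng.gauss(0.0, noise_std)
        weights = posterior_update(grid, weights, speed, measured, noise_std)
        history.append(summarize(grid, weights))
    return history
```

Each entry returned by `run()` is a `(mean, std)` summary of the belief about friction. The first entry's spread is about 0.26, the standard deviation of a flat prior over 0.1 to 1.0. After ten simulated attempts it shrinks to a small fraction of that, and the mean sits close to the hidden value of 0.42: the "ranges narrow" behavior in concrete form.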

The patent specifically describes an example involving the motion of a bag, which suggests the approach is aimed at deformable objects, one of the hardest categories for robotic manipulation.

What this means for deploying robots outside the lab

The biggest wall between a robot that works in a lab and one that works in a warehouse is the sim-to-real gap. Simulations are cheap and fast for training, but real-world physics never quite matches the model. Many approaches try to paper over this gap with domain randomization: training across thousands of slightly different simulated environments so the robot becomes robust enough that the real world looks like just one more variation. Nvidia's approach is more targeted: it measures the gap and closes it iteratively.

For you as an end user, this matters because it's a path toward robots that can be deployed faster and more reliably — without requiring armies of engineers to hand-tune every physical parameter for every new environment. If this works at scale, it could meaningfully shorten the time between "robot trained in simulation" and "robot useful on a factory floor."

This is one of the more technically coherent robotics patents to come out of a big tech lab recently — it's not just a vague AI claim, it's a specific algorithmic loop with a clear problem (the sim-to-real gap) and a concrete mechanism (parameter refinement via real-world comparison). The team behind it — including Dieter Fox and Nathan Ratliff, both serious names in robotics research — gives it additional credibility. Worth following as Nvidia pushes harder into physical AI.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.