Apple Patents a Hybrid Indoor-Outdoor Positioning System Using Sensors and Camera Poses

GPS falls apart the moment you step inside a building. An Apple patent application describes a system that uses ambient sensor readings to anchor your device's position before a camera even takes a picture.

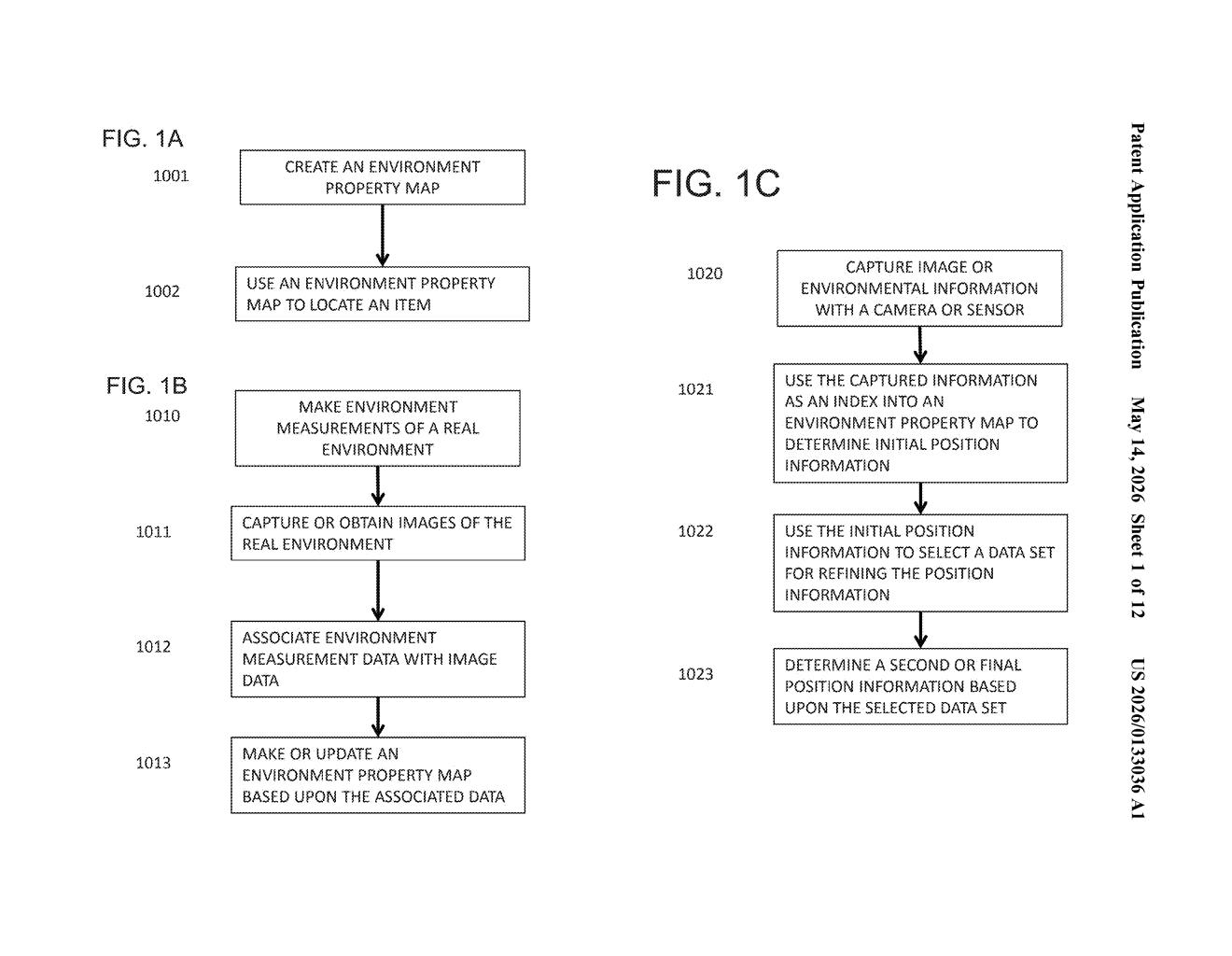

What Apple's environment property map actually does

Imagine you're navigating a large shopping mall or airport. Your phone's GPS signal is weak or nonexistent, but the phone is still quietly measuring things like Wi-Fi signal strength, magnetic field variations, or barometric pressure. Apple's patent describes treating those ambient sensor readings as a kind of fingerprint and matching it against reference data stored in an environment property map, which is essentially a pre-built lookup table of what the environment "feels like" at different locations.

Once that map gives your device a rough idea of where it is, the phone's camera kicks in. The system uses that coarse location to dramatically narrow down the harder problem of figuring out exactly where the camera is pointing and how it's oriented — what engineers call a camera pose.

The result is a two-step positioning system: cheap, fast sensor data produces a rough fix first, and the camera then refines it into a precise one. You get better location accuracy indoors, where GPS can't help, without burning through battery on expensive computations the whole time.

How sensor readings guide Apple's camera pose estimation

The patent describes a pipeline with two distinct stages. First, one or more non-image sensors on a mobile device — think magnetometers, barometers, Wi-Fi receivers, or similar environmental detectors — capture a snapshot of ambient conditions at a given location. These readings are compared against an environment property map, a pre-constructed database that associates those kinds of sensor signatures with known physical positions.
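To make the first stage concrete, here is a minimal sketch of fingerprint matching as a nearest-neighbor lookup. Everything in it is an assumption for illustration: the map contents, the three sensor channels, the per-channel scales, and the function names are hypothetical, and the patent does not specify this particular matching method.

```python
import math

# Hypothetical environment property map: (x_m, y_m, floor) -> reference reading.
# Each reading is (magnetic field in microtesla, pressure in hPa, Wi-Fi RSSI in dBm).
# All values are made up for illustration.
ENVIRONMENT_MAP = {
    (0.0, 0.0, 1): (48.2, 1013.1, -45.0),
    (10.0, 0.0, 1): (51.7, 1013.1, -60.0),
    (0.0, 10.0, 1): (46.9, 1013.0, -72.0),
    (0.0, 0.0, 2): (48.5, 1012.6, -50.0),
}

# Assumed per-channel scales so that no single unit dominates the distance.
SCALES = (2.0, 0.2, 5.0)


def fingerprint_distance(a, b):
    """Scaled Euclidean distance between two sensor readings."""
    return math.sqrt(sum(((x - y) / s) ** 2 for x, y, s in zip(a, b, SCALES)))


def coarse_location(reading, env_map=ENVIRONMENT_MAP):
    """Return the map position whose stored reading is closest to `reading`."""
    return min(env_map, key=lambda pos: fingerprint_distance(reading, env_map[pos]))


if __name__ == "__main__":
    live_reading = (51.0, 1013.1, -58.0)
    print(coarse_location(live_reading))
```

A real system would likely interpolate between map entries and report an uncertainty rather than a single best match; the nearest-neighbor lookup is only the simplest form of the idea.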

That comparison produces location information: a coarse but quick estimate of where the device is. Crucially, the patent frames this as a pruning step. Instead of asking the camera system to search every visual reference it knows about for a match, the device searches only a small geographic window around the coarse estimate.
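The pruning idea can be sketched as a simple radius filter over stored visual references. This is an illustrative assumption, not the patent's stated mechanism: the `ReferenceImage` type, the `prune_candidates` function, and the 15-meter radius are all hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ReferenceImage:
    """A stored visual reference tagged with the position where it was captured."""
    image_id: str
    x: float  # meters
    y: float  # meters


def prune_candidates(references, coarse_xy, radius_m):
    """Keep only the references captured within radius_m of the coarse estimate."""
    cx, cy = coarse_xy
    return [r for r in references if math.hypot(r.x - cx, r.y - cy) <= radius_m]


if __name__ == "__main__":
    references = [
        ReferenceImage("gate_a", 2.0, 3.0),
        ReferenceImage("food_court", 40.0, 5.0),
        ReferenceImage("gate_b", 8.0, -4.0),
        ReferenceImage("parking", 120.0, 90.0),
    ]
    # Coarse estimate from the sensor stage; the radius is an assumed tuning value.
    candidates = prune_candidates(references, coarse_xy=(5.0, 0.0), radius_m=15.0)
    print([r.image_id for r in candidates])
```

Only the surviving candidates would then be handed to the more expensive image-matching step, which is where the claimed savings come from.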

With that window established, the device's camera captures image data, and a camera pose (the precise position and orientation of the camera in 3D space) is computed. Because the search space has already been narrowed, this visual localization step is faster and more computationally efficient.

The environment property map itself can be built ahead of time using earlier sensor sweeps and captured images — essentially a mapping pass before the real-time localization is needed. The system is designed to work both indoors and outdoors, bridging the gap where GPS alone fails.
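One plausible way to picture that mapping pass is to bucket survey readings into grid cells and average them. The sketch below assumes a flat 2D grid, two sensor channels, and a 5-meter cell size; none of these details come from the patent, and `build_environment_map` is a hypothetical name.

```python
from collections import defaultdict
from statistics import fmean


def build_environment_map(samples, cell_size=5.0):
    """Average survey readings per grid cell.

    samples: iterable of ((x_m, y_m), reading) pairs, where reading is a
             tuple of sensor values with the same channels in the same order.
    Returns a dict mapping (cell_col, cell_row) -> tuple of channel means.
    """
    cells = defaultdict(list)
    for (x, y), reading in samples:
        key = (int(x // cell_size), int(y // cell_size))
        cells[key].append(reading)
    # zip(*readings) regroups the readings by channel before averaging.
    return {
        key: tuple(fmean(channel) for channel in zip(*readings))
        for key, readings in cells.items()
    }


if __name__ == "__main__":
    # Made-up sweep: (magnetic field in microtesla, Wi-Fi RSSI in dBm).
    survey = [
        ((1.0, 1.0), (48.0, -40.0)),
        ((2.0, 3.0), (50.0, -44.0)),
        ((7.0, 1.0), (52.0, -60.0)),
    ]
    print(build_environment_map(survey))
```

Averaging per cell trades spatial detail for robustness to noisy individual readings; a finer grid or a per-cell variance estimate would be natural refinements.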

What this means for iPhone navigation and AR experiences

For everyday users, this kind of system could be a missing piece for reliable indoor navigation: think turn-by-turn directions inside airports, hospitals, or warehouses, potentially without requiring special hardware installations. It also matters a lot for augmented reality, because any AR overlay that needs to stick to a real-world surface has to know exactly where the camera is, and doing that indoors is notoriously hard.

From a strategic angle, Apple has been investing heavily in spatial computing with Vision Pro and ARKit. A robust, sensor-assisted localization system that works whether you're outside on a street or inside a stadium could underpin more precise AR anchoring, improved Maps navigation, and tighter integration between the physical world and on-device experiences.

This is solid, practical infrastructure work rather than a flashy concept. The two-stage approach — cheap sensors first, camera refinement second — is a smart engineering trade-off that addresses a real gap in mobile positioning. Given Apple's push into spatial computing and the known limitations of GPS in dense urban and indoor environments, this feels like foundational plumbing that would quietly improve a lot of downstream features.

Editorial commentary on a publicly published patent application. Not legal advice.