Google Patents a Sandboxed Ad Auction System Built on Trusted Execution Environments

Google is patenting a way to run ad auctions inside hardware-enforced sandboxes so that the code doing the bidding can never leak your personal data — even to Google's own servers.

What Google's sandboxed ad-selection system actually does

Imagine your browser asks a server to show you an ad. Normally, that server's ad-selection code can see everything — your browsing context, your location, your past behavior — and could, in theory, send that data anywhere. Google's new patent tries to close that loophole by wrapping the entire auction process in a locked box.

The locked box is called a sandbox, and it runs inside a trusted execution environment (TEE) — a secure enclave baked into the hardware itself. The ad-selection code runs in there, picks a winner, and only the result comes back out. The code can't phone home with your data because the sandbox literally won't let it.

What makes this different is the multi-stage design: each step of the auction, including steps supplied by different ad platforms, runs in its own isolated sandbox. No single piece of code ever sees the full picture, and your raw data stays behind the wall.

How the TEE isolates each workflow stage in its own sandbox

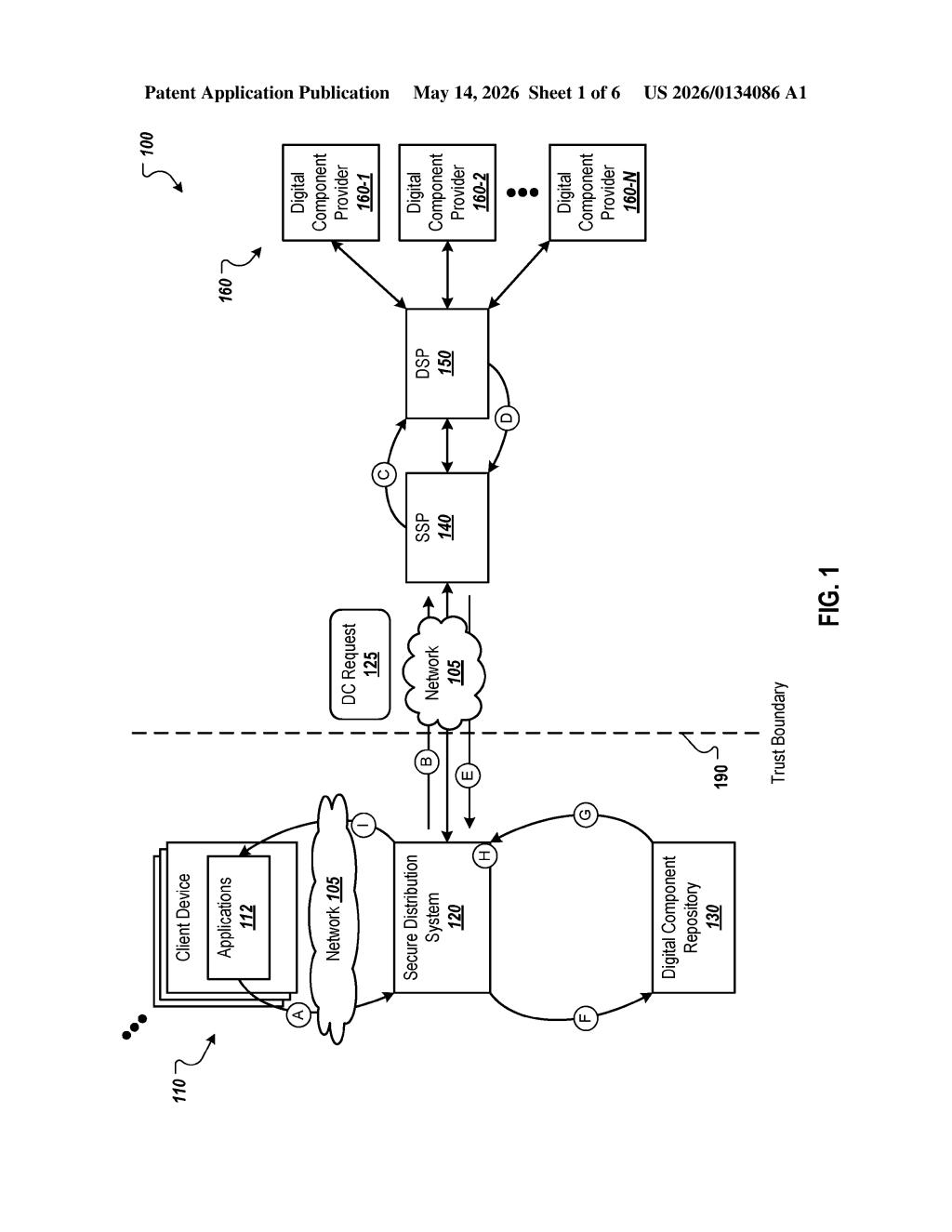

When a client device requests a digital component (Google's term for an ad), the server identifies a set of multi-stage workflows — essentially auction pipelines, one per ad platform. Each workflow can be customized by the platform but must run within strict boundaries.

The key enforcement mechanism is a Trusted Execution Environment (TEE) — a hardware-isolated compute zone (think Intel SGX or AMD SEV) that the host OS and server software cannot inspect or tamper with. Each workflow stage runs in a separate sandbox inside this TEE, meaning:

- The workflow code can receive user-context data as input

- It can compute selection parameters (bid values, targeting signals)

- It cannot exfiltrate that input data to any external destination

Once all workflow stages complete, the server receives only the output data — selection parameters and candidate rankings — not the raw user data that went in. The server then picks a winning digital component and serves it to the client.
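To make the flow above concrete, here is a minimal Python sketch of the idea. It is not Google's implementation and it does not use a real TEE: the "sandbox" is simulated by handing each stage a private copy of the input and letting only numeric selection parameters come back out. Every name here (`run_stage_sandboxed`, `run_workflow`, `select_winner`, the example stages, and the `bid` key) is hypothetical and chosen for illustration.

```python
import copy
from typing import Callable, Dict, List

# Hypothetical types: the patent does not define an API.
Params = Dict[str, float]                  # selection parameters, e.g. {"bid": 2.7}
Stage = Callable[[dict, Params], Params]   # (user_context, params_so_far) -> new params


def run_stage_sandboxed(stage: Stage, user_context: dict, params: Params) -> Params:
    """Simulated sandbox: the stage works on private copies of its inputs,
    and only a flat dict of named numbers is allowed to leave."""
    out = stage(copy.deepcopy(user_context), dict(params))
    if not isinstance(out, dict) or not all(
        isinstance(k, str) and isinstance(v, (int, float)) for k, v in out.items()
    ):
        raise ValueError("stage output must be a dict of named numeric parameters")
    return out


def run_workflow(stages: List[Stage], user_context: dict) -> Params:
    """Run one platform's stages in order, each in its own simulated sandbox."""
    params: Params = {}
    for stage in stages:
        params.update(run_stage_sandboxed(stage, user_context, params))
    return params


def select_winner(workflows: Dict[str, List[Stage]], user_context: dict) -> str:
    """The server sees only each workflow's output parameters, then picks the top bid."""
    results = {name: run_workflow(stages, user_context) for name, stages in workflows.items()}
    return max(results, key=lambda name: results[name].get("bid", 0.0))


# Example stages (assumed, for illustration only).
def relevance(ctx: dict, params: Params) -> Params:
    return {"relevance": 0.9 if ctx.get("topic") == "cycling" else 0.2}


def bid_from_relevance(ctx: dict, params: Params) -> Params:
    return {"bid": 3.0 * params["relevance"]}


def flat_bid(ctx: dict, params: Params) -> Params:
    return {"bid": 1.0}


workflows = {
    "platform_a": [relevance, bid_from_relevance],
    "platform_b": [flat_bid],
}
winner = select_winner(workflows, {"topic": "cycling", "region": "EU"})
```

In a real deployment the isolation would come from the hardware and the sandbox runtime, not from copying Python dictionaries. The point of the sketch is the shape of the data flow: user context goes in, and only selection parameters come out.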

The patent also describes an output validity check before serving, which suggests the system can detect a workflow that tries to smuggle data out through the output payload itself. That is a subtle but important defense against covert-channel leakage, where data is hidden inside an otherwise legitimate output.
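What might such a validity check look like? The article's source only says a check happens before serving, so the rules below are assumptions: a fixed set of allowed fields, a candidate ID that must already be known to the server, and a bid inside a bounded range. The names `is_valid_output`, `ALLOWED_KEYS`, and `MAX_BID` are hypothetical.

```python
from typing import Set

# Assumed policy values, for illustration only.
ALLOWED_KEYS = {"candidate_id", "bid"}
MAX_BID = 100.0


def is_valid_output(output: dict, known_candidates: Set[str]) -> bool:
    """Reject outputs with extra fields, unknown candidates, or out-of-range bids.

    Narrowing what an output may contain limits how much hidden data
    a misbehaving workflow could pack into it.
    """
    if not isinstance(output, dict) or set(output) != ALLOWED_KEYS:
        return False
    candidate_id, bid = output["candidate_id"], output["bid"]
    if not isinstance(candidate_id, str) or candidate_id not in known_candidates:
        return False
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(bid, bool) or not isinstance(bid, (int, float)):
        return False
    # NaN fails this range comparison, so it is rejected as well.
    return 0.0 <= bid <= MAX_BID


candidates = {"ad-a", "ad-b"}
ok = is_valid_output({"candidate_id": "ad-a", "bid": 2.7}, candidates)
smuggled = is_valid_output(
    {"candidate_id": "ad-a", "bid": 2.7, "note": "user@example.com"}, candidates
)
```

Here `ok` is `True` and `smuggled` is `False`, because the extra `note` field is not in the allowed set. A check like this cannot close every channel (a bid value can still encode a few bits), which is why it complements the sandbox rather than replacing it.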

What this means for privacy-preserving ad tech

Ad tech is under serious pressure, from regulations such as GDPR and from industry shifts such as Google's own Privacy Sandbox initiative, to stop treating user data as a free-flowing resource. This patent describes a technical architecture that could let Google offer privacy guarantees by construction, not just by policy. If the sandbox truly prevents data exfiltration, it's not a promise you have to trust; it's a constraint the hardware enforces.

For you as a user, this could mean ad auctions run using your data without any party, including Google, being able to extract and store that data in identifiable form. For ad platforms bidding in the system, it changes the game: they can participate in auctions without Google (or rivals) seeing their bidding logic. It's a structural shift, and it aligns closely with where browser-level privacy APIs like the Protected Audience API are already heading.

This is a meaningful patent, not a fluffy one. It's Google building a technical moat around its Privacy Sandbox vision — the same direction as the Chrome-level Protected Audience API, now applied at the server infrastructure layer. The TEE-plus-sandbox combination is a real engineering approach to a real problem, and filing it as a patent signals Google intends to own this architecture as the industry moves away from third-party cookies.

Editorial commentary on a publicly published patent application. Not legal advice.