Microsoft Patents a System That Rewrites Your AI Prompt When You Flag a Bad Answer

When an AI gives you a wrong or useless answer, most systems just shrug and wait for you to rephrase manually. Microsoft's new patent describes a system that takes your complaint, figures out what additional information would actually help, and rewrites the prompt for you automatically.

How Microsoft's feedback loop fixes bad AI answers

Imagine you ask an AI assistant for a summary of a report, and it comes back with something totally off-base. Right now, fixing that usually means doing the work yourself: rephrasing, adding context, trying again. It's tedious, and most people have no idea which part of their original prompt was the problem.

Microsoft's new patent describes a smarter loop. When you flag an answer as wrong or unhelpful, the system doesn't just note your frustration — it analyzes your feedback, figures out what extra information would have led to a better answer, and then packages that into a revised prompt. You'd interact with a small UI element (what the patent calls a "feedback icon") to confirm or trigger the rewrite.

The key step is something Microsoft calls an "actionability check": a test of whether the extra information is something the AI can actually work with, not just a vague improvement. If it passes, the system builds the updated prompt, sends it to the model, and you get a new, hopefully better, response.

How the actionability check and feedback icon work together

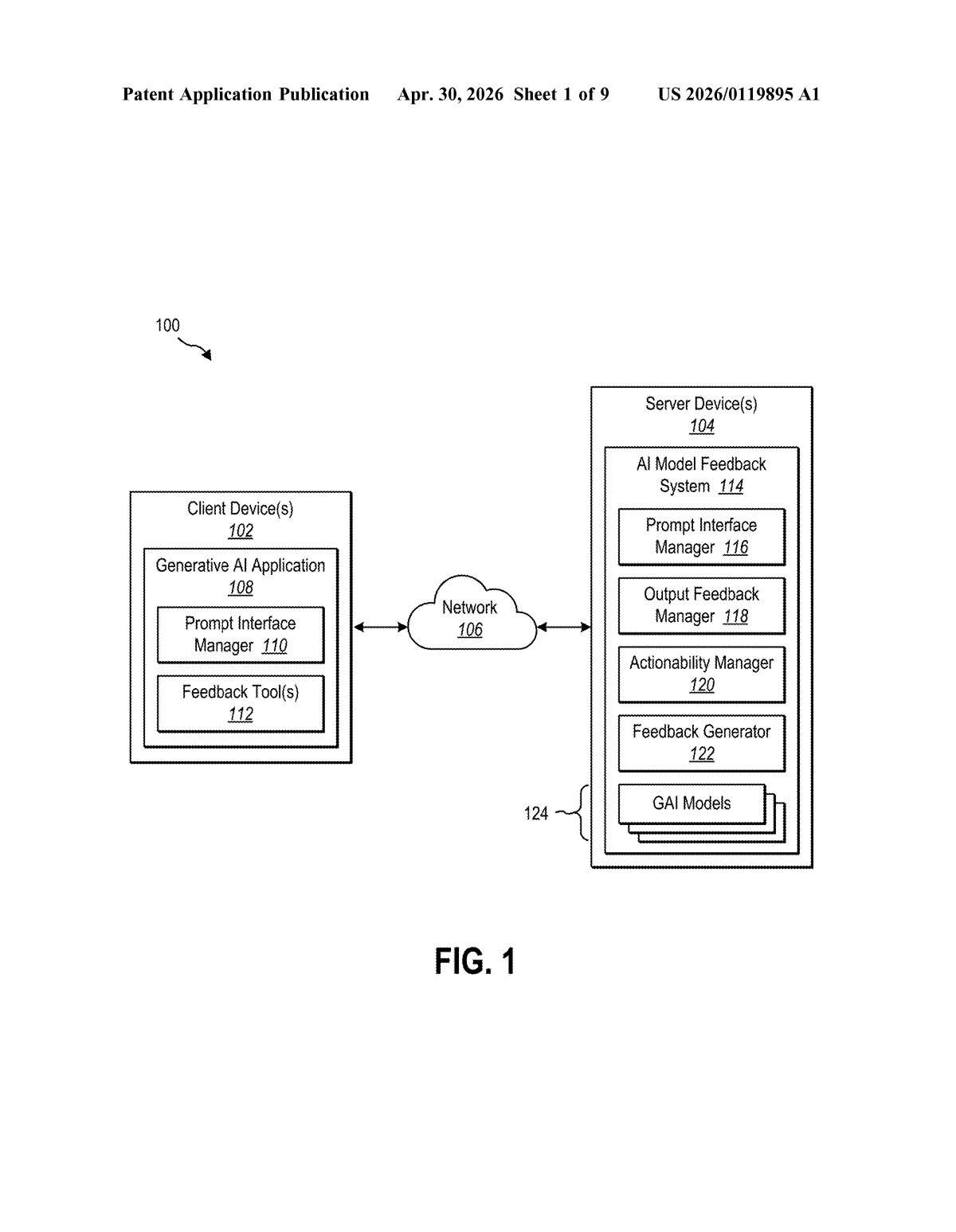

The system sits between you and the underlying AI model, acting as a kind of prompt quality manager. Here's the basic flow:

- You flag a bad output — the system surfaces a feedback request (a structured prompt asking what was wrong).

- Actionability check — before doing anything with your feedback, the system runs it through a check to verify that the additional information you've provided would actually be usable by the AI model (i.e., it's not too vague, contradictory, or out of scope).

- Feedback icon generation — the system creates an interactive UI element that surfaces the proposed additions so you can review or trigger the rewrite.

- Modified prompt generation — your original prompt and the new contextual items are merged into a single updated prompt.

- New output — the revised prompt is fed back into the generative AI model to produce an improved response.
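The five steps above can be sketched in Python. This is a minimal illustration under stated assumptions, not the patent's implementation: every name here (`FeedbackIcon`, `merge_prompt`, `repair_prompt`, `is_actionable`, `auto_confirm`) is hypothetical, the model is stubbed as a plain callable, and the merge format is invented for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class FeedbackIcon:
    """Hypothetical stand-in for the interactive UI element."""
    proposed_items: List[str]
    confirmed: bool = False

    def confirm(self) -> None:
        # Simulates the user clicking the icon to trigger the rewrite.
        self.confirmed = True


def merge_prompt(original: str, items: List[str]) -> str:
    """Merge the original prompt and the new contextual items into one prompt."""
    context = "\n".join(f"- {item}" for item in items)
    return f"{original}\n\nAdditional context:\n{context}"


def repair_prompt(
    original_prompt: str,
    feedback_items: List[str],        # step 1: what the user said was wrong
    is_actionable: Callable[[str], bool],
    model: Callable[[str], str],
    auto_confirm: bool = True,
) -> Optional[str]:
    # Step 2: keep only the feedback that the check considers usable.
    usable = [item for item in feedback_items if is_actionable(item)]
    if not usable:
        return None  # nothing worth adding, so no rewrite happens

    # Step 3: surface the proposed additions for review.
    icon = FeedbackIcon(proposed_items=usable)
    if auto_confirm:
        icon.confirm()
    if not icon.confirmed:
        return None

    # Step 4: build the modified prompt.
    modified = merge_prompt(original_prompt, icon.proposed_items)

    # Step 5: send the modified prompt back to the model.
    return model(modified)
```

Passing the check and the model in as callables keeps the sketch independent of any particular product; in a real system both would presumably be far more involved than a one-line function.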

The "actionability check" is the most technically interesting piece. Rather than blindly appending your feedback to the prompt, the system evaluates whether a modified prompt would meaningfully change the model's behavior. Think of it as a filter that stops unhelpful noise from making things worse.
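To make the filter idea concrete, here is a deliberately naive sketch in Python. The patent summary does not say how the check is implemented, so everything below is an assumption: the phrase list, the three-word threshold, the keyword-overlap test for scope, and the function names are all invented for illustration. Detecting contradictory feedback is left out because it would need real language understanding.

```python
import re
from typing import List, Set

# Hypothetical examples of feedback too vague to act on.
VAGUE_PHRASES = {"make it better", "this is wrong", "try again", "bad", "fix it"}


def is_vague(item: str) -> bool:
    """Treat known filler phrases and very short feedback as vague."""
    text = item.strip().lower().rstrip(".!")
    return text in VAGUE_PHRASES or len(text.split()) < 3


def is_out_of_scope(item: str, allowed_topics: Set[str]) -> bool:
    """Out of scope if the feedback shares no keyword with the task's topics."""
    words = set(re.findall(r"[a-z0-9]+", item.lower()))
    return not (words & allowed_topics)


def actionable_items(items: List[str], allowed_topics: Set[str]) -> List[str]:
    """Keep only feedback that is neither vague nor out of scope."""
    return [
        item
        for item in items
        if not is_vague(item) and not is_out_of_scope(item, allowed_topics)
    ]


feedback = [
    "Fix it",
    "Focus on the Q3 revenue figures",
    "Tell me a joke about cats",
]
topics = {"report", "summary", "revenue", "q3"}
kept = actionable_items(feedback, topics)
```

Here only the second item survives: the first is too vague and the third has nothing to do with the report. A production check would more plausibly rely on a model than on keyword matching, but the shape is the same: feedback goes in, and only the usable part reaches the prompt.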

The patent also references a Feedback Generator component — likely a separate AI model — that synthesizes the user's complaint into structured "feedback hints," which are then woven into the updated prompt. This suggests the rewriting itself is AI-assisted, not a simple string concatenation.
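One way to picture the difference between structured hints and plain concatenation is the Python sketch below. It is an assumption-heavy illustration: `FeedbackHint`, `stub_feedback_generator`, and `weave_hints` are hypothetical names, the hint fields and output format are invented, and the rule-based generator is only a placeholder for whatever model the real system might use.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class FeedbackHint:
    aspect: str       # what the hint is about, e.g. "length"
    instruction: str  # what the model should do differently


def stub_feedback_generator(complaint: str) -> List[FeedbackHint]:
    """Rule-based placeholder for the Feedback Generator component."""
    lowered = complaint.lower()
    hints: List[FeedbackHint] = []
    if "too long" in lowered:
        hints.append(FeedbackHint("length", "Keep the answer under 100 words."))
    if "off-topic" in lowered or "off topic" in lowered:
        hints.append(
            FeedbackHint("focus", "Stay on the subject of the original request.")
        )
    return hints


def weave_hints(original_prompt: str, hints: List[FeedbackHint]) -> str:
    """Attach the hints as a labeled section instead of pasting in the raw complaint."""
    if not hints:
        return original_prompt
    lines = [f"[{hint.aspect}] {hint.instruction}" for hint in hints]
    return original_prompt + "\n\nFeedback hints:\n" + "\n".join(lines)


hints = stub_feedback_generator("The summary was too long and off-topic.")
updated_prompt = weave_hints("Summarize the report.", hints)
```

The raw complaint never appears in `updated_prompt`; only the instructions derived from it do, which is the practical difference from simple string concatenation.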

What this means for Copilot and everyday AI users

For everyday users, this closes a frustrating loop. Today, when an AI assistant gets something wrong, the burden of figuring out why and crafting a better follow-up falls entirely on you. A system like this shifts some of that cognitive work back to the product — which is where it belongs if AI assistants are going to be genuinely useful rather than just impressive demos.

For Microsoft, the obvious home for this is Copilot across Microsoft 365 — Word, Excel, Teams, and Outlook are all places where an AI giving a bad answer has real productivity consequences. A tighter feedback-to-repair loop would make Copilot feel less like a capable-but-brittle tool and more like something you can actually rely on. It's also the kind of infrastructure improvement that compounds: better prompts mean better outputs mean more trust.

This is genuinely useful, unglamorous infrastructure work — the kind of thing that makes the difference between an AI assistant you keep using and one you quietly abandon after a week. Microsoft has 21 inventors on this filing, which suggests it's a serious internal investment rather than a speculative patent grab. The actionability check is the clever bit; without it, you'd just be piling noise on top of noise.


Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.