Microsoft Patents a Distributed Antialiasing Engine for Smoother Cloud-Streamed Graphics

Jagged edges are the telltale sign of a game or app running on someone else's hardware. Microsoft's new patent proposes splitting the antialiasing workload between server and client so streamed visuals look sharper, without overloading either end.

How Microsoft splits edge-smoothing across server and client

Imagine you're playing a cloud-streamed game — the frames are rendered on a remote server and sent to your screen as video. The problem is that standard video compression loves smooth gradients and hates sharp edges, so the crisp outlines of a character or UI element can arrive at your device looking soft, blurry, or stepped.

Microsoft's patent describes a smarter pipeline: instead of trying to fix those jagged edges entirely on the server before sending the video, the server detects edges in the rendered frame, packages that edge information as a kind of invisible map, and hides it inside the video stream. Your device's client-side engine then reads that map and uses it to reconstruct clean, smooth edges locally — blending the server-rendered frame with whatever your device generated itself.

The result is that neither the server nor your device has to do all the heavy lifting alone. The server handles the analytical geometry; your device handles the final pixel-level blending. It's a divide-and-conquer approach to a problem that every streaming platform quietly wrestles with.

How the edge image gets encoded into a depth frame

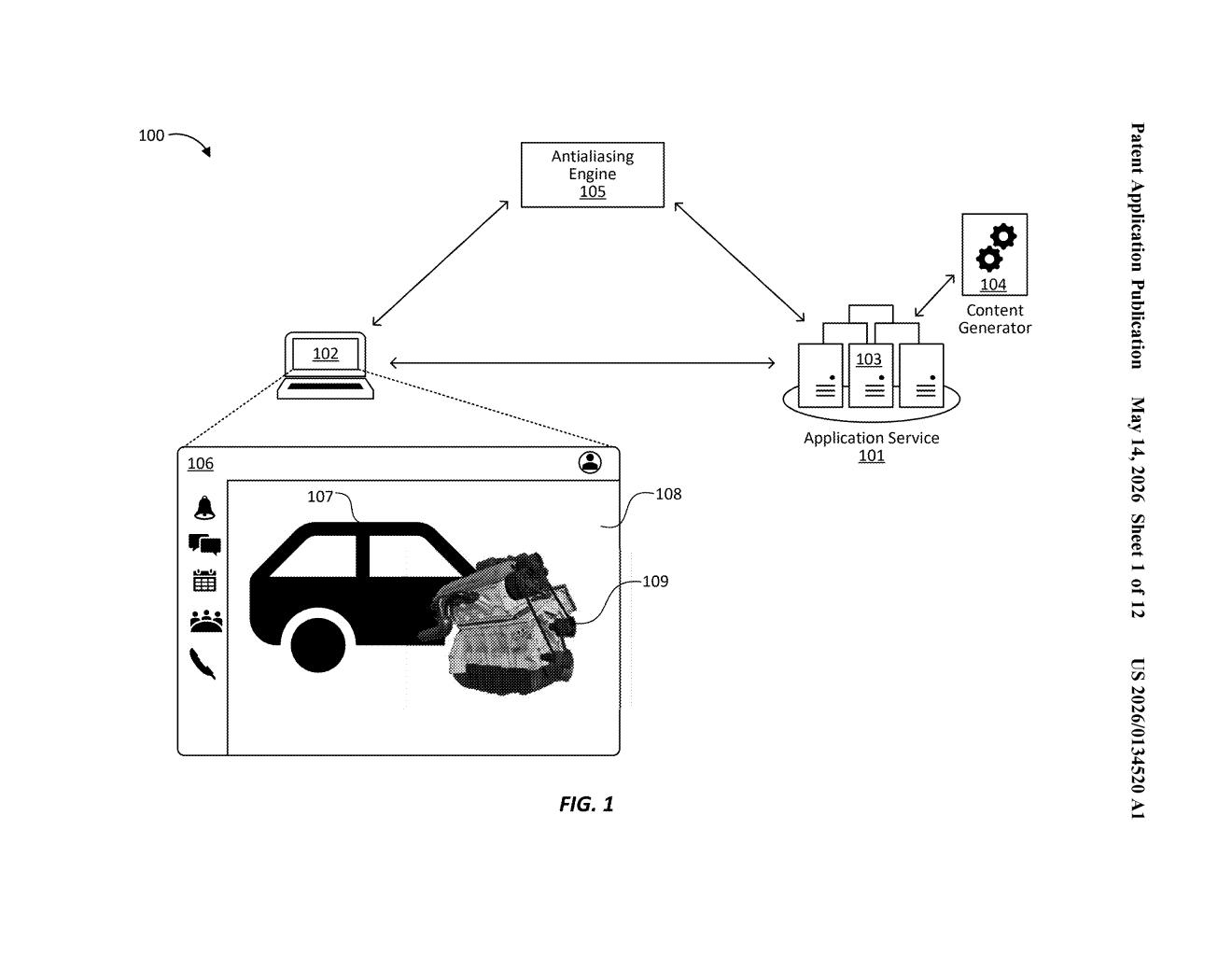

The patent describes an antialiasing engine (a system for smoothing jagged edges in rendered images) split across two tiers: a server-side content generator and a client-side decoder.

On the server, the engine works in three steps:

- Edge detection: It scans the rendered frame and identifies foreground/background boundaries — the outlines of objects.

- Edge image generation: It creates a per-pixel proximity map showing how close each pixel is to a detected edge, essentially a soft mask of where geometry boundaries live.

- Analytical edge encoding: It traces the edge image to produce a clean, vector-like analytical edge (a mathematically precise description of the boundary rather than a jagged raster line) and encodes it into a depth frame sent alongside the video stream. That channel normally carries depth (Z-buffer) data and, per the patent's framing, often has spare capacity.
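
The three server-side steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the patent's implementation: the function names, the least-squares line fit, and the choice to store a quantized signed distance in an 8-bit depth frame (128 meaning "on the edge") are all hypothetical, and the sketch handles only a single near-vertical edge.

```python
import math

def detect_edges(mask):
    """Flag pixels that have a 4-neighbour on the other side of the boundary."""
    h, w = len(mask), len(mask[0])
    edges = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            for dy, dx in ((0, 1), (0, -1), (1, 0), (-1, 0)):
                ny, nx = y + dy, x + dx
                if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] != mask[y][x]:
                    edges[y][x] = 1
    return edges

def proximity_map(edges, radius=3.0):
    """Per-pixel proximity in [0, 1]: 1 on an edge pixel, 0 at `radius` pixels away."""
    h, w = len(edges), len(edges[0])
    pts = [(y, x) for y in range(h) for x in range(w) if edges[y][x]]
    prox = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            if pts:
                d = min(math.hypot(y - ey, x - ex) for ey, ex in pts)
                prox[y][x] = max(0.0, 1.0 - d / radius)
    return prox

def fit_edge_line(edges):
    """Least-squares line x = a*y + b through edge pixel centres (assumed technique)."""
    pts = [(y + 0.5, x + 0.5)
           for y, row in enumerate(edges) for x, e in enumerate(row) if e]
    n = len(pts)
    my = sum(p[0] for p in pts) / n
    mx = sum(p[1] for p in pts) / n
    var = sum((p[0] - my) ** 2 for p in pts)
    a = sum((p[0] - my) * (p[1] - mx) for p in pts) / var if var else 0.0
    return a, mx - a * my

def encode_depth_frame(a, b, h, w, scale=32):
    """Store the signed horizontal distance to the line as 8-bit values (assumed layout)."""
    frame = []
    for y in range(h):
        row = []
        for x in range(w):
            d = (x + 0.5) - (a * (y + 0.5) + b)  # negative = foreground side
            row.append(max(0, min(255, round(128 + d * scale))))
        frame.append(row)
    return frame

# Toy frame: foreground (1) on the left, background (0) on the right.
mask = [[1, 1, 1, 0, 0, 0] for _ in range(4)]
edges = detect_edges(mask)
prox = proximity_map(edges)
a, b = fit_edge_line(edges)
depth = encode_depth_frame(a, b, h=4, w=6)
```

For this toy frame the fitted line is vertical at x = 3, the seam between the third and fourth pixel columns, and each depth row ramps through 128 at that seam. A real encoder would need to handle curved and multiple edges, which this sketch does not attempt.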

On the client, the engine decodes that embedded edge data from the depth channel and samples it to reconstruct the analytical edge. It then composites the server-rendered frame with a locally generated second frame, using the reconstructed edge as a precise blending guide.

For context, morphological antialiasing is a class of post-processing techniques that analyze image geometry (shapes and edges) rather than rendering multiple samples per pixel, which makes it cheaper to run than traditional multisampling.
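
The client-side half can be sketched the same way. The example below is hypothetical: it assumes the depth frame stores an 8-bit signed distance from each pixel centre to the edge (128 meaning "on the edge", 32 units per pixel), that the server frame supplies the foreground side, and that frames are single-channel grayscale. None of these details come from the patent itself.

```python
def decode_coverage(depth_value, scale=32):
    """Convert an 8-bit depth sample into foreground coverage in [0, 1]."""
    d = (depth_value - 128) / scale      # signed distance to the edge, in pixels
    return max(0.0, min(1.0, 0.5 - d))   # share of the pixel on the foreground side

def composite(server_frame, local_frame, depth_frame, scale=32):
    """Blend server and local frames per pixel, weighted by edge coverage."""
    out = []
    for s_row, l_row, d_row in zip(server_frame, local_frame, depth_frame):
        row = []
        for s, l, d in zip(s_row, l_row, d_row):
            c = decode_coverage(d, scale)
            row.append(round(c * s + (1.0 - c) * l))
        out.append(row)
    return out

# One-row example: the edge sits at x = 2.25, a quarter of the way into pixel 2.
depth_frame = [[72, 104, 136, 168, 200]]
server_frame = [[200, 200, 200, 200, 200]]   # server-rendered content
local_frame = [[40, 40, 40, 40, 40]]         # locally generated content
result = composite(server_frame, local_frame, depth_frame)
print(result)  # [[200, 200, 80, 40, 40]]
```

The pixel that straddles the edge gets a 25/75 mix of the two frames instead of snapping to one side, which is the kind of sub-pixel smoothing that an analytical edge makes possible.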

What this means for cloud gaming and remote rendering

Cloud gaming and remote desktop services (think Xbox Cloud Gaming, GeForce NOW, or Azure Virtual Desktop) all face the same core problem: video compression degrades fine edge detail, and there's no practical way to re-render on the client without knowing what the server originally drew. This patent's trick of piggybacking edge metadata onto the depth channel of an existing video stream is elegant. As described, it needs no separate side channel and no higher bitrate, because it reuses a channel that is already being transmitted.

For you as an end user, the practical upshot would be sharper text, cleaner UI outlines, and less of that characteristic soft-focus look that streaming games and apps tend to have. For Microsoft, it's a potential differentiator for Azure-hosted rendering and the Xbox ecosystem — making cloud-delivered graphics feel more like local rendering without requiring faster internet.

This is solid, unsexy infrastructure work that addresses a real and persistent problem in cloud streaming. The depth-channel encoding trick is genuinely clever: it's the kind of solution that looks obvious in hindsight but requires knowing the full stack intimately. Don't expect a press release, but do expect something like this to ship quietly in Azure or Xbox Cloud Gaming within a product cycle or two.

Editorial commentary on a publicly published patent application. Not legal advice.