Samsung Patents a Real-Time Video System That Labels Every Pixel Across Frames

Imagine a camera that doesn't just detect the car in a video — it labels every single pixel in every frame, keeps track of which car is which as it moves, and does it all in sequence without rewinding the footage. That's what Samsung is patenting here.

What Samsung's video panoptic segmentation actually does

Think about watching a busy street scene on video. Your eye can instantly tell the road apart from the sidewalk, and you know that the red car at 0:02 is the same red car at 0:10 even after it briefly goes behind a bus. Teaching a computer to do all of that — at once, in real time, for every pixel — is genuinely hard.

Samsung's patent describes a system that handles this in one unified pipeline. It processes a stream of video frames, assigns a label to every pixel (is this sky? a person? a specific car?), and keeps a running memory of each individual object so it doesn't lose track of them between frames. Many existing systems handle either the labeling part or the tracking part well, but not both together in a single pipeline.

The clever bit is a component called an online tracker, which takes the AI's understanding of the current frame and cross-references it against what it understood in the previous frame. Because the comparison only looks back one frame, the system can stay consistent without having to process the entire video clip at once.
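
To make the cross-referencing idea concrete, here is a minimal sketch of how per-object vectors from two consecutive frames could be matched. Everything specific in it is an assumption for illustration: the function names, the cosine similarity measure, the greedy one-to-one assignment, and the 0.5 threshold are not taken from the patent, and the refinement step the tracker also performs is left out.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def match_to_previous(prev, curr, threshold=0.5):
    """Assign a track ID to each current-frame embedding.

    prev: dict mapping integer track ID -> embedding from the previous frame.
    curr: list of embeddings for the current frame.
    Returns a list of track IDs, one per entry in curr.
    (Hypothetical helper; similarity measure and threshold are assumptions.)
    """
    # Score every (current, previous) pair and visit the best matches first.
    pairs = sorted(
        ((cosine(emb, p_emb), i, tid)
         for i, emb in enumerate(curr)
         for tid, p_emb in prev.items()),
        reverse=True,
    )
    ids = [None] * len(curr)
    used = set()
    for score, i, tid in pairs:
        if score < threshold:
            break  # remaining pairs are too dissimilar to count as the same object
        if ids[i] is None and tid not in used:
            ids[i] = tid
            used.add(tid)
    # Anything left unmatched is treated as a newly appeared object.
    # Simplification: new IDs continue from the largest ID in the previous frame.
    next_id = max(prev, default=-1) + 1
    for i in range(len(curr)):
        if ids[i] is None:
            ids[i] = next_id
            next_id += 1
    return ids


previous = {0: [1.0, 0.0], 1: [0.0, 1.0]}
current = [[0.1, 0.9], [0.9, 0.1], [-1.0, -1.0]]
print(match_to_previous(previous, current))
```

In this toy example the first two current-frame vectors inherit the IDs of their closest previous-frame vectors, and the third, which resembles neither, gets a fresh ID. A production tracker would likely use a learned similarity and a more careful assignment step.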

How Samsung's encoder, decoder, and tracker pipeline fits together

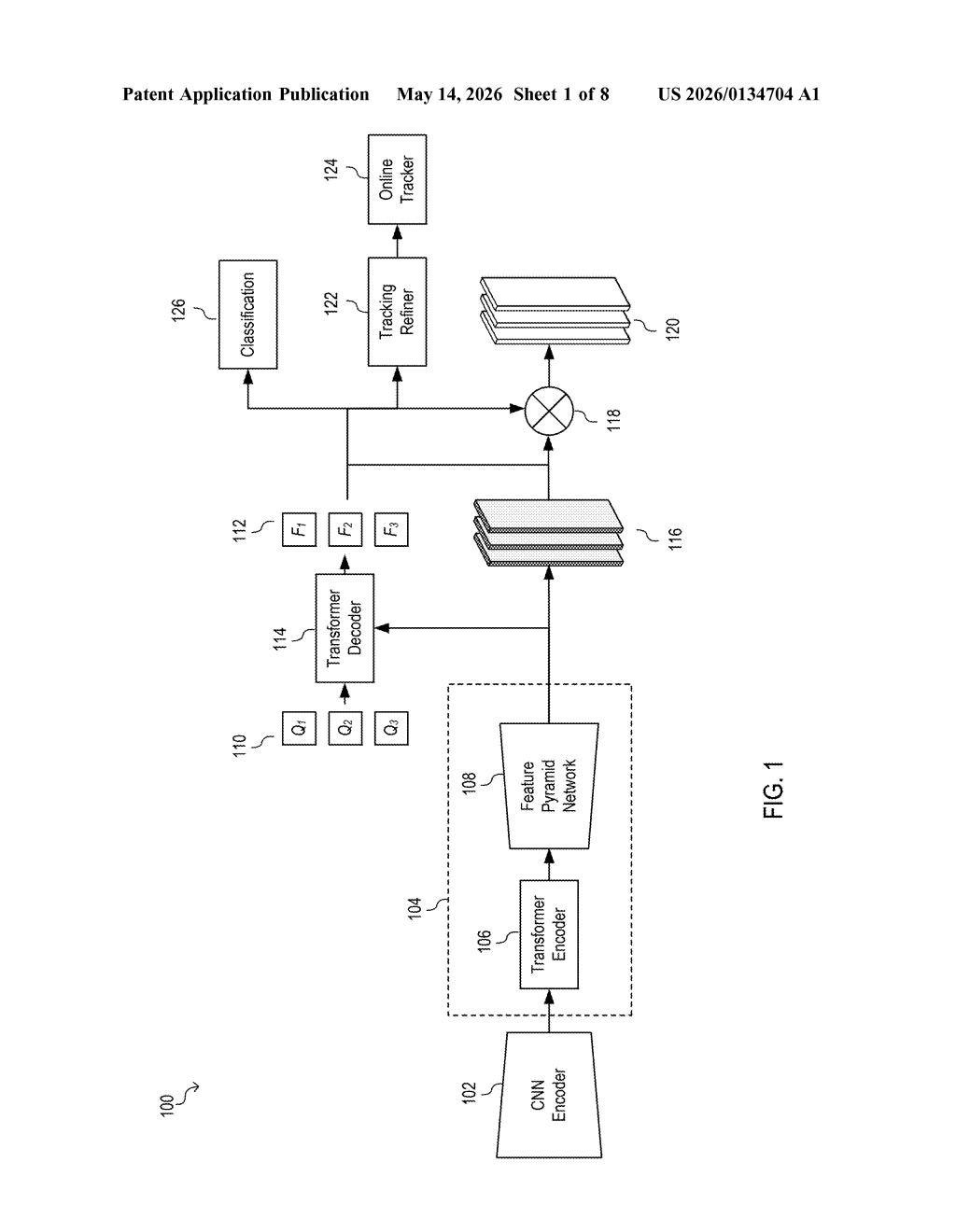

The system chains four components together into a single end-to-end pipeline:

- CNN Encoder: A convolutional neural network (a type of image-recognition AI) processes each video frame and produces multi-scale feature maps — essentially the same image analyzed at different zoom levels simultaneously, so both fine details and broad context are captured.

- Pixel Decoder: Refines those multi-scale maps into mask feature representations — dense spatial grids that the downstream components use to draw precise per-pixel boundaries.

- Transformer Decoder: Produces two outputs — query embeddings (compact vector summaries of each detected object or region) and mask predictions (the actual pixel-level outlines). Think of query embeddings as a fingerprint for each object in the frame.

- Online Tracker: Matches the current frame's query embeddings against the previous frame's embeddings, then refines them. This is what gives the system temporal consistency — the same person or car gets the same identity label across the whole clip.
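
The four stages above can be wired together roughly as follows. This is a sketch of the data flow only, not Samsung's implementation: the stage functions are passed in as plain callables, their exact inputs and outputs are assumptions, and `run_stream` is a hypothetical name. The point it illustrates is that the only state carried from one frame to the next is the previous frame's query embeddings.

```python
def run_stream(frames, encoder, pixel_decoder, transformer_decoder, tracker):
    """Process frames in order, yielding one (tracked_queries, masks) pair per frame.

    Each stage is a caller-supplied callable (assumed signatures):
      encoder(frame) -> multi-scale feature maps
      pixel_decoder(features) -> mask features
      transformer_decoder(mask_features) -> (query_embeddings, mask_predictions)
      tracker(queries, previous_queries) -> tracked (matched and refined) queries
    """
    prev_queries = None  # no history exists before the first frame
    for frame in frames:
        features = encoder(frame)
        mask_features = pixel_decoder(features)
        queries, masks = transformer_decoder(mask_features)
        tracked = tracker(queries, prev_queries)
        yield tracked, masks
        # Keep only what the next frame needs; earlier frames are never revisited.
        prev_queries = tracked


# Toy stand-ins so the wiring can be exercised without a real model.
history = []

def toy_tracker(queries, previous):
    history.append(previous)
    return queries

results = list(run_stream(
    [1, 2, 3],
    encoder=lambda frame: frame,
    pixel_decoder=lambda feats: feats,
    transformer_decoder=lambda mf: (mf * 10, mf),
    tracker=toy_tracker,
))
print(results)
print(history)
```

Writing it as a generator mirrors the online behavior described above: results come out frame by frame, and nothing requires the whole clip to be in memory.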

The final output is a panoptic segmentation result — a frame-by-frame map that simultaneously handles semantic labels (this region is road, that region is sky) and instance labels (this is car #1, that is car #2), unified across time.
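
One common way to turn per-object masks into a single panoptic map is to give each pixel to the highest-scoring mask, then attach a class label and, for countable objects, a track ID. The sketch below assumes that approach; the patent summary does not say how the final map is composed, and `compose_panoptic`, its arguments, and the toy class numbering are all hypothetical.

```python
def compose_panoptic(mask_scores, class_ids, track_ids, thing_classes):
    """Build a per-pixel grid of (class_id, instance_id) pairs for one frame.

    mask_scores[q][y][x]: score of query q at pixel (y, x).
    class_ids[q]: semantic class predicted for query q.
    track_ids[q]: identity assigned to query q by the tracker.
    thing_classes: set of class IDs that are countable objects (cars, people).
    Background-like regions (road, sky) get instance_id None.
    """
    height = len(mask_scores[0])
    width = len(mask_scores[0][0])
    result = []
    for y in range(height):
        row = []
        for x in range(width):
            # Per-pixel argmax over queries (an assumed composition rule).
            best = max(range(len(mask_scores)), key=lambda q: mask_scores[q][y][x])
            cls = class_ids[best]
            inst = track_ids[best] if cls in thing_classes else None
            row.append((cls, inst))
        result.append(row)
    return result


# Toy frame, 1 pixel tall and 2 wide: query 0 is "road" (class 0), query 1 is "car" (class 1).
scores = [[[0.9, 0.2]], [[0.1, 0.8]]]
print(compose_panoptic(scores, class_ids=[0, 1], track_ids=[5, 7], thing_classes={1}))
```

Here the left pixel ends up as road with no instance ID, and the right pixel as car with track ID 7. Because the track ID comes from the tracker, the same car would keep that ID in later frames.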

What this means for Samsung cameras and on-device AI

For Samsung, this kind of capability is a building block for smarter cameras and edge-AI devices. A Galaxy phone or a surveillance camera with this system could do real-time scene understanding — separating subjects from backgrounds for video bokeh effects, powering driver-assistance features, or enabling richer AR overlays — without sending video to the cloud.

The broader importance is efficiency. By handling semantic labeling and instance tracking in a single pass rather than in two separate systems, the architecture should reduce computational overhead. That matters a lot when you're trying to run this kind of AI on a phone chip or an embedded processor rather than a data center GPU.

This is solid, focused computer-vision infrastructure work: not a splashy consumer feature, but exactly the kind of patent that ends up quietly powering future camera modes and video AI on Samsung's devices. The online tracker is the genuinely interesting piece here, because keeping object identities consistent from frame to frame is a real engineering problem worth solving.

Editorial commentary on a publicly published patent application. Not legal advice.